Operations Management

Browse operations management learning materials including case studies, simulations, and online courses. Introduce core concepts and real-world challenges to create memorable learning experiences for your students.

Browse by Topic

- Capacity Planning

- Demand Planning

- Inventory Management

- Process Analysis

- Process Improvement

- Production Planning

- Project Management

- Quality Management

New! Quick Cases in Operations Management

Quickly immerse students in focused and engaging business dilemmas. No student prep time required.

Fundamentals of Case Teaching

Our new, self-paced, online course guides you through the fundamentals for leading successful case discussions at any course level.

New in Operations Management

Explore the latest operations management learning materials


Looking for something specific?

Explore materials that align with your operations management learning objectives

Operations Management Simulations

Give your students hands-on experience making decisions.

Operations Management Cases with Female Protagonists

Explore a collection of operations management cases featuring female protagonists curated by the HBS Gender Initiative.

Operations Management Cases with Protagonists of Color

Discover operations management cases featuring protagonists of color that have been recommended by Harvard Business School faculty.

Foundational Operations Management Readings

Discover readings that cover the fundamental concepts and frameworks that business students must learn about operations management.

Bestsellers in Operations Management

Explore what other educators are using in their operations management courses

Start building your courses today

Register for a free Educator Account and get exclusive access to our entire catalog of learning materials, teaching resources, and online course planning tools.



Teaching Resources Library

Operations Management Case Studies

Browse Course Material

Course Info

Instructors

- Prof. Charles H. Fine

- Prof. Tauhid Zaman

Departments

- Sloan School of Management

As Taught In

- Mathematics

- Social Science

Introduction to Operations Management

Cases and readings.

The required readings for this course include:

- Cases listed in the Cases/Readings column below

- Goldratt, Eliyahu M., and Jeff Cox. The Goal: A Process of Ongoing Improvement. 2nd revised ed. North River Press, 1992. ISBN: 9780884270614.

- [MSD] = Cachon, Gerard, and Christian Terwiesch. Matching Supply with Demand: An Introduction to Operations Management. 3rd ed. McGraw-Hill, 2012. ISBN: 9780073525204.

Case research in operations management

International Journal of Operations & Production Management

ISSN: 0144-3577

Article publication date: 1 February 2002

This paper reviews the use of case study research in operations management for theory development and testing. It draws on the literature on case research in a number of disciplines and uses examples drawn from operations management research. It provides guidelines and a roadmap for operations management researchers wishing to design, develop and conduct case‐based research.

- Operations management

- Methodology

- Case studies

Voss, C., Tsikriktsis, N. and Frohlich, M. (2002), "Case research in operations management", International Journal of Operations & Production Management, Vol. 22 No. 2, pp. 195-219. https://doi.org/10.1108/01443570210414329

Copyright © 2002, MCB UP Limited



Operations strategy

- Business management

- Operations and supply chain management

- Supply chain management

The New Human-Machine Relationship

- Ben Armstrong

- Nita A. Farahany

- Mike Seymour

- Dan Lovallo

- Alan R. Dennis

- Lingyao Ivy Yuan

- February 28, 2023

Raising Wages Is the Right Thing to Do, and Doesn’t Have to Be Bad for Your Bottom Line

- April 18, 2019

Getting Control of Just-in-Time

- Uday Karmarkar

- From the September–October 1989 Issue

Lessons from the U.S.'s Rocky Vaccine Rollout

- Robert S. Huckman

- Bradley R. Staats

- Bradley Staats

- January 28, 2021

Deep Change: How Operational Innovation Can Transform Your Company (HBR OnPoint Enhanced Edition)

- Michael Hammer

- April 01, 2004

Companies Are Working with Consumers to Reduce Waste

- Mark Esposito

- Terence Tse

- Khaled Soufani

- June 07, 2016

Hospitals Can’t Improve Without Better Management Systems

- John S. Toussaint

- October 21, 2015

How to Survive Climate Change and Still Run a Thriving Business: Checklists for Smart Leaders

- Eric Lowitt

- From the April 2014 Issue

Coupling Strategy to Operating Plans

- John M. Hobbs

- Donald F. Heany

- From the May 1977 Issue

Firms Need a Blueprint for Building Their IT Systems

- Donald A. Marchand

- Joe Peppard

- June 18, 2015

Integrate Data into Products, or Get Left Behind

- Thomas C. Redman

- June 28, 2012

The Department of Mobility

- Rex Runzheimer

- From the November 2005 Issue

Pain in the (Supply) Chain (HBR Case Study and Commentary)

- John Butman

- From the May 2002 Issue

Breaking the Trade-Off Between Efficiency and Service

- Frances X. Frei

- From the November 2006 Issue

How Loyalty Programs Are Saving Airlines

- So Yeon Chun

- Evert de Boer

- April 02, 2021

How Kenvue De-Risked Its Supply Chain

- Michael Altman

- Atalay Atasu

- Evren Özkaya

- October 18, 2023

What Every Leader Should Know About Real Estate

- Mahlon Apgar, IV

- From the November 2009 Issue

The World's Housing Crisis Doesn't Need a Revolutionary Solution

- Lola Woetzel

- Jan Mischke

- Sangeeth Ram

- December 25, 2014

Is Your Supply Chain Ready for the Congestion Crisis?

- George Stalk, Jr.

- Petros Paranikas

- June 22, 2015

Customer Intimacy and Other Value Disciplines

- Michael Treacy

- Fred Wiersema

- From the January–February 1993 Issue

FreeMarkets OnLine

- V. Kasturi Rangan

- February 27, 1998

Apple Pay and Mobile Payments in Australia (A)

- Susan Athey

- September 13, 2018

SANY: Going Global

- Stefan Lippert

- Nancy Hua Dai

- November 11, 2012

Apple Pay and Mobile Payments in Australia (B)

Ilinko: enterprise systems implementation all over again.

- January 11, 2013

Nike in China (Abridged)

- James E. Austin

- April 11, 1990

A3 Thinking

- Elliott N. Weiss

- Austin English

- June 03, 2020

The Writing Process in Systems Thinking

- Robert D. Landel

- Jennifer Corle

- March 04, 2004

Mibanco: Meeting the Mainstreaming of Microfinance

- Michael Chu

- Gustavo A. Herrero

- Jean Steege Hazell

- August 23, 2011

Booking.com

- Stefan Thomke

- Daniela Beyersdorfer

- October 15, 2018

ExtendSim (R) Simulation Exercises in Process Analysis (B2)

- Roy D. Shapiro

- September 22, 1994

Clean Core Thorium Energy and the Role of Nuclear Power in the Low-carbon Transition

- Gernot Wagner

- July 10, 2023

Rich-Con Steel

- Andrew McAfee

- January 27, 1999

Boeing 787: Manufacturing a Dream

- Rory McDonald

- Suresh Kotha

- February 12, 2015

Messer Griesheim (A)

- Josh Lerner

- Ann-Kristin Achleitner

- Eva Nathusius

- Kerry Herman

- February 18, 2009

From Correlation to Causation

- Karim R. Lakhani

- August 31, 2015

Integron, Inc.: The Integrated Components Division (ICD)

- David M. Upton

- Michelle Jarrard

- Laurie Thomas

- June 30, 1995

Southeastern Mills: The Eighth Element?

- Rebecca O. Goldberg

- Andrew Moon

- December 23, 2009

Industrial Grinders N.V.

- M. Edgar Barrett

- Rohan S. Weerasinghe

- March 01, 1975

Advanced Glass Technologies, Inc.: The ZX Project

- November 22, 2011

Whole Foods under Amazon, Teaching Note

- Dennis Campbell

- Tatiana Sandino

- Kyle Thomas

- February 22, 2019

CFNA Credit Corporation: Call Center Outsourcing, Spreadsheet

- Timothy M. Laseter

- March 28, 2011


Walmart’s Operations Management: 10 Strategic Decisions & Productivity

Walmart Inc.’s operations management involves a variety of approaches focused on managing the supply chain and inventory, as well as sales performance. The company’s success is significantly based on effective performance in retail operations management. Specifically, Walmart’s management covers all 10 decision areas of operations management. These strategic decision areas pertain to the issues managers deal with daily as they optimize the retail company’s operations. Walmart’s application of the 10 decisions of operations management reflects managers’ prioritization of business objectives. In turn, this prioritization shows the strategic significance of the different decision areas of operations management in the retail company’s business. This approach to operations aligns with Walmart’s corporate mission statement and corporate vision statement. The retail enterprise is a business case of how to achieve high efficiency in operations to ensure long-term growth and success in the global market.

The 10 decisions of operations management are effectively addressed in Walmart’s business through a combination of approaches that emphasize supply chain management, inventory management, and sales and marketing. This approach leads to strategies that strengthen the business against competitors, like Amazon and its subsidiary, Whole Foods, as well as Home Depot, eBay, Costco, Best Buy, Macy’s, Kroger, Alibaba, IKEA, Target, and Lowe’s.

The 10 Strategic Decision Areas of Operations Management at Walmart

1. Design of Goods and Services. This decision area of operations management involves the strategic characterization of the retail company’s products. In this case, the decision area covers Walmart’s goods and services. As a retailer, the company offers retail services. However, Walmart also has its own brands of goods, such as Great Value and Sam’s Choice. The company’s operations management addresses the design of retail service by emphasizing the variables of efficiency and cost-effectiveness. Walmart’s generic strategy for competitive advantage and its intensive growth strategies emphasize low costs and low selling prices. To fulfill these strategies, the firm focuses on maximum efficiency of its retail service operations. To address the design of goods in this decision area of operations management, Walmart emphasizes minimal production costs, especially for the Great Value brand. The firm’s consumer goods are designed so that they are easy to mass-produce. The strategic approach in this operations management area affects Walmart’s marketing mix or 4Ps and the corporation’s strategic planning for product development and retail service expansion.

2. Quality Management. Walmart approaches this decision area of operations management through three tiers of quality standards. The lowest tier specifies the minimum quality expectations of the majority of buyers. Walmart keeps this tier for most of its brands, such as Great Value. The middle tier specifies market average quality for low-cost retailers. This tier is used for some products, as well as for the job performance targets of Walmart employees, especially sales personnel. The highest tier specifies quality levels that exceed market averages in the retail industry. This tier is applied to only a minority of Walmart’s outputs, such as goods under the Sam’s Choice brand. This three-tier approach satisfies quality management objectives in the strategic decision areas of operations management throughout the retail business organization. Appropriate quality measures also contribute to the strengths identified in the SWOT analysis of Walmart Inc.

3. Process and Capacity Design. In this strategic decision area, Walmart’s operations management utilizes behavioral analysis, forecasting, and continuous monitoring. Behavioral analysis of customers and employees, such as in the brick-and-mortar stores and e-commerce operations, serves as the basis for the company’s process and capacity design for optimizing space, personnel, and equipment. Forecasting is the basis for Walmart’s ever-changing capacity design for human resources. The company’s HR process and capacity design evolves as the retail business grows. Also, to satisfy concerns in this decision area of operations management, Walmart uses continuous monitoring of store capacities to inform corporate managers in keeping or changing current capacity designs.

4. Location Strategy. This decision area of operations management emphasizes efficiency of movement of materials, human resources, and business information throughout the retail organization. In this regard, Walmart’s location strategy includes stores located in or near urban centers and consumer population clusters. The company aims to maximize market reach and accessibility for consumers. Materials and goods are made available to Walmart’s employees and target customers through strategic warehouse locations. On the other hand, to address the business information aspect of this decision area of operations management, Walmart uses Internet technology and related computing systems and networks. The company has a comprehensive set of online information systems for real-time reports and monitoring that support managing individual retail stores as well as regional market operations.

5. Layout Design and Strategy. Walmart addresses this decision area of operations management by assessing shoppers’ and employees’ behaviors for the layout design of its brick-and-mortar stores, e-commerce websites, and warehouses or storage facilities. The layout design of the stores is based on consumer behavioral analysis and corporate standards. For example, Walmart’s placement of some goods in certain areas of its stores, such as near the entrance/exit, maximizes purchase likelihood. On the other hand, the layout design and strategy for the company’s warehouses are based on the need to rapidly move goods across the supply chain to the stores. Walmart’s warehouses maximize utilization and efficiency of space for the company’s trucks, suppliers’ trucks, and goods. With efficiency, cost-effectiveness, and cost-minimization, the retail company satisfies the needs in this strategic decision area of operations management.

6. Human Resources and Job Design. Walmart’s human resource management strategies involve continuous recruitment. The retail business suffers from relatively high turnover partly because of low wages, which relate to the cost-leadership generic strategy. Nonetheless, continuous recruitment addresses this strategic decision area of operations management, while maintaining Walmart’s organizational structure and corporate culture. Also, the company maintains standardized job processes, especially for positions in its stores. Walmart’s training programs support the need for standardization for the service quality standards of the business. Thus, the company satisfies concerns in this decision area of operations management despite high turnover.

7. Supply Chain Management. Walmart’s bargaining power over suppliers successfully addresses this decision area of operations management. The retailer’s supply chain is comprehensively integrated with advanced information technology, which enhances such bargaining power. For example, supply chain management information systems are directly linked to Walmart’s ability to minimize costs of operations. These systems enable managers and vendors to collaborate in deciding when to move certain amounts of merchandise across the supply chain. This condition supports the company’s competitive advantage, as shown in the Porter’s Five Forces analysis of Walmart Inc. As one of the biggest retailers in the world, the company wields its strong bargaining power to impose its demands on suppliers, as a way to address supply chain management issues in this strategic decision area of operations management. Nonetheless, considering Walmart’s stakeholders and corporate social responsibility strategy, the company balances business needs and the needs of suppliers, who are a major stakeholder group.

8. Inventory Management. In this decision area of operations management, Walmart focuses on the vendor-managed inventory model and just-in-time cross-docking. In the vendor-managed inventory model, suppliers access the company’s information systems to decide when to deliver goods based on real-time data on inventory levels. In this way, Walmart minimizes the problem of stockouts. On the other hand, in just-in-time cross-docking, the retail company minimizes the size of its inventory, thereby supporting cost-minimization efforts. These approaches help maximize the operational efficiency and performance of the retail business in this strategic decision area of operations management (See more: Walmart: Inventory Management).

9. Scheduling. Walmart uses conventional shifts and flexible scheduling. In this decision area of operations management, the emphasis is on optimizing internal business process schedules to achieve higher efficiencies in the retail enterprise. Through optimized schedules, Walmart minimizes losses linked to overcapacity and related issues. Scheduling in the retailer’s warehouses is flexible and based on current trends. For example, based on Walmart’s approaches to inventory management and supply chain management, suppliers readily respond to changes in inventory levels. As a result, most of the company’s warehouse schedules are not fixed. On the other hand, Walmart store processes and human resources in sales and marketing use fixed conventional shifts for scheduling. Such fixed scheduling optimizes the retailer’s expenditure on human resources. However, to fully address scheduling as a strategic decision area of operations management, Walmart occasionally changes store and personnel schedules to address anticipated changes in demand, such as during Black Friday. This flexibility supports optimal retail revenues, especially during special shopping occasions.

10. Maintenance. With regard to maintenance needs, Walmart addresses this decision area of operations management through training programs to maintain human resources, dedicated personnel to maintain facilities, and dedicated personnel to maintain equipment. The retail company’s human resource management involves training programs to ensure that employees are effective and efficient. On the other hand, dedicated personnel for facility maintenance keep all of Walmart’s buildings in shape and up to corporate and regulatory standards. Relatedly, the company has dedicated personnel as well as third-party service providers for fixing and repairing equipment like cash registers and computers. Walmart also has personnel for maintaining its e-commerce websites and social media accounts. This combination of maintenance approaches contributes to the retail company’s effectiveness in satisfying the concerns in this strategic decision area of operations management. Effective and efficient maintenance supports business resilience against threats in the industry environment, such as the ones evaluated in the PESTEL/PESTLE Analysis of Walmart Inc.
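The vendor-managed inventory model described under Inventory Management (item 8) can be illustrated with a minimal Python sketch. It assumes a simple reorder-point rule: a supplier reads on-hand quantities and delivers enough to restore a target level whenever stock falls to a threshold. The names `StockRecord`, `reorder_point`, `target_level`, and `replenishment_orders`, along with all figures, are hypothetical and are not taken from Walmart's actual systems.

```python
from dataclasses import dataclass


@dataclass
class StockRecord:
    sku: str
    on_hand: int        # units currently available at the store
    reorder_point: int  # hypothetical threshold that triggers a delivery
    target_level: int   # hypothetical level the supplier replenishes up to


def replenishment_orders(records):
    """Return {sku: quantity} for every item at or below its reorder point."""
    return {
        r.sku: r.target_level - r.on_hand
        for r in records
        if r.on_hand <= r.reorder_point
    }


# Illustrative data only.
shelf = [
    StockRecord("cereal", on_hand=12, reorder_point=20, target_level=60),
    StockRecord("batteries", on_hand=45, reorder_point=15, target_level=50),
]
print(replenishment_orders(shelf))  # {'cereal': 48}
```

In this sketch only the cereal is at or below its threshold, so the supplier would ship 48 units and leave the batteries alone. A real system would presumably also account for lead times and demand forecasts.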

Determining Productivity at Walmart Inc.

One of the goals of Walmart’s operations management is to maximize productivity to support the minimization of costs under the cost leadership generic strategy. There are various quantitative and qualitative criteria or measures of productivity that pertain to human resources and related internal business processes in the retail organization. Some of the most notable of these productivity measures/criteria at Walmart are:

- Revenues per sales unit

- Stockout rate

- Duration of order filling

The revenues per sales unit refers to the sales revenues per store, average sales revenues per store, and sales revenues per sales team. Walmart’s operations managers are interested in maximizing revenues per sales unit. On the other hand, the stockout rate is the frequency of stockout, which is the condition where inventories for certain products are empty or inadequate despite positive demand. Walmart’s operations management objective is to minimize stockout rates. Also, the duration of order filling is the amount of time consumed to fill inventory requests at the company’s stores. The operations management objective in this regard is to minimize the duration of order filling, as a way to enhance Walmart’s business performance.
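As a rough illustration, the three measures above can be computed as simple ratios and averages. The following Python sketch assumes one plausible definition of each measure. The function names and all figures are hypothetical and do not come from Walmart's reporting.

```python
from statistics import mean


def revenue_per_store(total_revenue, store_count):
    """Average sales revenue per store (one form of revenue per sales unit)."""
    return total_revenue / store_count


def stockout_rate(stockout_events, inventory_checks):
    """Share of inventory checks in which a demanded item was out of stock."""
    return stockout_events / inventory_checks


def mean_fill_duration(fill_hours):
    """Average time, in hours, taken to fill store inventory requests."""
    return mean(fill_hours)


# Illustrative figures only.
print(revenue_per_store(1_200_000, 4))  # 300000.0
print(stockout_rate(3, 120))            # 0.025
print(mean_fill_duration([4, 6, 5]))    # 5
```

Under these definitions, managers would aim to raise the first value and lower the other two.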


- Copyright by Panmore Institute - All rights reserved.

- This article may not be reproduced, distributed, or mirrored without written permission from Panmore Institute and its author/s.

- Educators, Researchers, and Students: You are permitted to quote or paraphrase parts of this article (not the entire article) for educational or research purposes, as long as the article is properly cited and referenced together with its URL/link.


Operations Management

A primary challenge for governments and organizations is to manage their resources as efficiently as possible. The teaching cases in this section challenge students to become decisive managers through a host of topics including budgeting and finance, infrastructure, regulatory policy, and transportation.

Mayoral Transitions: How Three Mayors Stepped into the Role, in Their Own Words

Publication Date: February 29, 2024

New mayors face distinct challenges as they assume office. In these vignettes depicting three types of mayoral transitions, explore how new leaders can make the most of their first one hundred days by asserting their authority and...

Shoring Up Child Protection in Massachusetts: Commissioner Spears & the Push to Go Fast

Publication Date: July 13, 2023

In January 2015, when incoming Massachusetts Governor Charlie Baker chose Linda Spears as his new Commissioner of the Department of Children and Families, he was looking for a reformer. Following the grisly death of a child under DCF...

OneBlood and COVID-19: Building an Agile Supply Chain Epilogue

Publication Date: October 20, 2021

This epilogue accompanies HKS Case 2233.0. The blood supply chain is under pressure from COVID-19. How should the 3rd largest blood bank in the US, OneBlood, respond? Is adopting an agile supply chain philosophy an effective...

OneBlood and COVID-19: Building an Agile Supply Chain

The blood supply chain is under pressure from COVID-19. How should the 3rd largest blood bank in the US, OneBlood, respond? Is adopting an agile supply chain philosophy an effective approach? The case provides an overview of the...

“A Difficult Lady”: Shutting Down Pollution in Kampala, Uganda Practitioner Guide

Publication Date: October 15, 2021

This practitioner guide accompanies HKS Case 2231.0. In 2011, sanitation and environmental management expert Judith Tumusiime joined the Kampala Capital City Authority (KCCA), where she and KCCA Executive Director Jennifer Musisi quickly became...

“A Difficult Lady”: Shutting Down Pollution in Kampala, Uganda

In 2011, sanitation and environmental management expert Judith Tumusiime joined the Kampala Capital City Authority (KCCA), where she and KCCA Executive Director Jennifer Musisi quickly became a dynamic team, working together to execute a mandate...

“Pressing the Right Buttons”: Jennifer Musisi for New City Leadership Epilogue

Publication Date: September 9, 2020

This epilogue accompanies HKS Case 2186.0. Jennifer Musisi, a career civil servant most recently with the Uganda Revenue Authority, was appointed by President Museveni as executive director (equivalent to city manager) of a new governing body...

“Pressing the Right Buttons”: Jennifer Musisi for New City Leadership Practitioner Guide

This practitioner guide accompanies HKS Case 2186.0. Jennifer Musisi, a career civil servant most recently with the Uganda Revenue Authority, was appointed by President Museveni as executive director (equivalent to city manager) of a new...

“Pressing the Right Buttons”: Jennifer Musisi for New City Leadership

Jennifer Musisi, a career civil servant most recently with the Uganda Revenue Authority, was appointed by President Museveni as executive director (equivalent to city manager) of a new governing body for Uganda’s capital, the Kampala...

The “Garbage Lady” Cleans Up Kampala: Turning Quick Wins into Lasting Change Practitioner Guide

Publication Date: June 30, 2020

This practitioner guide accompanies HKS Case 2181.0. In 2011, at the newly formed Kampala Capital City Authority (KCCA), Judith Tumusiime, an impassioned technocrat who prided herself on operating outside of politics, was charged with...

The “Garbage Lady” Cleans Up Kampala: Turning Quick Wins into Lasting Change (Epilogue)

This epilogue accompanies HKS Case 2181.0. In 2011, at the newly formed Kampala Capital City Authority (KCCA), Judith Tumusiime, an impassioned technocrat who prided herself on operating outside of politics, was charged with transforming a...

The “Garbage Lady” Cleans Up Kampala: Turning Quick Wins into Lasting Change

In 2011, at the newly formed Kampala Capital City Authority (KCCA), Judith Tumusiime, an impassioned technocrat who prided herself on operating outside of politics, was charged with transforming a “filthy city” to a clean, habitable,...

Top 40 Most Popular Case Studies of 2021

Two cases about Hertz claimed top spots in 2021's Top 40 Most Popular Case Studies

Two cases on the uses of debt and equity at Hertz claimed top spots in the Case Research and Development Team’s (CRDT) 2021 Top 40 review of cases.

Hertz (A) took the top spot. The case details the financial structure of the rental car company through the end of 2019. Hertz (B), which ranked third in CRDT’s list, describes the company’s struggles during the early part of the COVID pandemic and its eventual need to enter Chapter 11 bankruptcy.

The success of the Hertz cases was unprecedented for the top 40 list. Usually, cases take a number of years to gain popularity, but the Hertz cases claimed top spots in their first year of release. Hertz (A) also became the first ‘cooked’ case to top the annual review, as all of the other winners had been web-based ‘raw’ cases.

Besides introducing students to the complicated financing required to maintain an enormous fleet of cars, the Hertz cases also expanded the diversity of case protagonists. Kathryn Marinello was the CEO of Hertz during this period, and the CFO, Jamere Jackson, is Black.

Sandwiched between the two Hertz cases, Coffee 2016, a perennial best seller, finished second. “Glory, Glory, Man United!”, a case about an English football team’s IPO, made a surprise move to number four. Cases on search fund boards, the future of malls, Norway’s Sovereign Wealth fund, Prodigy Finance, the Mayo Clinic, and Cadbury rounded out the top ten.

Other year-end data for 2021 showed:

- Online “raw” case usage remained steady as compared to 2020 with over 35K users from 170 countries and all 50 U.S. states interacting with 196 cases.

- Fifty-four percent of raw case users came from outside the U.S.

- The Yale School of Management (SOM) case study directory pages received over 160K page views from 177 countries with approximately a third originating in India followed by the U.S. and the Philippines.

- Twenty-six of the cases in the list are raw cases.

- A third of the cases feature a woman protagonist.

- Orders for Yale SOM case studies increased by almost 50% compared to 2020.

- The top 40 cases were supervised by 19 different Yale SOM faculty members, several supervising multiple cases.

CRDT compiled the Top 40 list by combining data from its case store, Google Analytics, and other measures of interest and adoption.

All of this year’s Top 40 cases are available for purchase from the Yale Management Media store .

And the Top 40 case studies of 2021 are:

1. Hertz Global Holdings (A): Uses of Debt and Equity

2. Coffee 2016

3. Hertz Global Holdings (B): Uses of Debt and Equity 2020

4. Glory, Glory Man United!

5. Search Fund Company Boards: How CEOs Can Build Boards to Help Them Thrive

6. The Future of Malls: Was Decline Inevitable?

7. Strategy for Norway's Pension Fund Global

8. Prodigy Finance

9. Design at Mayo

10. Cadbury

11. City Hospital Emergency Room

13. Volkswagen

14. Marina Bay Sands

15. Shake Shack IPO

16. Mastercard

17. Netflix

18. Ant Financial

19. AXA: Creating the New CR Metrics

20. IBM Corporate Service Corps

21. Business Leadership in South Africa's 1994 Reforms

22. Alternative Meat Industry

23. Children's Premier

24. Khalil Tawil and Umi (A)

25. Palm Oil 2016

26. Teach For All: Designing a Global Network

27. What's Next? Search Fund Entrepreneurs Reflect on Life After Exit

28. Searching for a Search Fund Structure: A Student Takes a Tour of Various Options

30. Project Sammaan

31. Commonfund ESG

32. Polaroid

33. Connecticut Green Bank 2018: After the Raid

34. FieldFresh Foods

35. The Alibaba Group

36. 360 State Street: Real Options

37. Herman Miller

38. AgBiome

39. Nathan Cummings Foundation

40. Toyota 2010


The case is centered around the timeline of the Telangana graduates’ MLC elections 2021, which were held against the backdrop of a known unknown: the COVID-19 pandemic. The electoral officials had to be mindful of the numerous security protocols and complexities involved in implementing the election process in such uncertain times. They had to incorporate additional steps and plan for contingencies to mitigate risks while executing the election process. Halfway through the election planning process, it became clear that the number of voters and candidates was unprecedentedly large. This unexpected development necessitated a revision of the prior plan for conducting the elections. Shashank Goel, Chief Electoral Officer (CEO), and M. Satyavani, Deputy CEO, were architecting the plan for conducting the elections with an unexpectedly large number of voters and candidates under pandemic-induced disruptions. Goel was also reflecting on how to develop contingency plans for these elections, given the uncertainty produced by unforeseen external factors and the associated risks. Although he had the mandate to conduct free and fair elections within the stipulated timelines and was assured that the required resources would be provided, several factors had to be considered. According to the constitutional guidelines for the graduates' MLC elections, qualified and registered graduate voters could cast their vote by ranking candidates preferentially. Paper ballots had to be used because electronic voting machines (EVMs) could not handle preferential voting. The scale and magnitude of the elections necessitated jumbo ballot boxes. To manage the process, the number of polling stations had to be increased, and manpower had to be trained. Further, the presence of healthcare workers to ensure the safety of voters and the deployed staff was imperative. 
The Telangana CEO’s office had to meet the increased logistical and technical requirements and ensure high voting turnouts while executing the election process.

Postponing the election was not an option for the ECI from the standpoint of the legal code of conduct. The Telangana CEO's office prepared a revised election plan. The project plan was amended to incorporate the need for additional resources and logistical support to execute the election process. As the efforts of the staff were maximized effectively, the elections could be conducted smoothly and transparently although a large number of candidates were in the fray.

Teaching and Learning Objectives:

The key case objectives are to enable students to:

- Appreciate the importance of effective project management, planning, and execution in public administration against the backdrop of uncertainties and complexities.

- Understand the importance of risk identification, risk planning, and prioritization.

- Learn strategies to manage various project risks in a real-life situation.

- Identify the characteristics of effective leadership in times of crisis and the key takeaways from such scenarios

The case is designed to be used in courses on Nonprofit Operations Management, Data Analytics, Six Sigma, and Business Process Excellence/Improvement in MBA or Executive MBA programs. It is suitable for teaching students about the common problem of lower rates of volunteerism in nonprofit organizations. Further, the case study helps present the importance and application of inferential statistics (data analytics) to identify the impact of various factors on the problem (effect). The case is set in early 2021 when Shefali Sharma, the Strategy and Learning Manager with Teach For India (TFI), faced a few challenging questions from a professor at the Indian School of Business (ISB) during her presentation at an industry gathering in Hyderabad, India. Sharma was concerned about the low matriculation rate of TFI fellows, despite the rigorous recruitment, selection, and matriculation (RSM) process. A mere 50-60% matriculation rate was not a commensurate return for an investment of INR 6.5 million and the massive effort put into the RSM process. In 2017, Sharma organized focused informative and experiential events to motivate candidates to join the fellowship, but it was not very clear if these events impacted the TFI matriculation rate. After the industry gathering at ISB, Sharma followed up with the professor to seek his guidance in performing data analytics on the matriculation data. Sharma wondered if inferential data analysis could help her understand which demographic factors and events impact the matriculation rate.

Learning Objectives

- Illustrate the importance of inferential statistics as a decision support system in resolving business problems

- Formulate and solve a hypothesis testing problem for attribute (discrete) data

- Visually depict the flow of work across different stages of a process
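A hypothesis test on attribute (discrete) data, as named in the objectives above, can be sketched with a pooled two-proportion z-test. This is only a minimal illustration: the function name and the counts are hypothetical and are not taken from the case, and the case's own teaching note may use a different test.

```python
import math

def two_proportion_z_test(success_a, n_a, success_b, n_b):
    """Pooled two-proportion z-test; returns (z, two-sided p-value)."""
    p_a = success_a / n_a
    p_b = success_b / n_b
    # Pooled proportion under the null hypothesis that both rates are equal
    pooled = (success_a + success_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / std_err
    # Two-sided p-value from the standard normal distribution
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Hypothetical counts: candidates who matriculated among event attendees
# versus non-attendees (illustrative numbers only).
z_stat, p_val = two_proportion_z_test(120, 200, 90, 200)
print(f"z = {z_stat:.3f}, p = {p_val:.4f}")
```

A small p-value would suggest that the matriculation rates of the two groups differ; with real data, the groups and the significance level would need to be chosen before looking at the results.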

In response to the uncontrollable second wave of COVID-19 in the south Indian state of Telangana in April 2021, a few like-minded social activists in the capital city of Hyderabad came together to establish a 100-bed medical care center to treat COVID-19 patients. The project was named Ashray. Dr. Chinnababu Sunkavalli (popularly known as Chinna) was the project manager of Project Ashray. In addition to the inherent inadequacy of hospital beds to accommodate the growing number of COVID-19 patients till March 2021, the city faced a sudden spike of infections in April that worsened the situation. Consequently, the occupancy in government and private hospitals in Hyderabad increased by 485% and 311%, respectively, from March to April. From a prediction model, Chinna knew that hospital beds would be exhausted in several parts of the city in the next few days. The Project Ashray team was concerned about the situation. The team met on April 26, 2021, to schedule the project to establish the medical care center within the next 10 days. The case is suitable for teaching students how to approach the scheduling problem of a time-constrained project systematically. It serves as a pedagogical aid in teaching management concepts such as project visualization, estimating project duration, float, and project laddering or activity splitting, and tools such as network diagrams, critical path method, and crashing. The case exposes students to a real-time problem-solving approach under uncertainty and crises and the critical role of NGOs in supporting governments. Alongside Project Management and Operations Management courses, other courses such as Managerial Decision-Making in Nonprofit Organizations, Healthcare Delivery, and Healthcare Operations could also find support from this case.

Learning Objectives:

To learn:

- Time-constrained projects and associated scheduling problems

- Project visualization using network diagrams

- Activity sequencing and converting sequential activities to parallel activities

- Critical path method (early start, early finish, late start, late finish, forward pass, backward pass, and float) to estimate a project's overall duration

- Project laddering to reduce the project duration wherever possible

- Project crashing using linear programming
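The critical path method listed above can be sketched in a few lines. This is a minimal illustration that assumes an acyclic activity network; the activity names and durations are hypothetical and do not come from the Project Ashray case.

```python
from collections import defaultdict

def critical_path(durations, predecessors):
    """Forward and backward pass; returns (project duration, per-activity schedule)."""
    # Order activities so that every predecessor precedes its successors
    order, seen = [], set()

    def visit(activity):
        if activity in seen:
            return
        seen.add(activity)
        for pred in predecessors.get(activity, []):
            visit(pred)
        order.append(activity)

    for activity in durations:
        visit(activity)

    # Forward pass: early start (ES) and early finish (EF)
    es, ef = {}, {}
    for a in order:
        es[a] = max((ef[p] for p in predecessors.get(a, [])), default=0)
        ef[a] = es[a] + durations[a]
    project_duration = max(ef.values())

    successors = defaultdict(list)
    for a, preds in predecessors.items():
        for p in preds:
            successors[p].append(a)

    # Backward pass: late finish (LF) and late start (LS)
    ls, lf = {}, {}
    for a in reversed(order):
        lf[a] = min((ls[s] for s in successors[a]), default=project_duration)
        ls[a] = lf[a] - durations[a]

    schedule = {
        a: {"ES": es[a], "EF": ef[a], "LS": ls[a], "LF": lf[a], "float": ls[a] - es[a]}
        for a in durations
    }
    return project_duration, schedule

# Hypothetical four-activity network (durations in days)
durations = {"A": 3, "B": 2, "C": 4, "D": 2}
predecessors = {"B": ["A"], "C": ["A"], "D": ["B", "C"]}
total_days, schedule = critical_path(durations, predecessors)
critical = [a for a in durations if schedule[a]["float"] == 0]
print(total_days, critical)
```

Activities with zero float form the critical path; shortening the project requires shortening (or splitting) one of those activities, which is where laddering and crashing come in.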

The case goes on to describe the enormous challenges involved in building the 4.94 km long Bogibeel Bridge in the North Eastern Region (NER) of India. When it was finally commissioned in 2018, it was hailed as a marvel of engineering. With two rail lines and a two-lane road over it, the bridge spanned the mighty Brahmaputra river. The Bogibeel Bridge was India's longest and Asia's second-longest road and rail bridge with fully-welded bridge technology that met European codes and welding standards. The interstate connectivity provided by the bridge enabled important socio-economic developments in the NER that included improved logistics and transportation, the growth of medical and educational facilities, higher employment, and the rise of international trade and tourism. While the outcomes of the project were significant, the efforts that went into constructing the Bogibeel Bridge were equally so. This case study is designed to teach the importance of effective risk planning in project management. Further, the case introduces students to earned value analysis and project oversight in managing large projects. The case centers on Indian Railways' need to quickly discover why the Bogibeel project was not going according to plan. The case also serves as a resource to teach public operations management where the focus is on projects and operations that result in socio-economic outcomes.

- Appreciate the importance of risk planning and risk prioritization and learn strategies to manage various project risks

- Understand earned value management (EVM) and the associated metrics and calculations for project evaluation on time and cost schedules.

- Identify social impact outcomes in public/infrastructure projects.
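The standard earned value metrics mentioned above can be computed as in the following sketch. The budget and progress figures are hypothetical and are not taken from the Bogibeel case, and the estimate at completion uses only one common formula (budget at completion divided by CPI).

```python
def earned_value_metrics(bac, planned_fraction, earned_fraction, actual_cost):
    """Compute basic earned value metrics for a project status date.

    bac: budget at completion
    planned_fraction: share of work scheduled to be complete by now
    earned_fraction: share of work actually complete by now
    actual_cost: cost incurred so far
    """
    pv = bac * planned_fraction   # planned value
    ev = bac * earned_fraction    # earned value
    cpi = ev / actual_cost        # cost performance index
    spi = ev / pv                 # schedule performance index
    return {
        "PV": pv,
        "EV": ev,
        "CV": ev - actual_cost,   # cost variance (negative means over budget)
        "SV": ev - pv,            # schedule variance (negative means behind schedule)
        "CPI": cpi,
        "SPI": spi,
        "EAC": bac / cpi,         # estimate at completion, assuming current CPI persists
    }

# Hypothetical status: 50% planned, 40% done, 500 spent of a 1000 budget
metrics = earned_value_metrics(1000, 0.5, 0.4, 500)
for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```

A CPI or SPI below 1 signals that the project is over budget or behind schedule, respectively.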

Access to clean water is so critical for development and survival that the United Nations' Sustainable Development Goal number 6 (SDG-6) was to ensure availability and sustainable management of water and sanitation. The World Health Organization (WHO) in 2006 estimated that 97 million Indians lacked clean and safe water. Fluoride and total dissolved solids (TDS) in drinking water were dangerously high in many parts of rural India, with adverse impacts. On the other hand, buying clean drinking water from commercial vendors at market rates was not a realistic alternative: it was a costly recurring expense that much of India's rural population could not afford. The case tracks the efforts of Huggahalli, head of the technology group of Sri Sathya Sai Seva Organisations (SSSO), to devise a sustainable solution to the drinking water problem in rural India that is low on cost and high on impact. They eventually develop a model that satisfies all these criteria and becomes the basis for a project called Premamrutha Dhaara. Funded by Sri Sathya Sai Central Trust, the project aims to install water purification plants in more than 100 villages spanning six states in India, with the ultimate goal of turning over plant operations to the beneficiary villages and setting up a welfare fund in each village from the revenue generated. Social service projects, particularly in developing countries, have their unique challenges. The case highlights the importance of performing feasibility analysis as part of the project planning in social projects. The case also describes how the financial and operational dimensions of sustainability could lead to a self-sustainable system. The social innovation framework used to deploy the water purification project to achieve broader rural welfare has wider implications for project management, social innovation and change, sustainable operations management, strategic non-profit management, and public policy.

The case offers four possibilities for central objectives:

- To perform feasibility analysis in a Project Management course

- To design a social innovation framework in a Social Innovation and Change course

- To understand the dimensions of self-sustainability in a Sustainable Operations Management course

- To measure social impact in Strategic Non-profit Management and Public Policy courses

During the Indian general election of 2019, the Nizamabad constituency in Telangana state found itself in an unprecedented situation with a record 185 candidates competing for one seat. Most of these candidates were local farmers who saw the election as a platform for raising awareness about local issues, particularly the perceived lack of government support for guaranteeing minimum support prices for their crops. More than 185 candidates had in fact contested elections from a single constituency in a handful of elections in the past. The Election Commission of India (ECI) had declared them to be "special elections" where it made exceptions to the original election schedule to accommodate the large number of candidates. However, in the 2019 general election, the ECI made no such exceptions, announcing instead that polling in Nizamabad would be conducted as per the original schedule and results would be declared at the same time as the rest of the country. This presented a unique and unexpected challenge for Rajat Kumar, the Telangana Chief Electoral Officer (CEO), and his team. How were they to conduct free and fair elections within the mandated timeframe with the largest number of electronic voting machines (EVMs) ever deployed to address the will of 185 candidates in a constituency with 1.55 million voters from rural and semi-urban areas? Case A describes the electoral process followed by the world's largest democracy to guarantee free and fair elections. It concludes by posing several situational questions, the answers to which will determine whether the polls in Nizamabad are conducted successfully or not. Case B, which should be revealed after students have had a chance to deliberate on the challenges posed in Case A, describes the decisions and actions taken by Kumar and his team in preparation for the Nizamabad polls and the events that took place on election day and afterward.

- To demonstrate how a quantitative approach to decision making can be used in the public policy domain to achieve end goals.

- To learn how resource allocation decisions can be made by understanding the scale of the problem, the various resource constraints, and the end goals.

- To discover operational innovations in the face of regulatory and technical constraints and complete the required steps.

- To understand the multiple steps involved in conducting elections in the Indian context.

Set in April 2017, this case centers around the digital technology dilemma facing the protagonist Dr. Vimohan, the chief intensivist of Prashant Hospital. The case describes the critical challenges afflicting the intensive care unit (ICU) of the hospital. It then follows Dr. Vimohan as he visits the Bengaluru headquarters of Cloudphysician Healthcare, a Tele-ICU provider. The visit leaves Dr. Vimohan wondering whether he can leverage the Tele-ICU solution to overcome the challenges at Prashant Hospital. He instinctively knew that he would need to use a combination of qualitative and quantitative analysis to resolve this dilemma.

The case study enables critical thinking and decision-making to address the business situation. Assessing the pros and cons of a potential technology solution, examining the readiness of an organization and devising a framework for effective stakeholder and change management are some of the key concepts. Associated tools include cost-benefit analysis, net present value (NPV) analysis, force-field analysis, and change-readiness assessment, in addition to a brief discussion on SWOT analysis.
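Of the tools listed above, net present value is the most directly computable. The sketch below assumes cash flows at yearly intervals with the first one occurring immediately; the investment, savings, and discount rate are hypothetical and are not figures from the Prashant Hospital case.

```python
def npv(rate, cash_flows):
    """Net present value; cash_flows[0] occurs now, cash_flows[t] after t periods."""
    return sum(cf / (1 + rate) ** t for t, cf in enumerate(cash_flows))

# Hypothetical project: 1000 invested today, 400 saved in each of three years
flows = [-1000, 400, 400, 400]
value_at_10_percent = npv(0.10, flows)
print(f"NPV at 10%: {value_at_10_percent:.2f}")
```

A negative NPV at the chosen discount rate suggests the investment does not pay back on financial terms alone, which is why the qualitative tools (force-field analysis, change-readiness assessment) are weighed alongside it.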

Set in 2016 in Hyderabad, India, the case follows Puvvala Yugandhar, Senior Vice President at Dr. Reddy's Laboratories (DRL), as he decides what to do about an underperforming production policy at their plants. Adopted a decade earlier, the policy, called Replenish to Consumption -Pooled (RTC-P), had not delivered the expected results. Specifically, the plants had been seeing an increase in production switchovers and creeping buffer levels for certain products, which had led to higher holding costs and lost sales for certain products. A senior consultant had suggested that DRL switch to a demand estimation-based policy called Replenish to Anticipation (RTA), which attempted to address the above concerns by segregating production capacity and updating buffer levels using demand estimates. However, Yugandhar, well aware of the challenges of changing production policies, wanted to explore a variant of RTC-P called Replenish to Consumption -Dedicated (RTC-D), which followed the same buffer update rules as RTC-P but maintained dedicated capacities for a subset of products.

By studying and solving the decision problem in the case, students should be able to better appreciate the challenges involved in making long-term operational changes. It gives them an opportunity to understand (1) how each input might impact the final decision, and (2) how to weigh each of these inputs in arriving at the final decision.

We crafted the case study "Software Acquisition for Employee Engagement at Pilot Mountain Research" for use in Business Marketing, Buyer Behavior, or Operations Management courses in undergraduate, MBA, or Executive Education programs. The Pilot Mountain Market Research (PMMR) case study provides students with the opportunity to examine how buying decisions can be made utilizing online digital tools that are increasingly available to business-to-business (B2B) purchasing managers. To do so, we created fictitious research studies and data to realistically portray the kinds of information that are publicly available to B2B purchasing managers on the Internet today. In this case study, we introduce students to fit analysis, coding quality technical assessment, sentiment analysis, and ratings & reviews analyses. Students are challenged to integrate findings from these diverse analytical tools, combining both qualitative and quantitative data into concrete employee engagement software (EES) purchasing recommendations.

1. Evolving criteria for selecting a software package for organization-wide procurement in a B2B purchase decision context

2. Appreciate increasing digitalization of businesses

3. Understand importance of employee engagement in organizations and what an organization could do to enhance employee engagement among its workforce

4. Understand decision making processes in the context of digitalisation of businesses


Case Study In Operations Management

2011, Journal of Business Case Studies (JBCS)

This case study is conducted within the context of the Theory of Constraints. The field research reported in this document contains information specific to the telecommunications industry. An examination of the history, organization design, problems and solutions for one telecommunications company is undertaken from the perspective of academic work in the Theory of Constraints. The information included in this document was developed through interviews with four senior managers including the President, the Chief Technology Officer, a Vice President and a department manager. Their responses were the basis of identifying problems and undesirable effects. The undesirable effects were diagrammed in six UDE clouds dealing with the following issues: 1- unclear vision from management to employees; 2- supplier; 3- market; 4- the price and regulation environment; 5- production; and 6- bureaucracy. These undesirable effects were logically examined until a single cloud depicting the core confli...

Related Papers

CHIRANJIB BHOWMIK

The aim of this paper is to implement TOC in a forging area in which constraints limit the throughput of the system, so as to enhance quality and reduce errors. Many quality improvement (QI) approaches have a limited evaluation of the factors in the selection of QI projects. Theory of constraints (TOC) has been proposed as a remedy for the better selection of QI projects. The strategic Thinking Processes (TP) of the theory of constraints are designed to tackle major problems faced by organizations. The paper proposes an improvement of the TOC-based TP in one of the leading forging companies in India to identify and overcome the system constraints in the business. The results show that the TOC-TP identifies the production constraints and suggests measures to improve the system. The research is applicable to any production house in which product quality reduces the throughput of the organization. This is the first time that the theory of constraints philosophy has been used to maximize...

Jesus Ramon Melendez

The investigations began with the drum-buffer-rope architecture, as the basis of the Theory of Constraints (TOC). Currently, TOC has been applied in various business sectors. With the support of mathematical models and simulation, it has been possible to optimize the productive processes. The objective of this study was to determine the investigative tendencies of the TOC in the different productive sectors and its application in business management environments. The results establish that its application increases the efficiency of the process.

Nigerian Chapter of Arabian Journal of Business and Management Review

Hamed Alizadeh

In today’s economic climate, many organizations struggle with declining sales and increasing costs. Some choose to hunker down and weather the storm, hoping for better results in the future. However, layoffs and workforce reductions jeopardize future competitiveness. By contrast, organizations that have implemented the Theory of Constraints (TOC) continue to thrive and grow in difficult times, achieving real bottom-line growth, whether by improving productivity or increasing revenues. In this paper, a furniture manufacturing organization is studied, and the main constraints on maximum throughput are identified by applying a thinking process tool called the “Theory of Constraints” (TOC). Drum Buffer Rope (DBR) is applied for capacity planning: the time for each identified process and the workload for each work center are calculated, and the capacity constraint machine is then identified. The proper solution has been provided to o...

Niek Du Preez

Erkam Guresen

Theory of constraints (TOC) is a technique that produces solutions for every kind of bottleneck in a short time. The philosophy of the theory is to determine the weakest part of the process chain and to eliminate this constraint point by taking action. After improvement, the next weakest part of the process chain is determined, and so on, for continuous improvement. The main goal is to apply improvement actions continuously to reach an excellent system structure. This paper describes how the five main steps of the theory of constraints were applied to eliminate waste at a supplier firm in Turkey.

Aitor Lizarralde

Purpose: The theory of constraints (TOC) drum-buffer-rope methodology is appropriate when managing a production plant in complex environments, such as make-to-order (MTO) scenarios. However, some difficulties have been detected in implementing this methodology in such changing environments. This case study analyses an MTO company to identify the key factors that influence the execution of the third step of TOC. It also aims to evaluate in more depth the research started by Lizarralde et al. (2020) and compare the results with the existing literature. Design/methodology/approach: The case study approach is selected as a research methodology because of the need to investigate a current phenomenon in a real environment. Findings: In the case study analysed, the protective capacity of non-bottleneck resources is found to be the key factor when subordinating the MTO system to a bottleneck (BN). Furthermore, it coincides with one of the two key factors defined by the literature, namely protec...


Machine Learning and image analysis towards improved energy management in Industry 4.0: a practical case study on quality control

- Original Article

- Open access

- Published: 13 May 2024

- Volume 17 , article number 48 , ( 2024 )


- Mattia Casini 1 ,

- Paolo De Angelis 1 ,

- Marco Porrati 2 ,

- Paolo Vigo 1 ,

- Matteo Fasano 1 ,

- Eliodoro Chiavazzo 1 &

- Luca Bergamasco ORCID: orcid.org/0000-0001-6130-9544 1

With the advent of Industry 4.0, Artificial Intelligence (AI) has created a favorable environment for the digitalization of manufacturing and processing, helping industries to automate and optimize operations. In this work, we focus on a practical case study of a brake caliper quality control operation, which is usually accomplished by human inspection and requires a dedicated handling system, with a slow production rate and thus inefficient energy usage. We report on a developed Machine Learning (ML) methodology, based on Deep Convolutional Neural Networks (D-CNNs), to automatically extract information from images and thereby automate the process. A complete workflow has been developed on the target industrial test case. In order to find the best compromise between accuracy and computational demand of the model, several D-CNN architectures have been tested. The results show that a judicious choice of the ML model, with proper training, allows fast and accurate quality control; thus, the proposed workflow could be implemented for an ML-powered version of the considered problem. This would eventually enable a better management of the available resources, in terms of time consumption and energy usage.


Introduction

An efficient use of energy resources in industry is key for a sustainable future (Bilgen, 2014; Ocampo-Martinez et al., 2019). The advent of Industry 4.0 and of Artificial Intelligence has created a favorable context for the digitalisation of manufacturing processes. In this view, Machine Learning (ML) techniques have the potential to assist industries in a better and smarter usage of the available data, helping to automate and improve operations (Narciso & Martins, 2020; Mazzei & Ramjattan, 2022). For example, ML tools can be used to analyze sensor data from industrial equipment for predictive maintenance (Carvalho et al., 2019; Dalzochio et al., 2020), which allows identification of potential failures in advance and thus better planning of maintenance operations with reduced downtime. Similarly, energy consumption optimization (Shen et al., 2020; Qin et al., 2020) can be achieved via ML-enabled analysis of available consumption data, with consequent adjustments of the operating parameters, schedules, or configurations to minimize energy consumption while maintaining an optimal production efficiency. Energy consumption forecast (Liu et al., 2019; Zhang et al., 2018) can also be improved, especially in industrial plants relying on renewable energy sources (Bologna et al., 2020; Ismail et al., 2021), by analysis of historical data on weather patterns and forecast, to optimize the usage of energy resources, avoid energy peaks, and leverage alternative energy sources or storage systems (Li & Zheng, 2016; Ribezzo et al., 2022; Fasano et al., 2019; Trezza et al., 2022; Mishra et al., 2023). Finally, ML tools can also serve for fault or anomaly detection (Angelopoulos et al., 2019; Md et al., 2022), which allows prompt corrective actions to optimize energy usage and prevent energy inefficiencies.
Within this context, ML techniques for image analysis (Casini et al., 2024) are also gaining increasing interest (Chen et al., 2023), for their application to e.g. materials design and optimization (Choudhury, 2021), quality control (Badmos et al., 2020), process monitoring (Ho et al., 2021), or detection of machine failures by converting time series data from sensors to 2D images (Wen et al., 2017).

Incorporating digitalisation and ML techniques into Industry 4.0 has led to significant energy savings (Maggiore et al., 2021; Nota et al., 2020). Projects adopting these technologies can achieve an average of 15% to 25% improvement in energy efficiency in the processes where they were implemented (Arana-Landín et al., 2023). For instance, in predictive maintenance, ML can reduce energy consumption by optimizing the operation of machinery (Agrawal et al., 2023; Pan et al., 2024). In process optimization, ML algorithms can improve energy efficiency by 10-20% by analyzing and adjusting machine operations for optimal performance, thereby reducing unnecessary energy usage (Leong et al., 2020). Furthermore, the implementation of ML algorithms for optimal control can lead to energy savings of 30%, because these systems can make real-time adjustments to production lines, ensuring that machines operate at peak energy efficiency (Rahul & Chiddarwar, 2023).

In automotive manufacturing, ML-driven quality control can lead to energy savings by reducing the need for redoing parts or running inefficient production cycles (Vater et al., 2019). In high-volume production environments such as consumer electronics, novel computer-based vision models for automated detection and classification of damaged packages from intact packages can speed up operations and reduce waste (Shahin et al., 2023). In heavy industries like steel or chemical manufacturing, ML can optimize the energy consumption of large machinery. By predicting the optimal operating conditions and maintenance schedules, these systems can save energy costs (Mypati et al., 2023). Compressed air is one of the most energy-intensive processes in manufacturing. ML can optimize the performance of these systems, potentially leading to energy savings by continuously monitoring and adjusting the air compressors for peak efficiency, avoiding energy losses due to leaks or inefficient operation (Benedetti et al., 2019). ML can also contribute to reducing energy consumption and minimizing incorrectly produced parts in polymer processing enterprises (Willenbacher et al., 2021).

Here we focus on a practical industrial case study of brake caliper processing. Specifically, we consider the quality control operation, which is typically accomplished by human visual inspection and requires a dedicated handling system. This implies a slower production rate and inefficient energy usage. We thus propose the integration of an ML-based system that performs the quality control operation automatically, without the need for a dedicated handling system and therefore with a reduced operation time. To this end, we rely on ML tools able to analyze and extract information from images, namely deep convolutional neural networks, D-CNNs (Alzubaidi et al., 2021; Chai et al., 2021).

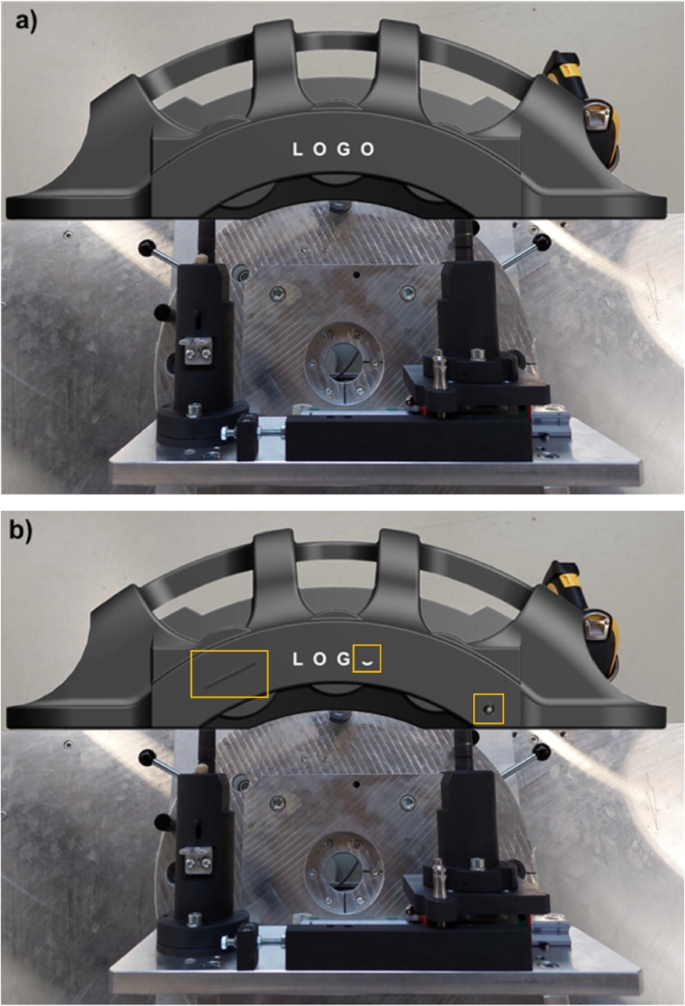

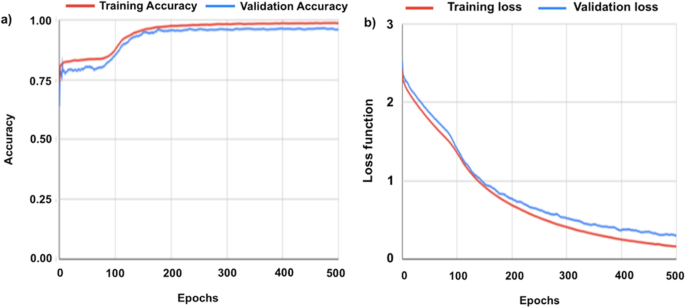

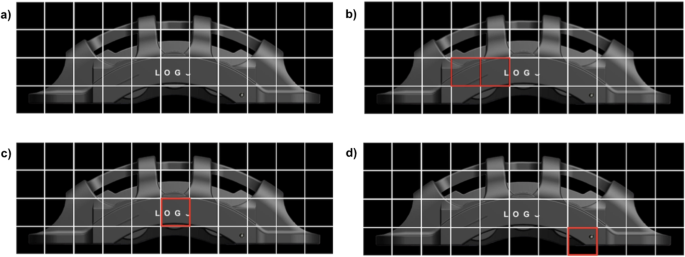

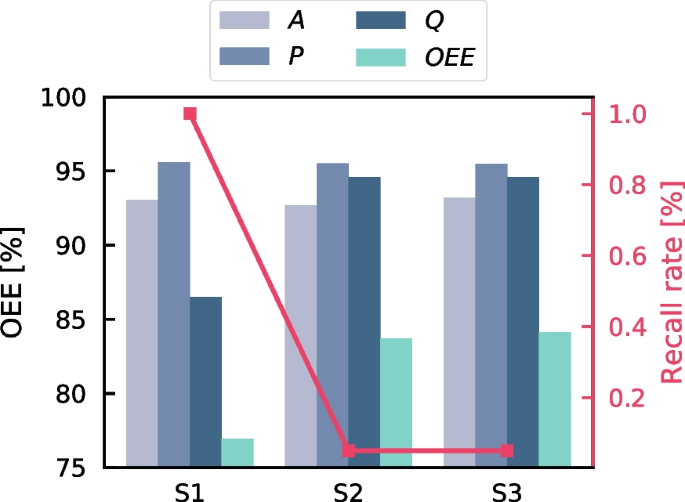

Fig. 1 Sample 3D model (GrabCAD) of the considered brake caliper: (a) part without defects, and (b) part with three sample defects, namely a scratch, a partially missing letter in the logo, and a circular painting defect (shown by the yellow squares, from left to right respectively)

A complete workflow for this purpose has been developed and tested on a real industrial test case. It includes: a dedicated pre-processing of the brake caliper images; their labelling and analysis using two dedicated D-CNN architectures (one for background removal, and one for defect identification); and the post-processing and analysis of the neural network output. Several different D-CNN architectures have been tested in order to find the best model in terms of accuracy and computational demand. The results show that a judicious choice of the ML model, combined with proper training, yields fast and accurate recognition of possible defects. Indeed, the best-performing models reach over 98% accuracy on the target criteria for quality control, and take only a few seconds to analyze each image. These results make the proposed workflow compliant with typical industrial expectations; therefore, in perspective, it could be implemented as an ML-powered version of the considered quality control process. This would eventually improve the performance of the manufacturing process and, ultimately, the management of the available resources in terms of time consumption and energy expense.

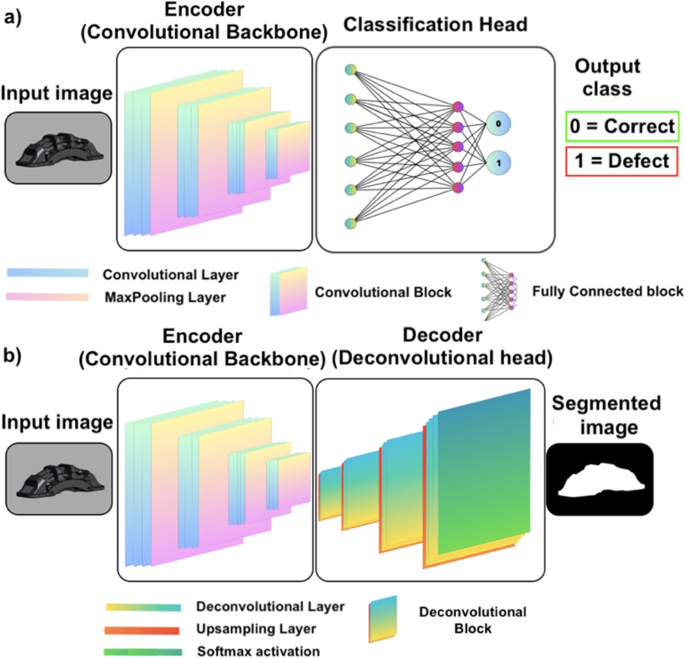

Fig. 2 Different neural network architectures: convolutional encoder (a) and encoder-decoder (b)

The industrial quality control process that we target is the visual inspection of manufactured components to verify the absence of possible defects. For reasons of industrial confidentiality, a representative open-source 3D geometry (GrabCAD), similar to the original parts, is shown in Fig. 1. For illustrative purposes, the clean geometry without defects (Fig. 1(a)) is compared to the geometry with three possible sample defects, namely: a scratch on the surface of the brake caliper, a partially missing letter in the logo, and a circular painting defect (highlighted by the yellow squares, from left to right respectively, in Fig. 1(b)). Note that one or multiple defects may be present on the geometry, and that other types of defects may also be considered.

Within the industrial production line, this quality control is typically time-consuming and requires a dedicated handling system, with the associated slow production rate and energy inefficiencies. Thus, we developed a methodology to achieve an ML-powered version of the control process. The method relies on data analysis and, in particular, on information extraction from images of the brake calipers via Deep Convolutional Neural Networks, D-CNNs (Alzubaidi et al., 2021). The designed workflow for defect recognition is implemented in the following two steps: 1) removal of the background from the image of the caliper, in order to reduce noise and irrelevant features in the image, ultimately making the algorithms more flexible with respect to the background environment; 2) analysis of the geometry of the caliper to identify the different possible defects. These two serial steps are accomplished via two different, dedicated neural networks, whose architecture is discussed in the next section.

Convolutional Neural Networks (CNNs) are a particular class of deep neural networks for information extraction from images. The feature extraction is accomplished via convolution operations: the algorithms receive an image as an input, analyze it across several (deep) neural layers to identify target features, and provide the obtained information as an output (Casini et al., 2024). The format of this output depends on the architecture of the neural network. For a numerical output, such as that required to classify the content of an image (Bhatt et al., 2021), e.g. correct or defective caliper in our case, a typical layout consisting of a convolutional backbone followed by a fully-connected network can be adopted (see Fig. 2(a)). On the other hand, if the required output is itself an image, a more complex architecture with a convolutional backbone (encoder) and a deconvolutional head (decoder) can be used (see Fig. 2(b)).
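As a minimal illustration of the convolution operation that underlies feature extraction, the sketch below slides a single 3x3 kernel over a small grayscale image in plain Python. The function name `conv2d_valid`, the toy image and the hand-picked edge-detecting kernel are assumptions made for illustration only; the networks used in this work stack many such filters, whose weights are learned during training rather than chosen by hand.

```python
def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` (lists of lists) without padding.

    As in most deep learning libraries, the kernel is not flipped,
    so strictly speaking this is a cross-correlation.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            acc = 0.0
            # Weighted sum of the image patch under the kernel
            for di in range(kh):
                for dj in range(kw):
                    acc += image[i + di][j + dj] * kernel[di][dj]
            row.append(acc)
        output.append(row)
    return output


# Toy 4x4 image (illustrative): dark left half, bright right half
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# Hand-picked kernel that responds to vertical edges (assumption)
kernel = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

feature_map = conv2d_valid(image, kernel)
print(feature_map)
```

Each value of the resulting feature map measures how strongly the corresponding image patch matches the pattern encoded by the kernel, here a dark-to-bright transition from left to right.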

As previously introduced, our workflow analyzes the brake calipers in a two-step procedure: first, the background is removed from the input image (e.g. Fig. 1); second, the geometry of the caliper is analyzed and the part is classified as acceptable or not, depending on the absence or presence of any defect, respectively. Thus, in the first step of the procedure, a dedicated encoder-decoder network (Minaee et al., 2021) is adopted to classify the pixels in the input image as brake or background. The output of this model is then a new version of the input image, where the background pixels are blacked out. This helps the algorithms in the subsequent analysis to achieve better performance, and avoids bias due to possible different environments in the input image. In the second step of the workflow, a dedicated encoder architecture is adopted. Here, the background-filtered image is fed to the convolutional network, and the geometry of the caliper is analyzed to spot possible defects and thus classify the part as acceptable or not. In this work, both deep learning models are supervised, that is, the algorithms are trained with the help of human-labeled data (LeCun et al., 2015). In particular, the first algorithm for background removal is fed with the original image as well as with a ground truth (i.e. a binary image, also called mask, consisting of black and white pixels), which instructs the algorithm to learn which pixels belong to the brake and which to the background. This task is usually called semantic segmentation in Machine Learning and Deep Learning (Géron, 2022). Analogously, the second algorithm is fed with the original image (without the background) along with an associated mask, which provides the neural network with proper instructions to identify possible defects on the target geometry.
The required pre-processing of the input images, as well as their use for training and validation of the developed algorithms, are explained in the next sections.
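The two-step procedure described above can be sketched in a few lines of plain Python. The names `remove_background` and `inspect_part`, as well as the stand-in functions `toy_segment` and `toy_classify`, are hypothetical: in the actual workflow the two steps are performed by trained neural networks, which are replaced here by trivial color rules only to make the data flow explicit.

```python
BLACK = (0, 0, 0)


def remove_background(image, mask):
    """Black out every pixel whose mask value is 0 (background)."""
    return [
        [pixel if keep else BLACK for pixel, keep in zip(img_row, mask_row)]
        for img_row, mask_row in zip(image, mask)
    ]


def inspect_part(image, segment, classify):
    """Step 1: segment and filter the background. Step 2: classify."""
    mask = segment(image)
    filtered = remove_background(image, mask)
    return classify(filtered)


def toy_segment(image):
    """Stand-in for the encoder-decoder network (assumption):
    a pixel counts as 'brake' if its red channel is high."""
    return [[1 if pixel[0] > 150 else 0 for pixel in row] for row in image]


def toy_classify(filtered, nominal=(200, 30, 30), tolerance=20):
    """Stand-in for the encoder network (assumption): flag the part if
    any retained pixel deviates too much from a nominal paint color."""
    for row in filtered:
        for pixel in row:
            if pixel == BLACK:
                continue  # background pixel, ignored
            deviation = max(abs(c - n) for c, n in zip(pixel, nominal))
            if deviation > tolerance:
                return "defective"
    return "acceptable"


# Toy 2x2 RGB image: red caliper pixels on the left, gray background on the right
image = [
    [(200, 30, 30), (90, 90, 90)],
    [(210, 40, 40), (80, 80, 80)],
]

print(inspect_part(image, toy_segment, toy_classify))
```

Passing the two models as arguments keeps the steps serial and independent, mirroring the use of two separate, dedicated networks.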

Image pre-processing

Machine Learning approaches rely on data analysis; thus, the quality of the final results is well known to depend strongly on the amount and quality of the data available for training the algorithms (Banko & Brill, 2001; Chen et al., 2021). In our case, the input images should be representative of the target analysis and include adequate variability of the possible features, to allow the neural networks to produce the correct output. In this view, the original images should include, e.g., different possible backgrounds, different viewing angles of the considered geometry and different light exposures (as local light reflections may affect the color of the geometry and thus the analysis). Creating such a dataset for specific cases is not always straightforward; in our case, for example, it would imply a systematic acquisition of a large set of images in many different conditions. This would require, in turn, having all the possible target defects available on real parts, as well as an automatic acquisition system, e.g., a robotic arm with an integrated camera. Given that, in our case, the initial dataset could not be generated on real parts, we chose to generate a well-balanced dataset of images in silico, that is, based on image renderings of the real geometry. The key idea was that, if the rendered geometry is sufficiently close to a real photograph, the algorithms may be trained on artificially generated images and then tested on a few real ones. This approach, if properly automated, makes it easy to produce a large number of images in all the different conditions required for the analysis.

In a first step, starting from the CAD file of the brake calipers, we worked manually in the open-source software Blender (Blender) to modify the material properties and achieve a realistic rendering. After that, defects were generated by means of Boolean (subtraction) operations between the geometry of the brake caliper and ad-hoc geometries for each defect. Fine-tuning of the generated defects allowed for a realistic representation of the different defects. Once the results were satisfactory, we developed an automated Python code for these procedures, to generate the renderings in different conditions. The Python code makes it possible to: load a given CAD geometry, change the material properties, set different viewing angles for the geometry, add different types of defects (with given size, rotation and location on the geometry of the brake caliper), add a custom background, change the lighting conditions, render the scene and save it as an image.

In order to make the dataset as varied as possible, we introduced three light sources into the rendering environment: a diffuse natural lighting to simulate daylight conditions, and two additional artificial lights. The intensity of each light source and the viewing angle were then varied randomly, to mimic different daylight conditions and illuminations of the object. This procedure was designed to provide different situations akin to real use, and to make the model invariant to lighting conditions and camera position. Moreover, to provide additional flexibility to the model, the training dataset of images was virtually expanded using data augmentation (Mumuni & Mumuni, 2022), where saturation, brightness and contrast were varied randomly during training. This procedure considerably increased the number and variety of the images in the training dataset.
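A minimal sketch of the kind of color augmentation mentioned above is given below in plain Python, for pixels stored as RGB triples in the range [0, 1]. The function names, the jitter range of ±20% and the specific formulas are assumptions chosen for illustration (the gray level uses the common Rec. 601 luma weights); the augmentation actually used for training may differ in all of these details.

```python
import random


def clamp(value):
    """Keep a channel value inside the valid range [0, 1]."""
    return min(1.0, max(0.0, value))


def adjust_pixel(rgb, brightness=1.0, contrast=1.0, saturation=1.0):
    """Apply saturation, contrast and brightness factors to one pixel."""
    gray = 0.299 * rgb[0] + 0.587 * rgb[1] + 0.114 * rgb[2]
    # Saturation: move each channel towards or away from the gray level
    channels = [gray + saturation * (c - gray) for c in rgb]
    # Contrast: stretch or compress around mid-gray
    channels = [0.5 + contrast * (c - 0.5) for c in channels]
    # Brightness: global scaling
    channels = [brightness * c for c in channels]
    return tuple(clamp(c) for c in channels)


def augment(image, rng, max_jitter=0.2):
    """Draw one random factor per property and apply it to the whole image."""
    factors = {
        name: rng.uniform(1.0 - max_jitter, 1.0 + max_jitter)
        for name in ("brightness", "contrast", "saturation")
    }
    return [[adjust_pixel(pixel, **factors) for pixel in row] for row in image]


rng = random.Random(42)  # fixed seed only for reproducibility of the example
image = [[(0.8, 0.1, 0.1), (0.3, 0.3, 0.3)]]
augmented = augment(image, rng)
print(augmented)
```

Because new factors are drawn at every call, the same source image yields a different variant each time it is presented during training, which is what virtually expands the dataset.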

The automated pre-processing steps developed here readily allow for batch generation of thousands of different images to be used for training the neural networks. This possibility is key for proper training of the neural networks, as the variability of the input images allows the models to learn the possible features and details that may change under real operating conditions.
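The batch generation described above can be sketched as the random sampling of one scene description per image. The example below is an assumption-laden outline: the class names `Defect` and `SceneConfig`, the function `sample_scene`, the file names and all numerical ranges are hypothetical, and the rendering step itself (performed with Blender in this work) is deliberately left out, so that only the randomization logic is shown.

```python
import random
from dataclasses import dataclass, field

# The three sample defect types shown in Fig. 1 (labels are illustrative)
DEFECT_TYPES = ("scratch", "missing_letter", "paint_defect")


@dataclass
class Defect:
    kind: str
    size: float          # arbitrary units (assumption)
    rotation_deg: float
    location: tuple      # normalized (u, v) position on the part (assumption)


@dataclass
class SceneConfig:
    cad_path: str
    view_angle_deg: tuple     # (azimuth, elevation)
    background: str
    light_intensities: tuple  # one natural light and two artificial lights
    defects: list = field(default_factory=list)


def sample_scene(cad_path, backgrounds, rng, defect_probability=0.5):
    """Draw one random scene description; all ranges are illustrative."""
    defects = []
    if rng.random() < defect_probability:
        for _ in range(rng.randint(1, 3)):
            defects.append(Defect(
                kind=rng.choice(DEFECT_TYPES),
                size=rng.uniform(0.5, 2.0),
                rotation_deg=rng.uniform(0.0, 360.0),
                location=(rng.random(), rng.random()),
            ))
    return SceneConfig(
        cad_path=cad_path,
        view_angle_deg=(rng.uniform(0.0, 360.0), rng.uniform(10.0, 80.0)),
        background=rng.choice(backgrounds),
        light_intensities=tuple(rng.uniform(0.2, 1.0) for _ in range(3)),
        defects=defects,
    )


rng = random.Random(0)  # fixed seed only for reproducibility of the example
backgrounds = ["background_a.png", "background_b.png"]  # hypothetical files
batch = [sample_scene("caliper.stl", backgrounds, rng) for _ in range(1000)]
defective = sum(1 for scene in batch if scene.defects)
print(f"{defective} of {len(batch)} sampled scenes contain defects")
```

Each sampled configuration would then be passed to the renderer, which keeps the randomization logic separate from the rendering back end and makes the composition of the dataset easy to inspect before any image is produced.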

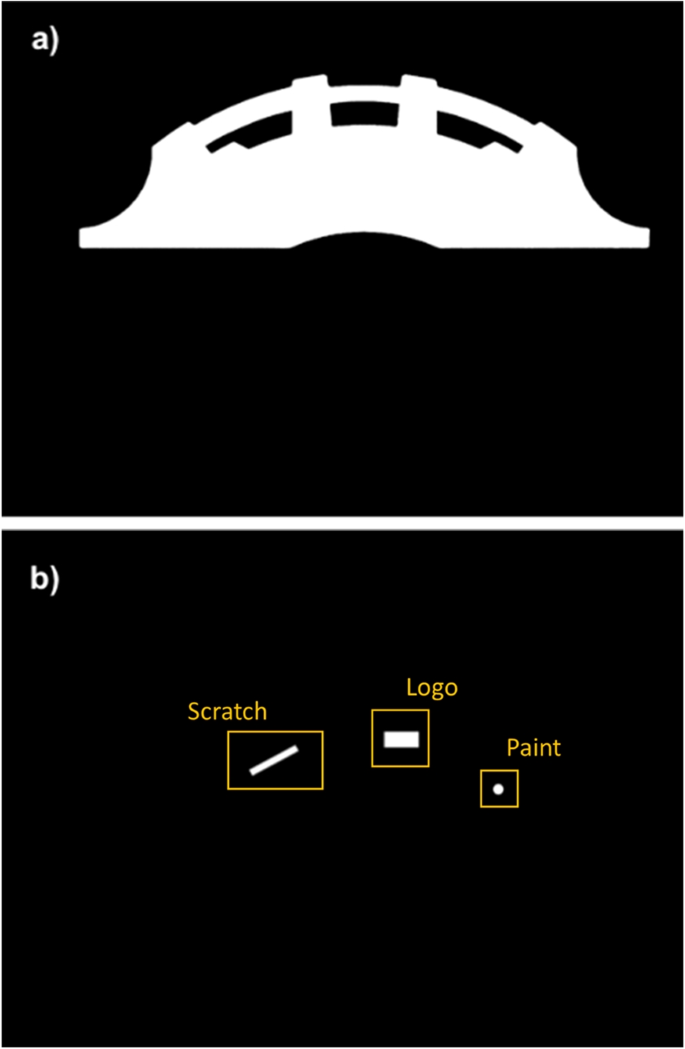

Fig. 3 Examples of the ground truth for the two target tasks: background removal (a) and defects recognition (b)

The first tests using this virtual database showed that, although the generated images were very similar to real photographs, the models were not able to properly recognize the target features in real images. Thus, in an attempt to get closer to a proper set of real images, we decided to adopt a hybrid dataset, where the virtually generated images were mixed with the few available real ones. However, given that some possible defects were missing in the real images, we also decided to manipulate the real images to introduce virtual defects on them. The resulting dataset included more than 4,000 images, of which 90% were rendered and 10% were obtained from real images. To avoid possible bias in the training dataset, defects were present in 50% of the cases in both the rendered and real image sets. Thus, in the overall dataset, the original real images with no defects were 5% of the total.
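The composition of the hybrid dataset follows from simple arithmetic, reproduced in the short sketch below. A nominal size of exactly 4,000 images is assumed for illustration, since the text only states that the dataset contains more than 4,000 images; the percentages are those given above.

```python
total = 4000  # nominal dataset size (assumption: the text says "more than 4,000")

rendered = total * 90 // 100   # 90% rendered images
real = total - rendered        # 10% obtained from real images

composition = {
    "rendered_with_defects": rendered // 2,     # 50% of the rendered set
    "rendered_without_defects": rendered // 2,
    "real_with_defects": real // 2,             # 50% of the real set
    "real_without_defects": real // 2,
}

share_real_clean = composition["real_without_defects"] / total

for name, count in composition.items():
    print(f"{name}: {count}")
print(f"share of real images without defects: {share_real_clean:.0%}")
```

The last figure is simply 10% × 50% = 5%, matching the fraction of original, defect-free real images stated above.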

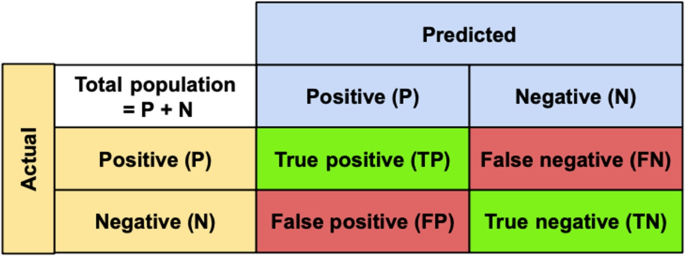

Along with the code for the rendering and manipulation of the images, dedicated Python routines were developed to generate the corresponding data labelling for the supervised training of the networks, namely the image masks. In particular, two masks were generated for each input image: one for the background removal operation, and one for the defect identification. In both cases, the masks consist of a binary (i.e. black and white) image where all the pixels of a target feature (i.e. the geometry or defect) are assigned unit values (white), whereas all the remaining pixels are blacked out (zero values). An example of these masks in relation to the geometry in Fig. 1 is shown in Fig. 3.
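The structure of the two masks can be illustrated with a toy example in plain Python. The helper `make_mask` and the pixel coordinates below are hypothetical; in the actual workflow the masks are produced by the dedicated routines mentioned above, whose implementation is not detailed here.

```python
def make_mask(height, width, target_pixels):
    """Binary mask: 1 (white) on the target pixels, 0 (black) elsewhere."""
    target = set(target_pixels)
    return [
        [1 if (row, col) in target else 0 for col in range(width)]
        for row in range(height)
    ]


# Toy 4x5 image: the part occupies a central 2x3 block of pixels,
# and a single-pixel defect lies on the part (illustrative values)
part_pixels = [(row, col) for row in (1, 2) for col in (1, 2, 3)]
defect_pixels = [(2, 2)]

background_mask = make_mask(4, 5, part_pixels)   # label for background removal
defect_mask = make_mask(4, 5, defect_pixels)     # label for defect identification

for name, mask in (("background removal", background_mask),
                   ("defect identification", defect_mask)):
    print(name)
    for row in mask:
        print("".join(str(value) for value in row))
```

Both masks share the size of the input image, so each pixel of the image has exactly one label per task.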