How to Write a Conclusion for an Essay - Tips and Examples

The conclusion of your essay is like the grand finale of a fireworks display. It's the last impression you leave on your reader, the moment that ties everything together and leaves them with a lasting impact.

But for many writers, crafting a conclusion can feel like an afterthought, a hurdle to jump after the excitement of developing the main body of their work. Fear not! This article will equip you with the tools and techniques to write a conclusion that effectively summarizes your main points, strengthens your argument, and leaves your reader feeling satisfied and engaged.

What Is a Conclusion

In an essay, the conclusion acts as your final curtain call. It's where you revisit your initial claim (thesis), condense your main supporting arguments, and leave the reader with a lasting takeaway.

Imagine it as the bridge that connects your ideas to a broader significance. A well-crafted conclusion does more than simply summarize; it elevates your points and offers a sense of closure, ensuring the reader leaves with a clear understanding of your argument's impact. In the next section, you will find conclusion ideas that you could use for your essay.


Types of Conclusion

Here's a breakdown of various conclusion types, each serving a distinct purpose:

| Technique | Description | Example |
|---|---|---|
| 📣 Call to Action | Encourage readers to take a specific step. | "Let's work together to protect endangered species by supporting conservation efforts." |
| ❓ Provocative Question | Spark curiosity with a lingering question. | "With artificial intelligence rapidly evolving, will creativity remain a uniquely human trait?" |
| 💡 Universal Insight | Connect your argument to a broader truth. | "The lessons learned from history remind us that even small acts of courage can inspire change." |
| 🔮 Future Implications | Discuss the potential consequences of your topic. | "The rise of automation may force us to redefine the concept of work in the coming decades." |
| 🌍 Hypothetical Scenario | Use a "what if" scenario to illustrate your point. | "Imagine a world where everyone had access to clean water. How would it impact global health?" |

How Long Should a Conclusion Be

The ideal length of a conclusion depends on the overall length of your essay, but there are some general guidelines:

- Shorter Essays (500-750 words): Aim for 3-5 sentences. This ensures you effectively wrap up your points without adding unnecessary content.

- Medium Essays (750-1200 words): Here, you can expand to 5-8 sentences. This provides more space to elaborate on your concluding thought or call to action.

- Longer Essays (1200+ words): For these, you can have a conclusion of 8-10 sentences. This allows for a more comprehensive summary or a more nuanced exploration of the future implications or broader significance of your topic.

Here are some additional factors to consider:

- The complexity of your argument: If your essay explores a multifaceted topic, your conclusion might need to be slightly longer to address all the points adequately.

- Type of conclusion: A call to action or a hypothetical scenario might require a few extra sentences for elaboration compared to a simple summary.

Remember: The most important aspect is ensuring your conclusion effectively summarizes your main points, leaves a lasting impression, and doesn't feel rushed or tacked on.

Here's a helpful rule of thumb:

- Keep it proportional: Your conclusion should be roughly 5-10% of your total essay length.
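As a rough illustration of the proportional rule, the short sketch below converts an essay's word count into a target word range for the conclusion. The function name `conclusion_word_range` is hypothetical, and the 5% and 10% bounds are taken directly from the rule of thumb above; treat the result as a guideline rather than a strict limit.

```python
def conclusion_word_range(essay_words: int) -> tuple[int, int]:
    """Return the (low, high) word count for a conclusion at 5-10% of the essay.

    Assumption: the 5% and 10% bounds come from the rule of thumb in the text.
    Integer arithmetic is used so the result is always a whole number of words.
    """
    if essay_words <= 0:
        raise ValueError("essay_words must be positive")
    low = essay_words * 5 // 100    # 5% of the essay, rounded down
    high = essay_words * 10 // 100  # 10% of the essay, rounded down
    return low, high


print(conclusion_word_range(1000))  # (50, 100)
```

For a 1,000-word essay, this suggests a conclusion of roughly 50 to 100 words.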

How many sentences should a conclusion be?

| Essay Length 📝 | Recommended Sentence Range 📏 |
|---|---|
| Shorter Essays (500-750 words) 🎈 | 3-5 sentences |
| Medium Essays (750-1200 words) 📚 | 5-8 sentences |
| Longer Essays (1200+ words) 🏰 | 8-10 sentences |
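The table can also be read as a simple lookup. The sketch below is one possible encoding; the function name `recommended_sentences` is hypothetical. Because 750 and 1200 words each appear in two bands of the table, the sketch assumes they belong to the shorter band, and it assumes essays under 500 words follow the "shorter essay" guidance.

```python
def recommended_sentences(essay_words: int) -> tuple[int, int]:
    """Return the (min, max) recommended sentence count for a conclusion.

    Assumptions (not stated in the table): boundary values 750 and 1200
    fall into the shorter band, and essays under 500 words are treated
    as shorter essays.
    """
    if essay_words <= 0:
        raise ValueError("essay_words must be positive")
    if essay_words <= 750:
        return (3, 5)   # shorter essays
    if essay_words <= 1200:
        return (5, 8)   # medium essays
    return (8, 10)      # longer essays


print(recommended_sentences(1000))  # (5, 8)
```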

Conclusion Transition Words

Transition words act like signposts for your reader. They smoothly guide the reader from the main body of your essay to your closing thoughts, ensuring a clear and logical flow of ideas. Here are some transition words specifically suited for concluding your essay:

| Technique 🎯 | Examples 📝 |
|---|---|
| Summarizing & Restating 📋 | |
| Leaving the Reader with a Lasting Impression 🎨 | |
| Looking to the Future 🔮 | |
| Leaving the Reader with a Question ❓ | |
| Adding Emphasis 💡 | |

Remember, the best transition word will depend on the specific type of conclusion you're aiming for.

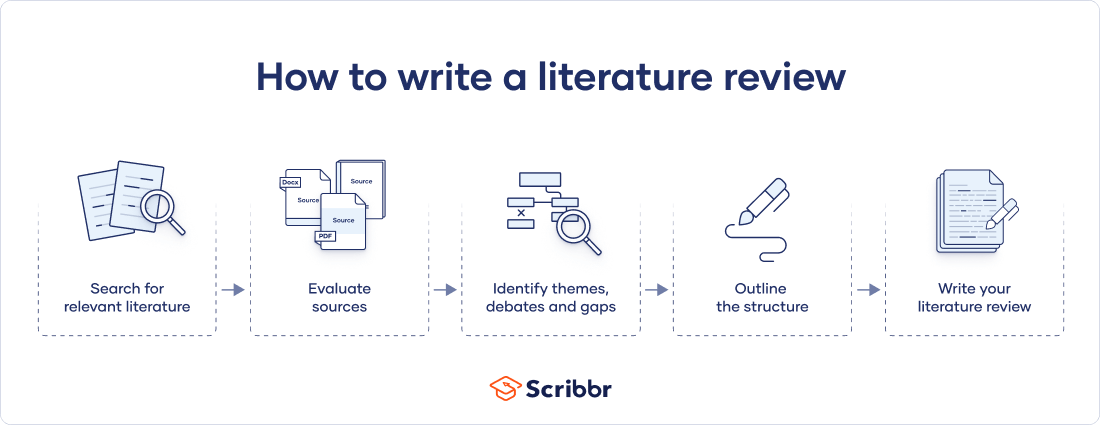

How to Write a Conclusion

Every essay or dissertation writer knows that the toughest part of working on a conclusion can be striking the right balance. You want to effectively summarize your main points without redundancy, leaving a lasting impression that feels fresh and impactful, all within a concise and focused section. Here’s a step-by-step guide to help you write a stunning essay conclusion:

Restate Your Thesis

Briefly remind your reader of your essay's central claim. This doesn't have to be a word-for-word repetition but a concise restatement that refreshes their memory.

Summarize Key Points

In a few sentences, revisit the main arguments you used to support your thesis. When writing a conclusion, don't get bogged down in details, but offer a high-level overview that reinforces your essay's focus.

Leave a Lasting Impression

This is where your knowledge of how to write a good conclusion can shine! Consider a thought-provoking question, a call to action, or a connection to a broader truth—something that lingers in the reader's mind and resonates beyond the final sentence.

Avoid Introducing New Information

The conclusion paragraph shouldn't introduce entirely new ideas. Stick to wrapping up your existing arguments and leaving a final thought.

Ensure Flow and Readability

Transition smoothly from the main body of your essay to the conclusion. Use transition words like "in conclusion," "finally," or "as a result," and ensure your closing sentences feel natural and well-connected to the rest of your work.


Conclusion Paragraph Outline

Here's an outline to help you better understand how to write a conclusion paragraph:

| Step 🚶 | Description 📝 |
|---|---|
| 1. Revisit Your Thesis (1-2 sentences) 🎯 | Briefly remind your reader of your essay's central claim, restated rather than repeated word for word. |
| 2. Summarize Key Points (1-2 sentences) 🔑 | Give a high-level overview of the main arguments you used to support your thesis. |
| 3. Lasting Impression (2-3 sentences) 💡 | This is where you leave your reader with a final thought. Choose one or a combination of these options: urge readers to take a specific action related to your topic; spark curiosity with a lingering question that encourages further exploration; connect your arguments to a broader truth or principle; discuss the potential long-term consequences of your topic; evoke a strong feeling (sadness, anger, hope) for a lasting impact; or conclude with a relevant quote that reinforces your key points or offers a new perspective. |
| 4. Final Touch (Optional - 1 sentence) 🎀 | This is not essential but can be a powerful way to end your essay. Consider a sentence that summarizes your main point in a memorable way, a figure of speech (simile, metaphor) that leaves a lasting impression, or a question that invites the reader to ponder the topic further. |

- Tailor the length of your conclusion to your essay's overall length (shorter essays: 3-5 sentences, longer essays: 8-10 sentences).

- Ensure a smooth transition from the main body using transition words.

- Avoid introducing new information; focus on wrapping up your existing points.

- Proofread for clarity and ensure your conclusion ties everything together and delivers a final impactful statement.
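For writers who like to draft in pieces, the outline above can be sketched as a small data structure that holds each step separately and joins the steps in order. This is only an illustration: the class name `ConclusionOutline` and its field names are hypothetical, and the sample sentences are adapted from the recycling example in this article.

```python
from dataclasses import dataclass


@dataclass
class ConclusionOutline:
    """Holds the parts of a conclusion in the order given by the outline."""
    thesis_restatement: str   # step 1: revisit your thesis
    key_points: str           # step 2: summarize key points
    lasting_impression: str   # step 3: final thought, question, or call to action
    final_touch: str = ""     # step 4: optional closing sentence

    def build(self) -> str:
        """Join the non-empty parts into a single paragraph."""
        parts = [
            self.thesis_restatement,
            self.key_points,
            self.lasting_impression,
            self.final_touch,
        ]
        return " ".join(p.strip() for p in parts if p.strip())


outline = ConclusionOutline(
    thesis_restatement="The environmental impact of our waste is undeniable.",
    key_points="Simple changes like recycling can make a significant difference.",
    lasting_impression="Join the movement: choose to reuse, reduce, and recycle.",
)
print(outline.build())
```

Keeping the parts separate makes it easy to check that each step is present before polishing the transitions between them.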


Do’s and Don’ts of Essay Conclusion Writing

According to professional term paper writers, a strong conclusion is essential for leaving a lasting impression on your reader. Here's a list of things you should and shouldn’t do when writing an essay conclusion:

| Dos ✅ | Don'ts ❌ |
|---|---|
| Restate your thesis in a new way. 🔄 Remind the reader of your central claim, but rephrase it to avoid redundancy. | Simply repeat your thesis word-for-word. This lacks originality and doesn't offer a fresh perspective. |
| Summarize your key points concisely. 📝 Briefly revisit the main arguments used to support your thesis. | Rehash every detail from your essay. 🔍 Focus on a high-level overview to reinforce your essay's main points. |
| Leave a lasting impression. 💡 Spark curiosity with a question, propose a call to action, or connect your arguments to a broader truth. | End with a bland statement. 😐 Avoid generic closings like "In conclusion..." or "This is important because...". |
| Ensure a smooth transition. 🌉 Use transition words like "finally," "as a result," or "in essence" to connect your conclusion to the main body. | Introduce entirely new information. ⚠️ The conclusion should wrap up existing arguments, not introduce new ideas. |
| Proofread for clarity and flow. 🔍 Ensure your conclusion feels natural and well-connected to the rest of your work. | Leave grammatical errors or awkward phrasing. 🚫 Edit and revise for a polished final sentence. |


Conclusion Examples

A strong conclusion isn't just an afterthought; it's the capstone of your essay. Here are five example conclusion paragraphs, each showcasing a different technique for crafting a powerful closing.

1. Call to Action: (Essay About the Importance of Recycling)

In conclusion, the environmental impact of our waste is undeniable. We all have a responsibility to adopt sustainable practices. We can collectively make a significant difference by incorporating simple changes like recycling into our daily routines. Join the movement – choose to reuse, reduce, and recycle.

2. Provocative Question: (Essay Exploring the Potential Consequences of Artificial Intelligence)

As artificial intelligence rapidly evolves, it's crucial to consider its impact on humanity. While AI holds immense potential for progress, will it remain a tool for good, or will it eventually surpass human control? This question demands our collective attention, as the decisions we make today will shape the future of AI and its impact on our world.

3. Universal Insight: (Essay Analyzing a Historical Event)

The study of history offers valuable lessons that transcend time. The events of the [insert historical event] remind us that even small acts of defiance can have a ripple effect, inspiring change and ultimately leading to a brighter future. Every voice has the power to make a difference, and courage can be contagious.

4. Future Implications: (Essay Discussing the Rise of Social Media)

Social media's explosive growth has transformed how we connect and consume information. While these platforms offer undeniable benefits, their long-term effects on social interaction, mental health, and political discourse require careful consideration. As social media continues to evolve, we must remain vigilant and ensure it remains a tool for positive connection and not a source of division.

5. Hypothetical Scenario: (Essay Arguing for the Importance of Space Exploration)

Imagine a world where our understanding of the universe is limited to Earth. We miss out on the potential for groundbreaking discoveries in physics, medicine, and our place in the cosmos. By continuing to venture beyond our planet, we push the boundaries of human knowledge and inspire future generations to reach for the stars.


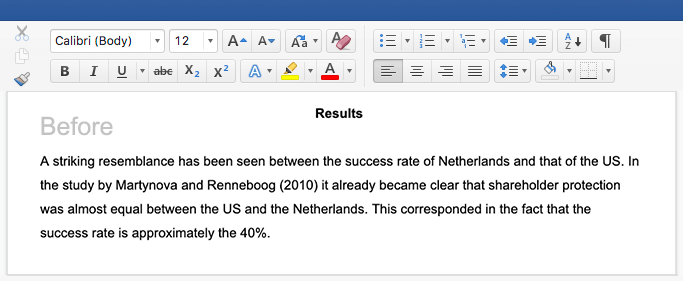

Difference Between Good and Weak Conclusions

Not all conclusions are created equal. A weak ending can leave your reader feeling stranded, unsure of where your essay has taken them. Conversely, a strong conclusion acts as a landing pad, summarizing your key points and leaving a lasting impression.

| ⚠️ Weak Conclusion | ❓ What's Wrong with It? | ✅ Good Conclusion |
|---|---|---|
| In conclusion, exercise is good for you. It helps you stay healthy and fit. | | By incorporating regular exercise into our routines, we boost our physical health and energy levels and enhance our mental well-being and resilience. (Rephrased thesis & highlights benefits.) |
| This event was very significant and had a big impact on history. | | The [name of historical event] marked a turning point in [explain the historical period]. Its impact resonates today, influencing [mention specific consequences or ongoing effects]. (Connects to specifics & broader significance.) |
| Throughout this essay, we've discussed the good and bad sides of social media. | | While social media offers undeniable benefits like connection and information sharing, its impact on mental health, privacy, and political discourse necessitates responsible use and ongoing discussions about its role in society. (Connects arguments to broader issues & future implications.) |

Nailed that essay? Don't blow it with a lame ending! A good conclusion is like the mic drop at the end of a rap song. It reminds the reader of your main points but in a cool new way. Throw in a thought-provoking question, a call to action, or a connection to something bigger, and you'll leave them thinking long after they turn the page.


Daniel Parker is a seasoned educational writer focusing on scholarship guidance, research papers, and various forms of academic essays including reflective and narrative essays. His expertise also extends to detailed case studies. A scholar with a background in English Literature and Education, Daniel’s work on EssayPro blog aims to support students in achieving academic excellence and securing scholarships. His hobbies include reading classic literature and participating in academic forums.

Adam is an expert in nursing and healthcare, with a strong background in history, law, and literature. Holding advanced degrees in nursing and public health, his analytical approach and comprehensive knowledge help students navigate complex topics. On EssayPro blog, Adam provides insightful articles on everything from historical analysis to the intricacies of healthcare policies. In his downtime, he enjoys historical documentaries and volunteering at local clinics.


How to Write an Essay Conclusion


- 1st October 2022

Regardless of what you’re studying, writing essays is probably a significant part of your work as a student. Taking the time to understand how to write each section of an essay (i.e., introduction, body, and conclusion) can make the entire process easier and ensure that you’ll be successful.

Once you’ve put in the hard work of writing a coherent and compelling essay, it can be tempting to quickly throw together a conclusion without the same attention to detail. However, you won’t leave an impactful final impression on your readers without a strong conclusion.

We’ve compiled a few easy steps to help you write a great conclusion for your next essay.

1. Return to Your Thesis

Similar to how an introduction should capture your reader’s interest and present your argument, a conclusion should show why your argument matters and leave the reader with further curiosity about the topic.

To do this, you should begin by reminding the reader of your thesis statement. While you can use similar language and keywords when referring to your thesis, avoid copying it from the introduction and pasting it into your conclusion.

Try varying your vocabulary and sentence structure and presenting your thesis in a way that demonstrates how your argument has evolved throughout your essay.

2. Review Your Main Points

In addition to revisiting your thesis statement, you should review the main points you presented in your essay to support your argument.

However, a conclusion isn’t simply a summary of your essay. Rather, you should further examine your main points and demonstrate how each is connected.

Try to discuss these points concisely, in just a few sentences, in preparation for demonstrating how they fit into the bigger picture of the topic.


3. Show the Significance of Your Essay

Next, it’s time to think about the topic of your essay beyond the scope of your argument. It’s helpful to keep the question “so what?” in mind when you’re doing this. The goal is to demonstrate why your argument matters.

If you need some ideas about what to discuss to show the significance of your essay, consider the following:

- What do your findings contribute to the current understanding of the topic?

- Did your findings raise new questions that would benefit from future research?

- Can you offer practical suggestions for future research or make predictions about the future of the field/topic?

- Are there other contexts, topics, or a broader debate that your ideas can be applied to?

While writing your essay, it can be helpful to keep a list of ideas or insights that you develop about the implications of your work so that you can refer back to it when you write the conclusion.

Making these kinds of connections will leave a memorable impression on the reader and inspire their interest in the topic you’ve written about.

4. Avoid Some Common Mistakes

To ensure you’ve written a strong conclusion that doesn’t leave your reader confused or lacking confidence in your work, avoid:

- Presenting new evidence: Don’t introduce new information or a new argument, as it can distract from your main topic, confuse your reader, and suggest that your essay isn’t organized.

- Undermining your argument: Don’t use statements such as “I’m not an expert,” “I feel,” or “I think,” as lacking confidence in your work will weaken your argument.

- Using generic statements: Don’t use generic concluding statements such as “In summary,” “To sum up,” or “In conclusion,” which are redundant since the reader will be able to see that they’ve reached the end of your essay.
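As a quick self-check, the undermining and generic phrases named in the list above can be searched for automatically. The sketch below is a minimal illustration: the function name `flag_phrases` is hypothetical, and the phrase lists contain only the examples quoted in this section, so it will not catch every weak phrasing.

```python
import re

# Phrases quoted in the list above; extend these tuples as needed.
UNDERMINING = ("i'm not an expert", "i feel", "i think")
GENERIC = ("in summary", "to sum up", "in conclusion")


def flag_phrases(conclusion: str) -> list[str]:
    """Return the listed phrases that appear in the conclusion text."""
    # Lowercase the text and normalize curly apostrophes before matching.
    text = conclusion.lower().replace("\u2019", "'")
    found = []
    for phrase in UNDERMINING + GENERIC:
        # Word boundaries avoid matching inside longer words.
        if re.search(r"\b" + re.escape(phrase) + r"\b", text):
            found.append(phrase)
    return found


print(flag_phrases("In conclusion, I think recycling matters."))
# ['i think', 'in conclusion']
```

A flagged phrase is a prompt to reread the sentence, not proof that it must be cut.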

Finally, don’t forget to proofread your essay! Mistakes can be difficult to catch in your own writing, and they can undermine an otherwise strong essay.


How to Conclude an Essay (with Examples)

Last Updated: May 24, 2024


This article was co-authored by Jake Adams and by wikiHow staff writer Aly Rusciano. Jake Adams is an academic tutor and the owner of Simplifi EDU, a Santa Monica, California based online tutoring business offering learning resources and online tutors for academic subjects K-College, SAT & ACT prep, and college admissions applications. With over 14 years of professional tutoring experience, Jake is dedicated to providing his clients the very best online tutoring experience and access to a network of excellent undergraduate and graduate-level tutors from top colleges all over the nation. Jake holds a BS in International Business and Marketing from Pepperdine University. This article has been viewed 3,211,370 times.

So, you’ve written an outstanding essay and couldn’t be more proud. But now you have to write the final paragraph. The conclusion simply summarizes what you’ve already written, right? Well, not exactly. Your essay’s conclusion should be a bit more finessed than that. Luckily, you’ve come to the perfect place to learn how to write a conclusion. We’ve put together this guide to fill you in on everything you should and shouldn’t do when ending an essay. Follow our advice, and you’ll have a stellar conclusion worthy of an A+ in no time.

Tips for Ending an Essay

- Rephrase your thesis to include in your final paragraph to bring the essay full circle.

- End your essay with a call to action, warning, or image to make your argument meaningful.

- Keep your conclusion concise and to the point, so you don’t lose a reader’s attention.

- Do your best to avoid adding new information to your conclusion and only emphasize points you’ve already made in your essay.

Transition phrases that signal your essay is coming to a close include:

- “All in all”

- “Ultimately”

- “Furthermore”

- “As a consequence”

- “As a result”

- Make sure to write your main points in a new and unique way to avoid repetition.

- Let’s say this is your original thesis statement: “Allowing students to visit the library during lunch improves campus life and supports academic achievement.”

- Restating your thesis for your conclusion could look like this: “Evidence shows students who have access to their school’s library during lunch check out more books and are more likely to complete their homework.”

- The restated thesis has the same sentiment as the original while also summarizing other points of the essay.

- “When you use plastic water bottles, you pollute the ocean. Switch to using a glass or metal water bottle instead. The planet and sea turtles will thank you.”

- “The average person spends roughly 7 hours on their phone a day, so there’s no wonder cybersickness is plaguing all generations.”

- “Imagine walking on the beach, except the soft sand is made up of cigarette butts. They burn your feet but keep washing in with the tide. If we don’t clean up the ocean, this will be our reality.”

- “ Lost is not only a show that changed the course of television, but it’s also a reflection of humanity as a whole.”

- “If action isn’t taken to end climate change today, the global temperature will dangerously rise 4.5 to 8 °F (2.5 to 4.4 °C) by 2100.”

- Focus on your essay's most prevalent or important parts. What key points do you want readers to take away or remember about your essay?

- For instance, instead of writing, “That’s why I think that Abraham Lincoln was the best American President,” write, “That’s why Abraham Lincoln was the best American President.”

- There’s no room for ifs, ands, or buts—your opinion matters and doesn’t need to be apologized for!

- For instance, words like “firstly,” “secondly,” and “thirdly” may be great transition statements for body paragraphs but are unnecessary in a conclusion.

- For instance, say you began your essay with the idea that humanity’s small sense of self stems from space’s vast size. Try returning to this idea in the conclusion by emphasizing that as human knowledge grows, space becomes smaller.

- For example, you could extend an essay on the television show Orange is the New Black by bringing up the culture of imprisonment in America.

- Always review your essay after writing it for proper grammar, spelling, and punctuation, and don’t be afraid to revise.

Tips from our Readers

- Have somebody else proofread your essay before turning it in. The other person will often be able to see errors you may have missed!


About This Article

To end an essay, start your conclusion with a phrase that makes it clear your essay is coming to a close, like "In summary," or "All things considered." Then, use a few sentences to briefly summarize the main points of your essay by rephrasing the topic sentences of your body paragraphs. Finally, end your conclusion with a call to action that encourages your readers to do something or learn more about your topic. In general, try to keep your conclusion between 5 and 7 sentences long.


How to Write a Conclusion for an Essay (Examples Included)


Condensing a 1,000-plus-word essay into a neat little bundle may seem like a Herculean task. You must summarize all your findings and justify their importance within a single paragraph.

Once you know the formula for writing a conclusion paragraph, however, things get much simpler.

So how do you write a conclusion paragraph for an essay and, more importantly, make it impactful? In this article, we walk you through the process of constructing a powerful conclusion that leaves a lingering impression on readers’ minds. We also share essay conclusion examples for different types of essays.


Let’s start from the beginning: How can you write a conclusion for an essay?

How to write a conclusion for an essay

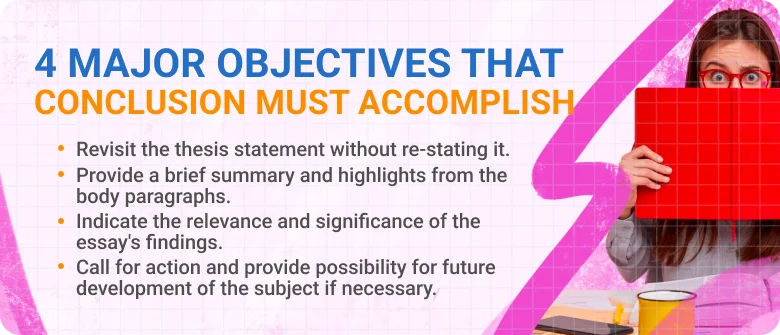

To write an effective conclusion, you must first understand what a conclusion is. It is not just a summary of the main points of your essay. A well-written conclusion ties together the main ideas of your essay and addresses their broader implications. The objectives of your concluding paragraph are as follows:

- Highlight the significance of your essay topic

- Tie together the key points of your essay

- Leave the reader with something to ponder

A good essay conclusion begins with a modified thesis statement that is altered on the basis of the information stated throughout the essay. It then ties together all the main points of the essay and ends with a clincher that highlights the broader implications of your thesis statement.

Now that we’ve understood the basics of how to conclude an essay, let’s understand the key aspects of a good conclusion paragraph.

1. Restating your thesis statement

If you want to understand how to start a conclusion, you must realize that it involves more than just restating the thesis statement word for word. Your thesis statement needs to be updated and expanded upon in light of the information provided in your essay.

There are many ways to start a conclusion. One method is to start with a revised version of your thesis statement that hints at the significance of your argument. After this, your conclusion paragraph can move on organically to the arguments in your essay.

Let’s take a look at an effective way of writing a conclusion for an essay:

If the following claim is your thesis statement:

Virtual reality (VR) is undeniably altering the perception of reality by revolutionizing various industries, reshaping human experiences, and challenging traditional notions of what is real.

The restated thesis statement will be as follows:

Our analysis has substantiated the claim that virtual reality (VR) is significantly transforming the way we perceive reality. It has revolutionized industries, reshaped human experiences, and challenged traditional notions of reality.

2. Tying together the main points

Tying together all the main points of your essay does not mean simply summarizing them in an arbitrary manner. The key is to link each of your main essay points in a coherent structure. One point should follow the other in a logical format.

The goal is to establish how each of these points connects to the message of your essay as a whole. You can also use powerful quotes or impactful reviews to shed a unique light on your essay.

Let’s take a look at an example:

VR presents a new paradigm where the distinction between the real and the virtual becomes increasingly blurred. As users dive into immersive virtual worlds, they are confronted with questions about the nature of reality, perception, and the boundaries of human consciousness.

3. Constructing an impactful conclusion

Most of us are confused about how to end an essay with a bang. The answer is quite simple! The final line of your essay should be impactful enough to create a lasting impression on the reader. More importantly, it should also highlight the significance of your essay topic. This could mean the broader implications of your topic, either in your field of study or in general.

Optionally, you could also try to end your essay on an optimistic note that motivates or encourages the reader. If your essay is about eradicating a problem in society, highlight the positive effects achieved by the eradication of that problem.

Here’s an example of how to end an essay:

In a world where virtual boundaries dissolve, VR is the catalyst that reshapes our perception of reality, forever altering the landscape of the human experience.

Here’s a combined version of all three aspects:

Our analysis has substantiated the claim that Virtual Reality (VR) is significantly transforming how we perceive reality. It has revolutionized industries, reshaped human experiences, and challenged traditional notions of reality. It presents a new paradigm where the distinction between the real and the virtual becomes increasingly blurred. As users dive into immersive virtual worlds, they are confronted with questions about the nature of reality, perception, and the boundaries of human consciousness. In a world where virtual boundaries dissolve, it is the catalyst that reshapes our perception of reality, forever altering the landscape of the human experience.

Now that we’ve understood the structure of a concluding paragraph, let’s look at what to avoid while writing a conclusion.

What to avoid in your conclusion paragraph

When learning how to write a conclusion for an essay, you must also know what to avoid. You want to strengthen your argument with the help of a compelling conclusion paragraph, and not undermine it by confusing the reader.

Let’s take a look at a few strategies to avoid in your essay conclusion:

1. Avoid including new evidence

The conclusion should not introduce new information but rather strengthen the arguments that are already made. If you come across any unique piece of information regarding your essay topic, incorporate it into your body paragraphs rather than stuffing it into your conclusion.

Including new, contradictory information in the concluding paragraph not only confuses the reader but also weakens your argument. You may include a powerful quote that strengthens the message of your essay, or an example that sheds light on the importance of your argument. However, this does not include introducing a completely new argument or making a unique point.

2. Avoid the use of concluding phrases

Your conclusion should hint towards your essay coming to an end, instead of blatantly stating the obvious. Blatant concluding statements undermine the quality of your essay, making it clumsy and amateurish. They also significantly diminish the quality of your arguments.

It is a good idea to avoid the following statements while concluding your essay:

- In conclusion,

- In summary,

While using these statements may not be incorrect per se, hinting towards a conclusion creates a better impression on the reader than blatantly stating it.

Here are more effective statements you could use:

- Let this essay serve as a catalyst for…

- As we navigate the intricacies of this multifaceted topic, remember…

- As I bid farewell to this subject…

3. Don’t undermine your argument

Although there might be several points of view regarding your essay topic, it is crucial that you stick to your own. You may have stated and refuted other points of view in your body paragraphs.

However, your conclusion is simply meant to strengthen your main argument. Mentioning other points of view in your essay conclusion not only weakens your argument but also creates a poor impression of your essay.

Here are a few phrases you should avoid in your essay conclusion:

- There are several methods to approach this topic.

- There are plenty of good points for both sides of the argument.

- There is no clear solution to this problem.

Examples of essay conclusions

Different types of essays make use of different forms of conclusions. The critical question of “how to start a conclusion paragraph” has many different answers. To help you further, we’ve provided a few good conclusions for essays that are based on the four main essay types.

1. Narrative essay conclusion

The following essay conclusion example elaborates on the narrator’s unique experience with homeschooling.

- Restated thesis statement

- Body paragraph summary

- Closing statement

My experience with homeschooling has been a journey that has shaped me in profound ways. Through the challenges and triumphs, I have come to appreciate the unique advantages and personal growth that homeschooling can offer. As I reflect on my journey, I am reminded of the transformative power of this alternative education approach. It has empowered me to take ownership of my education, nurture my passions, and develop skills that extend far beyond the confines of academic achievement. Whether in traditional classrooms or homeschooling environments, it is through embracing and nurturing the unique potential within each of us that we can truly thrive and make a lasting impact on the world.

2. Descriptive essay conclusion

The following essay conclusion example elaborates on the narrator’s bond with their cat.

The enchanting presence that my cat has cannot be ignored, captivating my heart with her grace, charm, and unconditional love. Through the moments of playfulness, companionship, and affection, she has become an irreplaceable member of my family. As I continue to cherish the memories and lessons learned from her, I am reminded of the extraordinary power of the human-animal bond. In their company, we find solace, companionship, and a love that transcends words. In a world that can be challenging and tumultuous, never underestimate the profound impact that animals can have on our lives. In their presence, not only do we find love but also a profound sense of connection.

3. Argumentative essay conclusion

Here’s an essay conclusion example that elaborates on the marginalization of, and acute intolerance towards, LGBTQ+ individuals.

The journey toward equality for LGBTQ+ individuals is an ongoing battle that demands our unwavering commitment to justice and inclusion. It is evident that while progress has been made, the journey toward equality for these individuals is far from complete. It demands our continued advocacy, activism, and support for legislative change, societal acceptance, and the creation of inclusive environments. The struggle for LGBTQ+ equality is a fight for the very essence of human dignity and the recognition of our shared humanity. It is a battle that requires our collective efforts, determination, and an unyielding belief in the fundamental principles of equality and justice.

4. Expository essay conclusion

This example of an essay conclusion revolves around a psychological phenomenon named the bandwagon effect and examines its potential ill effects on society:

The bandwagon effect in psychology is a fascinating phenomenon that sheds light on the powerful influence of social conformity on individual behavior and decision-making processes. This effect serves as a reminder of the inherently social nature of human beings and the power of social influence in shaping our thoughts, attitudes, and actions. It underscores the importance of critical thinking, individual autonomy, and the ability to resist the pressure of conformity. By understanding its mechanisms and implications, we can guard against its potential pitfalls and actively foster independent thought and decision-making, thereby contributing to a more enlightened and progressive society.

Now that you’ve taken a closer look at different conclusions for essays, it’s time to put this knowledge to good use. If you need to take your essay up a notch and score high, professional essay editing services are your best bet.

Happy writing!


Essay writing: Conclusions


“Pay adequate attention to the conclusion.” Kathleen McMillan & Jonathan Weyers, How to Write Essays & Assignments

Conclusions are often overlooked, cursory and written at the last minute. If this sounds familiar then it's time to change and give your conclusions some much-needed attention. Your conclusion is the whole point of your essay. All the other parts of the essay should have been leading your reader on an inevitable journey towards your conclusion. So make it count and finish your essay in style.

Know where you are going

Too many students focus their essays on content rather than argument. This means they pay too much attention to the main body without considering where it is leading. It can be a good idea to write a draft conclusion before you write your main body. It is a lot easier to plan a journey when you know your destination!

It should only be a draft, however, as quite often the writing process itself can help you develop your argument and you may feel your conclusion needs adapting accordingly.

What it should include

A great conclusion should include:

- A clear link back to the question. This is usually the first thing you do in a conclusion and it shows that you have (hopefully) answered it.

- A sentence or two that summarise(s) your main argument but in a bit more detail than you gave in your introduction.

- A series of supporting sentences that basically reiterate the main point of each of your paragraphs but show how they relate to each other and lead you to the position you have taken. Constantly ask yourself "So what?" "Why should anyone care?" and answer these questions for each of the points you make in your conclusion.

- A final sentence that states why your ideas are important to the wider subject area. Where the introduction goes from general to specific, the conclusion needs to go from specific back out to general.

What it should not include

Try to avoid including the following in your conclusion. Remember your conclusion should be entirely predictable. The reader wants no surprises.

- Any new ideas. If an idea is worth including, put it in the main body. You do not need to include citations in your conclusion if you have already used them earlier and are just reiterating your point.

- A change of style, i.e. being more emotional or sentimental than the rest of the essay. Keep it straightforward, explanatory and clear.

- Overused phrases like: “in conclusion”; “in summary”; “as shown in this essay”. Consign these to the rubbish bin!

Here are some alternatives (there are many more):

- The x main points presented here emphasise the importance of...

- The [insert something relevant] outlined above indicate that ...

- By showing the connections between x, y and z, it has been argued here that ...

Maximise marks

Remember, your conclusion is the last thing your reader (marker!) will read. Spending a little care on it will leave her/him absolutely sure that you have answered the question and you will definitely receive a higher mark than if your conclusion was a quickly written afterthought.

Your conclusion should be around 10% of your word count. There is never a situation where sacrificing words in your conclusion will benefit your essay.


- Last Updated: Nov 3, 2023 3:17 PM

- URL: https://libguides.hull.ac.uk/essays


Writing a Paper: Conclusions

Writing a Conclusion

A conclusion is an important part of the paper; it provides closure for the reader while reminding the reader of the contents and importance of the paper. It accomplishes this by stepping back from the specifics in order to view the bigger picture of the document. In other words, it is reminding the reader of the main argument. For most course papers, it is usually one paragraph that simply and succinctly restates the main ideas and arguments, pulling everything together to help clarify the thesis of the paper. A conclusion does not introduce new ideas; instead, it should clarify the intent and importance of the paper. It can also suggest possible future research on the topic.

An Easy Checklist for Writing a Conclusion

It is important to restate the thesis of the paper so the reader is reminded of the argument and solutions you proposed.

Think of the main points as puzzle pieces, and the conclusion is where they all fit together to create a bigger picture. The reader should walk away with the bigger picture in mind.

Make sure that the paper places its findings in the context of real social change.

Make sure the reader has a distinct sense that the paper has come to an end. It is important not to leave the reader hanging. (You don’t want the reader to have flip-the-page syndrome, turning the page and expecting the paper to continue. The paper should naturally come to an end.)

No new ideas should be introduced in the conclusion. It is simply a review of the material that is already present in the paper. The only new idea would be a suggested direction for future research.

Conclusion Example

As addressed in my analysis of recent research, the advantages of a later starting time for high school students significantly outweigh the disadvantages. A later starting time would allow teens more time to sleep--something that is important for their physical and mental health--and ultimately improve their academic performance and behavior. The added transportation costs that result from this change can be absorbed through energy savings. The beneficial effects on the students’ academic performance and behavior validate this decision, but its effect on student motivation is still unknown. I would encourage an in-depth look at the reactions of students to such a change. This sort of study would help determine the actual effects of a later start time on the time management and sleep habits of students.


Walden University is a member of Adtalem Global Education, Inc. www.adtalem.com Walden University is certified to operate by SCHEV © 2024 Walden University LLC. All rights reserved.


Conclusion: How to end an essay

While getting started can be very difficult, finishing an essay is usually quite straightforward. By the time you reach the end you will already know what the main points of the essay are, so it will be easy for you to write a summary of the essay and finish with some kind of final comment, which are the two components of a good conclusion. An example essay has been given below to help you understand both of these, and there is a checklist at the end which you can use for editing your conclusion.

In short, the concluding paragraph consists of the following two parts:

- a summary of the main points;

- your final comment on the subject.

It is important, at the end of the essay, to summarise the main points. If your thesis statement is detailed enough, then your summary can just be a restatement of your thesis using different words. The summary should include all the main points of the essay, and should begin with a suitable transition signal. You should not add any new information at this point.

The following is an example of a summary for a short essay on cars (given below):

In conclusion, while the car is advantageous for its convenience, it has some important disadvantages, in particular the pollution it causes and the rise of traffic jams.

Although this summary is only one sentence long, it contains the main (controlling) ideas from all three paragraphs in the main body. It also has a clear transition signal ('In conclusion') to show that this is the end of the essay.

Final comment

Once the essay is finished and the writer has given a summary, there should be some kind of final comment about the topic. This should be related to the ideas in the main body. Your final comment might:

- offer solutions to any problems mentioned in the body;

- offer recommendations for future action;

- give suggestions for future research.

Here is an example of a final comment for the essay on cars:

If countries can invest in the development of technology for green fuels, and if car owners can think of alternatives such as car sharing, then some of these problems can be lessened.

This final comment offers solutions, and is related to the ideas in the main body. One of the disadvantages in the body was pollution, so the writer suggests developing 'green fuels' to help tackle this problem. The second disadvantage was traffic congestion, and the writer again suggests a solution, 'car sharing'. By giving these suggestions related to the ideas in the main body, the writer has brought the essay to a successful close.

Example essay

Below is a discussion essay which looks at the advantages and disadvantages of car ownership. This essay is used throughout the essay writing section to help you understand different aspects of essay writing. Here it focuses on the summary and final comment of the conclusion (mentioned on this page), the thesis statement and general statements of the introduction, and topic sentences and controlling ideas.

Although they were invented almost a hundred years ago, for decades cars were only owned by the rich. Since the 60s and 70s they have become increasingly affordable, and now most families in developed nations, and a growing number in developing countries, own a car. While cars have undoubted advantages, of which their convenience is the most apparent, they have significant drawbacks, most notably pollution and traffic problems.

The most striking advantage of the car is its convenience. When travelling long distance, there may be only one choice of bus or train per day, which may be at an unsuitable time. The car, however, allows people to travel at any time they wish, and to almost any destination they choose.

Despite this advantage, cars have many significant disadvantages, the most important of which is the pollution they cause. Almost all cars run either on petrol or diesel fuel, both of which are fossil fuels. Burning these fuels causes the car to emit serious pollutants, such as carbon dioxide, carbon monoxide, and nitrous oxide. Not only are these gases harmful for health, causing respiratory disease and other illnesses, they also contribute to global warming, an increasing problem in the modern world. According to the Union of Concerned Scientists (2013), transportation in the US accounts for 30% of all carbon dioxide production in that country, with 60% of these emissions coming from cars and small trucks. In short, pollution is a major drawback of cars.

A further disadvantage is the traffic problems that they cause in many cities and towns of the world. While car ownership is increasing in almost all countries of the world, especially in developing countries, the amount of available roadway in cities is not increasing at an equal pace. This can lead to traffic congestion, in particular during the morning and evening rush hour. In some cities, this congestion can be severe, and delays of several hours can be a common occurrence. Such congestion can also affect those people who travel out of cities at the weekend. Spending hours sitting in an idle car means that this form of transport can in fact be less convenient than trains or aeroplanes or other forms of public transport.

In conclusion, while the car is advantageous for its convenience, it has some important disadvantages, in particular the pollution it causes and the rise of traffic jams. If countries can invest in the development of technology for green fuels, and if car owners can think of alternatives such as car sharing, then some of these problems can be lessened.

Union of Concerned Scientists (2013). Car Emissions and Global Warming. www.ucsusa.org/clean vehicles/why-clean-cars/global-warming/ (Access date: 8 August, 2013)


Below is a checklist for an essay conclusion. Use it to check your own writing, or get a peer (another student) to help you.

- The conclusion begins with a suitable transition signal (e.g. 'In conclusion...', 'To summarise...', 'In sum...')

- The conclusion has a summary of the main ideas

- The conclusion ends with a final comment (the writer's idea or a recommendation)


Author: Sheldon Smith ‖ Last modified: 26 January 2022.

Sheldon Smith is the founder and editor of EAPFoundation.com. He has been teaching English for Academic Purposes since 2004.


Conclusions – How to Write Compelling Conclusions

- © 2023 by Jennifer Janechek - IBM Quantum

Conclusions generally address these issues:

- How can you restate your ideas concisely and in a new way?

- What have you left your reader to think about at the end of your paper?

- How does your paper answer the “so what?” question?

As the last part of the paper, conclusions often get short shrift. We instructors know (not that we condone it) that many students devote far less attention to writing the conclusion. Some students might even finish their conclusion thirty minutes before they have to turn in their papers. But even if you’re practicing desperation writing, don’t neglect your conclusion; it’s an integral part of your paper.

Think about it: Why would you spend so much time writing your introductory material and your body paragraphs and then kill the paper by leaving your reader with a dud for a conclusion? Rather than simply trailing off at the end, it’s important to learn to construct a compelling conclusion—one that both reiterates your ideas and leaves your reader with something to think about.

How do I reiterate my main points?

In the first part of the conclusion, you should spend a brief amount of time summarizing what you’ve covered in your paper. This reiteration should not merely be a restatement of your thesis or a collection of your topic sentences but should be a condensed version of your argument, topic, and/or purpose.

Let’s take a look at an example reiteration from a paper about offshore drilling:

Ideally, a ban on all offshore drilling is the answer to the devastating and culminating environmental concerns that result when oil spills occur. Given the catastrophic history of three major oil spills, the environmental and economic consequences of offshore drilling should now be obvious.

Now, let’s return to the thesis statement in this paper so we can see if it differs from the conclusion:

As a nation, we should reevaluate all forms of offshore drilling, but deep water offshore oil drilling, specifically, should be banned until the technology to stop and clean up oil spills catches up with our drilling technology. Though some may argue that offshore drilling provides economic advantages and would lessen our dependence on foreign oil, the environmental and economic consequences of an oil spill are so drastic that they far outweigh the advantages.

The author has already discussed environmental/economic concerns with oil drilling. In the above example, the author provides an overview of the paper in the second sentence of the conclusion, recapping the main points and reminding the readers that they should now be willing to acknowledge this position as viable.

Though you may not always want to take so aggressive an approach (i.e., saying something should be obvious to the reader), the key is to summarize your main ideas without “plagiarizing” by repeating yourself word for word. Instead, you may take the approach of saying, “The readers can now see, given the catastrophic history of three major oil spills, the environmental and economic consequences of oil drilling.”

Can you give me a real-life example of a conclusion?

Think of conclusions this way: You are watching a movie, which has just reached the critical plot point (the murderer will be revealed, the couple will finally kiss, the victim will be rescued, etc.), when someone else enters the room. This person has no idea what is happening in the movie. They might lean over to ask, “What’s going on?” You now have to condense the entire plot in a way that makes sense, so the person will not have to ask any other questions, but quickly, so that you don’t miss any more of the movie.

Your conclusion in a paper works in a similar way. When you write your conclusion, imagine that a person has just shown up in time to hear the last paragraph. What does that reader need to know in order to get the gist of your paper? You cannot go over the entire argument again because the rest of your readers have actually been present and listening the whole time. They don’t need to hear the details again. Writing a compelling conclusion usually relies on the balance between two needs: give enough detail to cover your point, but be brief enough to make it obvious that this is the end of the paper.

Remember that reiteration is not restatement. Summarize your paper in one to two sentences (or even three or four, depending on the length of the paper), and then move on to answering the “So what?” question.

How can I answer the “So what?” question?

The bulk of your conclusion should answer the “So what?” question. Have you ever had an instructor write “So what?” at the end of your paper? This is not meant to offend but rather to remind you to show readers the significance of your argument. Readers do not need or want an entire paragraph of summary, so you should craft some new tidbit of interesting information that serves as an extension of your original ideas.

There are a variety of ways that you can answer the “So what?” question. The following are just a few types of such “endnotes”:

The Call to Action

The call to action can be used at the end of a variety of papers, but it works best for persuasive papers. Persuasive papers include social action papers and Rogerian argument essays, which begin with a problem and move toward a solution that serves as the author’s thesis. Any time your purpose in writing is to change your readers’ minds or you want to get your readers to do something, the call to action is the way to go. The call to action asks your readers, after having progressed through a compelling and coherent argument, to do something or believe a certain way.

Following the reiteration of the essay’s argument, here is an example call to action:

We have advanced technology that allows deepwater offshore drilling, but we lack the similarly advanced technology that would manage these spills effectively. As such, until cleanup and prevention technology are available, we gatekeepers of our coastal shores and defenders of marine wildlife should ban offshore drilling, or, at the very least, demand a moratorium on all offshore oil drilling.

This call to action requests that the readers consider a ban on offshore drilling. Remember, you need to identify your audience before you begin writing. Whether the author wants readers to actually enact the ban or just to come to this side of the argument, the conclusion asks readers to do or believe something new based upon the information they just received.

The Contextualization

The contextualization places the author’s local argument, topic, or purpose in a more global context so that readers can see the larger purpose for the piece or where the piece fits into a larger conversation. Writers do research for papers in part so they can enter into specific conversations, and they provide their readers with a contextualization in the conclusion to acknowledge the broader dialogue that contains that smaller conversation.

For instance, if we were to return to the paper on offshore drilling, rather than proposing a ban (a call to action), we might provide the reader with a contextualization:

We have advanced technology that allows deepwater offshore drilling, but we lack the advanced technology that would manage these spills effectively. Thus, one can see the need to place environmental concerns at the forefront of the political arena. Many politicians have already done so, including Senator Doe and Congresswoman Smith.

Rather than asking readers to do or believe something, this conclusion answers the “So what?” question by showing why this specific conversation about offshore drilling matters in the larger conversation about politics and environmentalism.

The Twist

The twist leaves readers with a contrasting idea to consider. For instance, to continue the offshore drilling paper, the author might provide readers with a twist in the last few lines of the conclusion:

While offshore drilling is certainly an important issue today, it is only a small part of the greater problem of environmental abuse. Until we are ready to address global issues, even a moratorium on offshore drilling will only delay the inevitable destruction of the environment.

While this contrasting idea does not negate the writer’s original argument, it does present an alternative to weigh against it. The twist is similar to a cliffhanger, as it is intended to leave readers saying, “Hmm…”

Suggest Possibilities for Future Research

This approach to answering “So what?” works best for projects that might later grow into larger, ongoing projects, or for papers that point to future research that someone else interested in the topic could explore. It involves pinpointing the directions your research might take if someone were to extend the ideas in your paper. Research is a conversation, so it’s important to consider how your piece fits into this conversation and how others might use it in their own conversations.

For example, to suggest possibilities for future research based on the paper on offshore drilling, the conclusion might end with something like this:

I have just explored the economic and environmental repercussions of offshore drilling based on the examples we have of three major oil spills over the past thirty years. Future research might uncover more economic and environmental consequences of offshore drilling, consequences that will become clearer as the effects of the BP oil spill become more pronounced and as more time passes.

Suggesting opportunities for future research involves the reader in the paper, just like the call to action. Readers may be inspired by your brilliant ideas to use your piece as a jumping-off point!

Whether you use a call to action, a twist, a contextualization, or a suggestion of future possibilities for research, it’s important to answer the “So what?” question to keep readers interested in your topic until the very end of the paper. And, perhaps more importantly, leaving your readers with something to consider makes it more likely that they will remember your piece of writing.

Revise your own argument by using the following questions to guide you:

- What do you want readers to take away from your discussion?

- What are the main points you made, why should readers care, and what ideas should they take away?


Academic Phrasebank

Writing conclusions

Conclusions are shorter sections of academic texts which usually serve two functions. The first is to summarise and bring together the main areas covered in the writing, which might be called ‘looking back’; and the second is to give a final comment or judgement on this. The final comment may also include making suggestions for improvement and speculating on future directions.

In dissertations and research papers, conclusions tend to be more complex and will also include sections on the significance of the findings and recommendations for future work. Conclusions may be optional in research articles where consolidation of the study and general implications are covered in the Discussion section. However, they are usually expected in dissertations and essays.

Restating the aims of the study

- This study set out to …
- This paper has argued that …
- This essay has discussed the reasons for …
- In this investigation, the aim was to assess …
- The aim of the present research was to examine …
- The purpose of the current study was to determine …
- The main goal of the current study was to determine …
- This project was undertaken to design … and evaluate …
- The present study was designed to determine the effect of …
- The second aim of this study was to investigate the effects of …

This study set out to:

- predict which …
- establish whether …
- determine whether …
- develop a model for …
- assess the effects of …
- find a new method for …
- evaluate how effective …
- assess the feasibility of …
- test the hypothesis that …
- explore the influence of …
- investigate the impact of …
- gain a better understanding of …
- examine the relationship between …

Summarising main research findings

- This study has identified …
- The research has also shown that …
- The second major finding was that …
- These experiments confirmed that …
- X made no significant difference to …
- This study has found that generally …
- The investigation of X has shown that …
- The results of this investigation show that …
- X, Y and Z emerged as reliable predictors of …
- The most obvious finding to emerge from this study is that …
- The relevance of X is clearly supported by the current findings.
- One of the more significant findings to emerge from this study is that …

Suggesting implications for the field of knowledge

- The results of this study indicate that …
- These findings suggest that in general …
- The findings of this study suggest that …
- Taken together, these results suggest that …
- An implication of this is the possibility that …
- The evidence from this study suggests that …
- Overall, this study strengthens the idea that …
- The current data highlight the importance of …
- The findings of this research provide insights for …
- The results of this research support the idea that …
- These data suggest that X can be achieved through …
- The theoretical implications of these findings are unclear.
- The principal theoretical implication of this study is that …
- This study has raised important questions about the nature of …
- Taken together, these findings suggest a role for X in promoting Y.
- The findings of this investigation complement those of earlier studies.
- These findings have significant implications for the understanding of how …
- Although this study focuses on X, the findings may well have a bearing on …

Explaining the significance of the findings or contribution of the study

- The findings will be of interest to …
- This thesis has provided a deeper insight into …
- The findings reported here shed new light on …
- The study contributes to our understanding of …
- These results add to the rapidly expanding field of …
- The contribution of this study has been to confirm …
- Before this study, evidence of X was purely anecdotal.
- This project is the first comprehensive investigation of …
- The insights gained from this study may be of assistance to …
- This work contributes to existing knowledge of X by providing …
- Prior to this study it was difficult to make predictions about how …
- The analysis of X undertaken here has extended our knowledge of …
- The empirical findings in this study provide a new understanding of …
- This paper contributes to recent historiographical debates concerning …
- This approach will prove useful in expanding our understanding of how …
- This new understanding should help to improve predictions of the impact of …
- The methods used for this X may be applied to other Xs elsewhere in the world.
- The X that we have identified therefore assists in our understanding of the role of …
- This is the first study of substantial duration which examines associations between …
- The findings from this study make several contributions to the current literature. First, …