Quick Links:

- Final Project information

- [4/13/2023] Homework 4 (handout, starter code) has been released! The assignment is due April 27.

- [3/28/2023] Homework 3 (handout, starter code) has been released! The assignment is due April 11. As with the previous homeworks, you should submit both your PDF writeup and your code on Gradescope; there will be separate assignments for each.

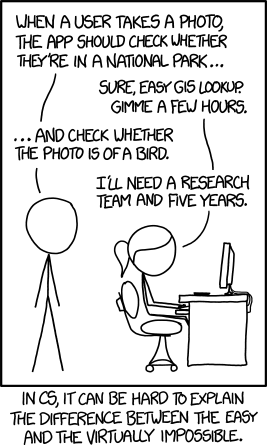

Some problems in computer science admit precise algorithmic solutions. Checking if someone is in a national park is, in some sense, straightforward: get the user’s location, get the boundaries of all national parks, and check if the user location lies within any of those boundaries.

Other problems are less straightforward. Suppose you want your computer to determine if an image contains a bird. To your computer, an image is just a matrix of red, green, and blue pixels. How do you even begin to write the function is_bird(image)?

For problems like this, we turn to a powerful family of methods known as machine learning. The zen of machine learning is the following:

- I don’t know how to solve my problem.

- But I can obtain a dataset that describes what I want my computer to do.

- So, I will write a program that learns the desired behavior from the data.

This class will provide a broad introduction to machine learning. We will start with supervised learning, where our goal is to learn an input-to-output mapping given a set of correct input-output pairs. Next, we will study unsupervised learning, which seeks to identify hidden structure in data. Finally, we will cover reinforcement learning, in which an agent (e.g., a robot) learns from observations it makes as it explores the world.

Course Staff

- Office hours: See calendar.

- Assignments: Assignments should be submitted through Gradescope. Feedback will also be provided on Gradescope. All enrolled students should be in Gradescope automatically; let me know if you are not!

- Discussions: We will be using Ed (sign-up link) for general course-related questions. If you have an individual matter to discuss, email me directly (please put “CSCI 467” in the subject line) or come to my office hours. For grading questions, go to the office hours of the person who graded the problem in question.

Prerequisites

- Algorithms: CSCI 270

- Linear Algebra: MATH 225

- Probability: EE 364 or MATH 407 or BUAD 310

This class will also use some basic multivariate calculus (taking partial derivatives and gradients). However, knowledge of single-variable calculus is sufficient as we will introduce the required material during class and section.

All assignments are due by 11:59pm on the indicated date.

| Date | Topic | Related Readings | Assignments |
|---|---|---|---|
| Tue Jan 10 | Introduction (slides) | PML 1 | released |
| Thu Jan 12 | Linear Regression | PML 7.8, 8.2 | |
| Fri Jan 13 | Section: Probability, Linear Algebra, & Calculus Review | | |
| Tue Jan 17 | Featurization, Convexity, Normal Equations | PML 2.6.3, 8.1, 11.1-11.2 | |
| Thu Jan 19 | Maximum Likelihood Estimation, Logistic Regression | PML 4.2, 10.1-10.2 | Homework 0 due |
| Fri Jan 20 | Section: Python & numpy tutorial | | |
| Tue Jan 24 | Softmax Regression, Second-order optimization | PML 8.3, 10.3 | |
| Thu Jan 26 | Regularization, Bias and Variance | PML 4.5, 4.7, 11.3-11.4 | Homework 1 released |
| Fri Jan 27 | Section: Homework 0 Discussion | | |
| Tue Jan 31 | Generative Classifiers, Naive Bayes | PML 9.3-9.4 | |
| Thu Feb 2 | Naive Bayes continued, Nearest Neighbors | PML 16.1, 16.3 | |
| Fri Feb 3 | Section: Cross-Validation, Evaluation Metrics | | |
| Tue Feb 7 | Kernel methods | PML 17.1 | Homework 1 due |
| Thu Feb 9 | Kernels Continued, Project Discussion | PML 4.3, 17.3 | |
| Fri Feb 10 | Section | | |
| Tue Feb 14 | Support Vector Machines, ML Libraries | PML 5.4 | |
| Thu Feb 16 | Introduction to Neural Networks, Dropout, Early Stopping | PML 13.1-13.3 | Project Proposal due, Homework 2 released |
| Fri Feb 17 | Section: Homework 1 Discussion | | |
| Tue Feb 21 | Backpropagation (code) | PML 13.4-13.5 | |
| Thu Feb 23 | Convolutional Neural Networks | PML 14.1-14.2 | |
| Fri Feb 24 | Section: PyTorch tutorial | | |
| Tue Feb 28 | Recurrent Neural Networks, Attention | PML 15.1-15.2 | |
| Thu Mar 2 | Transformers, Pretraining | PML 15.4-15.7 | Homework 2 due |
| Fri Mar 3 | Section: Midterm preparation | | |
| Tue Mar 7 | Decision Trees, Ensembling | PML 18.1-18.5 | |
| Thu Mar 9 | | | |
| Mar 10-17 | No class or section (Spring break) | | |
| Tue Mar 21 | k-Means Clustering, Start of Gaussian Mixture Models | PML 21.3 | |
| Thu Mar 23 | Gaussian Mixture Models, Expectation Maximization | PML 21.4, PML2 8.1-8.2 | Project Midterm Report due |
| Fri Mar 24 | Section: Midterm Exam Discussion | | |
| Tue Mar 28 | Inference in Hidden Markov Models | PML2 29.1-29.4 | Homework 3 released |
| Thu Mar 30 | Learning HMMs, Dimensionality Reduction, Principal Component Analysis | PML 20.1, 20.4 | |
| Fri Mar 31 | Section: Optimization strategies for neural networks | | |
| Tue Apr 4 | Embedding models, Word Vectors | PML 20.5 | |
| Thu Apr 6 | Multi-Armed Bandits | PML2 34.1-34.4 | |
| Fri Apr 7 | Section: Practical guide to pretrained language models | | |
| Tue Apr 11 | Markov Decision Processes, Reinforcement Learning | PML2 34.5-34.6, 35.1, 35.4 | Homework 3 due |
| Thu Apr 13 | Q-Learning with Function Approximation, Policy Gradient | PML2 35.2-35.3 | Homework 4 released |
| Fri Apr 14 | Section: Practical guide to computer vision models | | |
| Tue Apr 18 | Robustness, Adversarial Examples, Spurious Correlations | PML2 19.1-19.8 | |
| Thu Apr 20 | Fairness in Machine Learning | FAML 1-4 | |
| Fri Apr 21 | Section: Final Exam preparation | | |
| Tue Apr 25 | How does ChatGPT work? | | |
| Thu Apr 27 | Conclusion | | Homework 4 due |
| Fri Apr 28 | No section (End of class) | | |
| Thu May 4 | | | |

Grades will be based on homework assignments (40%), a class project (20%), and two exams (40%).

Homework Assignments (40% total):

- Homework 0: 4%

- Homeworks 1-4: 9% each

Final Project (20% total). The final project will proceed in three stages:

- Project proposal: 2%

- Midterm report: 3%

- Final project report: 15%

Exams (40% total):

- In-class midterm: 15%

- Final exam (cumulative): 25%
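As a rough illustration of how these weights combine, the sketch below computes an overall course score from per-component scores. Only the weights come from the breakdown above; the function name `course_grade`, the dictionary keys, and the example scores are hypothetical, and the sketch ignores late penalties.

```python
# Weights copied from the grading breakdown above; they sum to 1.0.
WEIGHTS = {
    "hw0": 0.04,
    "hw1": 0.09, "hw2": 0.09, "hw3": 0.09, "hw4": 0.09,
    "project_proposal": 0.02,
    "project_midterm_report": 0.03,
    "project_final_report": 0.15,
    "midterm_exam": 0.15,
    "final_exam": 0.25,
}


def course_grade(scores):
    """Weighted sum of component scores, each given as a fraction in [0, 1]."""
    missing = set(WEIGHTS) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)


# Hypothetical student: 90% on everything except 80% on the final exam.
example_scores = {name: 0.9 for name in WEIGHTS}
example_scores["final_exam"] = 0.8
print(f"overall: {course_grade(example_scores):.3f}")
```

Because the final exam carries a quarter of the grade, a ten-point drop on it alone lowers the overall score by 2.5 points in this example.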

You have 6 late days you may use on any assignment excluding the Project Final Report. Each late day allows you to submit the assignment 24 hours later than the original deadline. You may use a maximum of 3 late days per assignment. If you are working in a group for the project, submitting the project proposal or midterm report one day late means that each member of the group spends a late day. We do not allow use of late days for the final project report because we must grade the projects in time to submit final course grades.

If you have used up all your late days and submit an assignment late, you will lose 10% of your grade on that assignment for each day late. We will not accept any assignments more than 3 days late.
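The late policy can be read as a small calculation. The sketch below is one possible interpretation, not an official rule. It assumes that remaining late days are applied before any penalty, that each penalized day removes 10% of the raw score, and that it is only used for assignments other than the final project report. The function name `apply_late_policy` is made up for illustration.

```python
def apply_late_policy(raw_score, days_late, late_days_left):
    """Return (adjusted_score, late_days_left) under one reading of the policy.

    Assumptions (not stated in the policy text): remaining late days are
    used before any penalty applies, and each penalized day removes 10%
    of the raw score.
    """
    if days_late <= 0:
        return raw_score, late_days_left
    if days_late > 3:
        # Assignments more than 3 days late are not accepted.
        return 0.0, late_days_left
    used = min(days_late, late_days_left)  # at most 3, since days_late <= 3
    penalized_days = days_late - used
    adjusted = raw_score * (1 - 0.10 * penalized_days)
    return adjusted, late_days_left - used


# Two days late with only one late day remaining: one day is penalized.
score, remaining = apply_late_policy(90, days_late=2, late_days_left=1)
print(score, remaining)
```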

Final project

The final project can be done individually or in groups of up to 3. This is your chance to freely explore machine learning methods and how they can be applied to a task of your choice. You will also learn about best practices for developing machine learning methods: inspecting your data, establishing baselines, and analyzing your errors.

More information about the final project will be released at a later date. A list of example projects is now available here.

While there is no required textbook for this class, you may find the following useful:

- Probabilistic Machine Learning: An Introduction (PML) and Probabilistic Machine Learning: Advanced Topics (PML2) by Kevin Murphy. You may also find PML Chapters 2-3 and 7 useful for reviewing prerequisites.

- The Elements of Statistical Learning by Trevor Hastie, Robert Tibshirani, and Jerome Friedman.

- Patterns, Predictions, and Actions: A Story about Machine Learning by Moritz Hardt and Benjamin Recht

- Fairness and Machine Learning: Limitations and Opportunities (FAML) by Solon Barocas, Moritz Hardt, and Arvind Narayanan.

To review mathematical background material, you may also find the following useful:

- Probability: Introduction to Probability by Joseph Blitzstein and Jessica Hwang. Most relevant reading: Chapters 1-5, 7, 9-10.

- Linear Algebra: Introduction to Applied Linear Algebra by Stephen Boyd and Lieven Vandenberghe. Most relevant reading: Chapters 1-3, 5-8, 10-11. (Chapters 4 and 12-14 overlap with content for this class.)

- Multivariate Calculus: Oliver Knill’s lecture notes.

Other Notes

Collaboration policy and academic integrity: Our goal is to maintain an optimal learning environment. You may discuss the homework problems at a high level with other students, but you should not look at another student’s solutions. Trying to find solutions online or from any other source for any homework or project is prohibited, will result in a grade of zero, and will be reported. Using AI tools to automatically generate solutions to written or programming problems is also prohibited. To prevent any future plagiarism, uploading any material from the course (your solutions, quizzes, etc.) to the internet is prohibited, and any violations will also be reported. Please be considerate, and help us help everyone get the best out of this course.

Please remember the expectations set forth in the USC Student Handbook. General principles of academic honesty include the concept of respect for the intellectual property of others, the expectation that individual work will be submitted unless otherwise allowed by an instructor, and the obligations both to protect one’s own academic work from misuse by others as well as to avoid using another’s work as one’s own. All students are expected to understand and abide by these principles. Suspicion of academic dishonesty may lead to a referral to the Office of Academic Integrity for further review.

Students with disabilities : Any student requesting academic accommodations based on a disability is required to register with Disability Services and Programs (DSP) each semester. A letter of verification for approved accommodations can be obtained from DSP. Please be sure the letter is delivered to the instructor as early in the semester as possible.


Introduction to Machine Learning (2023)

Introduction.

- [05.02.2024] The 2024 winter exam and the respective solutions catalog (source file used for the grading) are now online!

- [13.08.2023] The 2023 summer exam and the respective solutions, and solutions catalog (source file used for the grading) are now online!

- [01.08.2023] The lecture notes have been updated. We have two new chapters: Clustering (provisional and not yet proof-read by the professors) and Probabilistic modelling .

- [13.07.2023] (Exam review session) There will be an exam review session on 31 July from 10-12 in ETA F 5. This will be held by Andisheh Amrollani and Mohammad Reza Karimi who were in charge of exams for the years of 2021 and 2022. They will solve the previous exam year’s questions as voted by you on Moodle and answer general questions you might have about the exam. Here is the vote on Moodle: https://moodle-app2.let.ethz.ch/mod/choice/view.php?id=920239 .

- [13.07.2023] (Plagiarism checks) We have finished plagiarism checks for the projects. If you have not received an email accusing you of plagiarism and you have a grade of 4 or above in the projects, you are eligible to take the exam. If you fail to satisfy either of these two requirements, we ask you to deregister from the exam (the deadline for online deregistration is 30 July). If you are not eligible and do not deregister, we will give you a NO-SHOW grade.

- [13.07.2023] (Attendance only doctoral students) Your grades will be passed by tomorrow.

- [04.07.2023] We have added the exam for 2021 winter together with its solution, please find them in the Performance Assessment section.

- [24.06.2023] The lecture notes have been updated. We have two new chapters: Chapter 8 Neural Networks and Chapter 9 PCA . More coming soon!

- [07.06.2023] The two exams for the 2022 academic year have been posted in the exam section to help you familiarize yourself with the exam contents.

- [07.06.2023] Project grades have been emailed. They are still subject to plagiarism checks.

- [31.05.2023] There will be a Q&A next Wednesday (07 June) presenting solutions for Project 4.

- [30.05.2023] There will be NO lectures held this week on Tuesday (30 May) and Wednesday (31 May). There still WILL BE a tutorial on Friday (2 June), as usual at 2pm, on large language models.

- [02.05.2023] A new version of the lecture notes is released! This version includes two new chapters: Chapter 6 Model Evaluation and Selection and Chapter 7 Bias-Variance Tradeoff and Regularization . In case you have anything to report, please contact Xinyu Sun .

- [03.04.2023] Because of the Easter break, there is no tutorial for this week on Friday (07.04). Instead, the session will be recorded and put online.

- [14.03.2023] For students who do not want to solve projects on their personal laptops, check out this guide by Vukasin Bozic explaining how to use Euler, the scientific computer clusters of ETH. More information regarding Euler is available here .

- [07.03.2023] The Projects and FAQ sections have been updated. Importantly, there will be a project introduction session on the Wednesday each project is released and a project solution session on the Wednesday of the week after the project deadline. Both will be held during the Q&A session, 17:00 (sharp) to 18:00.

- [02.03.2023] Project 0 is online. It is ungraded and aims to help you familiarize yourself with the project workflow.

- [27.02.2023] Regarding the Q&A on March 1st: Introduction to Python for Data Science (jupyter, numpy, pandas, seaborn, matplotlib). Please check the README before the tutorial. This is for installing all the necessary libraries so you can follow along during the tutorial.

- [22.02.2023] During the Q&A session on March 1st, Olga Mineeva and Stefan Stark will present a Python libraries introduction covering Numpy and Pandas.

- [10.02.2023] Welcome to the course Introduction to Machine Learning!

| Date | Topic | Slides | Recording |
|---|---|---|---|
| Tue 21.02. | Introduction | | |
| Wed 22.02. | Linear Regression | | |
| Tue 28.02. | Optimization | | |
| Wed 01.03. | Optimization & Nonlinear Features | | |
| Tue 07.03. | Model Selection | | |
| Wed 08.03. | Bias-Variance Tradeoff & Regularization | | |
| Tue 14.03. | Classification | | |
| Wed 15.03. | Classification II | | |
| Tue 21.03. | Classification & Kernel Methods | | |
| Wed 22.03. | Kernel & Other Methods | | |
| Tue 28.03. | Neural Networks | | |
| Wed 29.03. | Neural Networks II | | |
| Tue 04.04. | Neural Networks III | | |
| Wed 05.04. | Neural Networks IV | | |
| Tue 18.04. | Clustering | | |
| Wed 19.04. | Dimension Reduction | | |
| Tue 25.04. | Dimension Reduction II | | |
| Wed 26.04. | PyTorch Tutorial | | |
| Tue 02.05. | Probabilistic Modeling | | |
| Wed 03.05. | Probabilistic Modeling II | | |
| Tue 09.05. | Probabilistic Modeling III | | |
| Tue 16.05. | Gaussian Mixture Models | | |
| Wed 17.05. | Gaussian Mixture Models II | | |
| Tue 23.05. | Gaussian Mixture Models III | | |
| Wed 24.05. | Generative Models with Neural Networks | | |
| Mon 31.07. | Exam review session | | |

Lecture Notes

| Last version: |

| Date | Topic | Materials | Recording | Homework/Solution |

|---|---|---|---|---|

| Fri 24.02. | Math Recap | – | ||

| Fri 03.03. | Linear Regression & Optimization | |||

| Fri 10.03. | Review of Homework 1 | |||

| Fri 17.03. | Classification | |||

| Fri 24.03. | Review of Homework 2 | |||

| Fri 31.03. | Neural Network | |||

| Fri 07.04. | Review of Homework 3 | |||

| Fri 21.04. | Clustering and Dimension Reduction | |||

| Fri 28.04. | Review of Homework 4 | |||

| Fri 05.05. | Probabilistic Modeling | |||

| Fri 12.05. | Review of Homework 5 | |||

| Fri 19.05. | Generative Models | |||

| Fri 26.05. | Review of Homework 6 | |||

| Fri 02.06. | Large Language Models | - | – |

Q&A Sessions

| Date | Topic | Recording |

|---|---|---|

| Wed 22.02. | Administration | |

| Wed 01.03. | Python Tutorial | |

| Wed 08.03. | Linear Regression & Optimization | |

| Wed 15.03. | Project 1 Introduction | |

| Wed 22.03. | Classification & Kernel Methods | |

| Wed 29.03. | Project 2 Introduction | |

| Wed 05.04. | Project 1 Solution | |

| Wed 19.04. | (No one attended) | – |

| Wed 26.04. | Project 3 Introduction | |

| Wed 03.05. | Project 2 Solution | |

| Wed 10.05. | Project 4 Introduction | |

| Wed 17.05. | Project 3 Solution | |

| Wed 24.05. | (No one attended) | – |

| Wed 07.06. | Project 4 Solution |

| Instructors | and |

| Head TA | |

| Pragnya Alatur, Parnian Kassraie, Lars Lorch, Lenart Treven, Bhavya Sukhija, David Lindner, Yarden As, Scott Sussex, Hugo Yeche, Vignesh Ram, Vukasin Bozic, Charlotte Bunne, Cynthia Chen, Sonali Andani, Mojmir Mutny, Zhenrong Lang, Gavrilopoulos Georgios, Phillip Scherer, Xinyu Sun, Zhiyuan Hu, Zhenru Jia, Rajesh Sharma, Giorgia Racca, Angeline Pouget, Yuhao Mao, Thomas Out, Javier Abad Martinez, Piersilvio De Bartolomeis, Alexandru Tifrea, Viacheslav Borovitskiy, Jannis Bolick, Stefan Stark, Olga Mineeva, Harun Mustafa, Daniel Yang | |

| Please use for questions regarding course material, organization and projects. If you need to contact the Head TA or the lecturer directly, please send an email to . Please think twice before you send an email though and make sure you read all information here carefully. |

| Tue 14-16 | ETA F5 | ETF E1 (via video) |

| Wed 14-16 | ETA F5 | ETF E1 (via video) |

| Fri 14-16 | ETA F5 | ETF E1 (via video) |

Questions & Answers

| Wed 17-18 | Virtual |

Code Projects

| Project | Release Date | End Date | Weight on Project Grade |

|---|---|---|---|

| Project 0 (dummy) | Wed, 01.03.2023 08:00 | - | 0 |

| Project 1a&b | Wed, 15.03.2023 08:00 | Wed, 29.03.2023 12:00 (Noon) | 0.25 (0.125 each) |

| Project 2 | Wed, 29.03.2023 08:00 | Wed, 26.04.2023 12:00 (Noon) | 0.25 |

| Project 3 | Wed, 26.04.2023 16:00 | Wed, 10.05.2023 12:00 (Noon) | 0.25 |

| Project 4 | Wed, 10.05.2023 08:00 | Wed, 31.05.2023 12:00 (Noon) | 0.25 |

Performance Assessment

Other resources.

- Marc Peter Deisenroth, A Aldo Faisal, and Cheng Soon Ong Mathematics for Machine Learning . Cambridge University Press, 2020.

- K. Murphy. Machine Learning: a Probabilistic Perspective . MIT Press, 2012.

- C. Bishop. Pattern Recognition and Machine Learning . Springer, 2007. (optional)

- T. Hastie, R. Tibshirani, and J. Friedman. The Elements of Statistical Learning: Data Mining, Inference and Prediction . Springer, 2001.

- L. Wasserman. All of Statistics: A Concise Course in Statistical Inference. Springer, 2004.

- G. James., D. Witten and et al. An Introduction to Statistical Learning . Springer, 2021.

NPTEL Introduction to Machine Learning Assignment 3 Answers 2023

NPTEL Introduction to Machine Learning Assignment 3 Answers 2023: In this article, we provide the answers to Introduction to Machine Learning Assignment 3. You should complete your assignment based on your own knowledge.

NPTEL Introduction To Machine Learning Week 3 Assignment Answer 2023

1. Which of the following are differences between LDA and Logistic Regression?

- Logistic Regression is typically suited for binary classification, whereas LDA is directly applicable to multi-class problems

- Logistic Regression is robust to outliers whereas LDA is sensitive to outliers

- both (a) and (b)

- None of these

2. We have two classes in our dataset. The two classes have the same mean but different variance.

- LDA can classify them perfectly.

- LDA can NOT classify them perfectly.

- LDA is not applicable in data with these properties

- Insufficient information

3. We have two classes in our dataset. The two classes have the same variance but different mean.

- LDA can classify them perfectly.

- LDA can NOT classify them perfectly.

- LDA is not applicable in data with these properties

- Insufficient information

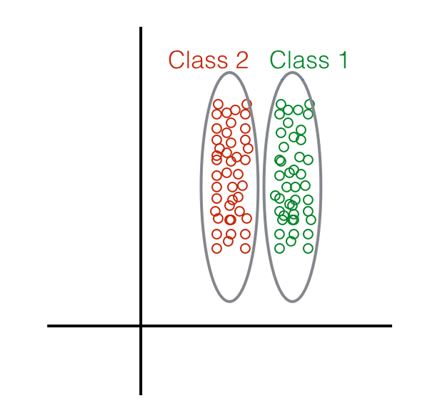

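4. Given the following distribution of data points:

What method would you choose to perform Dimensionality Reduction?

- Linear Discriminant Analysis

- Principal Component Analysis

- Both LDA and/or PCA.

- None of the above.

5. If $\log\left(\frac{1-p(x)}{1+p(x)}\right)=\beta_0+\beta x$, what is $p(x)$?

- $p(x)=\frac{1+e^{\beta_0+\beta x}}{e^{\beta_0+\beta x}}$

- $p(x)=\frac{1+e^{\beta_0+\beta x}}{1-e^{\beta_0+\beta x}}$

- $p(x)=\frac{e^{\beta_0+\beta x}}{1+e^{\beta_0+\beta x}}$

- $p(x)=\frac{1-e^{\beta_0+\beta x}}{1+e^{\beta_0+\beta x}}$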
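For reference, question 5 can be worked through by exponentiating both sides and solving for $p(x)$. The derivation below reads the left-hand side as the logarithm of the fraction $(1-p(x))/(1+p(x))$ and uses $z = \beta_0 + \beta x$ as shorthand (a symbol introduced here, not in the question):

```latex
\begin{aligned}
\log\frac{1-p(x)}{1+p(x)} &= z \\
\frac{1-p(x)}{1+p(x)} &= e^{z} \\
1 - p(x) &= e^{z} + e^{z}\,p(x) \\
p(x)\,\bigl(1 + e^{z}\bigr) &= 1 - e^{z} \\
p(x) &= \frac{1-e^{z}}{1+e^{z}}
\end{aligned}
```

Under that reading, the result corresponds to the last of the four options.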

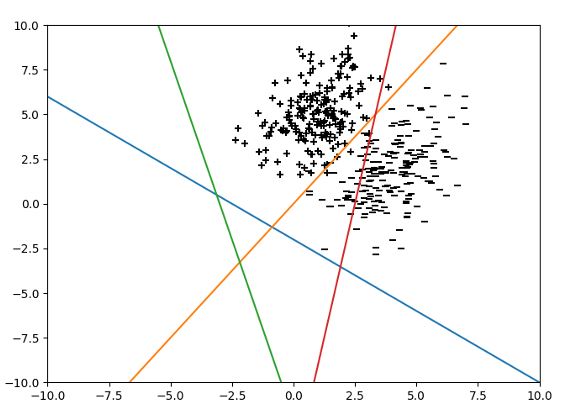

Red Orange Blue Green

7. Which of these techniques do we use to optimise Logistic Regression:

- Least Square Error

- Maximum Likelihood

- (a) or (b) are equally good

- (a) and (b) perform very poorly, so we generally avoid using Logistic Regression

- None of these

8. LDA assumes that the class data is distributed as:

- Poisson

- Uniform

- Gaussian

- LDA makes no such assumption.

9. Suppose we have two variables, X and Y (the dependent variable), and we wish to find their relation. An expert tells us that relation between the two has the form $Y = me^{X} + c$. Suppose the samples of the variables X and Y are available to us. Is it possible to apply linear regression to this data to estimate the values of m and c?

- No.

- Yes.

- Insufficient information.

- None of the above.

10. What might happen to our logistic regression model if the number of features is more than the number of samples in our dataset?

- It will remain unaffected

- It will not find a hyperplane as the decision boundary

- It will overfit

- None of the above

NPTEL Introduction to Machine Learning Assignment 3 Answers [July 2022]

1. For linear classification we use: a. A linear function to separate the classes. b. A linear function to model the data. c. A linear loss. d. Non-linear function to fit the data.

2. Logit transformation for Pr(X=1) for given data is S=[0,1,1,0,1,0,1] a. 3/4 b. 4/3 c. 4/7 d. 3/7


3. The output of binary class logistic regression lies in this range. a. [−∞,∞] b. [−1,1] c. [0,1] d. [−∞,0]

4. If $\log\left(\frac{1-p(x)}{1+p(x)}\right)=\beta_0+\beta x$, what is $p(x)$?

5. Logistic regression is robust to outliers. Why? a. The squashing of output values between [0, 1] dampens the effect of outliers. b. Linear models are robust to outliers. c. The parameters in logistic regression tend to take small values due to the nature of the problem setting and hence outliers get translated to the same range as other samples. d. The given statement is false.

6. Aim of LDA is (multiple options may apply) a. Minimize intra-class variability. b. Maximize intra-class variability. c. Minimize the distance between the mean of classes d. Maximize the distance between the mean of classes


7. We have two classes in our dataset with mean 0 and 1, and variance 2 and 3. a. LDA may be able to classify them perfectly. b. LDA will definitely be able to classify them perfectly. c. LDA will definitely NOT be able to classify them perfectly. d. None of the above.

8. We have two classes in our dataset with mean 0 and 5, and variance 1 and 2. a. LDA may be able to classify them perfectly. b. LDA will definitely be able to classify them perfectly. c. LDA will definitely NOT be able to classify them perfectly. d. None of the above.

9. For the two classes ’+’ and ’-’ shown below, which line is the most appropriate for projecting the data points when performing LDA? a. Red b. Orange c. Blue d. Green

10. LDA assumes that the class data is distributed as: a. Poisson b. Uniform c. Gaussian d. LDA makes no such assumption.


What is Introduction to Machine Learning?

With the increased availability of data from varied sources there has been increasing attention paid to the various data driven disciplines such as analytics and machine learning. In this course we intend to introduce some of the basic concepts of machine learning from a mathematically well motivated perspective. We will cover the different learning paradigms and some of the more popular algorithms and architectures used in each of these paradigms.

CRITERIA TO GET A CERTIFICATE

Average assignment score = 25% of the average of best 8 assignments out of the total 12 assignments given in the course. Exam score = 75% of the proctored certification exam score out of 100

Final score = Average assignment score + Exam score

YOU WILL BE ELIGIBLE FOR A CERTIFICATE ONLY IF THE AVERAGE ASSIGNMENT SCORE >=10/25 AND EXAM SCORE >= 30/75. If one of the 2 criteria is not met, you will not get the certificate even if the Final score >= 40/100.
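The certificate criteria amount to a short calculation. The sketch below is a minimal illustration, assuming each assignment and the exam are scored out of 100 and that unsubmitted assignments count as 0. The function name `certificate_result` and the example scores are hypothetical.

```python
def certificate_result(assignment_scores, exam_score):
    """Return (final_score, eligible).

    assignment_scores: up to 12 scores, each out of 100 (assumed scale).
    exam_score: proctored exam score out of 100.
    """
    best8 = sorted(assignment_scores, reverse=True)[:8]
    assignment_component = 0.25 * sum(best8) / 8  # out of 25
    exam_component = 0.75 * exam_score            # out of 75
    final_score = assignment_component + exam_component
    # Both thresholds must be met; a high final score alone is not enough.
    eligible = assignment_component >= 10 and exam_component >= 30
    return final_score, eligible


# Hypothetical learner: 80 on every assignment but only 38 on the exam.
final_score, eligible = certificate_result([80] * 12, 38)
print(final_score, eligible)
```

In this example the final score is above 40, yet the learner is not eligible because the exam component falls below 30 out of 75.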


NPTEL Introduction to Machine Learning Assignment 3 Answers [Jan 2022]

Q1. Consider the case where two classes follow Gaussian distributions that are centered at (6, 8) and (−6, −4) and have identity covariance matrices. Which of the following is the separating decision boundary using LDA, assuming the priors to be equal?

(A) x+y=2 (B) y−x=2 (C) x=y (D) both (a) and (b) (E) None of the above (F) Can not be found from the given information

Answer:- (A) x+y=2
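As a check on Q1: with a shared identity covariance and equal priors, the LDA boundary is the set of points equidistant from the two class means, which is the line through their midpoint perpendicular to the difference of the means. The sketch below computes that line in plain Python; the variable names are illustrative only.

```python
mu1 = (6.0, 8.0)
mu2 = (-6.0, -4.0)

# Normal vector of the boundary: the difference of the class means.
w = tuple(a - b for a, b in zip(mu1, mu2))
# The boundary passes through the midpoint of the two means.
midpoint = tuple((a + b) / 2 for a, b in zip(mu1, mu2))
# Boundary equation: w[0]*x + w[1]*y = offset.
offset = sum(wi * mi for wi, mi in zip(w, midpoint))

# Divide through by w[0] to get the form x + c*y = d.
c, d = w[1] / w[0], offset / w[0]
print(f"x + {c:g}*y = {d:g}")
```

For these means the normal vector is (12, 12) and the midpoint is (0, 2), so the boundary reduces to x + y = 2, in agreement with option (A).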


Q2. Which of the following are differences between PCR and LDA?

(A) PCR is unsupervised whereas LDA is supervised (B) PCR maximizes the variance in the data whereas LDA maximizes the separation between the classes (C) both (a) and (b) (D) None of these

Answer:- (A) PCR is unsupervised whereas LDA is supervised

Q3. Which of the following are differences between LDA and Logistic Regression?

(A) Logistic Regression is typically suited for binary classification, whereas LDA is directly applicable to multi-class problems (B) Logistic Regression is robust to outliers whereas LDA is sensitive to outliers (C) both (a) and (b) (D) None of these

Answer:- (C) both (a) and (b)


Q4. We have two classes in our dataset. The two classes have the same mean but different variance.

- LDA can classify them perfectly.

- LDA can NOT classify them perfectly.

- LDA is not applicable in data with these properties

- Insufficient information

Answer:- 2. LDA can NOT classify them perfectly.

Q5. We have two classes in our dataset. The two classes have the same variance but different mean.

Answer:- 1. LDA can classify them perfectly.

Q6. Which of these techniques do we use to optimise Logistic Regression:

- Least Square Error

- Maximum Likelihood

- (a) or (b) are equally good

- (a) and (b) perform very poorly, so we generally avoid using Logistic Regression

Answer:- 2.Maximum Likelihood

Q7. Suppose we have two variables, X and Y (the dependent variable), and we wish to find their relation. An expert tells us that relation between the two has the form $Y = me^{X} + c$. Suppose the samples of the variables X and Y are available to us. Is it possible to apply linear regression to this data to estimate the values of m and c?

- insufficient information

Answer:- 2.yes
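To see why the answer is yes, read the relation as Y = m·e^X + c: it is nonlinear in X but linear in the transformed feature Z = e^X, so ordinary least squares on (Z, Y) recovers m and c. The sketch below uses the closed-form simple-regression formulas. The function name `fit_m_c` and the synthetic data (generated with m = 2 and c = −1) are made up for illustration.

```python
import math


def fit_m_c(xs, ys):
    """Least-squares fit of y = m * exp(x) + c via the feature z = exp(x)."""
    zs = [math.exp(x) for x in xs]
    n = len(zs)
    z_mean = sum(zs) / n
    y_mean = sum(ys) / n
    # Closed-form slope and intercept for simple linear regression of y on z.
    s_zy = sum((z - z_mean) * (y - y_mean) for z, y in zip(zs, ys))
    s_zz = sum((z - z_mean) ** 2 for z in zs)
    m = s_zy / s_zz
    c = y_mean - m * z_mean
    return m, c


# Synthetic, noise-free samples from m = 2, c = -1 (made-up values).
xs = [0.0, 0.5, 1.0, 1.5, 2.0]
ys = [2.0 * math.exp(x) - 1.0 for x in xs]
m_hat, c_hat = fit_m_c(xs, ys)
print(m_hat, c_hat)
```

With noise-free data the fit recovers the generating values up to floating-point error; with noisy samples the same transformation applies, and the estimates are the usual least-squares ones.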

Q8. What might happen to our logistic regression model if the number of features is more than the number of samples in our dataset?

- It will remain unaffected

- It will not find a hyperplane as the decision boundary

- It will overfit

- None of the above

Answer:- 3. It will overfit

Q9. Logistic regression also has an application in

- Regression problems

- Sensitivity analysis

- Both (a) and (b)

Answer:- 3. Both (a) and (b)

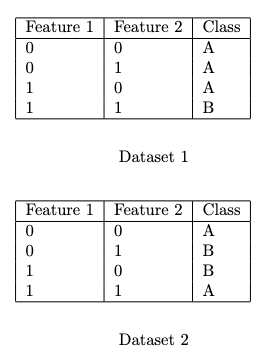

Q10. Consider the following datasets:

Which of these datasets can you achieve zero training error using Logistic Regression (without any additional feature transformations)?

- Both the datasets

- Only on dataset 1

- Only on dataset 2

- None of the datasets

Answer:- For Answer Click Here


Disclaimer: We do not claim that these solutions are 100% correct. They are based solely on our own expertise, and we post them only as a reference to help students, so we urge you to do your assignment on your own.


10-301/601: Introduction to Machine Learning

Summer 2024.

Key Information and Links

| Instructor: | |

| Education Associate: | |

| Announcements/Q&A: | We will be using for making announcements and answering questions. |

| Lectures: | Mondays, Tuesdays, and Wednesdays from 9:30 AM to 10:50 AM (EDT) in SH 105. Lectures will be recorded for students to review after the fact; the recordings will be hosted by . |

| In-class Polls: | Some portion of your grade will be determined based on participation in polls during lecture. The latest poll can always be found at ; in order to respond, you must be logged in with your CMU email. |

| Recitations: | Thursdays from 9:30 AM to 10:50 AM (EDT) in SH 105. Attendance at recitations is optional and therefore, outside of extraordinary circumstances, these will not be recorded. Recitation handouts can be found under the tab. |

| Assignments: | Homework handouts will be posted to the course website under the tab. All code and written responses to empirical questions should be submitted via . |

| Office Hours: | The time and location of office hours can be found on the . |

1. Course Description

Machine Learning is concerned with computer programs that automatically improve their performance through experience, e.g., programs that learn to recognize human faces, recommend music and movies, and drive autonomous robots. This course covers the theory and practical algorithms for machine learning from a variety of perspectives. Specific topics include Bayesian networks, decision tree learning, support vector machines, statistical learning methods, unsupervised learning and reinforcement learning as well as theoretical concepts such as inductive bias, the PAC learning framework, Bayesian learning methods, margin-based learning, and Occam’s Razor. Programming assignments include hands-on experiments with various learning algorithms. This course is designed to give a graduate-level student a thorough grounding in the methodologies, technologies, mathematics and algorithms currently needed by people who do research in machine learning.

10-301 and 10-601 are identical. Undergraduates must register for 10-301 and graduate students must register for 10-601.

Learning Outcomes: By the end of the course, students should be able to:

- Implement and analyze existing learning algorithms, including well-studied methods for classification, regression, structured prediction, clustering, and representation learning.

- Integrate multiple facets of practical machine learning in a single system: data preprocessing, learning, regularization and model selection.

- Describe the formal properties of models and algorithms for learning and explain the practical implications of those results.

- Compare and contrast different paradigms for learning (supervised, unsupervised, etc...).

- Design experiments to evaluate and compare different machine learning techniques on real-world problems.

- Employ probability, statistics, calculus, linear algebra, and optimization in order to develop new predictive models or learning methods.

- Given a description of an ML technique, analyze it to identify (1) the expressive power of the formalism; (2) the inductive bias implicit in the algorithm; (3) the size and complexity of the search space; (4) the computational properties of the algorithm; (5) any guarantees (or lack thereof) regarding termination, convergence, correctness, accuracy or generalization power.

2. Prerequisites

Students entering the class are expected to have a pre-existing working knowledge of probability, linear algebra, statistics and algorithms; some recitation sessions will be held to review basic concepts.

Note: For each programming assignment, you will be required to use Python. You will be expected to know, or be able to quickly pick up, that programming language.

- You need to have, before starting this course, basic familiarity with probability and statistics, as can be achieved at CMU by having passed 36-217 (Probability Theory and Random Processes) or 36-225 (Introduction to Probability and Statistics I), or 15-259, or 21-325, or comparable courses elsewhere, with a grade of ‘C’ or higher.

- You need to have, before starting this course, college-level maturity in discrete mathematics, as can be achieved at CMU by having passed 21-127 (Concepts of Mathematics) or 15-151 (Mathematical Foundations of Computer Science), or comparable courses elsewhere, with a grade of ‘C’ or higher.

You must strictly adhere to these prerequisites! Even if CMU’s registration system does not prevent you from registering for this course, it is still your responsibility to make sure you have all of these prerequisites before you register.

3. Recommended Textbooks

This course does not exactly follow any one textbook. However, most lectures will have some optional reading to help you better understand the material or see a different presentation/perspective. We recommend you read these after the corresponding lecture. These readings will typically be drawn from the following texts, many of which are freely available online:

- A Course in Machine Learning, Hal Daumé III.

- Machine Learning, Tom Mitchell.

- Machine Learning: a Probabilistic Perspective, Kevin P. Murphy.

- Pattern Recognition and Machine Learning, Christopher M. Bishop.

The textbook below is a great resource for those hoping to brush up on the prerequisite mathematics background for this course:

- Mathematics for Machine Learning, Marc Peter Deisenroth, A. Aldo Faisal, and Cheng Soon Ong.

4. Course Components

The graded components of this course consist of participation in lectures, midterm and final exams, programming assignments and in-class quizzes. The breakdown is as follows:

- 9 Homework Assignments = 45%

- Midterm = 25%

- Final = 25%

- Participation = 5%
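As a rough illustration of how these weights combine, the sketch below computes a numerical course grade from component scores. The function name `course_grade`, the `WEIGHTS` dictionary, and the choice to pass in a single homework average (rather than assuming how the nine homeworks are weighted against each other) are illustrative assumptions, not part of the official grading scripts.

```python
# Hypothetical helper; the weights are taken from the breakdown above.
WEIGHTS = {"homework": 0.45, "midterm": 0.25, "final": 0.25}


def course_grade(homework_avg, midterm, final, participation_points):
    """Return a numerical course grade on a 0-100 scale.

    homework_avg, midterm, final: percentages in [0, 100].
    participation_points: points earned out of the 5 available.
    """
    weighted = (
        WEIGHTS["homework"] * homework_avg
        + WEIGHTS["midterm"] * midterm
        + WEIGHTS["final"] * final
    )
    # Participation is already expressed in course-grade points (0 to 5).
    return weighted + participation_points


if __name__ == "__main__":
    # Example: 90% homework average, 80% midterm, 85% final, full participation.
    print(round(course_grade(90, 80, 85, 5), 2))
```

The result is only the numerical grade; the conversion to a letter grade depends on cutoffs that are set at the end of the semester.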

We will convert numerical course grades to letter grades based on grade boundaries that are determined at the end of the semester. The following is a list of upper bounds on the grade cutoffs we will use; in all likelihood, these will be adjusted down at the end of the semester:

There are two types of homework assignments in this course: programming and written. The programming assignments will ask you to implement machine learning algorithms from scratch; they emphasize understanding of real-world applications, building end-to-end systems, and experimental design. The written assignments will focus on core concepts, “on-paper” implementations of classic learning algorithms, derivations, and understanding of theory.

LaTeX is a valuable tool for communicating machine learning concepts to others. As such, we are requiring that your homework submissions be completed in LaTeX. To facilitate this, we will always release a LaTeX starter template that you can simply fill in with your answers.

Midterm and Final Exams

You are required to attend all the exams. Unless otherwise noted, all exams will be closed-book. You may bring one sheet of A4 or letter-sized paper as a cheatsheet (both back and front may be used). You are encouraged to handwrite this cheatsheet as a form of preparing for the exam but you may typeset it if you so choose.

Both exams will be scheduled by the registrar sometime during the official university exam periods; note that we will be using the mini-5 final exam date as the date of our midterm exam. The dates of both exams can be found on the lecture schedule; please plan your travel accordingly as we will not be able to accommodate individual travel needs.

If you have an unavoidable conflict with an exam (e.g., an exam in another course), notify us as soon as possible by making a private post on Piazza.

Participation

We will be using PollEverywhere for in-class polls. In order to access these polls, you must create an account using your CMU email address. You can always access the latest poll at pollev.com/301601polls.

You will always be allowed to submit multiple times so if there are multiple questions during a lecture, you should submit multiple times. Your participation grade will be based on the percentage of in-class polls answered:

- 5% for 80% or greater poll participation.

- 3% for 65%-80% poll participation.

- 1% for 50%-65% poll participation.

The correctness of your responses will not be taken into account when computing participation grades; all that matters is that you submit something. All in-class polls will only be live until the start of the next lecture or recitation (roughly a 24-hour period). You will receive 50% credit for any poll you respond to after the corresponding lecture ends, i.e., if you were to respond to every poll after the end of the corresponding lecture, then your overall participation grade would be 1%.
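The tiers and the half-credit rule can be sketched as a small function. This is an illustration only: the name `participation_grade` and its parameters are hypothetical, and assigning a rate of exactly 65% or 50% to the higher of the two adjacent tiers is an assumption, since the ranges listed above share their endpoints.

```python
def participation_grade(on_time, late, total):
    """Return participation points (out of 5) under the tiers above.

    on_time: polls answered during the corresponding lecture (full credit).
    late: polls answered after lecture but while still live (half credit).
    total: number of polls given over the semester.
    """
    if total <= 0:
        raise ValueError("total must be positive")
    rate = (on_time + 0.5 * late) / total
    if rate >= 0.80:
        return 5
    if rate >= 0.65:  # assumption: exactly 65% earns the 3% tier
        return 3
    if rate >= 0.50:  # assumption: exactly 50% earns the 1% tier
        return 1
    return 0


if __name__ == "__main__":
    # Answering every poll late gives a 50% rate, matching the 1% case above.
    print(participation_grade(on_time=0, late=40, total=40))
```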

5. Office Hours

The schedule of office hours will always appear on the course calendar. All office hours will be held in-person. Instructor office hours will (usually) be held immediately after class in either the classroom or a nearby space. We encourage you to stick around and ask any questions you have about lecture material, homework problems, exam preparation, course logistics, etc.

In office hours, when it is your turn, you should pose your question to the TA(s) and they will determine whether or not your question would be best addressed privately or publicly, i.e., to anyone in the room who wants to listen in.

We will make use of the following (informal) rules:

- 10 Minute Rule: Each student’s question will be addressed by the TA for at most 10 minutes. The only exception to this will be if a TA is answering a question publicly that has broad interest to many other students.

- The Pseudo Code Rule: This is not a programming course; you are expected to know how to debug code. As such, if your question is of the form "Could you help me to debug my code?", you must bring with you detailed pseudocode that describes your implementation design. If you do not have pseudocode, the TA will not look at your code, but instead ask you to sketch out pseudocode at the chalkboard and discuss there instead. After discussing at a high-level, if your 10 minutes have not expired, the TA may have time to look at your code.

While you're awaiting your turn, we encourage you to listen in to the answers to any publicly answered questions. Please be courteous and allow the student who posed the question to primarily direct the discussion with the TA. We also encourage you to collaborate with others (following our collaboration policies below) while waiting.

6. General Policies

Late homework policy.

Late homework submissions are only eligible for 75% of the points the first day (24-hour period) after the deadline and only eligible for 50% the second day after the deadline.

You have a total of 10 grace days for use on any homework assignment. We will automatically keep a tally of these grace days for you; they will be applied greedily. No assignment will be accepted more than 2 days after the deadline. This has two important implications: (1) you may not use more than 2 grace days on any single assignment, and (2) you may not combine grace days with the late policy above to submit more than 2 days late.

HW3, HW6, and HW9 will not be accepted more than 1 day after the deadline, so that we can hold the solution session before the subsequent exams. To ensure you receive graded feedback before the exams, you must submit HW3, HW6, and HW9 on time.

All homeworks will be submitted electronically via Gradescope. As such, lateness will be determined by the latest timestamp of any part of your submission. For example, suppose the homework requires two submission uploads: if you submit the first upload on time but the second upload 1 minute late, your entire homework will be penalized for the full 24-hour period.
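One possible reading of the late policy is sketched below. The function `late_credit` and its parameters are hypothetical. The sketch also assumes that grace days cover the earliest late days first and that the 75%/50% penalty is based on the number of late days not covered by grace days. The policy text does not spell out that interaction, so treat it as an assumption rather than the staff's actual bookkeeping.

```python
import math
from datetime import timedelta

# Credit eligibility by number of late days NOT covered by grace days.
CREDIT_BY_PENALIZED_DAYS = {0: 1.00, 1: 0.75, 2: 0.50}


def late_credit(lateness, grace_days_left, max_days_late=2):
    """Return (credit_fraction, grace_days_used) for one homework.

    lateness: timedelta between the deadline and the latest upload timestamp.
    grace_days_left: grace days still available (10 at the start of the course).
    max_days_late: 2 by default; 1 for HW3, HW6, and HW9.
    """
    seconds = lateness.total_seconds()
    # Any lateness, even one minute, counts as a full 24-hour period.
    days_late = math.ceil(seconds / 86400) if seconds > 0 else 0
    if days_late > max_days_late:
        return 0.0, 0  # submission is not accepted
    grace_used = min(days_late, grace_days_left)  # applied greedily
    penalized_days = days_late - grace_used
    return CREDIT_BY_PENALIZED_DAYS[penalized_days], grace_used


if __name__ == "__main__":
    # One minute late with no grace days left: eligible for 75% of the points.
    print(late_credit(timedelta(minutes=1), grace_days_left=0))
```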

In general, we do not grant extensions on assignments. There are several exceptions:

- Medical Emergencies: If you are sick and unable to complete an assignment or attend class, please go to University Health Services. For minor illnesses, we expect grace days or our late penalties to provide sufficient accommodation. For medical emergencies (e.g., prolonged hospitalization), students may request an extension afterwards.

- Family/Personal Emergencies: If you have a family emergency (e.g., death in the family) or a personal emergency (e.g., mental health crisis), please contact your academic adviser and/or Counseling and Psychological Services (CaPS).

- University-Approved Travel: If you are traveling out-of-town to a university approved event or an academic conference, you may request an extension for any time lost due to traveling. For university approved absences, you must provide confirmation of attendance, usually from a faculty or staff organizer of the event or via travel/conference receipts.

For any of the above situations, you may request an extension by emailing Nichelle at [email protected] – do not email the instructor or TAs. Please be specific about which assessment(s) you are requesting an extension for and the number of hours requested. The email should be sent as soon as you are aware of the conflict and at least 5 days prior to the deadline. In the case of an emergency, no notice is needed.

If this is a medical emergency or mental health crisis, you must also CC your CMU college liaison and/or your academic advisor. Do not submit any medical documentation to the course staff. If necessary, your college liaison and The Division of Student Affairs (DoSA) will request such documentation and they will view the health documentation and conclude whether a retroactive extension is appropriate. If you haven’t interacted with your college liaison before, they are experienced student affairs staff who work in partnership with students, housefellows, advisors, faculty, and associate deans in each college to assure support for students regarding their overall Carnegie Mellon experience.

Audit Policy

Formal auditing of this course is permitted. You must follow the official procedures for a course audit as outlined by the HUB/registrar. Please do not email the instructor requesting permission to audit. Instead, you should first register for the appropriate section. Next, fill out the Course Audit Approval form, obtain your academic advisor's signature, and then approach the instructor in-person immediately after class to obtain their signature.

Auditors are required to:

- Attend or watch all of the lectures.

- Receive a 65% participation grade or higher.

- Submit at least 4 of the 9 homework assignments.

- Auditors are encouraged to sit for the exams, but should only do so if they plan to put forth actual effort in solving them.

Pass/Fail Policy

You are allowed to take this course as Pass/Fail; instructor permission is not required. What letter grade is the cutoff for a Pass will depend on your specific program; we do not specify whether or not you Pass but rather we compute your letter grade the same as everyone else in the class and your program converts that letter grade to a Pass or Fail depending on their cutoff. Be sure to check with your program/department as to whether you can count a Pass/Fail course towards your degree requirements.

Accommodations for Students with Disabilities

If you have a disability and have an accommodations letter from the Disability Resources office, please email Nichelle at [email protected] to set up a meeting for the purposes of discussing your accommodations and needs as early in the semester as possible. He will work with you to ensure that accommodations are provided as appropriate. If you suspect that you may have a disability and would benefit from accommodations but are not yet registered with the Office of Disability Resources, I encourage you to contact them at [email protected] .

7. Technologies

We will use a variety of technologies throughout the summer:

- Piazza: we will use Piazza for all course discussion. Questions about homeworks, course content, logistics, etc. should all be directed to Piazza. If you have a question, chances are several others had the same question. By posting your question publicly, the course staff can answer once and everyone benefits. If you have a private question, you should also use Piazza as it will likely receive a faster response.

- Gradescope: we will use Gradescope to collect PDF submissions of open-ended questions on the homework, e.g., mathematical derivations, plots, short answers. The course staff will manually grade your submission, and you’ll receive personalized feedback explaining your final marks.

You will also submit your code for programming questions on the homework to Gradescope. After uploading your code, our grading scripts will autograde your assignment by running your program on a virtual machine. This provides you with immediate feedback on the performance of your submission.

If you believe an error was made during manual grading, you’ll be able to submit a regrade request on Gradescope. For each quiz, regrade requests will be open for only 1 week after the grades have been published. This is to encourage you to check the feedback you’ve received early.

- Zoom: lectures will be livestreamed via Zoom; the link to the lecture livestream is only available to students formally enrolled in the course and can be found in this Ed post.

- Panopto: lecture recordings will be hosted by Panopto; note that recordings may not be immediately available after lecture due to editing/processing time.

- PollEverywhere: in-class polls will be conducted through PollEverywhere. You can access the most recent poll at pollev.com/301601polls but you must be logged into an account associated with your CMU email in order to do so.

8. Collaboration and Academic Integrity

Read this carefully!

Collaboration among Students

The purpose of student collaboration is to facilitate learning, not to circumvent it. Studying the material in groups is strongly encouraged. You are also allowed to seek help from other students in understanding the material needed to solve a particular homework problem, provided any written notes (including code) are taken on an impermanent surface (e.g., whiteboard, chalkboard), and provided learning is facilitated, not circumvented. The actual solution must be written by each student alone.

A good method to follow when collaborating is to meet with your peers, discuss ideas at a high level, but do not copy down any notes from each other or from a white board. Any scratch work done at this time should be your own only. Before writing the assignment solutions, you should make sure that you are doing this without anyone else present, putting all notes away, closing all tabs on your computer, and writing it completely by yourself with no other resources.

You are absolutely not allowed to share/compare answers or screen share your work with one another.

The presence or absence of any form of help or collaboration, whether given or received, must be explicitly stated and disclosed in full by all involved. Specifically, each assignment solution must include answers to the following questions:

- Did you receive any help whatsoever from anyone in solving this assignment? Yes / No.

- If you answered ‘yes’, give full details: ____________

- (e.g., "Jane Doe explained to me what is asked in Question 3.4")

- Did you give any help whatsoever to anyone in solving this assignment? Yes / No.

- If you answered ‘yes’, give full details: _____________

- (e.g., "I pointed Joe Smith to section 2.3 since he didn’t know how to proceed with Question 2")

- Did you find or come across code that implements any part of this assignment? Yes / No. (See below policy on "found code")

- If you answered ‘yes’, give full details: _____________

- (book & page, URL & location within the page, etc.).

If you gave help after turning in your own assignment and/or after answering the questions above, you must update your answers before the assignment’s deadline, if necessary by emailing the course staff.

Collaboration without full disclosure will be handled severely, in compliance with CMU’s Policy on Academic Integrity.

Note that the policies outlined above only apply to the programming assignments. Students are allowed to collaborate to any extent when working on the study guides, including directly working together to arrive at solutions and sharing solutions with other members of the class. The only aspect of this collaboration policy that extends to the study guide material is the Duty to Protect One's Work: while sharing work with other students enrolled in the course is permitted, you should never post solutions publicly (see below for more details).

Previously Used Assignments

Some of the programming assignments used in this class may have been used in prior offerings, in classes at other institutions, or elsewhere. Solutions to them may be, or may have been, available online, or from other people or sources. It is explicitly forbidden to use any such sources, or to consult people who have solved these problems before. It is explicitly forbidden to search for these problems or their solutions on the internet. You must complete the programming assignments completely on your own. We will be actively monitoring your compliance. Collaboration with other students who are currently taking the class is allowed, but only under the conditions stated above.

AI Assistance

To best support your own learning, you should complete all graded assignments in this course yourself, without any use of generative artificial intelligence (AI), such as ChatGPT. Please refrain from using AI tools to generate any content (text, video, audio, images, code, etc.) for an assessment. Passing off any AI generated content as your own (e.g., cutting and pasting content into written assignments, or paraphrasing AI content) constitutes a violation of CMU’s academic integrity policy .

Policy Regarding "Found Code"

You are encouraged to read books and other instructional materials, both online and offline, to help you understand the concepts and algorithms taught in class. These materials may contain example code or pseudocode, which may help you better understand an algorithm or an implementation detail. However, when you implement your own solution to an assignment, you must put all materials aside, and write your code completely on your own, starting "from scratch". Specifically, you may not use any code you found or came across. If you find or come across code that implements any part of your assignment, you must disclose this fact in your collaboration statement.

Duty to Protect One’s Work

Students are responsible for proactively protecting their work from copying and misuse by other students. If a student’s work is copied by another student, the original author is also considered to be in violation of the course policies. It does not matter whether the author allowed the work to be copied or was merely negligent in preventing it from being copied. When overlapping work is submitted by different students, both students will be punished.

To protect future students, do not post your solutions publicly, neither during the course nor afterwards.

Penalties for Violations of Course Policies

All violations of course policies (even the first one) will always be reported to the university authorities (your department head, associate dean, the dean of Student Affairs, etc.) as an official Academic Integrity Violation and will carry severe penalties.

- The penalty for the first violation is a negative 100% on the assignment, i.e., it would have been better to submit nothing and receive a 0%.

- The penalty for the second violation is failure in the course, and can even lead to dismissal from the university.

Take care of yourself. Do your best to maintain a healthy lifestyle by eating well, exercising, avoiding drugs and alcohol, getting enough sleep and taking some time to relax. This will help you achieve your goals and cope with stress.

All of us benefit from support during times of struggle. You are not alone. There are many helpful resources available on campus and an important part of the college experience is learning how to ask for help. Asking for support sooner rather than later is often helpful.

If you or anyone you know experiences any academic stress, difficult life events, or feelings like anxiety or depression, we strongly encourage you to seek support. Counseling and Psychological Services (CaPS) is here to help: call 412-268-2922 and visit their website at http://www.cmu.edu/counseling/.

If you or someone you know is feeling suicidal or in danger of self-harm, call someone immediately, day or night:

- CaPS: 412-268-2922

- Re:solve Crisis Network: 888-796-8226

- If the situation is life threatening, call the police:

  - On campus: CMU Police: 412-268-2323

  - Off campus: 911

10. Diversity

We must treat every individual with respect. We are diverse in many ways, and this diversity is fundamental to building and maintaining an equitable and inclusive campus community. Diversity can refer to multiple ways that we identify ourselves, including but not limited to race, color, national origin, language, sex, disability, age, sexual orientation, gender identity, religion, creed, ancestry, belief, veteran status, or genetic information. Each of these diverse identities, along with many others not mentioned here, shape the perspectives our students, faculty, and staff bring to our campus. We, at CMU, will work to promote diversity, equity and inclusion not only because diversity fuels excellence and innovation, but because we want to pursue justice. We acknowledge our imperfections while we also fully commit to the work, inside and outside of our classrooms, of building and sustaining a campus community that increasingly embraces these core values.

Each of us is responsible for creating a safer, more inclusive environment.

Unfortunately, incidents of bias or discrimination do occur, whether intentional or unintentional. They contribute to creating an unwelcoming environment for individuals and groups at the university. Therefore, the university encourages anyone who experiences or observes unfair or hostile treatment on the basis of identity to speak out for justice and support, within the moment of the incident or after the incident has passed. Anyone can share these experiences using the following resources:

- Center for Student Diversity and Inclusion: [email protected] , 412-268-2150

- Report-It online anonymous reporting platform: reportit.net

  - username: tartans

  - password: plaid

All reports will be documented and deliberated to determine if there should be any following actions. Regardless of incident type, the university will use all shared experiences to transform our campus climate to be more equitable and just.

Education Associate

Nichelle Phillips

Teaching Assistants

Class Mascot

Neural the Narwhal

| Date | Topic | Slides | Readings/Resources |
|---|---|---|---|
| Mon, 5/13 | Introduction: Notation & Problem Formulation | | |
| Tue, 5/14 | Decision Trees - Model Definition & Making Predictions | | (blog post) |
| Wed, 5/15 | Decision Trees - Learning | | (blog post) |
| Mon, 5/20 | Nearest Neighbors | | |
| Tue, 5/21 | Model Selection (Mini-lecture) | | |
| Wed, 5/22 | Perceptron | | |
| Mon, 5/27 | No Class (Memorial Day) | | |
| Tue, 5/28 | Linear Regression (Mini-lecture) | | |
| Wed, 5/29 | Optimization for Machine Learning | | |
| Mon, 6/3 | MLE & MAP | | |
| Tue, 6/4 | Logistic Regression (Mini-lecture) | | |
| Wed, 6/5 | Feature Engineering & Regularization | | |
| Mon, 6/10 | Neural Networks - Model Definition & Making Predictions | | |
| Tue, 6/11 | Differentiation & Computation Graphs (Mini-lecture) | | |
| Wed, 6/12 | Neural Networks - Learning & Advanced Optimization | | |
| Mon, 6/17 | Societal Impacts of ML | | |
| Tue, 6/18 | Recitation in lieu of Lecture | | |
| Wed, 6/19 | No Class (Juneteenth) | | |
| Fri, 6/21 | Midterm Exam (Time and Location TBD) | | |
| Mon, 6/24 | Unsupervised Learning: Dimensionality Reduction | | |
| Tue, 6/25 | Unsupervised Learning: Clustering (Mini-lecture) | | |
| Wed, 6/26 | Deep Learning - CNNs | | |
| Mon, 7/1 | Deep Learning - RNNs | | |
| Tue, 7/2 | Deep Learning - Attention & Transformers | | |
| Wed, 7/3 | Recitation in lieu of Lecture | | |
| Thu, 7/4 | No Class (Independence Day) | | |
| Mon, 7/8 | Reinforcement Learning: MDPs & Value Functions | | |
| Tue, 7/9 | Reinforcement Learning: Value & Policy Iteration (Mini-lecture) | | |
| Wed, 7/10 | Reinforcement Learning: Q-learning & Deep RL | | |
| Mon, 7/15 | Learning Theory | (Pre-class) | |
| Tue, 7/16 | Learning Theory (Mini-lecture) | | |
| Wed, 7/17 | Boosting | | (2001) |
| Mon, 7/22 | Random Forests | | |
| Tue, 7/23 | Instructor OH in lieu of Lecture | | |
| Wed, 7/24 | Special Topics: Pretraining, Fine-tuning & In-context Learning | | |
| Mon, 7/29 | Special Topics: Generative Models for Vision | | |
| Tue, 7/30 | Instructor OH in lieu of Lecture | | |
| Wed, 7/31 | No Class (Reading Day) | | |
| Fri, 8/2 | Final Exam (Time and Location TBD) | | |

Recitations

Attendance at recitations is not required, but strongly encouraged. Recitations will be interactive and focus on problem solving; we strongly encourage you to actively participate. A problem sheet will usually be released prior to the recitation. If you are unable to attend one or you missed an important detail, feel free to stop by office hours to ask the TAs about the content that was covered. Of course, we also encourage you to exchange notes with your peers.

| Date | Topic | Handout |
|---|---|---|
| Thu, 5/16 | HW2 Recitation | |
| Thu, 5/23 | HW3 Recitation | |
| Thu, 5/30 | No Recitation | |
| Thu, 6/6 | HW4 Recitation | |
| Thu, 6/13 | HW5 Recitation | |
| Tue, 6/18 | Midterm Review | |
| Thu, 6/20 | No Recitation (Reading Day) | |
| Thu, 6/27 | HW6 Recitation | |
| Wed, 7/3 | HW7 Recitation | |
| Thu, 7/11 | HW8 Recitation | |
| Thu, 7/18 | HW9 Recitation | |
| Thu, 7/25 | Final Review | |
| Thu, 8/1 | No Recitation (Reading Day) | |

Homework Assignments

| Release Date | Topic | Files | Due Date |
|---|---|---|---|
| Mon, 5/13 | HW1: Background Material | | Thu, 5/16 at 11:59 PM |
| Thu, 5/16 | HW2: Decision Trees | | Thu, 5/23 at 11:59 PM |
| Thu, 5/23 | HW3: KNN, Perceptron & Linear Regression | | Tue, 6/4 at 11:59 PM |
| Tue, 6/4 | HW4: Logistic Regression | | Tue, 6/11 at 11:59 PM |
| Tue, 6/11 | HW5: Neural Networks | | Tue, 6/18 at 11:59 PM |
| Tue, 6/25 | HW6: Unsupervised Learning & Algorithmic Bias | | Tue, 7/2 at 11:59 PM |
| Tue, 7/2 | HW7: Deep Learning in PyTorch | | Thu, 7/11 at 11:59 PM |
| Thu, 7/11 | HW8: Reinforcement Learning | | Thu, 7/18 at 11:59 PM |
| Thu, 7/18 | HW9: Learning Theory & Ensemble Methods | | Thu, 7/25 at 11:59 PM |

Course Calendar

IMAGES

VIDEO

COMMENTS

#machinelearning #nptel #swayam #python #ml Introduction To Machine Learning All week Assignment Solution - https://www.youtube.com/playlist?list=PL__28a0xFM...

Introduction To Machine Learning | NPTEL | Week 3 | assignment solution 3 | 2023

NPTEL Introduction to Machine Learning Assignment 3 Answers Week 3 July 2023 | NPTEL week 3 answers 2023 Official Telegram : https://telegram.dog/scishowengi...

ABOUT THE COURSE : This course provides a concise introduction to the fundamental concepts in machine learning and popular machine learning algorithms. We will cover the standard and most popular supervised learning algorithms including linear regression, logistic regression, decision trees, k-nearest neighbour, an introduction to Bayesian learning and the naïve Bayes algorithm, support ...

In this course we intend to introduce some of the basic concepts of machine learning from a mathematically well motivated perspective. We will cover the different learning paradigms and some of the more popular algorithms and architectures used in each of these paradigms. INTENDED AUDIENCE : This is an elective course.

There will be a live interactive session where a Course team member will explain some sample problems, how they are solved - that will help you solve the weekly assignments. We invite you to join the session and get your doubts cleared and learn better. Date: February 24, 2023 - Friday. Time:04.30 PM - 06.30 PM.

CSC311 - Introduction to Machine Learning (Fall 2023) Overview. Machine learning (ML) is a set of techniques that allow computers to learn from data and experience rather than requiring humans to specify the desired behaviour by hand. ... There will be 3 assignments in this course, posted below. Assignments will be due at 5pm on Tuesdays or ...

The assignment is due April 27. [3/28/2023] Homework 3 (handout, starter code) ... This class will provide a broad introduction to machine learning. We will start with supervised learning, where our goal is to learn an input-to-output mapping given a set of correct input-output pairs.

Introduction. The course will introduce the foundations of learning and making predictions from data. We will study basic concepts such as trading goodness of fit and model complexity. We will discuss important machine learning algorithms used in practice, and provide hands-on experience in a series of course projects.

The final exam will be held in person, on Tuesday, May 23 2023, from 1:30 PM to 4:30 PM, in Johnson Track. Please review final exam page for logistics, practice materials, and deadline for requesting accommodations. Exercises for week 11 are due Monday, May 1, 9am. Lab for week 10 checkoffs are due Monday, May 1, 11pm.

Our objective (and we hope yours) is for you to learn about machine learning. take responsibility for your understanding. we will help! Formula: exercises 5% + attendance 5% + homework 15% + labs 15% + midterm 25% + final 35%. Lateness: 20% penalty per day, applied linearly (so 1 hour late is -0.83%) Extensions:

Meetings : 10-301 + 10-601 Section A: MWF, 9:30 AM - 10:50 AM (CUC McConomy) 10-301 + 10-601 Section B: MWF, 12:30 PM - 01:50 PM (GHC 4401) For all sections, lectures are mostly on Mondays and Wednesdays. Recitations are mostly on Fridays and will be announced ahead of time. Education Associates Email: [email protected].

Lateness: 20% penalty per day, applied linearly (so 1 hour late is -0.83%). Extensions: 20 one-day extensions (each moves one assignment's deadline forward by one day) will be applied automatically at the end of the term in a way that is maximally helpful. For medical or personal difficulties, see S3 and contact us at [email protected].
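The linear lateness rule can be checked with a few lines of Python. This is only a sketch of the arithmetic stated above (20% per 24 hours, prorated by the hour); the function name `late_penalty_percent` is hypothetical, and no cap or extension handling is modeled because the policy text does not specify one.

```python
def late_penalty_percent(hours_late: float, rate_per_day: float = 20.0) -> float:
    """Return the penalty in percentage points for a submission
    that is `hours_late` hours past the deadline.

    Assumption: the penalty grows linearly with time, so one hour
    costs rate_per_day / 24 percentage points.
    """
    if hours_late <= 0:
        return 0.0  # on-time submissions are not penalized
    return rate_per_day * hours_late / 24.0


# One hour late costs about 0.83 points; a full day costs 20.
one_hour = late_penalty_percent(1)
one_day = late_penalty_percent(24)
```

Rounding `one_hour` to two decimals reproduces the 0.83% figure quoted in the policy.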

NPTEL Introduction To Machine Learning Week 3 Assignment Answer 2023. 1. Which of the following are differences between LDA and Logistic Regression? Logistic Regression is typically suited for binary classification, whereas LDA is directly applicable to multi-class problems.

CSC311 - Introduction to Machine Learning (Winter 2023) Overview. Machine learning (ML) is a set of techniques that allow computers to learn from data and experience rather than requiring humans to specify the desired behaviour by hand. ... There will be 3 assignments in this course, posted below. Assignments will be due at 5pm on Tuesdays or ...

INTENDED AUDIENCE: UG, PG and PhD students and industry professionals who want to work in Machine and Deep Learning. PREREQUISITES: Knowledge of Linear Algebra, Probability and Random Processes, and PDEs will be helpful. INDUSTRY SUPPORT: This is a very important course for industry professionals. Summary. Course Status: Completed. Course Type: Core.

A3 (16%). Submission due on 17 November 2023 (Week 13 Friday). Write a single Python file to perform the following tasks: (a) Perform gradient descent to minimize the cost function $f_1(a) = a^5$ with an initialization of $a = 1.5$. (b) Perform gradient descent to minimize the cost function $f_2(b) = \sin^2(b)$ with an initialization of $b = 0.3$ (where $b$ is assumed to ...
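As an illustration of the gradient descent update used in tasks like these, here is a minimal sketch. It reads the two cost functions as f1(a) = a^5 and f2(b) = sin^2(b), with the stated initializations 1.5 and 0.3. The learning rates and iteration count are assumptions (the task text is truncated before any are given), and the helper name `gradient_descent` is hypothetical, not part of any assignment starter code.

```python
import math


def gradient_descent(grad, x0, learning_rate, num_iters):
    """Run plain gradient descent on a scalar variable.

    grad:          function returning the derivative at x
    x0:            initial value
    learning_rate: step size (assumed here, not given in the task)
    num_iters:     number of update steps (also assumed)
    Returns the list of iterates, starting with x0.
    """
    x = x0
    history = [x]
    for _ in range(num_iters):
        x = x - learning_rate * grad(x)  # step against the gradient
        history.append(x)
    return history


# f1(a) = a^5, so f1'(a) = 5 a^4
a_history = gradient_descent(lambda a: 5 * a**4, x0=1.5,
                             learning_rate=0.01, num_iters=100)

# f2(b) = sin^2(b), so f2'(b) = 2 sin(b) cos(b) = sin(2b)
b_history = gradient_descent(lambda b: math.sin(2 * b), x0=0.3,
                             learning_rate=0.1, num_iters=100)
```

With these assumed settings, `b_history` approaches the minimizer b = 0. For a^5 the derivative is never negative, so the iterates only decrease, and they slow down near a = 0 where the derivative vanishes, even though a^5 itself has no minimum.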

Introduction to Machine Learning (Summer 2023). Overview. Machine learning (ML) is a set of techniques that allow computers to learn from data and experience rather than requiring humans to specify the desired behaviour by hand. ML has become increasingly central both in AI as an academic field and industry. This course provides a broad intro.

This course is designed to give a graduate-level student a thorough grounding in the methodologies, technologies, mathematics and algorithms currently needed by people who do research in machine learning. 10-301 and 10-601 are identical. Undergraduates must register for 10-301 and graduate students must register for 10-601.

With the increased availability of data from varied sources, there has been increasing attention paid to the various data-driven disciplines such as analytics...

Assignment 3, Introduction to Machine Learning, Prof. B. Ravindran. Consider the case where two classes follow Gaussian distributions centered at (6, 8) and (−6, −4), each with an identity covariance matrix. Which of the following is the separating decision boundary using LDA, assuming the priors to be equal?
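For a setup like this one, the LDA boundary can be worked out numerically. The sketch below assumes exactly the conditions in the question (shared identity covariance, equal priors), under which the boundary is the set of points x with w · x + b = 0, where w = mu1 − mu2 and b = −(‖mu1‖² − ‖mu2‖²) / 2. The function name `lda_boundary_identity_cov` is hypothetical.

```python
def lda_boundary_identity_cov(mu1, mu2):
    """Return (w, b) such that the LDA decision boundary is w . x + b = 0.

    Assumptions: both classes are Gaussian with identity covariance
    and equal prior probabilities, so the covariance inverse and the
    log-prior ratio drop out of the usual LDA discriminant.
    """
    w = tuple(m1 - m2 for m1, m2 in zip(mu1, mu2))
    b = -0.5 * (sum(m * m for m in mu1) - sum(m * m for m in mu2))
    return w, b


def score(w, b, x):
    """Positive scores fall on the mu1 side of the boundary."""
    return sum(wi * xi for wi, xi in zip(w, x)) + b


w, b = lda_boundary_identity_cov((6, 8), (-6, -4))
# w = (12, 12) and b = -24.0, i.e. 12x + 12y - 24 = 0, or x + y = 2
```

The boundary x + y = 2 is the perpendicular bisector of the segment joining the two means, passing through their midpoint (0, 2).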

Learn the basics of machine learning with practical examples and interactive exercises. Explore data science, neural networks, natural language processing and more.

There will be a live interactive session where a course team member will explain some sample problems and how they are solved, which will help you solve the weekly assignments. We invite you to join the session to get your doubts cleared and learn better. Date: Friday, September 29, 2023. Time: 06.00 PM - 08.00 PM.

Choosing the right machine learning course depends on your current knowledge level and career aspirations. Beginners should look for courses that introduce the fundamentals of machine learning, including basic algorithms and data preprocessing techniques. Those with some experience might benefit from intermediate courses focusing on specific algorithms, model optimization, and real-world ...