University Libraries

- University of Arkansas

Literature Reviews

Qualitative researchers TEND to:

Researchers using qualitative methods tend to:

- think that social sciences cannot be well-studied with the same methods as natural or physical sciences

- feel that human behavior is context-specific; therefore, behavior must be studied holistically, in situ, rather than being manipulated

- employ an 'insider's' perspective; research tends to be personal and thereby more subjective.

- do interviews, focus groups, field research, case studies, and conversational or content analysis.

Image from https://www.editage.com/insights/qualitative-quantitative-or-mixed-methods-a-quick-guide-to-choose-the-right-design-for-your-research?refer-type=infographics

Qualitative Research (an operational definition)

Qualitative Research: an operational description

Purpose: explain; gain insight and understanding of phenomena through intensive collection and study of narrative data

Approach: inductive; value-laden/subjective; holistic, process-oriented

Hypotheses: tentative, evolving; based on the particular study

Lit. Review: limited; may not be exhaustive

Setting: naturalistic, when and as much as possible

Sampling: for the purpose; not necessarily representative; for in-depth understanding

Measurement: narrative; ongoing

Design and Method: flexible, specified only generally; based on non-intervention, minimal disturbance, such as historical, ethnographic, or case studies

Data Collection: document collection, participant observation, informal interviews, field notes

Data Analysis: raw data are words; analysis is ongoing and involves synthesis

Data Interpretation: tentative, reviewed on ongoing basis, speculative

- Qualitative research with more structure and less subjectivity

- Increased application of both strategies to the same study ("mixed methods")

- Evidence-based practice emphasized in more fields (nursing, social work, education, and others).

Some Other Guidelines

- Guide for formatting Graphs and Tables

- Critical Appraisal Checklist for an Article On Qualitative Research

Quantitative researchers TEND to:

Researchers using quantitative methods tend to:

- think that both natural and social sciences strive to explain phenomena with confirmable theories derived from testable assumptions

- attempt to reduce social reality to variables, in the same way as with physical reality

- try to tightly control the variable(s) in question to see how the others are influenced.

- do experiments, have control groups, use blind or double-blind studies; use measures or instruments.

Quantitative Research (an operational definition)

Quantitative research: an operational description

Purpose: explain, predict or control phenomena through focused collection and analysis of numerical data

Approach: deductive; tries to be value-free; has objectives; is outcome-oriented

Hypotheses: specific, testable, and stated prior to study

Lit. Review: extensive; may significantly influence a particular study

Setting: controlled to the degree possible

Sampling: uses largest manageable random/randomized sample, to allow generalization of results to larger populations

Measurement: standardized, numerical; "at the end"

Design and Method: Strongly structured, specified in detail in advance; involves intervention, manipulation and control groups; descriptive, correlational, experimental

Data Collection: via instruments, surveys, experiments, semi-structured formal interviews, tests or questionnaires

Data Analysis: raw data is numbers; at end of study, usually statistical

Data Interpretation: formulated at end of study; stated as a degree of certainty

This page on qualitative and quantitative research has been adapted and expanded from a handout by Suzy Westenkirchner. Used with permission.

Images from https://www.editage.com/insights/qualitative-quantitative-or-mixed-methods-a-quick-guide-to-choose-the-right-design-for-your-research?refer-type=infographics.

- Last Updated: Jan 8, 2024 2:51 PM

- URL: https://uark.libguides.com/litreview

Qualitative vs. Quantitative Research | Differences, Examples & Methods

Published on April 12, 2019 by Raimo Streefkerk. Revised on June 22, 2023.

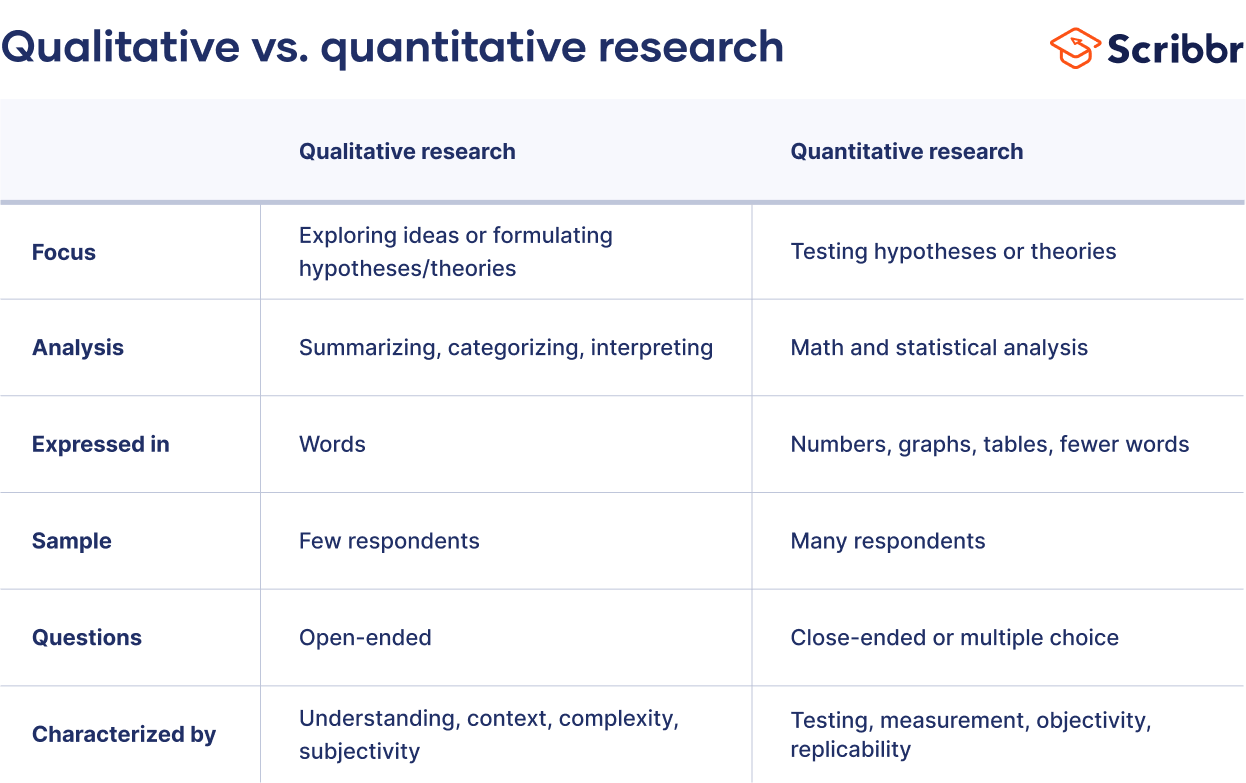

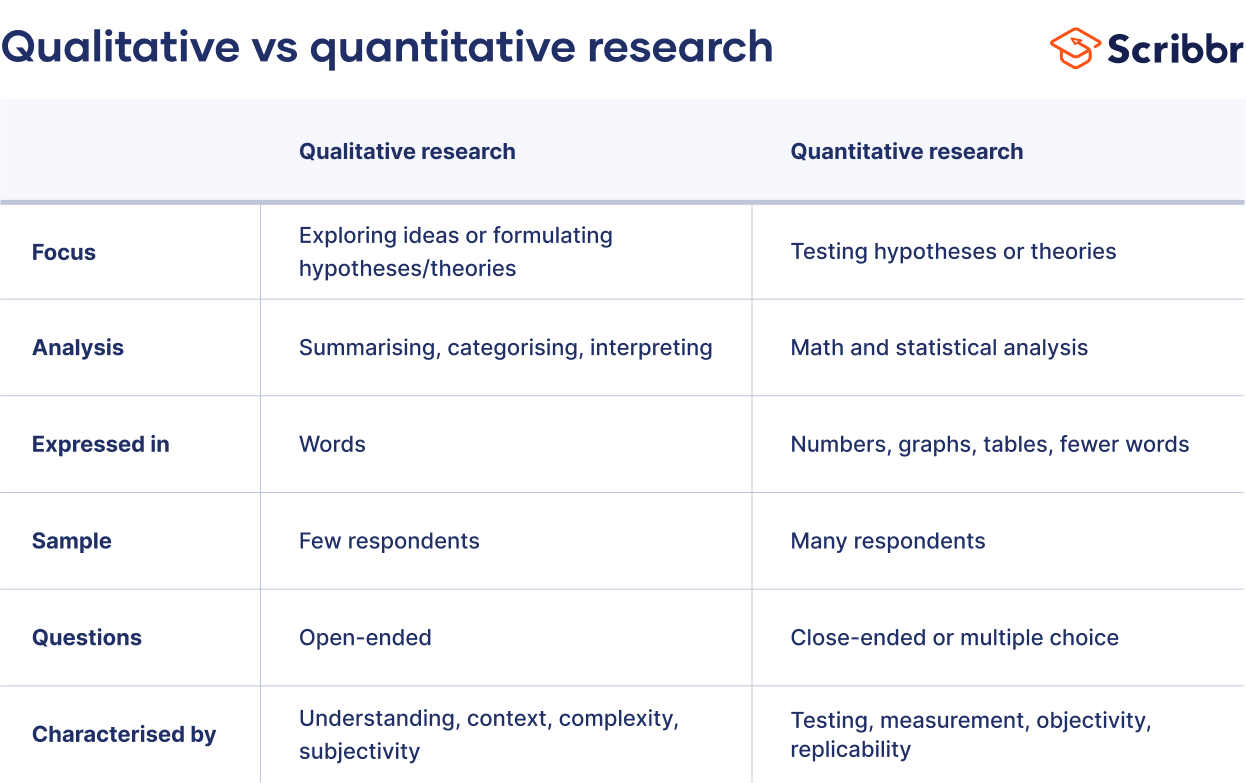

When collecting and analyzing data, quantitative research deals with numbers and statistics, while qualitative research deals with words and meanings. Both are important for gaining different kinds of knowledge.

Common quantitative methods include experiments, observations recorded as numbers, and surveys with closed-ended questions.

Quantitative research is at risk for research biases including information bias, omitted variable bias, sampling bias, or selection bias.

Qualitative research

Qualitative research is expressed in words. It is used to understand concepts, thoughts or experiences. This type of research enables you to gather in-depth insights on topics that are not well understood.

Common qualitative methods include interviews with open-ended questions, observations described in words, and literature reviews that explore concepts and theories.

Table of contents

- The differences between quantitative and qualitative research
- Data collection methods
- When to use qualitative vs. quantitative research
- How to analyze qualitative and quantitative data
- Other interesting articles
- Frequently asked questions about qualitative and quantitative research

Quantitative and qualitative research use different research methods to collect and analyze data, and they allow you to answer different kinds of research questions.

Quantitative and qualitative data can be collected using various methods. It is important to use a data collection method that will help answer your research question(s).

Many data collection methods can be either qualitative or quantitative. For example, in surveys, observational studies or case studies, your data can be represented as numbers (e.g., using rating scales or counting frequencies) or as words (e.g., with open-ended questions or descriptions of what you observe).

However, some methods are more commonly used in one type or the other.

Quantitative data collection methods

- Surveys: List of closed or multiple choice questions that is distributed to a sample (online, in person, or over the phone).

- Experiments: Situation in which different types of variables are controlled and manipulated to establish cause-and-effect relationships.

- Observations: Observing subjects in a natural environment where variables can’t be controlled.

Qualitative data collection methods

- Interviews: Asking open-ended questions verbally to respondents.

- Focus groups: Discussion among a group of people about a topic to gather opinions that can be used for further research.

- Ethnography: Participating in a community or organization for an extended period of time to closely observe culture and behavior.

- Literature review: Survey of published works by other authors.

A rule of thumb for deciding whether to use qualitative or quantitative data is:

- Use quantitative research if you want to confirm or test something (a theory or hypothesis)

- Use qualitative research if you want to understand something (concepts, thoughts, experiences)

For most research topics you can choose a qualitative, quantitative or mixed methods approach. Which type you choose depends on, among other things, whether you’re taking an inductive vs. deductive research approach; your research question(s); whether you’re doing experimental, correlational, or descriptive research; and practical considerations such as time, money, availability of data, and access to respondents.

Quantitative research approach

You survey 300 students at your university and ask them questions such as: “on a scale from 1-5, how satisfied are you with your professors?”

You can perform statistical analysis on the data and draw conclusions such as: “on average students rated their professors 4.4”.

Qualitative research approach

You conduct in-depth interviews with 15 students and ask them open-ended questions such as: “How satisfied are you with your studies?”, “What is the most positive aspect of your study program?” and “What can be done to improve the study program?”

Based on the answers you get you can ask follow-up questions to clarify things. You transcribe all interviews using transcription software and try to find commonalities and patterns.

Mixed methods approach

You conduct interviews to find out how satisfied students are with their studies. Through open-ended questions you learn things you never thought about before and gain new insights. Later, you use a survey to test these insights on a larger scale.

It’s also possible to start with a survey to find out the overall trends, followed by interviews to better understand the reasons behind the trends.

Qualitative or quantitative data by itself can’t prove or demonstrate anything, but has to be analyzed to show its meaning in relation to the research questions. The method of analysis differs for each type of data.

Analyzing quantitative data

Quantitative data is based on numbers. Simple math or more advanced statistical analysis is used to discover commonalities or patterns in the data. The results are often reported in graphs and tables.

Applications such as Excel, SPSS, or R can be used to calculate things like:

- Average scores (means)

- The number of times a particular answer was given

- The correlation or causation between two or more variables

- The reliability and validity of the results
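As a minimal sketch of the first three calculations listed above, the following Python snippet computes a mean, answer frequencies, and a Pearson correlation using only the standard library. The ratings and study-hour values are invented for illustration and do not come from any real survey, and the helper name `pearson_r` is hypothetical. Note that a correlation coefficient only describes association; it does not by itself establish causation.

```python
from collections import Counter
from math import sqrt
from statistics import mean

# Hypothetical 1-5 satisfaction ratings from eight students (illustrative only).
ratings = [5, 4, 4, 5, 3, 4, 5, 5]
# Hypothetical weekly study hours for the same eight students (illustrative only).
study_hours = [12, 9, 8, 14, 5, 10, 13, 15]


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


average_rating = mean(ratings)        # average score
answer_counts = Counter(ratings)      # how often each answer was given
r = pearson_r(ratings, study_hours)   # strength of linear association

print(f"mean rating: {average_rating:.2f}")
print(f"answer counts: {dict(sorted(answer_counts.items()))}")
print(f"Pearson r: {r:.2f}")
```

Reliability and validity are not covered by this sketch; they usually call for dedicated statistical procedures and judgment about the study design.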

Analyzing qualitative data

Qualitative data is more difficult to analyze than quantitative data. It consists of text, images or videos instead of numbers.

Some common approaches to analyzing qualitative data include:

- Qualitative content analysis: Tracking the occurrence, position and meaning of words or phrases

- Thematic analysis: Closely examining the data to identify the main themes and patterns

- Discourse analysis: Studying how communication works in social contexts
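The mechanical part of qualitative content analysis, tracking where and how often chosen words occur, can be sketched in a few lines of Python. The transcript and the terms of interest below are invented for illustration. The sketch records occurrence and position only; interpreting what the words mean in context remains the researcher's job.

```python
import re
from collections import defaultdict

# Hypothetical interview excerpt (invented for illustration).
transcript = (
    "The workload is heavy, but the professors are supportive. "
    "I wish the workload were spread more evenly across the semester."
)

# Hypothetical terms of interest chosen by the researcher.
terms = {"workload", "professors"}

# Split into lowercase word tokens and record each term's token positions.
tokens = re.findall(r"[a-z']+", transcript.lower())
positions = defaultdict(list)
for index, token in enumerate(tokens):
    if token in terms:
        positions[token].append(index)

# Report each term with its number of occurrences and its positions.
for term in sorted(terms):
    print(term, len(positions[term]), positions[term])
```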

Quantitative research deals with numbers and statistics, while qualitative research deals with words and meanings.

Quantitative methods allow you to systematically measure variables and test hypotheses. Qualitative methods allow you to explore concepts and experiences in more detail.

In mixed methods research, you use both qualitative and quantitative data collection and analysis methods to answer your research question.

The research methods you use depend on the type of data you need to answer your research question.

- If you want to measure something or test a hypothesis, use quantitative methods. If you want to explore ideas, thoughts and meanings, use qualitative methods.

- If you want to analyze a large amount of readily available data, use secondary data. If you want data specific to your purposes with control over how it is generated, collect primary data.

- If you want to establish cause-and-effect relationships between variables, use experimental methods. If you want to understand the characteristics of a research subject, use descriptive methods.

Data collection is the systematic process by which observations or measurements are gathered in research. It is used in many different contexts by academics, governments, businesses, and other organizations.

There are various approaches to qualitative data analysis, but they all share five steps in common:

- Prepare and organize your data.

- Review and explore your data.

- Develop a data coding system.

- Assign codes to the data.

- Identify recurring themes.

The specifics of each step depend on the focus of the analysis. Some common approaches include textual analysis, thematic analysis, and discourse analysis.
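The five steps above can be sketched in Python, with the caveat that real qualitative coding is interpretive and rarely reduces to keyword matching. The excerpts, the codebook, and the function name `assign_codes` are all hypothetical; step 2 (reviewing and exploring the data) is a manual reading step and has no counterpart in the code.

```python
from collections import Counter

# Step 1: prepare and organize the data (hypothetical interview excerpts).
excerpts = [
    "The lectures are engaging, but feedback on assignments is slow.",
    "I rarely get feedback, and the schedule is exhausting.",
    "Group projects make the schedule hard to manage.",
]

# Step 3: a hypothetical coding system mapping each code to indicator keywords.
codebook = {
    "feedback": ["feedback"],
    "workload": ["schedule", "exhausting"],
    "teaching": ["lectures"],
}


def assign_codes(text, codebook):
    """Step 4: return the sorted codes whose keywords appear in the text."""
    lowered = text.lower()
    return sorted(
        code
        for code, words in codebook.items()
        if any(word in lowered for word in words)
    )


coded = [(text, assign_codes(text, codebook)) for text in excerpts]

# Step 5: count how many excerpts carry each code to surface recurring themes.
theme_counts = Counter(code for _, codes in coded for code in codes)
print(theme_counts.most_common())
```

In practice a researcher would revise the codebook repeatedly while reading, so the keyword lists here stand in for judgments that are normally made by hand.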

A research project is an academic, scientific, or professional undertaking to answer a research question. Research projects can take many forms, such as qualitative or quantitative, descriptive, longitudinal, experimental, or correlational. What kind of research approach you choose will depend on your topic.

Streefkerk, R. (2023, June 22). Qualitative vs. Quantitative Research | Differences, Examples & Methods. Scribbr. Retrieved June 29, 2024, from https://www.scribbr.com/methodology/qualitative-quantitative-research/

Collaboration, information literacy, writing process, how to frame research questions, literature reviews, & citations: qualitative vs. quantitative.

- © 2023 by Joseph M. Moxley - University of South Florida

This assignment will guide you through the analysis of how qualitative, quantitative, and mixed-methods researchers frame research questions, construct literature reviews, and integrate citations. You will engage in discourse analysis, rhetorical analysis, and citation analysis of one qualitative study and one quantitative or mixed-methods research study. This creative challenge will help you understand the different methodologies and the scholarly conventions in Professional and Technical Communication.

- Research Questions: Explore how the formulation of research questions differs between qualitative and quantitative or mixed-methods studies. Consider whether these questions are open-ended or specific, reflecting the underlying methodologies.

- Literature Reviews: Analyze how authors frame their literature reviews, emphasizing themes and sources. Consider how these reviews set the stage for the research questions or hypotheses.

- Citation Analysis: Evaluate how researchers use citations to support their arguments and research questions, noting the conventions followed and their alignment with audience expectations.

- Methodological Alignment: Assess how research questions align with qualitative or quantitative approaches, and how literature reviews and citations support the formulation of hypotheses.

Why Do Different Research Methodologies Frame Research Questions, Literature Reviews, and Citations Differently?

In research methods, understanding why different methodologies frame research questions, literature reviews, and citations differently is crucial. These differences are deeply rooted in contrasting epistemological assumptions about knowledge construction. Qualitative research, informed by interpretivist and constructivist epistemologies, explores subjective meanings and interpretations through methods like interviews, observations, and textual analysis. Researchers using these approaches view knowledge as socially constructed, emphasizing the contextual and subjective nature of understanding.

Conversely, quantitative research, rooted in positivist or post-positivist epistemologies, seeks objective measurement and hypothesis testing. Positivist epistemology emphasizes empirical observation and hypothesis verification, assuming an objective reality that can be measured and confirmed. Post-positivist epistemology extends this by acknowledging the role of interpretation and multiple perspectives in constructing knowledge, integrating critical perspectives into empirical inquiry.

These methodological differences significantly influence how researchers approach and interpret their findings in academic and professional contexts. Language practices, such as the formulation of research questions, the construction of literature reviews, and the use of citations, reflect these underlying epistemological assumptions. Qualitative researchers frame open-ended questions to delve into complex phenomena, reflecting their belief in the constructed nature of knowledge. Their literature reviews emphasize thematic analysis and the interpretation of texts to uncover underlying meanings, often citing sources that contribute to theoretical understanding.

In contrast, quantitative researchers frame specific, measurable questions aimed at testing hypotheses and generalizing findings. Their literature reviews synthesize empirical studies and statistically analyze data to validate or refute hypotheses, prioritizing citations that provide empirical evidence and support for their claims.

By understanding these epistemological foundations and their influence on language practices, researchers can navigate and critically evaluate different methodological approaches to research in Professional and Technical Communication and beyond.

Writing Prompt

Choose one qualitative study and one quantitative or mixed-methods research study from Jason Tham’s list of PTC Journals, listed below. Reflect on the differences and similarities in how qualitative and quantitative or mixed-methods researchers frame their research questions, literature reviews, and use of citations.

Guidelines:

- Do not engage in methodological critique for this assignment.

- Demonstrate an awareness of the research terms and conventions defined in the assigned readings and from the first creative challenge.

- Adopt a professional writing style.

- Before each of the studies you analyze, provide a full bibliographic reference in APA 7 style.

Recommended Readings:

- Scholarship as a Conversation – Explores the ongoing dialogues shaping scholarly research.

- Credibility & Authority – Guides on establishing credibility and authority in academic writing and research.

- Citation – How to Connect Evidence to Your Claims

- Citation & Voice – How to Distinguish Your Ideas from Your Sources

- Citation Conventions – What is the Role of Citation in Academic & Professional Writing?

- Citation Conventions – When Are Citations Required in Academic & Professional Writing?

- Paraphrasing – How to Paraphrase with Clarity & Concision

- Block Quotations

- Summary – Learn How To Summarize Sources in Academic & Professional Writing

Deliverables

- A comparative analysis paper of 4-5 pages

- A reflection on your processes responding to this creative challenge, including a summary of how you used AI tools.

Step 1 – Select Two Studies to Analyze

Review the list of academic and professional journals below, which has been compiled and annotated by Professor Jason Tham, an associate professor of technical communication and rhetoric at Texas Tech. To log on to many of these journals, because they are locked behind a paywall, you may need to log on to the University’s Library Services portal.

Journal of Business and Technical Communication (SAGE)

- Nature: Theory driven; seems to balance qualitative and quantitative research

- Focus: Technical and business communication practices and pedagogy; discussions about training students to be professionals; some useful teaching strategies and cases

- Notes: Currently one of the top journals in technical communication; arguably most cited; has a strong tie to Iowa State’s professional communication program

Journal of Technical Writing and Communication (SAGE)

- Nature: Slightly less theoretical than JBTC and TCQ but still heavy academic-speak

- Focus: Trends and approaches in technical communication practices and research

- Notes: One of the oldest technical communication journals in the US

Technical Communication (Society for Technical Communication)

- Nature: Arguably more practical than JTWC, JBTC, TCQ, and IEEE Transactions; caters to STC’s professional audience… and it’s associated with the STC’s annual summit

- Focus: Emerging topics, methods, and practices in technical communication; content management, information architecture, and usability research

- Notes: It’s behind a paywall some university libraries may not even access; there is an online version of the journal called Technical Communication Online… but it’s not as prominent as the print journal; seems to have a strong association with Texas Tech’s technical communication program

Technical Communication Quarterly (Association for Teachers of Technical Writing) (Taylor & Francis)

- Nature: Theoretical + pedagogical

- Focus: Teaching methods and exemplary approaches to research; features many exemplary qualitative research cases

- Notes: Another top journal in technical communication; produces many award-winning pieces; associated with ATTW so it has a huge academic following… especially those who also attend the annual Conference on College Composition and Communication (CCCC)

IEEE Transactions on Professional Communication (Institute of Electrical & Electronics Engineers – Professional Communication Society)

- Nature: 50-50 theory and practice

- Focus: Engineering communication as professional communication; empirical research

- Notes: Another old journal that has a lot of history; seems to have a strong tie to the University of North Texas’s technical communication department

IEEE Transactions on Technology and Society (IEEE Society on Social Implications of Technology)

- Nature: 30% technical, 70% philosophical discussions about social technologies

- Focus: Computer science, CS education, technical design, social computing

- Notes: Good for interdisciplinary work, digital humanities, and digital education

Communication Design Quarterly (Association for Computing Machinery – Special Interest Group on Design of Communication)

- Nature: Theoretical, methodological

- Focus: Offers many accessible (comprehensible) research reports on design methods, research practices, teaching approaches, and industry trends

- Notes: Open access…yay! Recently pursued an “online first” model where articles are published on a rolling basis; it’s considered the second-tier journal in the academic circle but it’s surely becoming more popular among technical communication scholars

Journal of Usability Studies (User Experience Professionals Association)

- Nature: For academics, this is highly practical

- Focus: Empirical research; mostly quantitative

- Notes: Independent journal not associated with an academic institution

Behaviour and Information Technology (Taylor & Francis)

- Nature: Computer science emphasis… so, experimental + theoretical

- Focus: Human-computer interaction; information design, behavioral science

- Notes: This is a UK journal… provides a nice juxtaposition to US journals and perspectives

Human Factors: The Journal of the Human Factors and Ergonomics Society (SAGE)

- Nature: Similar to BIT, experimental and theoretical

- Focus: Puts emphasis on the human factors and ergonomics discipline; draws from psychology

- Notes: As shown in its name… it’s a journal for the Human Factors and Ergonomics Society

Ergonomics in Design: The Quarterly of Human Factors Applications (SAGE)

- Nature: Slightly more theoretical than Human Factors

- Focus: Theoretical discussions, experiments, and demonstrations

- Notes: Also an HFES journal

International Journal of Human-Computer Studies (Elsevier)

- Nature: Theoretical

- Focus: More interdisciplinary than EID and Human Factors

- Notes: May be one that technical communication researchers feel more comfortable publishing in even if they are not working directly in HCI or computer science fields

Human Technology (Independent journal)

- Nature: Theoretical, philosophical

- Focus: Discusses technological futures and human-computer interaction

- Notes: It’s got less prestige compared to EID and Human Factors

Human Communication & Technology (Independent journal)

- Nature: Theoretical, empirical

- Focus: Communication studies and social technologies

- Notes: It’s fairly new and doesn’t seem to publish multiple issues a year

Journal of Computer-Mediated Communication (International Communication Association) (Oxford)

- Nature: Empirical; qualitative; quantitative

- Focus: Social scientific approach to computer-based communication; media studies and politics; social media research

- Notes: Top journal for solid communication technologies research

International Journal of Sociotechnology and Knowledge Development (IGI Global)

- Nature: Empirical; qualitative; quantitative; practical

- Focus: Social scientific approach to technology studies and professional communication; seems catered to practitioner audience

- Notes: Has an interdisciplinary feel to it; one or two special issues are of specific interest to technical communication design

Business and Professional Communication Quarterly (SAGE)

- Nature: Theoretical, pedagogical

- Focus: Workplace communication studies and teaching cases

- Notes: A journal of the Association for Business Communication (ABC); top tiered for business writing and communication research

International Journal of Business Communication (SAGE)

- Nature: Practical, pedagogical, experimental

- Focus: Similar focus to BPCQ

- Notes: Also an ABC journal (I am not sure why there is this other journal)

Programmatic Perspectives (Council for Programs in Technical and Scientific Communication)

- Nature: Programmatic, pedagogical

- Focus: Program and curriculum design; teaching issues; professional development of teachers

- Notes: Smaller journal… not sure how big is the readership but it’s got a good reputation

Xchanges: An Interdisciplinary Journal of Technical Communication, Rhetoric, and Writing across the Curriculum (Independent journal)

- Nature: Pedagogical, beginner research, experimental, teaching cases

- Focus: Technical communication, writing studies, rhet/comp, and everything in between!

- Notes: Open access journal with pretty good editorial support; provides mentorship to undergrad and graduate writing; multimedia friendly

RhetTech Undergraduate Journal (Independent journal)

- Nature: Beginner research, undergraduate research

- Focus: Writing studies, rhet/comp, technical communication

- Notes: Open access; print based (PDF) so not very multimedia-friendly

Step 2 – Engage in Critical Analysis

Consider These Heuristic Questions to Guide Your Analysis

a) Research Question Analysis (discourse analysis)

- How do the investigators present their research question(s)? Do they present their research question in the abstract and intro and throughout the study?

- How do the investigators clarify the significance of their research question(s)?

- Based on the research question and definition of its significance and scholarly roots, what methodological community is the research targeting as its primary audience?

- How do the research questions differ in their formulation between the qualitative and quantitative or mixed-methods studies?

- Are the research questions open-ended or specific? How does this reflect the study’s methodology?

- Identify and discuss the research questions or hypotheses in both studies.

- Explain how the research questions align with the qualitative or quantitative approach.

- Reflect on how the literature review supports the formulation of these research questions or hypotheses.

b) Types of Literature Cited (Citation Analysis):

- How do the researchers use citations to build their arguments and support their research questions?

- What citation conventions are followed, and how do they reflect the expectations of the target audience?

- Theoretical works (conceptual frameworks, models, theories)

- quantitative studies?

- qualitative studies?

- Past scholarly conversations?

- Policy documents or industry reports (especially in applied research)

- Original research?

c) Information Literacy Conventions (Rhetorical Analysis)

Authority is constructed & contextual:

- How do the authors address the authority of the sources they cite or use to develop their study or argument?

- Do they evaluate and present the credibility of their sources?

Information Creation as a Process:

- How do the authors describe the research and information creation process?

- Do they acknowledge the iterative nature of research and knowledge development?

- How do they present different formats of information (e.g., raw data, analyzed results, interpretations)?

Information Has Value:

- How do the authors give credit to secondary sources through proper attribution and citation?

Research as Inquiry:

- How do the authors formulate questions based on information gaps or existing conflicting information?

- Do they explain how they determined the scope of their investigation?

- Do they employ research methods appropriate to their inquiry/research questions?

- How do the research questions align with the qualitative, quantitative, or mixed-methods approach?

- How do the literature reviews and citations support the formulation of these research questions or hypotheses?

Scholarship as Conversation:

- How do the authors situate their work within the larger scholarly conversation?

- Do they cite and build upon contributing work of others in their field?

- How do they identify the contribution their work makes to the disciplinary knowledge?

- Do they acknowledge competing perspectives on the issue?

Searching as Strategic Exploration:

- Do the authors describe their search strategies and how they refined them based on initial results?

- How do they demonstrate the use of different types of searching language (e.g., controlled vocabulary, keywords, natural language)?

- Do they discuss how they determined the initial scope of their literature review and adjusted it as necessary?

- How do they show persistence and flexibility in their information gathering process?

d) Rhetorical Appeals

- Appeals to ethos (credibility); any fallacious ethos?

- Appeals to pathos (emotion)

- Appeals to logos (logic)

- Overall rhetorical strategies used to persuade the audience

e) Literature Reviews

- How do the authors of each study frame their literature review? What themes and sources are emphasized?

- How does the literature review set the stage for the research questions or hypotheses?

Scholarly Conversations:

- How do the investigators root their research questions in existing scholarly conversations?

- What hermeneutic methods are used to interpret and integrate prior research?

Literature Review Analysis:

- Identify the main themes and sources cited in the literature review sections of both studies.

- Discuss how the literature review sets the stage for the research questions or hypotheses.

- Compare the depth and breadth of the literature reviews in both types of studies.

Citation Analysis:

- Analyze how the researchers use citations to support their arguments and research questions.

- Discuss the citation conventions followed and their alignment with the expectations of the target audience.

Evaluation Criteria:

- Depth of Analysis: Thorough identification and discussion of key themes, sources, research questions, and citations.

- Comparative Insight: Ability to compare and contrast framing of research questions, literature reviews, and citations.

- Clarity and Coherence: Clear and coherent presentation of ideas with logical flow.

- Reflective Thought: Depth of reflection on implications of research paradigm differences.

Step 2 – Choose 2 Articles from the List of PTC Journals below — 1 Qualitative Study & 1 Quantitative Study.

3. Comparison

Compare and contrast the two articles, considering:

- differences in how quantitative vs. qualitative studies engage with literature

- variations in presenting research questions and their significance

- variations in how each study type uses literature to interpret results

- differences in rhetorical strategies employed.

4. Evaluation

Score each article on:

- Authority (1-4 scale): Assess how well the authors establish their credibility and the legitimacy of their sources

- Clarity (1-4 scale): Evaluate how clearly the authors present their research questions and engage with existing literature. Did the investigators use visual language / data visualizations to clarify and emphasize key concepts?

- Rhetorical Effectiveness (1-4 scale): Evaluate how well the authors use rhetorical strategies to inform and persuade their audience
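The three-criterion rubric above can be recorded in a small data structure so that scores for the two articles are checked against the 1-4 scale and compared side by side. The following is only a minimal sketch: the class name `ArticleScore`, the field names, and the `total` helper are assumptions made for illustration, and the rubric itself does not say that the three scores should be summed.

```python
from dataclasses import dataclass

# The three criteria named in the rubric; the identifiers are hypothetical.
CRITERIA = ("authority", "clarity", "rhetorical_effectiveness")


@dataclass(frozen=True)
class ArticleScore:
    """Scores for one article, each on the rubric's 1-4 scale."""

    title: str
    authority: int
    clarity: int
    rhetorical_effectiveness: int

    def __post_init__(self) -> None:
        # Reject any score outside the 1-4 scale used by the rubric.
        for name in CRITERIA:
            value = getattr(self, name)
            if not isinstance(value, int) or not 1 <= value <= 4:
                raise ValueError(f"{name} must be an integer from 1 to 4, got {value!r}")

    def total(self) -> int:
        # Summing is an assumption of this sketch, not part of the rubric.
        return sum(getattr(self, name) for name in CRITERIA)


def compare(first: ArticleScore, second: ArticleScore) -> dict:
    """Return the per-criterion difference (first minus second)."""
    return {name: getattr(first, name) - getattr(second, name) for name in CRITERIA}
```

For example, `compare(ArticleScore("Qualitative study", 3, 4, 2), ArticleScore("Quantitative study", 4, 3, 2))` shows on which criteria the two studies differ, which can feed the comparison step.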


'Qualitative' and 'quantitative' methods and approaches across subject fields: implications for research values, assumptions, and practices

- Open access

- Published: 30 September 2023

- Volume 58, pages 2357–2387 (2024)


- Nick Pilcher (ORCID: orcid.org/0000-0002-5093-9345)

- Martin Cortazzi


There is considerable literature showing the complexity, connectivity and blurring of 'qualitative' and 'quantitative' methods in research. Yet these concepts are often represented in a binary way as independent dichotomous categories. This is evident in many key textbooks which are used in research methods courses to guide students and newer researchers in their research training. This paper analyses such textbook representations of 'qualitative' and 'quantitative' in 25 key resources published in English (supported by an outline survey of 23 textbooks written in German, Spanish and French). We then compare these with the perceptions, gathered through semi-structured interviews, of university researchers (n = 31) who work in a wide range of arts and science disciplines. The analysis of what the textbooks say compared to what the participants report they do in their practice shows some common features, as might be assumed, but there are significant contrasts and contradictions. The differences tend to align with some other recent literature to underline the complexity and connectivity associated with the terms. We suggest ways in which future research methods courses and newer researchers could question and positively deconstruct such binary representations in order to free up directions for research in practice, so that investigations can use quantitative approaches, qualitative approaches, or both in more nuanced practices that are appropriate to the specific field and given context of investigations.


1 Introduction: qualitative and quantitative methods, presentations, and practices

Teaching in research methods courses for undergraduates, postgraduates and newer researchers is commonly supported or guided through textbooks with explanations of 'qualitative' and 'quantitative' methods and cases of how these methods are employed. Student dissertations and theses commonly include methodology chapters closely aligned with these textbook representations. Unexceptionally, dissertations and theses we supervise and examine internationally have methodology chapters and frequently these consider rationales and methods associated with positivist or interpretivist paradigms. Within such positivist or interpretivist frameworks, research approaches are amplified with elaborations of the rationale, the methods, and reasons for their choice over likely alternatives. In an apparent convention, related data are assigned as quantitative or qualitative in nature, with associated labelling as ‘numerical’ or ‘textual'. The different types of data yield different values and interpretive directions, and are clustered conceptually with particular research traditions, approaches, and fields or disciplines. Frequently, these clusters are oriented around 'quantitative' and 'qualitative' conceptualizations.

This paper seeks to show how ‘qualitative’ and ‘quantitative’, whether stereotyped or more nuanced, as binary divisions as presented in textbooks and published resources describing research methods may not always accord with the perceptions and day-to-day practices of university researchers. Such common binary representations of quantitative and qualitative and their associated concepts may hide complexities, some of which are outlined below. Any binary divide between ‘qualitative’ and ‘quantitative’ needs caution to show complexity and awareness of disparities with some researchers’ practices.

To date, as far as the present authors are aware, no study has first identified a range of binary representations of ‘quantitative’ and ‘qualitative’ methods and approaches in a literature review study of the many research methods textbooks and sources which guide students and then, secondly, undertaken an interview study with a range of established participant researchers in widely divergent fields to seek their understandings of ‘quantitative’ and ‘qualitative’ in their own fields. The findings related here complement and extend the complexities and convergences of understanding the concepts in different disciplines. Arguably, this paper demonstrates how students and novice researchers should not be constrained in their studies by any binary representations of the terms ‘quantitative’ and ‘qualitative’. They should feel free to use either (or neither) or both in strategic combinations, as appropriate to their fields.

1.1 Presentations

Characteristically, presentations in research methods textbooks distinguish positivist and interpretivist approaches or paradigms (e.g. Guba and Lincoln 1994; Howe 1988; Denzin and Lincoln 2011) or ‘two cultures’ (Goertz and Mahoney 2012) with associated debates or ‘wars’ (e.g. Creswell 1995; Morse 1991). Quantitative data are shown as ‘numbers’ gathered through experiments (Moore 2006) or mathematical models (Denzin and Lincoln 1998), whereas qualitative data are usually words or texts (Punch 2005; Goertz and Mahoney 2012), characteristically gathered through interviews or life stories (Denzin and Lincoln 2011). Regarding analysis, some sources claim that establishing objective causal relationships is key in quantitative analysis (e.g. Goertz and Mahoney 2012) whereas qualitative analysis uses more discursive and interpretative procedures.

Thus, much literature presents research in terms of two generally distinct methods—quantitative and qualitative—which many students are taught in research methods courses. The binary divide may seem to be legitimated in the titles of many academic journals. This division prevails as designated strands of separated research methods in courses which apparently handle both (cf. Onwuegbuzie and Leech 2005 ). Consequently, students may follow this seemingly stereotyped binary view or feel uncomfortable to deviate from it. Arguably, PhD candidates need to demonstrate understanding of such concepts and procedures in a viva—or risk failure (cf. Trafford and Leshem 2002 ). The Cambridge Dictionary defines ‘quality’ as “how good or bad something is”; while ‘quantity' is “the amount or number of something, especially that can be measured” (Cambridge 2022 ). But definitions of ‘Qualitative' can be elusive, since “a precise definition of qualitative research, and specifically… its distinctive feature of being “qualitative”, the literature is meager” (Aspers and Corte 2019 , p.139). Some observe a “paradox… that researchers act as if they know what it is, but they cannot formulate a definition” and that “there is no consensus about specific qualitative methods nor… data” (Aspers and Corte 2019 , p40). In general, ‘qualitative research’ is an iterative process to discover more about a phenomenon (ibid.). Elsewhere, 'qualitative’ is defined negatively: "It is research that does not use numbers” (Seale 1999b , p.119). But this oversimplifies and hides possible disciplinary variation. For example, when investigating criminal action, numeric information (quantity) always follows an interpretation (De Gregorio 2014 ), and consequently this is a quantity of a quality (cf. Uher 2022 ).

Indeed, many authorities note the presence of elements of one in the other. For example, in analysis specifically, elements considered to be quantitative, such as statistics, are used in qualitative analysis (Miles and Huberman 1994). More generically, “a qualitative dimension is present in quantitative work as well” (Aspers and Corte 2019, p.139). In ‘mixed methods’ research (cf. Tashakkori et al. 1998; Johnson et al. 2007; Teddlie and Tashakkori 2011) many researchers ‘mix’ the two approaches (Seale 1999a; Mason 2006; Dawson 2019), either using multiple methods concurrently, or doing so sequentially. Mixed method research logically depends on prior understandings of quantitative and qualitative concepts but this is not always obvious (e.g. De Gregorio 2014); for instance, Heyvaert et al. (2013) define mixed methods as combining quantitative and qualitative items, but these key terms are left undefined. Some commentators characterize such mixing as a skin, not a sweater to be changed every day (Marsh and Furlong 2002, cited in Grix 2004). In some disciplines, these terms are often blurred, interchanged or conjoined. In sociology, for instance, “any quality can be quantified. Any quantity is a quality of a social context, quantity versus quality is therefore not a separation” (Hanson 2008, p.102) and characterizing quantitative as ‘objective’ and qualitative as ‘subjective’ is held to be false when seeking triangulation (Hanson 2008). Additionally, approaches to measuring and generating quantitative numerical information can differ in social sciences compared to physics (Uher 2022). Indeed, quantity may consist of ‘a multitude’ of divisible aspects and a ‘magnitude’ for indivisible aspects (Uher 2022). Notably, “the terms ‘measurement’ and ‘quantification’ have different meanings and are therefore prone to jingle-jangle fallacies” (Uher 2022) where individuals use the same words to denote different understandings (cf. Bakhtin 1986). Comparatively, the words ‘unit’ and ‘scale’ are multitudinous in different sciences, and the key principles of numerical traceability and data generation traceability arguably need to be applied more to social sciences and psychology (Uher 2022). The interdependence of the terms means any quantity is grounded in a quality of something, even if the inverse does not always apply (Uher 2022).

1.2 Practices

The present paper compares representations found in research methods textbooks with the reported practices of established researchers given in semi-structured interviews. The differences revealed between what the literature review of methods texts showed and what the interview study showed both underline and extend this complexity, with implications for how such methodologies are approached and taught. The interview study data (analysed below) show that many participant researchers in disciplines commonly located within an ostensibly ‘positivist’ scientific tradition (e.g. chemistry) are, in fact, using qualitative methods as scientific procedures (contra Tashakkori et al. 1998; Guba and Lincoln 1994; Howe 1988; Lincoln and Guba 1985; Teddlie and Tashakkori 2011; Creswell 1995; Morse 1991). These interview study data also show that many participant researchers use what they describe as qualitative approaches to provide initial measurements (geotechnics; chemistry) of phenomena before later using quantitative procedures to measure the quantity of a quality (cf. Uher 2022). Some participant researchers also say they use quantitative procedures to reveal data which they subsequently interpret and understand using qualitative approaches (biology; dendrology), through their creative imaginations or experience (contra e.g. Hammersley 2013). Participant researchers in ostensibly ‘positivist’ areas describe themselves as doubting ‘facts’ measured by machines programmed by humans (thus showing they feel researchers are not outside the world looking in (contra e.g. Punch 2005)) or doubting the certainty of quantitative data over time (contra e.g. Punch 2005). Critically, the interview study data show that these participant researchers often engage in debate over what a ‘number’ is and the extent to which ‘numbers’ can be considered ‘quantitative’. For example, the data show how a mathematician considers that many individuals do not know what they mean by the word ‘quantitative’, and an engineer interprets any numbers involving human judgements as ‘qualitative’. Further, both a chemist and a geotechnician routinely define and use ‘qualitative’ methods and analysis to arrive at numerical values (contra Davies and Hughes 2014; Denzin and Lincoln 2011).

Such data refute many textbook and key source representations of quantitative and qualitative as being binary and separately ringfenced entities as shown in the literature review study below (contra e.g. Punch 2005 ; Goertz and Mahoney 2012 ). Nevertheless, they resonate with much recent and current literature in the field (e.g. Uher 2022 ; De Gregorio 2014 ). They also arguably extend the complexities of the terms and approaches. In some disciplines, these participant researchers only do a particular type of research and never need anything other than clear ‘quantitative’ definitions (Mathematics), and some only ever conduct research involving text and never numbers (Literature). Moreover, some participant researchers consider certain aspects lie outside the ‘qualitative’ or ‘quantitative’ (the theoretical in German Literature), or do research which they maintain does not contain ‘knowledge’ (Fine-Art Sculpture), while others outline how they feel they do foundational conceptual research which they believe comes at a stage before any quantity or quality can be assessed (Philosophy). Indeed, of the 31 participant researchers we spoke to, nine of them considered the terms ‘quantitative’ and ‘qualitative’ to be of little relevance for their subject.

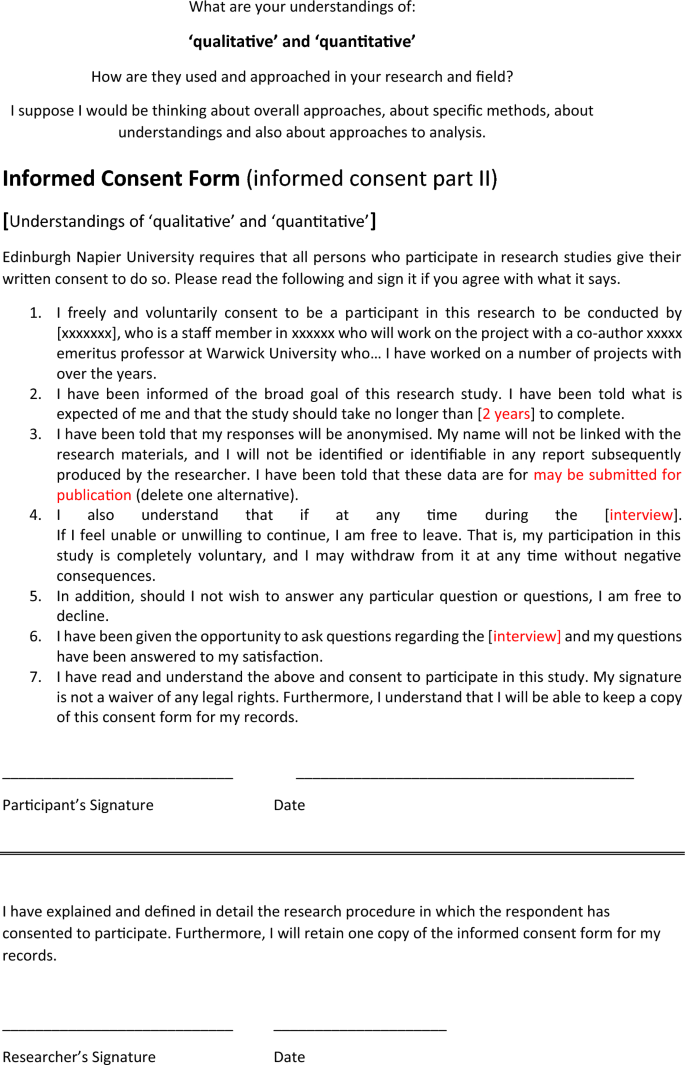

1.3 Outline of the two studies

This paper reports and discusses findings from a constructivist grounded approach interview study that interviewed experienced participant researchers (N = 31) in various disciplines (see Table 1 below) about their understandings of ‘qualitative’ and ‘quantitative’ in their subject areas. Findings from this interview study were compared with findings from a research methods literature review study that revealed many disparities with received and often binary presentations of the concepts in much key literature that informs student research methods courses. In this section we outline the review criteria, the method of analysis, and our findings. The findings are grouped according to how the sources reviewed consider ‘quantitative’ and ‘qualitative’ approaches: the aspects of positivism and constructivism; the nature of research questions; research methods; analysis; issues of reliability, validity and generalizability; and the value and worth of the different approaches. Following this, we outline the approach, method, and procedure adopted for the interviews with research participants; sampling and saturation; and analysis; beside details of the participant researchers. Subsequently, Theme 2 focuses on contrasts of the interview data with ‘binary’ textbook and key source representations. Theme 3 focuses on what the interview data show about participant researcher perceptions of the value of ‘quantitative’ and ‘qualitative’ methods and approaches. This section outlines where, how, and sometimes why, participant researchers considered ‘quantitative’ and ‘qualitative’ methods and approaches to be (or to not be) useful to them. These interview study findings show a surprising range of understandings, usage, and often perceived irrelevance of the terms. In the Discussion section, these findings form the focus of comparison with the literature as well as a consideration of possible implications for approaching and teaching research methods. In the conclusion we summarise the implications for research methods courses and for researchers in different disciplines and interdisciplinary contexts, discuss limitations, and suggest future research. Besides adding to the debate on how ‘quantitative’ and ‘qualitative’ are conceptualized and how they are related, the paper appeals to those delivering research methods courses and to novice researchers to consider the concepts as highly complex and overlapping, to loosen constraints, and elaborate nuances of the commonplace binary representations of the terms.

2 Literature review study: some key textbooks and sources for teaching Research Methods

2.1 Review criteria

To identify how concepts are presented in key materials we undertook a literature review study by consulting research methods course reading lists, library search engines, physically available shelves in institutional libraries, and Google Scholar. We wanted to encompass textbooks and some key texts which are recommended to UG, PG Masters and PhD students, for example, ‘textbooks’ like ‘Doing Your Research Project: A Guide for first-time researchers’ (Bell and Waters 2014) and ‘Introduction to Research Methods: A Practical Guide for Anyone Undertaking a Research project (5th Edition)’ (Dawson 2019). Such sources were frequently mentioned on reading lists and are freely available in many institutional libraries. We consulted seminal thinkers who have published widely on research methods, such as Denzin and Lincoln, or Creswell, but we also considered texts which are likely less known such as ‘A tale of two cultures’ (Goertz and Mahoney 2012) and key articles such as ‘Five misunderstandings about case-study research’ (Flyvbjerg 2006). Students can freely find such sources, and are easily directed to them by supervisors. Although a more comprehensively robust search is possible, we nevertheless followed procedures and standard criteria for literature reviews (Atkinson et al. 2015).

3 Method of analysis

We assembled a total of 25 sources to look for a number of key tenets. We examined the sources for occurrence of the following: whether quantitative was described as positivist and qualitative was described as constructivist; whether quantitative was said to be science-based and qualitative was more reflective and non-science based; whether the research questions were presented as predetermined in quantitative methods and initially less focused in qualitative methods; whether quantitative methods were structured and qualitative methods were discussed as less structured; whether quantitative analysis focused on cause-effect type relationships and qualitative analysis was more exploratory; whether reliability, validity and generalizability were achieved through large numbers in quantitative research and through in-depth study in qualitative research; whether for particular subjects such as the sciences quantitative approaches were perceived to be of value (and qualitative was implied to have less value) and whether the converse was the case for other subjects such as history and anthropology; and whether mixed methods were considered possible or not possible. The 25 sources are detailed in Appendix 1 . As a confirmatory but less detailed exercise, and also detailed in Appendix 1 , we checked a further 23 research methods textbooks in German, Spanish and French, authored in those languages (rather than translations from English).

3.1 Findings

Overall, related to what quantitative and qualitative approaches, methods and analysis are, we found many key, often binary representations in this literature review. We outline these here below.

3.2 Positivism and constructivism

Firstly, 20 of the sources we reviewed stated that quantitative is considered positivist, and qualitative constructivist (e.g. Tashakkori et al. 1998; Guba and Lincoln 1994; Howe 1988; Lincoln and Guba 1985; Teddlie and Tashakkori 2011; Creswell 1995; Morse 1991). Even if not everyone doing quantitative research (e.g. in sociology) considers themselves a positivist (Marsh 1979), it is generally held that quantitative research is positivist. Here, 12 of the sources noted that quantitative is considered ‘scientific’, situating observers outside the world looking in, e.g. through gathering numerical data (Punch 2005; Davies and Hughes 2014) whereas qualitative “locates the observer in the world” (Denzin and Lincoln 2011, p.3). Quantitative researchers “collect facts and study the relationship of one set of facts to another”, whereas qualitative researchers “doubt whether social ‘facts’ exist and question whether a ‘scientific’ approach can be used when dealing with human beings” (Bell and Waters 2014, p.9).

3.3 The nature of research questions

Secondly, regarding research questions, “qualitative research… typically has… questions and methods… more general at the start, and… more focused as the study progresses” (Punch 2005 , p.28). In contrast, quantitative research uses “numerical data and typically… structured and predetermined research questions, conceptual frameworks and designs” (Punch 2005 , p.28). Of the sources we reviewed, 16 made such assertions. This understanding relates to type, and nature, of data, which is in turn anchored to particular worldviews. Punch ( 2005 , p 3–4) writes of how “in teaching about research, I find it useful to approach the qualitative-quantitative distinction primarily through…. the nature of the data. Later, the distinction can be broadened to include …. ways of conceptualising the reality being studied, and methods.” Here, the nature of data influences approach: numbers are for quantitative, and not-numbers (commonly words) for qualitative. Similarly, for Miles et al. ( 2018 ) “the nature of qualitative data” is “primarily on data in the form of words, that is, language in the form of extended text” (Miles et al. 2018 , no page). These understandings in turn relate to methods used.

Commonly, specific types of methods are said to be related to the type of approach adopted, and 18 of the sources we reviewed presented quantitative methods as being structured, and qualitative methods as less structured. For example, Davies and Hughes (2014, p.23) claim “there are two principal options open to you: 1… quantitative research methods, using the traditions of science. 2… qualitative research, employing a more reflective or exploratory approach.” Here, quantitative methods are “questionnaires or structured interviews” whereas qualitative methods are “such as interviews or focus groups” (Dawson 2019, no page given). Quantitative methods are presented as more scientific: they involve controlling a set of variables and may involve experiments. By contrast, “qualitative researchers are agreed in their opposition to this definition of scientific research, or at least its application to social inquiry” (Hammersley 2013, p. ix). As Punch notes (2005, p.208), “the experiment was seen as the basis for establishing cause-effect relationships between variables, and its outcome (and control) variables had to be measured.”

4.1 Analysis

Such understandings often relate to analysis, and 16 of the sources we reviewed presented quantitative analysis as being statistical and number related, and qualitative analysis as being text based. With quantitative methods, “the data is subjected to statistical analysis, using techniques… likely to produce quantified, and, if possible, generalizable conclusions” (Bell and Waters 2014, p.281). Qualitative research, however, “calls for advanced skills in data management and text-driven creativity during the analysis and write-up” (Davies and Hughes 2014). Again, the data’s nature is key, and whilst qualitative analysis may condense data, it does not seek numbers. Indeed, “by data condensation, we do not necessarily mean quantification”, however, “occasionally, it may be helpful to convert the data into magnitudes… but this is not always necessary” (Miles et al. 2018, npg). Qualitative analysis may involve stages such as assigning codes, subsequently sorting and sifting them, isolating patterns, then gradually refining any assertions made and comparing them to other literature (Miles et al. 2018). This could involve condensing, displaying, then drawing conclusions from the data (Miles et al. 2018). In this respect, some sources consider qualitative and quantitative analysis broadly similar in overall goals, yet different because quantitative analyses use “well-defined, familiar methods; are guided by canons; and are usually more sequential than iterative or cyclical” (Miles et al. 2018, npg). In contrast, “qualitative researchers are… more fluid and… humanistic” in meaning making (Miles et al. 2018, npg). Here, both approaches seek causation and may attempt to reveal ‘cause and effect’ but qualitative approaches often seek multiple and interacting influences and effects, and are less rigid (Miles et al. 2018). In quantitative inquiry, the search for causation relates to “causal mechanisms (i.e. how did X cause Y)” whereas in “the human sciences, this distinction relates to causal effects (i.e. whether X causes Y)” (Teddlie and Tashakkori 2011, p.286). Similarly, “scientific research in any area… seeks to trace out cause-effect relationships” (Punch 2005, p.78). In contrast, qualitative research seeks interpretative understandings of human behaviour, “not ‘caused’ in any mechanical way, but… continually constructed and reconstructed” (Punch 2005, p.126).

4.2 Issues of reliability, validity and generalizability

Regarding reliability, validity and generalizability, 19 of the sources we reviewed presented ideas along the lines that quantitative research is understood to seek large numbers, so quantitative researchers “use techniques… likely to produce quantified and, if possible, generalizable conclusions” (Bell and Waters 2014, p.9). This means quantitative “research researches many more people” (Dawson 2019, npg). Given quantitative researchers aim “to discover answers to questions through the application of scientific procedures”, it is anticipated these procedures will “increase the likelihood that the information… will be reliable and unbiased” (Davies and Hughes 2014, p.9). Conversely, qualitative researchers are considered “more concerned to understand individuals’ perceptions of the world” (Bell and Waters 2014, p.281) and consequently aim for in-depth data with smaller numbers, “as it is attitudes, behaviour and experiences that are important” (Dawson 2019, npg). Consequently, generalizability of data is not key, as qualitative research has its “emphasis on a specific case, a focused and bounded phenomenon embedded in its context” (Miles et al. 2018, npg). Yet, such research is considered generalizable in theoretical insight if not actual data (Flyvbjerg 2006).

4.3 The value and worth of the different approaches

Regarding ‘value’ and ‘worth’, many see these as related to appropriacy to the question being researched. Thus, if questions involve more quantitative approaches, then these are of value, and if more qualitative, then these are of value, and 6 of the sources we reviewed presented these views (e.g. Bell and Waters 2014; Punch 2005; Dawson 2019). This resonates with disciplinary orientations where given approaches are valued more in specific disciplines. History and Anthropology are seen as more qualitative, whereas Economics and Epidemiology may be more quantitative (Kumar 1996). Qualitative approaches are valuable to study human behaviour and reveal in-depth pictures of peoples’ lived experience (e.g. Denzin and Lincoln 2011; Miles et al. 2018). Many consider there to be no real inherent superiority for one approach over another, and “asking whether quantitative or qualitative research is superior to the other is not a useful question” (Goertz and Mahoney 2012, p.2).

Nevertheless, some give higher pragmatic value to quantitative research for studying individuals and people; neoliberal governments consistently value quantitative over qualitative research (Barone 2007 ; Bloch 2004 ; St Pierre 2004 ). Concomitantly, data produced by qualitative research is criticised by quantitative proponents “because of their problematic generalizability” (Bloor and Wood 2006 , p.179). However, other studies find quantitative researchers see qualitative methods and approaches positively (Pilcher and Cortazzi 2016 ). Some even question the qualitative/quantitative divide, and suggest “a more subtle and realistic set of distinctions that capture variation in research practice better” (Hammersley 2013 , p.99).

The above literature review study of key texts is hardly exhaustive, but shows a general outline of the binary divisions and categorizations that exist in many sources students and newer researchers encounter. Thus, despite the complex and blurred picture as outlined in the introduction above, many key texts students consult and that inform research methods courses often present a binary understanding that quantitative is positivist, focused on determining cause and effect, numerical or magnitude focused, uses experiments, and is grounded in an understanding the world can be observed from the outside in. Conversely, qualitative tends to be constructivist, focused on determining why events occur, is word or textual based (even if these elements are measured by their magnitude in a number or numerical format) and grounded in understanding the researcher is part of the world. The sciences and areas such as economics are said to tend towards the quantitative, and areas such as history and anthropology towards the qualitative.
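The counts reported in the findings above can be read more easily as shares of the 25 English-language sources. The following is only an illustrative sketch: the counts are those stated in the text, while the short labels, the constant names, and the helper functions are paraphrases and assumptions introduced here, not categories or tooling used by the authors.

```python
# Number of English-language sources reviewed, as stated in the text.
TOTAL_SOURCES = 25

# Counts as reported in the findings; the labels are paraphrases for this sketch.
TENET_COUNTS = {
    "quantitative as positivist, qualitative as constructivist": 20,
    "quantitative described as 'scientific'": 12,
    "predetermined vs. initially general research questions": 16,
    "structured vs. less structured methods": 18,
    "statistical vs. text-based analysis": 16,
    "large numbers vs. in-depth study for generalizability": 19,
    "value depends on the question being researched": 6,
}


def share_of_sources(count: int, total: int = TOTAL_SOURCES) -> float:
    """Return the percentage of reviewed sources that made a given claim."""
    if not 0 <= count <= total:
        raise ValueError(f"count must be between 0 and {total}, got {count}")
    return 100 * count / total


def tenet_shares(counts: dict) -> dict:
    """Map each tenet label to its percentage of the reviewed sources."""
    return {label: share_of_sources(n) for label, n in counts.items()}


if __name__ == "__main__":
    # Print the tenets from most to least frequently asserted.
    ranked = sorted(tenet_shares(TENET_COUNTS).items(), key=lambda kv: -kv[1])
    for label, pct in ranked:
        print(f"{pct:5.1f}%  {label}")
```

Read this way, the positivist/constructivist pairing appears in four fifths of the sources, whereas the view that value depends on the research question appears in roughly a quarter.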

We also note that in our literature review study we focused on English language textbooks, but we also looked at outline details, descriptions, and contents lists of texts in the languages of German, Spanish and French. We find that these broadly confirm the perception of a division between quantitative and qualitative research, and we detail a number of these in Appendix 1 . These examples are all research methods handbooks and student guides intended for under and post-graduates in social sciences and humanities; many are inter-disciplinary but some are more specifically books devoted to psychology, health care, education, politics, and management. Among the textbooks and handbooks examined in other languages, more recent books pay attention to online research and uses of the internet, social media and sometimes to big data and software for data analysis.

In these sources in languages other than English we find a massive predominance of two approaches (quantitative/qualitative) or three (adding mixed). These are invariably introduced and examined with related theories, examples and cases in exactly that order: quantitative; qualitative; mixed. Here there is perhaps the unexamined implication that this is the historical order of research method development and also of acceptability of use (depending on research purposes). Notably, Molina Marin (2020) is oriented to Latin America and makes the point that most European writing about research methods is in English or German, while there are far fewer publications in Spanish and few with Latin American contextual relevance, which may limit epistemological perspectives. This point is evident in French and Spanish publications (much less so in German), where bibliographic details seem dominated by English language publications (or translations from them). We now turn to outline our interview study.

5 Interview study

5.1 Approach and choice of method

We approached our interview study from a constructivist standpoint of exploring and investigating different subject specialists’ understandings of quantitative and qualitative. Critically, we were guided by the key constructivist tenet that knowledge is not independent of subjects seeking it (Olssen 1996), nor of subjects using it. Extending from this, we considered interviews more appropriate than narratives or focus groups. Given the exploratory nature of our study, we considered interviews most suited as we wanted to have a free dialogue (cf. Bakhtin 1981) regarding how the terms are understood in their subject contexts as opposed to their neutral dictionary definitions (Bakhtin 1986), and not to focus on a specific point with many individuals. Specifically, we used ‘semi’-structured interviews. ‘Semi’ can mean ‘half in quantity or value’ but also ‘to some extent: partly: incompletely’ (e.g. Merriam Webster 2022). Our interviews, following our constructionist and exploratory approach, aligned with the latter definition (see Appendix 2 for the Interview study schedule). This loose ‘semi’ structure was deliberately designed to (and did) lead to interviews directed by the participants, who themselves often specifically asked what was meant by the questions. This created a highly technical dialogue (Buber 1947) focused on the subject.

5.2 Sampling and saturation

Our sampling combined purposive and snowball sampling (Sharma 2017; Levitt et al. 2018). Initially, participants were purposively identified by subject, given that the project sought to understand different subject perspectives of ‘qualitative’ and ‘quantitative.’ Later, a combined purposive and snowball sampling technique was used whereby participants interviewed were asked if they knew others teaching particular subjects. Participant eligibility was prioritised according to subject, although generally participants also had extensive experience (see Table 1). For most, English was their first language; where it was not, participants were proficient in English. The language of interview choice was English as it was most familiar to both participants and interviewer (Cortazzi et al. 2011).

Regarding saturation, some argue saturation occurs within 12 interviews (Guest et al. 2006), others within 17 (Francis et al. 2010). Arguably, however, saturation cannot be determined in advance of analysis and is “inescapably situated and subjective” (Braun and Clarke 2021, p.201). This critical role of subjectivity and context guided how we approached saturation, whereby it was “operationalized in a way consistent with the research question(s) and the theoretical position and analytic framework adopted” (Saunders et al. 2018, p.1893). We recognise that more could always be found but are satisfied that 31 participants provided sufficient data for our investigation. Indeed, our original intention was to recruit 20 participants, feeling this would provide sufficient saturation (Francis et al. 2010; Guest et al. 2006). However, when we reached 20 we had already started analysis (cf. Braun and Clarke 2021), as we ourselves transcribed the interviews (Bird 2005), and we wanted to explore understandings of ‘qualitative’ and ‘quantitative’ with other subject fields. As Table 1 shows, ‘English Literature’, ‘Philosophy’, and ‘Sculpture’ were only explored after interview 20. These additional subject fields added significantly (see below) to our data.

5.3 Analysis and participant researcher details

Our analysis followed Braun and Clarke’s (2006) thematic analysis. Given the study’s exploratory constructionist nature, we combined ‘top down’ deductive type analysis for anticipated themes, and ‘bottom up’ inductive type analysis for any unexpected themes. The latter was similar to a constructivist grounded theory analysis (Charmaz 2010) whereby the transcripts were explored through close repeated reading for themes to emerge from the bottom up. We deliberately did not use any CAQDAS software such as NVivo as we wanted to manually read the scripts in one lengthy Word document. We recognise that such software could allow us to do this, but we were familiar with the approach we used and have found it effective for a number of years, so we continued to use it here. We counted instances of themes through cross-checking after reading transcripts and discussing them, thereby heightening reliability and validity (Golafshani 2003). All interviews were undertaken with informed consent and participants were assured all representation was anonymous (Christians 2011). The study was approved by relevant ethics committees. Table 1 above shows the subject area, years of experience, and first language of the participant researchers. We also bracket after each subject area whether we consider it to be ‘Science’ or ‘Arts’, or whether we consider it to be ‘Arts/Science’ or ‘Science/Arts’. This is of course subjective and in many ways not possible to do, but we categorised these subjects according to how we feel the methodology sources from the literature review study would categorize them.

5.4 Presentation of the interview study data compared with data from the literature review study

We present our interview study data in the three broad areas that emerged through analysis. Our approach to thematic analysis was to deductively code the interview transcripts manually under three broad areas: where the data align with textbook and key source ‘binary’ representations; where the data contrast with such representations; and where the data relate to interviewee perceptions of the value of ‘qualitative’ and ‘quantitative’. The last of these relates to whether participant researchers expressed views that suggested they considered each approach to be useful, valuable, or not. We also read through the transcripts inductively with a view to being open to emerging and unanticipated themes. For each data citation, we note the subject field to show the range of subject areas. We later discuss these data in terms of their implications for research values, assumptions and practices and for their use when teaching about different methods. We provide illustrative citations and numbers of participant researchers who commented in relation to the key points below, but first provide an overview in Table 2.

5.4.1 Theme 1: Alignments with ‘binary’ textbook and key source representations

The data often aligned with textbook representations. Seven participant researchers explicitly stated, or alluded to, the representation that ‘quantitative’ is positivist and seeks objectivity whereas ‘qualitative’ is more constructivist and subjective. For example: “the main distinction… is that qualitative is associated with subjectivity and quantitative being objective.” This was because “traditionally quantitative methods they’ve been associated with the positivist scientific model of research whereas qualitative methods are rooted in the constructivist and interpretivist model” (Psychology). Similarly, “quantitative methods… I see that as more… logical to a scientific mode of generating knowledge so… largely depends on numbers to establish causal relations… qualitative, I want to more broadly summarize that as anything other than numbers” (Communication Studies). One Statistics researcher had “always associated quantitative research more with statistics and numbers… you measure something… I think qualitative… you make a statement… without saying to what extent so… so you run fast but it’s not clear how fast you actually run…. that doesn’t tell you much because it doesn’t tell you how fast.” One Mathematics participant researcher said mathematics was “super quantitative… more beyond quantitative in the sense that not only is there a measurement of size in everything but everything is defined in… really careful terms… in how that quantity kind of interacts with other quantities that are defined so in that sense it’s kind of beyond quantitative.” Further, this applied at pre-data and data integration stages. Conversely, ‘qualitative’ “would be more a kind of verbalistic form of reasoning or… logic.”

Another representation, noted by four participant researchers, was that ‘quantitative’ has structured predetermined questions whereas ‘qualitative’ has initially general questions that become more focused as research proceeds. For example, in Tourism, “with qualitative research I would go with open ended questions whereas with quantitative research I would go with closed questions.” This was because ‘qualitative’ was more exploratory: “quantitative methods… I would use when the parameters… are well understood, qualitative research is when I’m dealing with topics where I’m not entirely sure about… the answers.” As one Psychology participant researcher commented: “the main assumption in quantitative… is one single answer… whereas qualitative approaches embrace… multiplicity.”

Nineteen participant researchers considered ‘quantitative’ to be numbers whereas ‘qualitative’ was anything except numbers. For example, “quantitative research… you’re generating numbers and the analysis is involving numbers… qualitative is… usually… text-based looking for something else… not condensing it down to numbers” (Psychology). Similarly, ‘quantitative’ was “largely… numeric… the arrangement of larger scale patterns” whereas, “in design field, the idea of qualitative… is about the measure… people put against something… not [a] numerical measure” (Design). One participant researcher elaborated about Biology and Ecology, noting that “quantitative it’s a number it’s an amount of something… associated with a numerical dimension… whereas… qualitative data and… observations… in biology…. you’re looking at electron micrographs… you may want to describe those things… purely in… QUALitative terms… and you can do the same in… Ecology” (Human Computer Interaction). One participant researcher also commented on the magnitude of ‘quantitative’ data often involving more than numbers, or having a complex involvement with numbers: “I was thinking… quantitative… just involves numbers…. but it’s not… if… NVivo… counts the occurrence of a word… it’s done in a very structured way…. to the point that you can even… then do statistical analysis” (Logistics).

Regarding mixed methods, data aligned with the textbook representations that there are two distinct ‘camps’ but also that these could be crossed. Six participants felt opposing camps and paradigms existed. For example, in Nursing, “it does feel quite divided in Nursing I think you’re either a qualitative or a quantitative researcher there’s two different schools… yeah some people in our school would be very anti-qualitative.” Similarly, in Music one participant researcher felt “it is very split and you’ll find… some people position themselves in one or the other of those camps and are reluctant to consider the other side.” In Psychology, “yes… they’re quite… territorial and passionately defensive about the rightness of their own approaches so there’s this… narrative that these two paradigms… of positivistic and interpretivist type… cannot be crossed… you need to belong to one camp.” Also, in Communication Studies, “I do think they are kind of mutually exclusive although I accept… they can be combined… but I don’t think they, they fundamentally… speak to each other.” One Linguistics participant researcher felt some linguists were highly qualitative and never used numbers, but “then you have… the corpus analysts who quantify everything and always under the headline ‘Corpus linguistics finally gets to the point… where we get rid of researcher bias; it objectifies the analysis’ because you have big numbers and you have statistical values and therefore… it’s led by the data not by the researcher.” This participant researcher found such striving for objectivity a “very strange thing” as any choice was based on previously argued ideas, which themselves could not be objective: “because all the decisions that you need to put into which software am I using, which algorithm am I using, which text do I put in…. this is all driven by ideas.”