
Systematic Review | Definition, Example & Guide

Published on June 15, 2022 by Shaun Turney. Revised on November 20, 2023.

A systematic review is a type of review that uses repeatable methods to find, select, and synthesize all available evidence. It answers a clearly formulated research question and explicitly states the methods used to arrive at the answer.

For example, Boyle and colleagues conducted a systematic review of probiotics for eczema. They answered the question “What is the effectiveness of probiotics in reducing eczema symptoms and improving quality of life in patients with eczema?”

In this context, a probiotic is a health product that contains live microorganisms and is taken by mouth. Eczema is a common skin condition that causes red, itchy skin.

Table of contents

- What is a systematic review?

- Systematic review vs. meta-analysis

- Systematic review vs. literature review

- Systematic review vs. scoping review

- When to conduct a systematic review

- Pros and cons of systematic reviews

- Step-by-step example of a systematic review

- Other interesting articles

- Frequently asked questions about systematic reviews

A review is an overview of the research that’s already been completed on a topic.

What makes a systematic review different from other types of reviews is that the research methods are designed to reduce bias. The methods are repeatable, and the approach is formal and systematic:

- Formulate a research question

- Develop a protocol

- Search for all relevant studies

- Apply the selection criteria

- Extract the data

- Synthesize the data

- Write and publish a report

Although multiple sets of guidelines exist, the Cochrane Handbook for Systematic Reviews of Interventions is among the most widely used. It provides detailed guidelines on how to complete each step of the systematic review process.

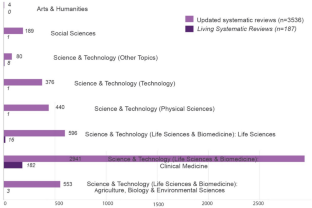

Systematic reviews are most commonly used in medical and public health research, but they can also be found in other disciplines.

Systematic reviews typically answer their research question by synthesizing all available evidence and evaluating the quality of the evidence. Synthesizing means bringing together different information to tell a single, cohesive story. The synthesis can be narrative (qualitative), quantitative, or both.


Systematic reviews often quantitatively synthesize the evidence using a meta-analysis. A meta-analysis is a statistical analysis, not a type of review.

A meta-analysis is a technique to synthesize results from multiple studies. It combines the results of two or more studies, usually to estimate an effect size.
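To make the idea concrete, here is a minimal Python sketch of one common way to combine study results: a fixed-effect meta-analysis with inverse-variance weights. The function name `fixed_effect_pool` and the three effect sizes are hypothetical, and real reviews often need a random-effects model or dedicated statistical software instead.

```python
import math

def fixed_effect_pool(effects, std_errors):
    """Pool study effect sizes with inverse-variance weights.

    Returns the pooled effect and its 95% confidence interval.
    """
    if len(effects) != len(std_errors) or len(effects) < 2:
        raise ValueError("need matching effects and standard errors for 2+ studies")
    # Each study is weighted by the inverse of its variance (1 / SE^2),
    # so more precise studies count for more.
    weights = [1.0 / se ** 2 for se in std_errors]
    pooled = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    pooled_se = math.sqrt(1.0 / sum(weights))
    # 1.96 is the standard-normal critical value for a 95% interval.
    return pooled, (pooled - 1.96 * pooled_se, pooled + 1.96 * pooled_se)

# Hypothetical standardized mean differences and standard errors from three studies.
effect, ci = fixed_effect_pool([-0.30, -0.10, 0.05], [0.15, 0.10, 0.20])
print(f"pooled effect = {effect:.3f}, 95% CI = ({ci[0]:.3f}, {ci[1]:.3f})")
```

Weighting by inverse variance means a large, precise study pulls the pooled estimate more than a small, noisy one.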

A literature review is a type of review that uses a less systematic and formal approach than a systematic review. Typically, an expert in a topic will qualitatively summarize and evaluate previous work, without using a formal, explicit method.

Although literature reviews are often less time-consuming and can be insightful or helpful, they have a higher risk of bias and are less transparent than systematic reviews.

Similar to a systematic review, a scoping review is a type of review that tries to minimize bias by using transparent and repeatable methods.

However, a scoping review isn’t a type of systematic review. The most important difference is the goal: rather than answering a specific question, a scoping review explores a topic. The researcher tries to identify the main concepts, theories, and evidence, as well as gaps in the current research.

Sometimes scoping reviews are an exploratory preparation step for a systematic review, and sometimes they are a standalone project.


A systematic review is a good choice of review if you want to answer a question about the effectiveness of an intervention, such as a medical treatment.

To conduct a systematic review, you’ll need the following:

- A precise question, usually about the effectiveness of an intervention. The question needs to be about a topic that’s previously been studied by multiple researchers. If there’s no previous research, there’s nothing to review.

- A team. Several steps, such as applying the selection criteria and extracting the data, are best done by multiple people working independently. If you’re doing a systematic review on your own (e.g., for a research paper or thesis), you should take appropriate measures to ensure the validity and reliability of your research.

- Access to databases and journal archives. Often, your educational institution provides you with access.

- Time. A professional systematic review is a time-consuming process: it will take the lead author about six months of full-time work. If you’re a student, you should narrow the scope of your systematic review and stick to a tight schedule.

- Bibliographic, word-processing, spreadsheet, and statistical software. For example, you could use EndNote, Microsoft Word, Excel, and SPSS.

Systematic reviews have many pros:

- They minimize research bias by considering all available evidence and evaluating each study for bias.

- Their methods are transparent, so they can be scrutinized by others.

- They’re thorough: they summarize all available evidence.

- They can be replicated and updated by others.

Systematic reviews also have a few cons:

- They’re time-consuming.

- They’re narrow in scope: they only answer the precise research question.

The following sections explain the seven steps for conducting a systematic review, using Boyle and colleagues’ review of probiotics for eczema as a running example.

Step 1: Formulate a research question

Formulating the research question is probably the most important step of a systematic review. A clear research question will:

- Allow you to more effectively communicate your research to other researchers and practitioners

- Guide your decisions as you plan and conduct your systematic review

A good research question for a systematic review has four components, which you can remember with the acronym PICO:

- Population(s) or problem(s)

- Intervention(s)

- Comparison(s)

- Outcome(s)

You can rearrange these four components to write your research question:

- What is the effectiveness of I versus C for O in P?

Sometimes, you may want to include a fifth component, the type of study design. In this case, the acronym is PICOT.

- Type of study design(s)

For example, Boyle and colleagues’ research question had these PICOT components:

- The population of patients with eczema

- The intervention of probiotics

- In comparison to no treatment, placebo, or non-probiotic treatment

- The outcome of changes in participant-, parent-, and doctor-rated symptoms of eczema and quality of life

- Randomized controlled trials, a type of study design

Their research question was:

- What is the effectiveness of probiotics versus no treatment, a placebo, or a non-probiotic treatment for reducing eczema symptoms and improving quality of life in patients with eczema?
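The PICO template can also be treated as a fill-in-the-blanks structure. This minimal Python sketch (the `PICO` class is hypothetical, not part of any standard tool) rebuilds Boyle and colleagues’ question from its components; it leaves out the optional study-design component for brevity.

```python
from dataclasses import dataclass

@dataclass
class PICO:
    """The four PICO components of a systematic review question."""
    population: str
    intervention: str
    comparison: str
    outcome: str

    def question(self) -> str:
        # Follows the template "What is the effectiveness of I versus C for O in P?"
        return (f"What is the effectiveness of {self.intervention} versus "
                f"{self.comparison} for {self.outcome} in {self.population}?")

boyle = PICO(
    population="patients with eczema",
    intervention="probiotics",
    comparison="no treatment, a placebo, or a non-probiotic treatment",
    outcome="reducing eczema symptoms and improving quality of life",
)
print(boyle.question())
```

Writing the components down separately first makes it easier to check that none is missing or vague before you commit to the final wording.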

Step 2: Develop a protocol

A protocol is a document that contains your research plan for the systematic review. This is an important step because having a plan allows you to work more efficiently and reduces bias.

Your protocol should include the following components:

- Background information: Provide the context of the research question, including why it’s important.

- Research objective(s): Rephrase your research question as an objective.

- Selection criteria: State how you’ll decide which studies to include in or exclude from your review.

- Search strategy: Discuss your plan for finding studies.

- Analysis: Explain what information you’ll collect from the studies and how you’ll synthesize the data.
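If you draft your protocol in a structured format, a quick completeness check can catch empty sections before you register it. This is a minimal sketch under assumed section names (`REQUIRED_SECTIONS` and `missing_sections` are hypothetical) that mirror the components listed above; registries have their own required fields.

```python
# Hypothetical section names that mirror the protocol components listed above.
REQUIRED_SECTIONS = (
    "background",
    "research_objectives",
    "selection_criteria",
    "search_strategy",
    "analysis",
)

def missing_sections(protocol: dict) -> list:
    """Return required protocol sections that are absent or left blank."""
    return [name for name in REQUIRED_SECTIONS
            if not protocol.get(name, "").strip()]

# A draft protocol with placeholder text; two sections are still unfinished.
draft = {
    "background": "Why the question matters and what is already known.",
    "research_objectives": "The research question rephrased as an objective.",
    "selection_criteria": "Which studies will be included or excluded, and why.",
    "search_strategy": "   ",
}
print(missing_sections(draft))  # ['search_strategy', 'analysis']
```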

If you’re a professional seeking to publish your review, it’s a good idea to bring together an advisory committee. This is a group of about six people who have experience in the topic you’re researching. They can help you make decisions about your protocol.

It’s highly recommended to register your protocol. Registering your protocol means submitting it to a registry of systematic review protocols, such as PROSPERO.

Step 3: Search for all relevant studies

Searching for relevant studies is the most time-consuming step of a systematic review.

To reduce bias, it’s important to search for relevant studies very thoroughly. Your strategy will depend on your field and your research question, but sources generally fall into these four categories:

- Databases: Search multiple databases of peer-reviewed literature, such as PubMed or Scopus. Think carefully about how to phrase your search terms and include multiple synonyms of each word. Use Boolean operators if relevant.

- Handsearching: In addition to searching the primary sources using databases, you’ll also need to search manually. One strategy is to scan relevant journals or conference proceedings. Another strategy is to scan the reference lists of relevant studies.

- Gray literature: Gray literature includes documents produced by governments, universities, and other institutions that aren’t published by traditional publishers. Graduate student theses are an important type of gray literature, which you can search using the Networked Digital Library of Theses and Dissertations (NDLTD). In medicine, clinical trial registries are another important type of gray literature.

- Experts: Contact experts in the field to ask if they have unpublished studies that should be included in your review.
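Database search strings usually combine synonyms for the same concept with OR and join different concepts with AND. The minimal Python sketch below builds such a string; the function `build_query` and the search terms are hypothetical examples, not Boyle and colleagues’ actual strategy. The truncation symbol (`*`) and phrase syntax vary between databases, so check each database’s help pages.

```python
def build_query(concepts: list) -> str:
    """Combine synonyms with OR inside each concept and join concepts with AND."""
    blocks = []
    for synonyms in concepts:
        # Quote multi-word phrases so the database treats them as one phrase.
        terms = [f'"{t}"' if " " in t else t for t in synonyms]
        blocks.append("(" + " OR ".join(terms) + ")")
    return " AND ".join(blocks)

query = build_query([
    ["eczema", "atopic dermatitis"],
    ["probiotic*", "lactobacillus", "bifidobacterium"],
])
print(query)
# (eczema OR "atopic dermatitis") AND (probiotic* OR lactobacillus OR bifidobacterium)
```

Grouping synonyms in parentheses keeps each concept’s OR terms from leaking into the AND logic.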

At this stage of your review, you won’t read the articles yet. Simply save any potentially relevant citations using bibliographic software, such as Scribbr’s APA or MLA Generator.

For example, Boyle and colleagues searched these sources:

- Databases: EMBASE, PsycINFO, AMED, LILACS, and ISI Web of Science

- Handsearch: Conference proceedings and reference lists of articles

- Gray literature: The Cochrane Library, the metaRegister of Controlled Trials, and the Ongoing Skin Trials Register

- Experts: Authors of unpublished registered trials, pharmaceutical companies, and manufacturers of probiotics

Step 4: Apply the selection criteria

Applying the selection criteria is a three-person job. Two of you will independently read the studies and decide which to include in your review based on the selection criteria you established in your protocol. The third person’s job is to break any ties.

To increase inter-rater reliability, ensure that everyone thoroughly understands the selection criteria before you begin.
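One common way to quantify inter-rater reliability for include/exclude decisions is Cohen’s kappa, which corrects the raw agreement rate for the agreement expected by chance. The article doesn’t prescribe a statistic, so treat this minimal Python sketch (with hypothetical screening decisions) as one option.

```python
def cohens_kappa(rater_a: list, rater_b: list) -> float:
    """Cohen's kappa: agreement between two raters, corrected for chance."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and the same length")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: how often the raters would match if each chose categories
    # at random with the frequencies they actually used.
    categories = set(rater_a) | set(rater_b)
    expected = sum((rater_a.count(c) / n) * (rater_b.count(c) / n) for c in categories)
    if expected == 1:
        return 1.0  # both raters used one identical category throughout
    return (observed - expected) / (1 - expected)

# Hypothetical decisions by two reviewers on the same ten abstracts.
reviewer_1 = ["include", "include", "exclude", "exclude", "exclude",
              "include", "exclude", "exclude", "include", "exclude"]
reviewer_2 = ["include", "exclude", "exclude", "exclude", "exclude",
              "include", "exclude", "include", "include", "exclude"]
print(f"{cohens_kappa(reviewer_1, reviewer_2):.2f}")  # 0.58
```

A kappa of 1 means perfect agreement and 0 means agreement no better than chance. Here the reviewers agree on 8 of 10 abstracts, but kappa is about 0.58 once chance agreement is accounted for.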

If you’re writing a systematic review as a student for an assignment, you might not have a team. In this case, you’ll have to apply the selection criteria on your own; you can mention this as a limitation in your paper’s discussion.

You should apply the selection criteria in two phases:

- Based on the titles and abstracts: Decide whether each article potentially meets the selection criteria based on the information provided in its title and abstract.

- Based on the full texts: Download the articles that weren’t excluded during the first phase. If an article isn’t available online or through your library, you may need to contact the authors to ask for a copy. Read the articles and decide which articles meet the selection criteria.

It’s very important to keep a meticulous record of why you included or excluded each article. When the selection process is complete, you can summarize what you did using a PRISMA flow diagram.
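Keeping a structured record for each citation makes the PRISMA counts easy to produce. This minimal Python sketch assumes a hypothetical `ScreeningRecord` structure and tallies only a simplified subset of the boxes in a PRISMA flow diagram (it ignores, for example, duplicate removal and reports that couldn’t be retrieved).

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional

@dataclass
class ScreeningRecord:
    """One citation's path through the two screening phases (hypothetical structure)."""
    citation_id: str
    excluded_at: Optional[str] = None  # "title/abstract", "full text", or None if included
    reason: Optional[str] = None       # recorded reason for exclusion

def prisma_counts(records: list) -> dict:
    """Tally a simplified subset of the numbers shown in a PRISMA flow diagram."""
    after_abstracts = [r for r in records if r.excluded_at != "title/abstract"]
    excluded_full = [r for r in after_abstracts if r.excluded_at == "full text"]
    return {
        "records screened": len(records),
        "excluded on title/abstract": len(records) - len(after_abstracts),
        "full texts assessed": len(after_abstracts),
        "full texts excluded, by reason": dict(Counter(r.reason for r in excluded_full)),
        "studies included": len(after_abstracts) - len(excluded_full),
    }

# Hypothetical screening log for five citations.
records = [
    ScreeningRecord("A"),
    ScreeningRecord("B", "title/abstract", "not about eczema"),
    ScreeningRecord("C", "full text", "not randomized"),
    ScreeningRecord("D", "full text", "not randomized"),
    ScreeningRecord("E"),
]
for stage, count in prisma_counts(records).items():
    print(f"{stage}: {count}")
```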

After the title-and-abstract phase, Boyle and colleagues found the full texts for each of the remaining studies. Boyle and Tang read through the articles to decide if any more studies needed to be excluded based on the selection criteria.

When Boyle and Tang disagreed about whether a study should be excluded, they discussed it with Varigos until the three researchers came to an agreement.

Step 5: Extract the data

Extracting the data means collecting information from the selected studies in a systematic way. There are two types of information you need to collect from each study:

- Information about the study’s methods and results. The exact information will depend on your research question, but it might include the year, study design, sample size, context, research findings, and conclusions. If any data are missing, you’ll need to contact the study’s authors.

- Your judgment of the quality of the evidence, including risk of bias.

You should collect this information using forms. You can find sample forms from the Registry of Methods and Tools for Evidence-Informed Decision Making and from the Grading of Recommendations, Assessment, Development and Evaluations (GRADE) Working Group.

Extracting the data is also a three-person job. Two people should do this step independently, and the third person will resolve any disagreements.
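Because two people extract the data independently, it helps to compare their forms field by field so the third person only has to look at the disagreements. In this minimal Python sketch, the `ExtractionForm` fields are hypothetical and follow the examples above; a real form would be longer.

```python
from dataclasses import dataclass, fields

@dataclass
class ExtractionForm:
    """Hypothetical extraction form; the fields follow the examples in the text."""
    study_id: str
    year: int
    design: str
    sample_size: int
    risk_of_bias: str  # e.g., "low", "high", or "unclear"

def disagreements(first: ExtractionForm, second: ExtractionForm) -> list:
    """List the fields where two independent extractors recorded different values."""
    if first.study_id != second.study_id:
        raise ValueError("forms describe different studies")
    return [f.name for f in fields(ExtractionForm)
            if getattr(first, f.name) != getattr(second, f.name)]

a = ExtractionForm("Study 1", 2005, "RCT", 120, "low")
b = ExtractionForm("Study 1", 2005, "RCT", 102, "unclear")
print(disagreements(a, b))  # ['sample_size', 'risk_of_bias']
```

The third person then resolves only the flagged fields, and the final agreed form is what goes into the synthesis.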

Boyle and colleagues also collected data about possible sources of bias, such as how the study participants were randomized into the control and treatment groups.

Step 6: Synthesize the data

Synthesizing the data means bringing together the information you collected into a single, cohesive story. There are two main approaches to synthesizing the data:

- Narrative (qualitative): Summarize the information in words. You’ll need to discuss the studies and assess their overall quality.

- Quantitative: Use statistical methods to summarize and compare data from different studies. The most common quantitative approach is a meta-analysis, which allows you to combine results from multiple studies into a summary result.

Generally, you should use both approaches together whenever possible. If you don’t have enough data, or the data from different studies aren’t comparable, you can use a narrative approach alone. However, you should justify why a quantitative approach wasn’t possible.
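Before pooling, reviewers often check whether study results vary more than chance alone would explain, which is one way to judge whether the data are comparable enough for a meta-analysis. This minimal Python sketch computes Cochran’s Q and the I² statistic from hypothetical effect sizes and standard errors. These statistics are an addition here, not something the article specifies, and how much heterogeneity is acceptable remains a judgment call.

```python
def heterogeneity(effects, std_errors):
    """Return Cochran's Q and I^2 (as a percentage) for a set of studies."""
    if len(effects) != len(std_errors) or len(effects) < 2:
        raise ValueError("need matching effects and standard errors for 2+ studies")
    weights = [1.0 / se ** 2 for se in std_errors]
    pooled = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    # Q: weighted squared deviations of each study from the fixed-effect pooled estimate.
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    # I^2: share of the variation attributed to real between-study differences
    # rather than chance; set to 0 when Q does not exceed its degrees of freedom.
    i_squared = 100.0 * (q - df) / q if q > df else 0.0
    return q, i_squared

# Hypothetical effect sizes and standard errors from three studies.
q, i_squared = heterogeneity([-0.30, -0.10, 0.05], [0.15, 0.10, 0.20])
print(f"Q = {q:.2f}, I^2 = {i_squared:.0f}%")
```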

Boyle and colleagues also divided the studies into subgroups, such as studies about babies, children, and adults, and analyzed the effect sizes within each group.
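A subgroup analysis like this one can be sketched by pooling effect sizes separately within each group. The minimal Python example below assumes a fixed-effect inverse-variance model and uses hypothetical numbers, not Boyle and colleagues’ data.

```python
from collections import defaultdict

def pool(effects, std_errors):
    """Fixed-effect pooled estimate using inverse-variance weights."""
    weights = [1.0 / se ** 2 for se in std_errors]
    return sum(w * y for w, y in zip(weights, effects)) / sum(weights)

def subgroup_effects(studies):
    """Pool effect sizes separately within each subgroup.

    `studies` is a list of (subgroup, effect_size, standard_error) tuples.
    """
    groups = defaultdict(lambda: ([], []))  # subgroup -> (effects, standard errors)
    for subgroup, effect, se in studies:
        groups[subgroup][0].append(effect)
        groups[subgroup][1].append(se)
    return {name: pool(effects, ses) for name, (effects, ses) in groups.items()}

# Hypothetical studies, each tagged with the age group it enrolled.
studies = [
    ("babies", -0.20, 0.10),
    ("babies", 0.00, 0.10),
    ("children", -0.05, 0.20),
    ("adults", 0.10, 0.25),
]
for group, effect in subgroup_effects(studies).items():
    print(f"{group}: pooled effect = {effect:.2f}")
```

A subgroup with a single study simply returns that study’s effect, which is one reason small subgroups should be interpreted cautiously.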

Step 7: Write and publish a report

The purpose of writing a systematic review article is to share the answer to your research question and explain how you arrived at this answer.

Your article should include the following sections:

- Abstract: A summary of the review

- Introduction: Including the rationale and objectives

- Methods: Including the selection criteria, search method, data extraction method, and synthesis method

- Results: Including results of the search and selection process, study characteristics, risk of bias in the studies, and synthesis results

- Discussion: Including interpretation of the results and limitations of the review

- Conclusion: The answer to your research question and implications for practice, policy, or research

To verify that your report includes everything it needs, you can use the PRISMA checklist.

Once your report is written, you can publish it in a systematic review database, such as the Cochrane Database of Systematic Reviews, and/or in a peer-reviewed journal.

In their report, Boyle and colleagues concluded that probiotics cannot be recommended for reducing eczema symptoms or improving quality of life in patients with eczema.

Note: Generative AI tools like ChatGPT can be useful at various stages of the writing and research process and can help you to write your systematic review. However, we strongly advise against trying to pass AI-generated text off as your own work.

If you want to know more about statistics, methodology, or research bias, make sure to check out some of our other articles with explanations and examples.

Statistics

- Student’s t-distribution

- Normal distribution

- Null and Alternative Hypotheses

- Chi-square tests

- Confidence interval

- Quartiles & Quantiles

Methodology

- Cluster sampling

- Stratified sampling

- Data cleansing

- Reproducibility vs Replicability

- Peer review

- Prospective cohort study

Research bias

- Implicit bias

- Cognitive bias

- Placebo effect

- Hawthorne effect

- Hindsight bias

- Affect heuristic

- Social desirability bias

Frequently asked questions about systematic reviews

A literature review is a survey of scholarly sources (such as books, journal articles, and theses) related to a specific topic or research question.

It is often written as part of a thesis, dissertation, or research paper, in order to situate your work in relation to existing knowledge.

A literature review is a survey of credible sources on a topic, often used in dissertations, theses, and research papers. Literature reviews give an overview of knowledge on a subject, helping you identify relevant theories and methods, as well as gaps in existing research. Literature reviews are set up similarly to other academic texts, with an introduction, a main body, and a conclusion.

An annotated bibliography is a list of source references that has a short description (called an annotation) for each of the sources. It is often assigned as part of the research process for a paper.

A systematic review is secondary research because it uses existing research. You don’t collect new data yourself.

Cite this Scribbr article


Turney, S. (2023, November 20). Systematic Review | Definition, Example & Guide. Scribbr. Retrieved September 3, 2024, from https://www.scribbr.com/methodology/systematic-review/


Systematic Reviews

What is a Systematic Review?

A systematic review is an evidence synthesis that uses explicit, reproducible methods to perform a comprehensive literature search and critical appraisal of individual studies and that uses appropriate statistical techniques to combine these valid studies.

Key Characteristics of a Systematic Review:

Generally, systematic reviews must have:

- a clearly stated set of objectives with pre-defined eligibility criteria for studies

- an explicit, reproducible methodology

- a systematic search that attempts to identify all studies that would meet the eligibility criteria

- an assessment of the validity of the findings of the included studies, for example through the assessment of the risk of bias

- a systematic presentation, and synthesis, of the characteristics and findings of the included studies.

A meta-analysis is a systematic review that uses quantitative methods to synthesize and summarize the pooled data from included studies.

Additional Information

How-to Books

- Cochrane Handbook for Systematic Reviews of Interventions: Provides guidance to authors for the preparation of Cochrane Intervention reviews. Chapter 6 covers searching for reviews.

- Systematic Reviews: CRD’s Guidance for Undertaking Reviews in Health Care, from the University of York Centre for Reviews and Dissemination: Provides practical guidance for undertaking evidence synthesis based on a thorough understanding of systematic review methodology. It presents the core principles of systematic reviewing and, in complementary chapters, highlights issues that are specific to reviews of clinical tests, public health interventions, adverse effects, and economic evaluations.

Beyond Health Sciences

- Cornell, Systematic Reviews and Evidence Synthesis Beyond the Health Sciences: A video series geared toward librarians but very informative about searching outside medicine.


- Last Updated: Aug 14, 2024 11:07 AM

- URL: https://guides.library.ucdavis.edu/systematic-reviews


How to Do a Systematic Review: A Best Practice Guide for Conducting and Reporting Narrative Reviews, Meta-Analyses, and Meta-Syntheses

Affiliations

- 1 Behavioural Science Centre, Stirling Management School, University of Stirling, Stirling FK9 4LA, United Kingdom.

- 2 Department of Psychological and Behavioural Science, London School of Economics and Political Science, London WC2A 2AE, United Kingdom.

- 3 Department of Statistics, Northwestern University, Evanston, Illinois 60208, USA.

- PMID: 30089228

- DOI: 10.1146/annurev-psych-010418-102803

Systematic reviews are characterized by a methodical and replicable methodology and presentation. They involve a comprehensive search to locate all relevant published and unpublished work on a subject; a systematic integration of search results; and a critique of the extent, nature, and quality of evidence in relation to a particular research question. The best reviews synthesize studies to draw broad theoretical conclusions about what a literature means, linking theory to evidence and evidence to theory. This guide describes how to plan, conduct, organize, and present a systematic review of quantitative (meta-analysis) or qualitative (narrative review, meta-synthesis) information. We outline core standards and principles and describe commonly encountered problems. Although this guide targets psychological scientists, its high level of abstraction makes it potentially relevant to any subject area or discipline. We argue that systematic reviews are a key methodology for clarifying whether and how research findings replicate and for explaining possible inconsistencies, and we call for researchers to conduct systematic reviews to help elucidate whether there is a replication crisis.

Keywords: evidence; guide; meta-analysis; meta-synthesis; narrative; systematic review; theory.


Have a language expert improve your writing

Run a free plagiarism check in 10 minutes, automatically generate references for free.

- Knowledge Base

- Methodology

- Systematic Review | Definition, Examples & Guide

Systematic Review | Definition, Examples & Guide

Published on 15 June 2022 by Shaun Turney . Revised on 18 July 2024.

A systematic review is a type of review that uses repeatable methods to find, select, and synthesise all available evidence. It answers a clearly formulated research question and explicitly states the methods used to arrive at the answer.

They answered the question ‘What is the effectiveness of probiotics in reducing eczema symptoms and improving quality of life in patients with eczema?’

In this context, a probiotic is a health product that contains live microorganisms and is taken by mouth. Eczema is a common skin condition that causes red, itchy skin.

Table of contents

What is a systematic review, systematic review vs meta-analysis, systematic review vs literature review, systematic review vs scoping review, when to conduct a systematic review, pros and cons of systematic reviews, step-by-step example of a systematic review, frequently asked questions about systematic reviews.

A review is an overview of the research that’s already been completed on a topic.

What makes a systematic review different from other types of reviews is that the research methods are designed to reduce research bias . The methods are repeatable , and the approach is formal and systematic:

- Formulate a research question

- Develop a protocol

- Search for all relevant studies

- Apply the selection criteria

- Extract the data

- Synthesise the data

- Write and publish a report

Although multiple sets of guidelines exist, the Cochrane Handbook for Systematic Reviews is among the most widely used. It provides detailed guidelines on how to complete each step of the systematic review process.

Systematic reviews are most commonly used in medical and public health research, but they can also be found in other disciplines.

Systematic reviews typically answer their research question by synthesising all available evidence and evaluating the quality of the evidence. Synthesising means bringing together different information to tell a single, cohesive story. The synthesis can be narrative ( qualitative ), quantitative , or both.

Prevent plagiarism, run a free check.

Systematic reviews often quantitatively synthesise the evidence using a meta-analysis . A meta-analysis is a statistical analysis, not a type of review.

A meta-analysis is a technique to synthesise results from multiple studies. It’s a statistical analysis that combines the results of two or more studies, usually to estimate an effect size .

A literature review is a type of review that uses a less systematic and formal approach than a systematic review. Typically, an expert in a topic will qualitatively summarise and evaluate previous work, without using a formal, explicit method.

Although literature reviews are often less time-consuming and can be insightful or helpful, they have a higher risk of bias and are less transparent than systematic reviews.

Similar to a systematic review, a scoping review is a type of review that tries to minimise bias by using transparent and repeatable methods.

However, a scoping review isn’t a type of systematic review. The most important difference is the goal: rather than answering a specific question, a scoping review explores a topic. The researcher tries to identify the main concepts, theories, and evidence, as well as gaps in the current research.

Sometimes scoping reviews are an exploratory preparation step for a systematic review, and sometimes they are a standalone project.

A systematic review is a good choice of review if you want to answer a question about the effectiveness of an intervention , such as a medical treatment.

To conduct a systematic review, you’ll need the following:

- A precise question , usually about the effectiveness of an intervention. The question needs to be about a topic that’s previously been studied by multiple researchers. If there’s no previous research, there’s nothing to review.

- If you’re doing a systematic review on your own (e.g., for a research paper or thesis), you should take appropriate measures to ensure the validity and reliability of your research.

- Access to databases and journal archives. Often, your educational institution provides you with access.

- Time. A professional systematic review is a time-consuming process: it will take the lead author about six months of full-time work. If you’re a student, you should narrow the scope of your systematic review and stick to a tight schedule.

- Bibliographic, word-processing, spreadsheet, and statistical software . For example, you could use EndNote, Microsoft Word, Excel, and SPSS.

A systematic review has many pros .

- They minimise research b ias by considering all available evidence and evaluating each study for bias.

- Their methods are transparent , so they can be scrutinised by others.

- They’re thorough : they summarise all available evidence.

- They can be replicated and updated by others.

Systematic reviews also have a few cons .

- They’re time-consuming .

- They’re narrow in scope : they only answer the precise research question.

The 7 steps for conducting a systematic review are explained with an example.

Step 1: Formulate a research question

Formulating the research question is probably the most important step of a systematic review. A clear research question will:

- Allow you to more effectively communicate your research to other researchers and practitioners

- Guide your decisions as you plan and conduct your systematic review

A good research question for a systematic review has four components, which you can remember with the acronym PICO:

- Population(s) or problem(s)

- Intervention(s)

- Comparison(s)

- Outcome(s)

You can rearrange these four components to write your research question:

- What is the effectiveness of I versus C for O in P?

Sometimes, you may want to include a fifth component, the type of study design. In this case, the acronym is PICOT.

- Type of study design(s)

In the example review, Boyle and colleagues’ question had the following PICOT components:

- The population of patients with eczema

- The intervention of probiotics

- In comparison to no treatment, placebo, or non-probiotic treatment

- The outcome of changes in participant-, parent-, and doctor-rated symptoms of eczema and quality of life

- Randomised controlled trials, a type of study design

Their research question was:

- What is the effectiveness of probiotics versus no treatment, a placebo, or a non-probiotic treatment for reducing eczema symptoms and improving quality of life in patients with eczema?
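To illustrate how the PICO template fills in, the short Python sketch below stores the components of Boyle and colleagues’ question and rebuilds the sentence above. The `PICO` class and its `question()` method are hypothetical names chosen for this example. They are not part of any review software.

```python
from dataclasses import dataclass


@dataclass
class PICO:
    """Hypothetical container for the components of a PICO(T) question."""
    population: str
    intervention: str
    comparison: str
    outcome: str
    study_design: str = ""  # optional fifth component (the "T" in PICOT)

    def question(self) -> str:
        # Fill the template "What is the effectiveness of I versus C for O in P?"
        return (f"What is the effectiveness of {self.intervention} versus "
                f"{self.comparison} for {self.outcome} in {self.population}?")


example = PICO(
    population="patients with eczema",
    intervention="probiotics",
    comparison="no treatment, a placebo, or a non-probiotic treatment",
    outcome="reducing eczema symptoms and improving quality of life",
    study_design="randomised controlled trials",
)
print(example.question())
```

The study design is stored but not used in the sentence, because the template above has only four slots.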

Step 2: Develop a protocol

A protocol is a document that contains your research plan for the systematic review. This is an important step because having a plan allows you to work more efficiently and reduces bias.

Your protocol should include the following components:

- Background information: Provide the context of the research question, including why it’s important.

- Research objective(s): Rephrase your research question as an objective.

- Selection criteria: State how you’ll decide which studies to include or exclude from your review.

- Search strategy: Discuss your plan for finding studies.

- Analysis: Explain what information you’ll collect from the studies and how you’ll synthesise the data.

If you’re a professional seeking to publish your review, it’s a good idea to bring together an advisory committee. This is a group of about six people who have experience in the topic you’re researching. They can help you make decisions about your protocol.

It’s highly recommended to register your protocol. Registering your protocol means submitting it to a database such as PROSPERO or ClinicalTrials.gov.

Step 3: Search for all relevant studies

Searching for relevant studies is the most time-consuming step of a systematic review.

To reduce bias, it’s important to search for relevant studies very thoroughly. Your strategy will depend on your field and your research question, but sources generally fall into these four categories:

- Databases: Search multiple databases of peer-reviewed literature, such as PubMed or Scopus. Think carefully about how to phrase your search terms and include multiple synonyms of each word. Use Boolean operators if relevant.

- Handsearching: In addition to searching the primary sources using databases, you’ll also need to search manually. One strategy is to scan relevant journals or conference proceedings. Another strategy is to scan the reference lists of relevant studies.

- Grey literature: Grey literature includes documents produced by governments, universities, and other institutions that aren’t published by traditional publishers. Graduate student theses are an important type of grey literature, which you can search using the Networked Digital Library of Theses and Dissertations (NDLTD) . In medicine, clinical trial registries are another important type of grey literature.

- Experts: Contact experts in the field to ask if they have unpublished studies that should be included in your review.
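A common way to apply the advice on synonyms and Boolean operators is to join the synonyms for each concept with OR, and then join the concepts with AND. The sketch below uses a hypothetical helper, `build_query`, and an illustrative list of synonyms. It is not Boyle and colleagues’ actual search strategy. Real databases differ in their syntax for phrases, truncation, and field tags, so you would need to adapt the output to each one.

```python
def or_block(terms):
    """Join the synonyms for one concept with OR, quoting multi-word phrases."""
    quoted = [f'"{t}"' if " " in t else t for t in terms]
    return "(" + " OR ".join(quoted) + ")"


def build_query(concepts):
    """Join the concept blocks with AND so every concept must appear."""
    return " AND ".join(or_block(terms) for terms in concepts)


# Hypothetical synonym lists for the two main concepts of the example question
query = build_query([
    ["eczema", "atopic dermatitis"],
    ["probiotic", "probiotics", "lactobacillus"],
])
print(query)
# (eczema OR "atopic dermatitis") AND (probiotic OR probiotics OR lactobacillus)
```

Adding a synonym to a concept broadens the search. Adding a new concept block narrows it.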

At this stage of your review, you won’t read the articles yet. Simply save any potentially relevant citations using bibliographic software, such as Scribbr’s APA or MLA Generator.

Boyle and colleagues searched the following sources:

- Databases: EMBASE, PsycINFO, AMED, LILACS, and ISI Web of Science

- Handsearch: Conference proceedings and reference lists of articles

- Grey literature: The Cochrane Library, the metaRegister of Controlled Trials, and the Ongoing Skin Trials Register

- Experts: Authors of unpublished registered trials, pharmaceutical companies, and manufacturers of probiotics

Step 4: Apply the selection criteria

Applying the selection criteria is a three-person job. Two of you will independently read the studies and decide which to include in your review based on the selection criteria you established in your protocol. The third person’s job is to break any ties.

To increase inter-rater reliability, ensure that everyone thoroughly understands the selection criteria before you begin.
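Inter-rater reliability between two screeners is often summarised with Cohen’s kappa. This statistic compares how often the screeners actually agreed with how often they would be expected to agree by chance. The following is a minimal Python sketch. It assumes each screener’s decisions are stored as a list of "in"/"out" labels in the same record order, and the decisions themselves are invented for illustration.

```python
def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two screeners' decisions on the same records."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("need two non-empty lists of equal length")
    n = len(rater_a)
    # Observed agreement: proportion of records where both screeners agree
    p_o = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of each screener's marginal proportions, summed over labels
    categories = set(rater_a) | set(rater_b)
    p_e = sum((rater_a.count(c) / n) * (rater_b.count(c) / n) for c in categories)
    if p_e == 1:
        return 1.0  # both screeners gave every record the same single label
    return (p_o - p_e) / (1 - p_e)


# Invented decisions for ten records screened by two people
screener_1 = ["in", "in", "out", "out", "out", "in", "out", "out", "out", "out"]
screener_2 = ["in", "out", "out", "out", "out", "in", "out", "out", "in", "out"]
kappa = cohens_kappa(screener_1, screener_2)
print(round(kappa, 2))  # 0.52
```

Here the screeners agree on 8 of 10 records. Because most records are "out" for both screeners, much of that agreement is expected by chance, so kappa is noticeably lower than 0.8.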

If you’re writing a systematic review as a student for an assignment, you might not have a team. In this case, you’ll have to apply the selection criteria on your own; you can mention this as a limitation in your paper’s discussion.

You should apply the selection criteria in two phases:

- Based on the titles and abstracts: Decide whether each article potentially meets the selection criteria based on the information provided in its title and abstract.

- Based on the full texts: Download the articles that weren’t excluded during the first phase. If an article isn’t available online or through your library, you may need to contact the authors to ask for a copy. Read the articles and decide which articles meet the selection criteria.

It’s very important to keep a meticulous record of why you included or excluded each article. When the selection process is complete, you can summarise what you did using a PRISMA flow diagram.
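If you keep a structured record of each decision, the PRISMA counts are easy to tally. The sketch below uses a hypothetical log format: a record ID, the stage at which the record was excluded (`None` if it was included), and the reason. The records and reasons are made up. The sketch covers only the screening boxes of the diagram, not the counts of records identified or duplicates removed.

```python
from collections import Counter

# Hypothetical screening log: (record ID, stage excluded at or None if included, reason)
log = [
    ("r1", "title/abstract", "not a randomised trial"),
    ("r2", None, None),
    ("r3", "full text", "wrong population"),
    ("r4", "full text", "not a randomised trial"),
    ("r5", None, None),
    ("r6", "title/abstract", "not about probiotics"),
]


def prisma_counts(log):
    """Tally the screening boxes of a PRISMA flow diagram from a decision log."""
    screened = len(log)
    excluded_ta = sum(1 for _, stage, _ in log if stage == "title/abstract")
    full_text_reasons = Counter(reason for _, stage, reason in log if stage == "full text")
    included = sum(1 for _, stage, _ in log if stage is None)
    return {
        "records screened": screened,
        "excluded at title/abstract": excluded_ta,
        "full texts assessed": screened - excluded_ta,
        "full texts excluded (by reason)": dict(full_text_reasons),
        "studies included": included,
    }


for box, count in prisma_counts(log).items():
    print(f"{box}: {count}")
```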

After screening the titles and abstracts, Boyle and colleagues found the full texts of the remaining studies. Boyle and Tang read through the articles to decide whether any more studies needed to be excluded based on the selection criteria.

When Boyle and Tang disagreed about whether a study should be excluded, they discussed it with Varigos until the three researchers came to an agreement.

Step 5: Extract the data

Extracting the data means collecting information from the selected studies in a systematic way. There are two types of information you need to collect from each study:

- Information about the study’s methods and results. The exact information will depend on your research question, but it might include the year, study design, sample size, context, research findings, and conclusions. If any data are missing, you’ll need to contact the study’s authors.

- Your judgement of the quality of the evidence, including risk of bias.

You should collect this information using forms. You can find sample forms in The Registry of Methods and Tools for Evidence-Informed Decision Making and the Grading of Recommendations, Assessment, Development and Evaluations Working Group.

Extracting the data is also a three-person job. Two people should do this step independently, and the third person will resolve any disagreements.
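When two people extract data independently, the third person only needs to review the fields where they disagree. As a rough sketch, assume each extraction form is stored as a Python dictionary with the same field names. The disagreements can then be listed as shown below. The field names and values are hypothetical.

```python
def find_disagreements(form_a, form_b):
    """Return {field: (value_a, value_b)} for fields the two extractors recorded differently."""
    fields = sorted(set(form_a) | set(form_b))
    return {f: (form_a.get(f), form_b.get(f))
            for f in fields if form_a.get(f) != form_b.get(f)}


# Hypothetical forms for one study, filled in independently by two extractors
extractor_1 = {"design": "RCT", "sample_size": 56, "randomisation": "computer-generated"}
extractor_2 = {"design": "RCT", "sample_size": 59, "randomisation": "computer-generated"}

for field, (a, b) in find_disagreements(extractor_1, extractor_2).items():
    print(f"{field}: {a!r} vs {b!r} (refer to the third reviewer)")
```

If one extractor leaves a field blank, that field appears as `None` on their side, so missing entries are flagged as well.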

Boyle and colleagues also collected data about possible sources of bias, such as how the study participants were randomised into the control and treatment groups.

Step 6: Synthesise the data

Synthesising the data means bringing together the information you collected into a single, cohesive story. There are two main approaches to synthesising the data:

- Narrative (qualitative): Summarise the information in words. You’ll need to discuss the studies and assess their overall quality.

- Quantitative: Use statistical methods to summarise and compare data from different studies. The most common quantitative approach is a meta-analysis, which allows you to combine results from multiple studies into a summary result.

Generally, you should use both approaches together whenever possible. If you don’t have enough data, or the data from different studies aren’t comparable, then you can take just a narrative approach. However, you should justify why a quantitative approach wasn’t possible.
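To make the idea of combining results more concrete, here is a minimal sketch of one standard meta-analytic method: the inverse-variance fixed-effect model. In this model, each study is weighted by the inverse of its squared standard error. The sketch assumes every study reports an effect size on the same scale, together with its standard error. The numbers are invented. When studies differ substantially, many reviews use a random-effects model instead, so treat this as an illustration rather than a recommendation.

```python
import math


def fixed_effect_meta(effects, std_errors, z=1.96):
    """Inverse-variance fixed-effect pooled estimate and its 95% confidence interval."""
    weights = [1 / se ** 2 for se in std_errors]  # weight = 1 / variance
    pooled = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    se_pooled = math.sqrt(1 / sum(weights))
    return pooled, (pooled - z * se_pooled, pooled + z * se_pooled)


# Invented mean differences in symptom score (negative = fewer symptoms) and their standard errors
effects = [-2.0, -1.0, -3.0]
std_errors = [1.0, 1.0, 2.0]
pooled, ci = fixed_effect_meta(effects, std_errors)
print(f"pooled = {pooled:.2f}, 95% CI {ci[0]:.2f} to {ci[1]:.2f}")
```

The third study has the largest effect, but it carries only a quarter of the weight of each of the others because its standard error is twice as large.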

Boyle and colleagues also divided the studies into subgroups, such as studies about babies, children, and adults, and analysed the effect sizes within each group.
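A subgroup analysis of this kind can be sketched by pooling the studies separately within each group. The example below uses hypothetical studies, each tagged with a subgroup label, an effect size, and a standard error, and applies an inverse-variance fixed-effect approach within each group. It does not test whether the subgroups differ from each other. A full analysis would also need that test.

```python
import math
from collections import defaultdict


def pooled_by_subgroup(studies):
    """Inverse-variance fixed-effect estimate and standard error within each subgroup."""
    groups = defaultdict(list)
    for subgroup, effect, se in studies:
        groups[subgroup].append((effect, se))
    results = {}
    for subgroup, rows in groups.items():
        weights = [1 / se ** 2 for _, se in rows]
        pooled = sum(w * y for w, (y, _) in zip(weights, rows)) / sum(weights)
        results[subgroup] = (pooled, math.sqrt(1 / sum(weights)))
    return results


# Hypothetical studies: (subgroup, effect size, standard error)
studies = [
    ("babies", -1.0, 1.0), ("babies", -3.0, 1.0),
    ("children", -0.5, 0.5),
    ("adults", 0.0, 1.0), ("adults", -1.0, 1.0),
]
results = pooled_by_subgroup(studies)
for subgroup, (estimate, se) in results.items():
    print(f"{subgroup}: {estimate:.2f} (SE {se:.2f})")
```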

Step 7: Write and publish a report

The purpose of writing a systematic review article is to share the answer to your research question and explain how you arrived at this answer.

Your article should include the following sections:

- Abstract: A summary of the review

- Introduction: Including the rationale and objectives

- Methods: Including the selection criteria, search method, data extraction method, and synthesis method

- Results: Including results of the search and selection process, study characteristics, risk of bias in the studies, and synthesis results

- Discussion: Including interpretation of the results and limitations of the review

- Conclusion: The answer to your research question and implications for practice, policy, or research

To verify that your report includes everything it needs, you can use the PRISMA checklist.

Once your report is written, you can publish it in a systematic review database, such as the Cochrane Database of Systematic Reviews, and/or in a peer-reviewed journal.

A systematic review is secondary research because it uses existing research. You don’t collect new data yourself.

A literature review is a survey of scholarly sources (such as books, journal articles, and theses) related to a specific topic or research question.

It is often written as part of a dissertation, thesis, research paper, or proposal.

There are several reasons to conduct a literature review at the beginning of a research project:

- To familiarise yourself with the current state of knowledge on your topic

- To ensure that you’re not just repeating what others have already done

- To identify gaps in knowledge and unresolved problems that your research can address

- To develop your theoretical framework and methodology

- To provide an overview of the key findings and debates on the topic

Writing the literature review shows your reader how your work relates to existing research and what new insights it will contribute.

Cite this Scribbr article


Turney, S. (2024, July 17). Systematic Review | Definition, Examples & Guide. Scribbr. Retrieved 3 September 2024, from https://www.scribbr.co.uk/research-methods/systematic-reviews/


Systematic Reviews and Meta Analysis


Systematic review Q & A

What is a systematic review?

A systematic review is a guided filtering and synthesis of all available evidence addressing a specific, focused research question, generally about a specific intervention or exposure. The use of standardized, systematic methods and pre-selected eligibility criteria reduces the risk of bias in identifying, selecting and analyzing relevant studies. A well-designed systematic review includes clear objectives, pre-selected criteria for identifying eligible studies, an explicit methodology, a thorough and reproducible search of the literature, an assessment of the validity or risk of bias of each included study, and a systematic synthesis, analysis and presentation of the findings of the included studies. A systematic review may include a meta-analysis.

For details about carrying out systematic reviews, see the Guides and Standards section of this guide.

Is my research topic appropriate for systematic review methods?

A systematic review is best deployed to test a specific hypothesis about a healthcare or public health intervention or exposure. By focusing on a single intervention or a few specific interventions for a particular condition, the investigator can ensure a manageable results set. Moreover, examining a single or small set of related interventions, exposures, or outcomes will simplify the assessment of studies and the synthesis of the findings.

Systematic reviews are poor tools for hypothesis generation: for instance, to determine what interventions have been used to increase the awareness and acceptability of a vaccine or to investigate the ways that predictive analytics have been used in health care management. In the first case, we don't know what interventions to search for and so have to screen all the articles about awareness and acceptability. In the second, there is no agreed-upon set of methods that make up predictive analytics, and health care management is far too broad. The search will necessarily be incomplete, vague and very large all at the same time. In most cases, reviews without clearly and exactly specified populations, interventions, exposures, and outcomes will produce results sets that quickly outstrip the resources of a small team and offer no consistent way to assess and synthesize findings from the studies that are identified.

If not a systematic review, then what?

You might consider performing a scoping review. This framework allows iterative searching over a reduced number of data sources and does not require you to assess individual studies for risk of bias. The framework includes built-in mechanisms to adjust the analysis as the work progresses and more is learned about the topic. A scoping review won't help you limit the number of records you'll need to screen (broad questions lead to large results sets), but it may give you a means of dealing with a large set of results.

This tool can help you decide what kind of review is right for your question.

Can my student complete a systematic review during her summer project?

Probably not. Systematic reviews are a lot of work. Counting the time needed to create the protocol, build and run a quality search, collect all the papers, evaluate the studies that meet the inclusion criteria, and extract and analyze the summary data, a well-done review can require dozens to hundreds of hours of work spanning several months. A systematic review also requires subject expertise, statistical support and a librarian to help design and run the search. Be aware that librarians sometimes have queues for their search time, so it may take several weeks to complete and run a search. In addition, all guidelines for carrying out systematic reviews recommend that at least two subject experts screen the studies identified in the search. The first round of screening can consume 1 hour per screener for every 100–200 records. A systematic review is a labor-intensive team effort.
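The screening rule of thumb above can be turned into a quick back-of-the-envelope estimate. This sketch assumes a hypothetical search that returns 3,000 records, screened independently by two people, and applies the range of 100–200 records per hour from the text:

```python
def screening_hours(n_records, records_per_hour, n_screeners=2):
    """Total screener-hours for the first (title/abstract) screening round."""
    return n_records / records_per_hour * n_screeners


# Hypothetical search yielding 3,000 records, screened independently by two people
for rate in (200, 100):
    print(f"{rate} records/hour: {screening_hours(3000, rate):.0f} screener-hours")
# 200 records/hour: 30 screener-hours
# 100 records/hour: 60 screener-hours
```

This estimate covers only the first round. Full-text review, data extraction, and analysis come on top of it.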

How can I know if my topic has been reviewed already?

Before starting out on a systematic review, check to see if someone has done it already. In PubMed you can use the systematic review subset to limit your results to a broad group of papers that is enriched for systematic reviews. You can invoke the subset by selecting it from the Article Types filters to the left of your PubMed results, or you can append AND systematic[sb] to your search. For example:

"neoadjuvant chemotherapy" AND systematic[sb]

The systematic review subset is very noisy, however. To quickly focus on systematic reviews (knowing that you may be missing some), simply search for the word systematic in the title:

"neoadjuvant chemotherapy" AND systematic[ti]

Any PRISMA-compliant systematic review will be captured by this method since including the words "systematic review" in the title is a requirement of the PRISMA checklist. Cochrane systematic reviews do not include 'systematic' in the title, however. It's worth checking the Cochrane Database of Systematic Reviews independently.
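You may want to run these checks from a script rather than the PubMed website. NCBI's E-utilities `esearch` endpoint accepts the same query syntax in its `term` parameter. The sketch below only builds the request URLs. Opening one of them returns an XML list of matching PubMed IDs. The `esearch_url` helper is a hypothetical name, and `retmax=20` is an arbitrary choice.

```python
from urllib.parse import urlencode

ESEARCH = "https://eutils.ncbi.nlm.nih.gov/entrez/eutils/esearch.fcgi"


def esearch_url(topic, restriction="sb"):
    """Build a PubMed esearch URL limited by systematic[sb] or systematic[ti]."""
    if restriction not in ("sb", "ti"):
        raise ValueError("restriction must be 'sb' (subset) or 'ti' (title)")
    params = {"db": "pubmed", "term": f"{topic} AND systematic[{restriction}]", "retmax": 20}
    return f"{ESEARCH}?{urlencode(params)}"


print(esearch_url('"neoadjuvant chemotherapy"'))        # broad, noisy subset
print(esearch_url('"neoadjuvant chemotherapy"', "ti"))  # 'systematic' in the title
```

The caveat about Cochrane reviews still applies. A title search will not find them, so check the Cochrane Database of Systematic Reviews separately.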

You can also search for protocols that will indicate that another group has set out on a similar project. Many investigators will register their protocols in PROSPERO, a registry of review protocols. Other published protocols as well as Cochrane Review protocols appear in the Cochrane Methodology Register, a part of the Cochrane Library.


- Last Updated: Sep 4, 2024 4:04 PM

- URL: https://guides.library.harvard.edu/meta-analysis


What are systematic reviews?

Watch this video from Cochrane Consumers and Communication to learn what systematic reviews are, how researchers prepare them, and why they’re an important part of making informed decisions about health for everyone.

Cochrane evidence, including our systematic reviews, provides a powerful tool to enhance your healthcare knowledge and decision making. This video from Cochrane Sweden explains a bit about how we create health evidence and what Cochrane does.


1.2.2 What is a systematic review?

A systematic review attempts to collate all empirical evidence that fits pre-specified eligibility criteria in order to answer a specific research question. It uses explicit, systematic methods that are selected with a view to minimizing bias, thus providing more reliable findings from which conclusions can be drawn and decisions made (Antman 1992, Oxman 1993) . The key characteristics of a systematic review are:

- a clearly stated set of objectives with pre-defined eligibility criteria for studies;

- an explicit, reproducible methodology;

- a systematic search that attempts to identify all studies that would meet the eligibility criteria;

- an assessment of the validity of the findings of the included studies, for example through the assessment of risk of bias; and

- a systematic presentation, and synthesis, of the characteristics and findings of the included studies.

Many systematic reviews contain meta-analyses. Meta-analysis is the use of statistical methods to summarize the results of independent studies (Glass 1976). By combining information from all relevant studies, meta-analyses can provide more precise estimates of the effects of health care than those derived from the individual studies included within a review (see Chapter 9, Section 9.1.3). They also facilitate investigations of the consistency of evidence across studies, and the exploration of differences across studies.
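The consistency of evidence across studies is commonly quantified with Cochran’s Q and the I² statistic. I² estimates the percentage of variation across studies that is due to heterogeneity rather than chance. A minimal Python sketch follows. It assumes each study supplies an effect size and a standard error, and the values used here are invented.

```python
def heterogeneity(effects, std_errors):
    """Cochran's Q and the I-squared statistic (as a percentage)."""
    weights = [1 / se ** 2 for se in std_errors]
    pooled = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    # Q: weighted sum of squared deviations from the fixed-effect pooled estimate
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    # I^2: share of total variation attributed to between-study differences, floored at 0
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0
    return q, i2


q, i2 = heterogeneity([-3.0, 0.0, -1.0], [1.0, 1.0, 1.0])
print(f"Q = {q:.2f}, I^2 = {i2:.0f}%")  # Q = 4.67, I^2 = 57%
```

When Q is smaller than its degrees of freedom, I² is set to zero.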


Systematic Review

What is a systematic review?


A systematic review is an authoritative account of existing evidence using reliable, objective, thorough and reproducible research practices.

It is a method of making sense of large bodies of information and contributes to the answers to questions about what works and what doesn't.

Systematic reviews map areas of uncertainty and identify where little or no relevant research has been done and where new studies are needed.

It is a good idea to familiarise yourself with the systematic review process before beginning your review. You can do this by searching for other systematic reviews to look at as examples, by reading a glossary of commonly used terms, and by learning how to distinguish between types of systematic review.

Characteristics of a systematic review

Some characteristics, or features, of systematic reviews are:

- Clearly stated set of objectives with pre-defined eligibility criteria

- Explicit, reproducible methodology

- A systematic search that attempts to identify all studies that would meet the eligibility criteria

- Assesses the validity of the findings, for example assessing the risk of bias

- Systematic presentation and synthesis of the findings of the included studies. (Cochrane Handbook for Systematic Reviews of Interventions, 2008, p. 6).

Watch this video from the Cochrane Library for more information about systematic reviews.


- Last Updated: Jun 20, 2024 12:04 PM

- URL: https://uow.libguides.com/systematic-review



UCL LIBRARY SERVICES


What are systematic reviews?


Systematic reviews are a type of literature review of research, and they require standards of rigour equivalent to those of primary research. They have a clear, logical rationale that is reported to the reader of the review. They are used in research and policymaking to inform evidence-based decisions and practice. They differ from traditional literature reviews in the following elements of conduct and reporting.

Systematic reviews:

- use explicit and transparent methods

- are a piece of research following a standard set of stages

- are accountable, replicable and updateable

- involve users to ensure a review is relevant and useful.

For example, systematic reviews (like all research) should have a clear research question and should describe the authors’ perspective in their approach to addressing it. The methods used to identify each study in a review, appraise it for quality and relevance, and combine it with other studies to address the review question are clearly described. A systematic review usually involves more than one person in order to increase the objectivity and trustworthiness of the review’s methods and findings.

Research protocols for systematic reviews may be peer-reviewed and published, or registered in a suitable repository, to help avoid duplication of reviews and to allow the final review to be compared with the planned review.

- History of systematic reviews to inform policy (EPPI-Centre)

- Six reasons why it is important to be systematic (EPPI-Centre)

- Evidence Synthesis International (ESI): Position Statement. Describes the issues, principles and goals in synthesising research evidence to inform policy, practice and decisions


Literature reviews provide a more complete picture of research knowledge than is possible from individual pieces of research. This can be used to: clarify what is known from research, provide new perspectives, build theory, test theory, identify research gaps or inform research agendas.

A systematic review requires a considerable amount of time and resources, and is one type of literature review.

If the purpose of a review is to make justifiable evidence claims, then it should be systematic, as a systematic review uses rigorous explicit methods. The methods used can depend on the purpose of the review, and the time and resources available.

A 'non-systematic review' might use some of the same methods as systematic reviews, such as systematic approaches to identifying studies or appraising their quality. There may be times when this approach can be useful. In a student dissertation, for example, there may not be time to be fully systematic in a review of the literature if this is only one small part of the thesis. In other types of research, there may also be a need to obtain a quick and not necessarily thorough overview of a literature to inform some other work (including a systematic review). Another example is when policymakers, or other people using research findings, want to make quick decisions and no systematic review is available to help them. They have a choice of gaining a rapid overview of the research literature or not having any research evidence to help their decision-making.

Just like any other piece of research, the methods used to undertake any literature review should be carefully planned to justify the conclusions made.

Finding out about the different types of systematic reviews and the methods they use, and reading both systematic and other types of review, will help you understand some of the differences.

Typically, a systematic review addresses a focussed, structured research question in order to inform understanding and decisions on an area (see the Formulating a research question section for examples).

Sometimes systematic reviews ask a broad research question. One strategy for addressing such a question is to use several focussed sub-questions, each addressed by a sub-component of the review.

Another strategy is to develop a map to describe the type of research that has been undertaken in relation to a research question. Some maps even describe over 2,000 papers, while others are much smaller. One purpose of a map is to help choose a sub-set of studies to explore more fully in a synthesis. There are also other purposes of maps: see the box on systematic evidence maps for further information.

Reporting standards specify the minimum elements that need to go into the reporting of a review. They refer mainly to methodological issues, but they are not as detailed or specific as critical appraisal of the methodological standards of conduct of a review.

A number of organisations have developed specific guidelines and standards for both the conduct and the reporting of systematic reviews in different topic areas.

- PRISMA: PRISMA (Preferred Reporting Items for Systematic Reviews and Meta-Analyses) is a reporting standard. The Key Documents section of the PRISMA website links to a checklist, flow diagram and explanatory notes. PRISMA is less useful for certain types of reviews, including those that are iterative.

- eMERGe: eMERGe is a reporting standard that has been developed for meta-ethnographies, a qualitative synthesis method.

- ROSES (RepOrting standards for Systematic Evidence Syntheses): reporting standards, including forms and a flow diagram, designed specifically for systematic reviews and maps in the field of conservation and environmental management.

Useful books about systematic reviews

Systematic approaches to a successful literature review

An introduction to systematic reviews

Cochrane handbook for systematic reviews of interventions

Systematic reviews: CRD's guidance for undertaking reviews in health care

Finding what works in health care: Standards for systematic reviews

Systematic Reviews in the Social Sciences

Meta-analysis and research synthesis

Research Synthesis and Meta-Analysis

Doing a Systematic Review

Literature reviews

- What is a literature review?

- Why are literature reviews important?


- Last Updated: Aug 2, 2024 9:22 AM

- URL: https://library-guides.ucl.ac.uk/systematic-reviews

Systematic Review


Introduction to Systematic Review


A "high-level overview of primary research on a focused question" utilizing high-quality research evidence through:

Source: Kysh, Lynn (2013): Difference between a systematic review and a literature review. [figshare]. Available at:


For additional tutorials, visit the SR Workshop Videos from UNC at Chapel Hill outlining each stage of the systematic review process.

Know the difference! Systematic review vs. literature review

It is common to confuse systematic and literature reviews, as both are used to provide a summary of the existing literature or research on a specific topic. Despite this common ground, the two types vary significantly. Please review the following chart (and its corresponding poster linked below) for a detailed explanation of each type of review and the differences between them. Source: Kysh, L. (2013). What’s in a name? The difference between a systematic review and a literature review and why it matters. [Poster] Retrieved from . Check the website from UNC at Chapel Hill,

Types of literature reviews along with associated methodologies

JBI Manual for Evidence Synthesis. Find definitions and methodological guidance.

- Systematic Reviews - Chapters 1-7

- Mixed Methods Systematic Reviews - Chapter 8

- Diagnostic Test Accuracy Systematic Reviews - Chapter 9

- Umbrella Reviews - Chapter 10

- Scoping Reviews - Chapter 11

- Systematic Reviews of Measurement Properties - Chapter 12

Systematic reviews vs scoping reviews

Grant, M. J., & Booth, A. (2009). A typology of reviews: an analysis of 14 review types and associated methodologies. Health Information and Libraries Journal, 26(2), 91–108. https://doi.org/10.1111/j.1471-1842.2009.00848.x

Gough, D., Thomas, J., & Oliver, S. (2012). Clarifying differences between review designs and methods. Systematic Reviews, 1, 28. https://doi.org/10.1186/2046-4053-1-28

Munn, Z., Peters, M., Stern, C., Tufanaru, C., McArthur, A., & Aromataris, E. (2018). Systematic review or scoping review? Guidance for authors when choosing between a systematic or scoping review approach. BMC Medical Research Methodology, 18(1), 143. https://doi.org/10.1186/s12874-018-0611-x. Also, check out the Libguide from Weill Cornell Medicine for the differences between a systematic review and a scoping review and when to embark on either one of them.

Sutton, A., Clowes, M., Preston, L., & Booth, A. (2019). Meeting the review family: Exploring review types and associated information retrieval requirements. Health Information & Libraries Journal, 36(3), 202–222. https://doi.org/10.1111/hir.12276

Temple University. Review Types. This guide provides useful descriptions of some of the types of reviews listed in the above article.

UMD Health Sciences and Human Services Library. Review Types. Guide describing Literature Reviews, Scoping Reviews, and Rapid Reviews.

Whittemore, R., Chao, A., Jang, M., Minges, K. E., & Park, C. (2014). Methods for knowledge synthesis: An overview. Heart & Lung: The Journal of Acute and Critical Care, 43(5), 453–461. https://doi.org/10.1016/j.hrtlng.2014.05.014

Differences between a systematic review and other types of reviews

Armstrong, R., Hall, B. J., Doyle, J., & Waters, E. (2011). ‘ Scoping the scope ’ of a cochrane review. Journal of Public Health , 33 (1), 147–150. https://doi.org/10.1093/pubmed/fdr015

Kowalczyk, N., & Truluck, C. (2013). Literature reviews and systematic reviews: What is the difference? Radiologic Technology , 85 (2), 219–222.

White, H., Albers, B., Gaarder, M., Kornør, H., Littell, J., Marshall, Z., Matthew, C., Pigott, T., Snilstveit, B., Waddington, H., & Welch, V. (2020). Guidance for producing a Campbell evidence and gap map. Campbell Systematic Reviews, 16(4), e1125. https://doi.org/10.1002/cl2.1125. See also this comparison between evidence and gap maps and systematic reviews.

Rapid Reviews Tutorials

Rapid Review Guidebook by the National Collaborating Centre for Methods and Tools (NCCMT)

Hamel, C., Michaud, A., Thuku, M., Skidmore, B., Stevens, A., Nussbaumer-Streit, B., & Garritty, C. (2021). Defining rapid reviews: A systematic scoping review and thematic analysis of definitions and defining characteristics of rapid reviews. Journal of Clinical Epidemiology, 129, 74–85. https://doi.org/10.1016/j.jclinepi.2020.09.041

Systematic Review

- Müller, C., Lautenschläger, S., Meyer, G., & Stephan, A. (2017). Interventions to support people with dementia and their caregivers during the transition from home care to nursing home care: A systematic review. International Journal of Nursing Studies, 71, 139–152. https://doi.org/10.1016/j.ijnurstu.2017.03.013

- Bhui, K. S., Aslam, R. W., Palinski, A., McCabe, R., Johnson, M. R. D., Weich, S., … Szczepura, A. (2015). Interventions to improve therapeutic communications between Black and minority ethnic patients and professionals in psychiatric services: Systematic review. The British Journal of Psychiatry, 207(2), 95–103. https://doi.org/10.1192/bjp.bp.114.158899

- Rosen, L. J., Noach, M. B., Winickoff, J. P., & Hovell, M. F. (2012). Parental smoking cessation to protect young children: A systematic review and meta-analysis. Pediatrics, 129(1), 141–152. https://doi.org/10.1542/peds.2010-3209

Scoping Review

- Hyshka, E., Karekezi, K., Tan, B., Slater, L. G., Jahrig, J., & Wild, T. C. (2017). The role of consumer perspectives in estimating population need for substance use services: A scoping review. BMC Health Services Research, 171-14. https://doi.org/10.1186/s12913-017-2153-z

- Olson, K., Hewit, J., Slater, L. G., Chambers, T., Hicks, D., Farmer, A., & ... Kolb, B. (2016). Assessing cognitive function in adults during or following chemotherapy: A scoping review. Supportive Care in Cancer, 24(7), 3223–3234. https://doi.org/10.1007/s00520-016-3215-1

- Pham, M. T., Rajić, A., Greig, J. D., Sargeant, J. M., Papadopoulos, A., & McEwen, S. A. (2014). A scoping review of scoping reviews: Advancing the approach and enhancing the consistency. Research Synthesis Methods, 5(4), 371–385. https://doi.org/10.1002/jrsm.1123

- Scoping Review Tutorial from UNC at Chapel Hill

Qualitative Systematic Review/Meta-Synthesis

- Lee, H., Tamminen, K. A., Clark, A. M., Slater, L., Spence, J. C., & Holt, N. L. (2015). A meta-study of qualitative research examining determinants of children's independent active free play. International Journal of Behavioral Nutrition & Physical Activity, 12(5), 121-12. https://doi.org/10.1186/s12966-015-0165-9

Videos on systematic reviews

- This video lecture explains in detail the steps necessary to conduct a systematic review (44 min.)

- Here's a brief introduction to how to evaluate systematic reviews (16 min.)

Systematic Reviews: What are they? Are they right for my research? A 47-minute video recording with optional closed captions.

More training videos on systematic reviews:

- Videos from Yale University (approximately 5–10 minutes each)

- Videos with Margaret Foster (approximately 55 minutes each)

Books on Systematic Reviews

Books on Meta-analysis

- University of Toronto Libraries - very detailed with good tips on the sensitivity and specificity of searches.

- Monash University - includes an interactive case study tutorial.

- Dalhousie University Libraries - a comprehensive How-To Guide on conducting a systematic review.

Guidelines for a systematic review as part of the dissertation

- Guidelines for Systematic Reviews in the Context of Doctoral Education Background by the University of Victoria (PDF)

- Can I conduct a Systematic Review as my Master’s dissertation or PhD thesis? Yes, It Depends! by Farhad (blog)

- What is a Systematic Review Dissertation Like? by the University of Edinburgh (50 min video)

Further readings on experiences of PhD students and doctoral programs with systematic reviews

Puljak, L., & Sapunar, D. (2017). Acceptance of a systematic review as a thesis: Survey of biomedical doctoral programs in Europe. Systematic Reviews, 6(1), 253. https://doi.org/10.1186/s13643-017-0653-x

Perry, A., & Hammond, N. (2002). Systematic reviews: The experiences of a PhD student. Psychology Learning & Teaching, 2(1), 32–35. https://doi.org/10.2304/plat.2002.2.1.32

Daigneault, P.-M., Jacob, S., & Ouimet, M. (2014). Using systematic review methods within a Ph.D. dissertation in political science: Challenges and lessons learned from practice. International Journal of Social Research Methodology, 17(3), 267–283. https://doi.org/10.1080/13645579.2012.730704

UMD Doctor of Philosophy Degree Policies

Before you embark on a systematic review research project, check the UMD PhD Policies to make sure you are on the right path. Systematic reviews require a team of at least two reviewers and an information specialist or a librarian. Discuss the authorship roles of all team members with your advisor. Keep in mind that the UMD Doctor of Philosophy Degree Policies (scroll down to the section "Inclusion of one's own previously published materials in a dissertation") address such cases, specifically as follows:

"It is recognized that a graduate student may co-author work with faculty members and colleagues that should be included in a dissertation. In such an event, a letter should be sent to the Dean of the Graduate School certifying that the student's examining committee has determined that the student made a substantial contribution to that work. This letter should also note that the inclusion of the work has the approval of the dissertation advisor and the program chair or Graduate Director. The letter should be included with the dissertation at the time of submission. The format of such inclusions must conform to the standard dissertation format. A foreword to the dissertation, as approved by the Dissertation Committee, must state that the student made substantial contributions to the relevant aspects of the jointly authored work included in the dissertation."


- Cochrane Handbook for Systematic Reviews of Interventions - See Part 2: General methods for Cochrane reviews

- Systematic Searches - Yale library video tutorial series

- Using PubMed's Clinical Queries to Find Systematic Reviews - From the U.S. National Library of Medicine

- Systematic reviews and meta-analyses: A step-by-step guide - From the University of Edinburgh, Centre for Cognitive Ageing and Cognitive Epidemiology


Bioinformatics

- Mariano, D. C., Leite, C., Santos, L. H., Rocha, R. E., & de Melo-Minardi, R. C. (2017). A guide to performing systematic literature reviews in bioinformatics. arXiv preprint arXiv:1707.05813.

Environmental Sciences

Collaboration for Environmental Evidence. (2018). Guidelines and standards for evidence synthesis in environmental management (Version 5.0; A. S. Pullin, G. K. Frampton, B. Livoreil, & G. Petrokofsky, Eds.). www.environmentalevidence.org/information-for-authors

Pullin, A. S., & Stewart, G. B. (2006). Guidelines for systematic review in conservation and environmental management. Conservation Biology, 20 (6), 1647–1656. https://doi.org/10.1111/j.1523-1739.2006.00485.x

Engineering Education

- Borrego, M., Foster, M. J., & Froyd, J. E. (2014). Systematic literature reviews in engineering education and other developing interdisciplinary fields. Journal of Engineering Education, 103(1), 45–76. https://doi.org/10.1002/jee.20038

Public Health

- Hannes, K., & Claes, L. (2007). Learn to read and write systematic reviews: The Belgian Campbell Group. Research on Social Work Practice, 17(6), 748–753. https://doi.org/10.1177/1049731507303106

- McLeroy, K. R., Northridge, M. E., Balcazar, H., Greenberg, M. R., & Landers, S. J. (2012). Reporting guidelines and the American Journal of Public Health’s adoption of preferred reporting items for systematic reviews and meta-analyses. American Journal of Public Health, 102(5), 780–784. https://doi.org/10.2105/AJPH.2011.300630

- Pollock, A., & Berge, E. (2018). How to do a systematic review. International Journal of Stroke, 13(2), 138–156. https://doi.org/10.1177/1747493017743796

- Institute of Medicine. (2011). Finding what works in health care: Standards for systematic reviews. https://doi.org/10.17226/13059

- Wanden-Berghe, C., & Sanz-Valero, J. (2012). Systematic reviews in nutrition: Standardized methodology. The British Journal of Nutrition, 107(Suppl. 2), S3–S7. https://doi.org/10.1017/S0007114512001432

Social Sciences

- Bronson, D., & Davis, T. (2012). Finding and evaluating evidence: Systematic reviews and evidence-based practice (Pocket guides to social work research methods). Oxford: Oxford University Press.

- Petticrew, M., & Roberts, H. (2006). Systematic reviews in the social sciences: A practical guide. Malden, MA: Blackwell Pub.

- Cornell University Library Guide - Systematic literature reviews in engineering: Example: Software Engineering

- Biolchini, J., Mian, P. G., Natali, A. C. C., & Travassos, G. H. (2005). Systematic review in software engineering. System Engineering and Computer Science Department COPPE/UFRJ, Technical Report ES, 679(05), 45.

- Biolchini, J. C., Mian, P. G., Natali, A. C. C., Conte, T. U., & Travassos, G. H. (2007). Scientific research ontology to support systematic review in software engineering. Advanced Engineering Informatics, 21(2), 133–151.

- Kitchenham, B. (2007). Guidelines for performing systematic literature reviews in software engineering [Technical Report]. Keele, UK, Keele University, 33(2004), 1–26.

- Weidt, F., & Silva, R. (2016). Systematic literature review in computer science: A practical guide. Relatórios Técnicos do DCC/UFJF, 1.


Resources for your writing

- Academic Phrasebank - Find inspiration, along with useful terms and phrases, for writing your research paper

- Oxford English Dictionary - Use to locate word variants and proper spelling


- Last Updated: Aug 26, 2024 12:37 PM

- URL: https://lib.guides.umd.edu/SR


- Volume 14, Issue 3

- What is a systematic review?


- Jane Clarke

- Correspondence to Jane Clarke 4 Prime Road, Grey Lynn, Auckland, New Zealand; janeclarkehome{at}gmail.com

https://doi.org/10.1136/ebn.2011.0049


A high-quality systematic review is described as the most reliable source of evidence to guide clinical practice. The purpose of a systematic review is to deliver a meticulous summary of all the available primary research in response to a research question. Because a systematic review draws on all the existing research, it is sometimes called ‘secondary research’ (research on research). Systematic reviews are often required by research funders to establish the state of existing knowledge and are frequently used in guideline development. Systematic review findings are often used within the …

Competing interests None.


Annual Review of Psychology

Volume 70, 2019. Review article: How to Do a Systematic Review: A Best Practice Guide for Conducting and Reporting Narrative Reviews, Meta-Analyses, and Meta-Syntheses

- Andy P. Siddaway, Alex M. Wood, and Larry V. Hedges