
Here's What You Need to Understand About Research Methodology


Research methodology involves a systematic and well-structured approach to conducting scholarly or scientific inquiries. Knowing the significance of research methodology and its different components is crucial as it serves as the basis for any study.

Typically, your research topic will start as a broad idea you want to investigate more thoroughly. Once you’ve identified a research problem and created research questions, you must choose the appropriate methodology and frameworks to address those questions effectively.

What is the definition of a research methodology?

Research methodology is the way you intend to carry out your study. The methodology section of a research paper outlines that plan. It covers steps such as collecting data, performing statistical analysis, observing participants, and other procedures involved in the research process.

The methods section should describe the process that will convert your idea into a study. Additionally, that process must produce valid and reliable results that are consistent with the aims and objectives of your research. This rule of thumb holds whether your paper takes a qualitative or a quantitative approach.

Studying research methods used in related studies can provide helpful insights and direction for your own research.

The need for a good research methodology

When deciding on your research approach, you need to explain and justify the factors you weighed in choosing a particular problem and formulating a research topic. A research methodology helps you do exactly that. Moreover, a good research methodology lets you build an argument for the validity of your work by documenting your data collection methods, analytical methods, and other essential choices.

Just imagine it as a strategy documented to provide an overview of what you intend to do.

While writing up or carrying out your research, you may drift toward matters of little importance. In such cases, a research methodology helps you get back to the plan you outlined.

A research methodology helps in keeping you accountable for your work. Additionally, it can help you evaluate whether your work is in sync with your original aims and objectives or not. Besides, a good research methodology enables you to navigate your research process smoothly and swiftly while providing effective planning to achieve your desired results.

What is the basic structure of a research methodology?

When deciding on the basic structure of your research methodology, you should usually include the following aspects:

1. Your research procedure

Explain what research methods you’re going to use. Whether you intend to proceed with a quantitative approach, a qualitative approach, or a composite of both, you need to state that explicitly. The choice among the three depends on your research’s aim, objectives, and scope.

2. Provide the rationale behind your chosen approach

Based on logic and reason, let your readers know why you have chosen said research methodologies. Additionally, you have to build strong arguments supporting why your chosen research method is the best way to achieve the desired outcome.

3. Explain your mechanism

The mechanism encompasses the research methods or instruments you will use to develop your research methodology. It usually refers to your data collection methods. Of the many available instruments, you can use interviews, surveys, physical questionnaires, and so on. The data collection method is determined by the type of research and by whether the data is quantitative (numerical data) or qualitative (perception, morale, etc.). Moreover, you need to give logical reasoning for choosing a particular instrument.

4. Significance of outcomes

The results will be available once you have finished experimenting. However, you should also explain how you plan to use the data to interpret the findings. This section also aids in understanding the problem from within, breaking it down into pieces, and viewing the research problem from various perspectives.

5. Reader’s advice

Anything that you feel must be explained to spread more awareness among readers and focus groups must be included and described in detail. You should not just specify your research methodology on the assumption that a reader is aware of the topic.

All the relevant information that explains and simplifies your research paper must be included in the methodology section. If you are conducting your research in a non-traditional manner, give a logical justification and list its benefits.

6. Explain your sample space

Include information about the sample and the population it is drawn from in the methodology section. The term "sample" refers to a smaller set of data that a researcher selects from a larger group of people or focus groups using a predetermined selection method. Let your readers know how you are going to distinguish between relevant and non-relevant samples. You must also discuss thoroughly how you arrived at the exact numbers that back your research methodology, such as the sample size for each instrument.

For example, if you are going to conduct a survey or interviews, explain the procedure by which you will select the interviewees (or the sample size, in the case of surveys) and how exactly the interview or survey will be conducted.
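One way to make a selection procedure explicit and repeatable is to script it. The following is a minimal sketch, assuming simple random sampling without replacement; the function name `select_interviewees`, the participant IDs, and the seed value are hypothetical and chosen only for illustration.

```python
import random


def select_interviewees(population, sample_size, seed=2024):
    """Draw a simple random sample without replacement.

    The seed is an illustrative assumption: fixing it lets another
    researcher reproduce exactly the same selection.
    """
    if sample_size > len(population):
        raise ValueError("sample_size cannot exceed the population size")
    rng = random.Random(seed)  # independent generator with a fixed seed
    return rng.sample(population, sample_size)


# Hypothetical sampling frame: 200 anonymized participant IDs.
frame = [f"P{i:03d}" for i in range(1, 201)]
interviewees = select_interviewees(frame, 12)
print(interviewees)
```

Reporting the sampling frame, the sample size, and the seed in the methodology section lets readers check how participants were chosen.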

7. Challenges and limitations

This part, which is frequently assumed to be unnecessary, is actually very important. The challenges and limitations that your chosen strategy inherently possesses must be specified while you are conducting different types of research.

The importance of a good research methodology

You must have observed that all research papers, dissertations, or theses carry a chapter entirely dedicated to research methodology. This section helps maintain your credibility as a better interpreter of results rather than a manipulator.

A good research methodology always explains the procedure, data collection methods and techniques, aim, and scope of the research. In a research study, it leads to a well-organized, reasoned approach, while a paper that lacks it often comes across as messy or disorganized.

You should pay special attention to justifying your chosen approach. This becomes especially important if you select an unconventional or distinctive method of execution.

Curating and developing a strong, effective research methodology can assist you in addressing a variety of situations, such as:

- When someone tries to duplicate or expand upon your research after a few years.

- If a contradiction or conflict of facts occurs at a later time. This gives you the security you need to deal with these contradictions while still being able to defend your approach.

- Gaining a tactical approach in getting your research completed in time. Just ensure you are using the right approach while drafting your research methodology, and it can help you achieve your desired outcomes. Additionally, it provides a better explanation and understanding of the research question itself.

- Documenting the results so that the final outcome of the research stays as you intended it to be while starting.

Instruments you could use while writing a good research methodology

As a researcher, you must choose the tools or data collection methods that best fit your research. This decision has to be made wisely.

There are many research instruments you can use to carry out your research process. These are classified as:

a. Interviews (One-on-One or a Group)

An interview aimed to get your desired research outcomes can be undertaken in many different ways. For example, you can design your interview as structured, semi-structured, or unstructured. What sets them apart is the degree of formality in the questions. On the other hand, in a group interview, your aim should be to collect more opinions and group perceptions from the focus groups on a certain topic rather than looking out for some formal answers.

b. Surveys

In surveys, you have more control if you draft exactly the questions you want answered. For example, you may choose to include open-ended questions that can be answered descriptively, or you may provide multiple-choice responses. You can also combine both, depending on what suits your research process and purpose better.

c. Sample Groups

Similar to the group interviews, here, you can select a group of individuals and assign them a topic to discuss or freely express their opinions over that. You can simultaneously note down the answers and later draft them appropriately, deciding on the relevance of every response.

d. Observations

If your research domain is the humanities or sociology, observation is a well-established method on which to build your research methodology. You can study the spontaneous responses of participants to a situation, or do the same in a more structured manner. A structured observation means putting the participants in a situation at a previously decided time and then studying their responses.

Of all the tools described above, it is up to you to choose wisely which instruments best fit your research. Do not hesitate to use multiple methods or a combination of instruments where that is appropriate for drafting a good research methodology.

Types of research methodology

A research methodology exists in various forms. Depending upon their approach, whether centered around words, numbers, or both, methodologies are distinguished as qualitative, quantitative, or an amalgamation of both.

1. Qualitative research methodology

When a research methodology primarily focuses on words and textual data, then it is generally referred to as qualitative research methodology. This type is usually preferred among researchers when the aim and scope of the research are mainly theoretical and explanatory.

The instruments used are observations, interviews, and sample groups. You can use this methodology if you are trying to study human behavior or response in some situations. Generally, qualitative research methodology is widely used in sociology, psychology, and other related domains.

2. Quantitative research methodology

If your research is centered mainly on data, figures, and statistics, the analysis of this numerical data is referred to as quantitative research methodology. You can use quantitative research methodology if your research requires you to validate or justify the obtained results.

In quantitative methods, surveys, tests, experiments, and evaluations of existing databases can be used as instruments. If your research involves testing a hypothesis, use this methodology.

3. Amalgam methodology

As the name suggests, the amalgam methodology (more commonly called mixed methods) uses both quantitative and qualitative approaches. This methodology is used when one part of the research requires you to verify facts and figures, whereas the other part requires you to explore the theoretical and explanatory nature of the research question.

The instruments for the amalgam methodology require you to conduct interviews and surveys, including tests and experiments. The outcome of this methodology can be insightful and valuable as it provides precise test results in line with theoretical explanations and reasoning.

The amalgam method makes your work both factual and rational at the same time.

Final words: How to decide which is the best research methodology?

If you have kept the aims and scope of your research clearly in view, you should by now have an idea of which research methodology suits your work best.

Before deciding which research methodology answers your research question, you must invest significant time in reading and doing your homework. Consulting references that yielded relevant results should be your first step in establishing a research methodology.

Moreover, you should never refrain from exploring other options. Before setting your work in stone, consider all the available options, because doing so lets you explain why the research methodology you finally choose is more appropriate than the alternatives.

You should always go for a quantitative research methodology if your research requires gathering large amounts of data, figures, and statistics. This research methodology will provide you with results if your research paper involves the validation of some hypothesis.

If, on the other hand, you are looking for explanations, reasons, opinions, and public perceptions around a theory, you should use a qualitative research methodology. The choice of an appropriate research methodology ultimately depends on what you want to achieve through your research.

Frequently Asked Questions (FAQs) about Research Methodology

1. How do you write a research methodology?

You can always provide a separate section for research methodology where you should specify details about the methods and instruments used during the research, discussions on result analysis, including insights into the background information, and conveying the research limitations.

2. What are the types of research methodology?

Four types of research methodology are generally distinguished, including:

- Observation

- Experimental

- Derivational

3. What is the true meaning of research methodology?

The set of techniques or procedures followed to discover and analyze the information gathered to validate or justify a research outcome is generally called Research Methodology.

4. Why is research methodology important?

Your research methodology directly reflects the validity of your research outcomes and how well-informed your research work is. Moreover, it can help future researchers cite or refer to your research if they plan to use a similar research methodology.



Organizing Your Social Sciences Research Paper

6. The Methodology


The methods section describes actions taken to investigate a research problem and the rationale for the application of specific procedures or techniques used to identify, select, process, and analyze information applied to understanding the problem, thereby allowing the reader to critically evaluate a study’s overall validity and reliability. The methodology section of a research paper answers two main questions: How was the data collected or generated? And how was it analyzed? The writing should be direct and precise and always written in the past tense.

Kallet, Richard H. "How to Write the Methods Section of a Research Paper." Respiratory Care 49 (October 2004): 1229-1232.

Importance of a Good Methodology Section

You must explain how you obtained and analyzed your results for the following reasons:

- Readers need to know how the data was obtained because the method you chose affects the results and, by extension, how you interpreted their significance in the discussion section of your paper.

- Methodology is crucial for any branch of scholarship because an unreliable method produces unreliable results and, as a consequence, undermines the value of your analysis of the findings.

- In most cases, there are a variety of different methods you can choose to investigate a research problem. The methodology section of your paper should clearly articulate the reasons why you have chosen a particular procedure or technique.

- The reader wants to know that the data was collected or generated in a way that is consistent with accepted practice in the field of study. For example, if you are using a multiple choice questionnaire, readers need to know that it offered your respondents a reasonable range of answers to choose from.

- The method must be appropriate to fulfilling the overall aims of the study. For example, you need to ensure that you have a large enough sample size to be able to generalize and make recommendations based upon the findings.

- The methodology should discuss the problems that were anticipated and the steps you took to prevent them from occurring. For any problems that do arise, you must describe the ways in which they were minimized or why these problems do not impact in any meaningful way your interpretation of the findings.

- In the social and behavioral sciences, it is important to always provide sufficient information to allow other researchers to adopt or replicate your methodology. This information is particularly important when a new method has been developed or an innovative use of an existing method is utilized.
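The point above about having a large enough sample can be made concrete with a short calculation. The sketch below assumes Cochran's formula for estimating a proportion from a simple random sample, with an optional finite population correction. This formula is not named in the guide; it is one common choice. The function name and the example values are hypothetical.

```python
import math
from statistics import NormalDist


def cochran_sample_size(margin_of_error, confidence=0.95,
                        proportion=0.5, population=None):
    """Estimate the sample size needed to estimate a proportion.

    Assumes simple random sampling. proportion=0.5 is the most
    conservative choice because it maximizes p * (1 - p).
    """
    # Two-sided critical value of the standard normal distribution.
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    n = (z ** 2) * proportion * (1 - proportion) / margin_of_error ** 2
    if population is not None:
        # Finite population correction for small populations.
        n = n / (1 + (n - 1) / population)
    return math.ceil(n)


# Illustrative values: 5% margin of error at 95% confidence.
print(cochran_sample_size(0.05))                   # very large population
print(cochran_sample_size(0.05, population=2000))  # population of 2,000
```

Stating the margin of error, confidence level, and assumed proportion alongside the resulting number shows readers how the sample size was justified.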

Bem, Daryl J. Writing the Empirical Journal Article. Psychology Writing Center. University of Washington; Denscombe, Martyn. The Good Research Guide: For Small-Scale Social Research Projects. 5th edition. Buckingham, UK: Open University Press, 2014; Lunenburg, Frederick C. Writing a Successful Thesis or Dissertation: Tips and Strategies for Students in the Social and Behavioral Sciences. Thousand Oaks, CA: Corwin Press, 2008.

Structure and Writing Style

I. Groups of Research Methods

There are two main groups of research methods in the social sciences:

- The empirical-analytical group approaches the study of social sciences in a manner similar to how researchers study the natural sciences. This type of research focuses on objective knowledge, research questions that can be answered yes or no, and operational definitions of variables to be measured. The empirical-analytical group employs deductive reasoning that uses existing theory as a foundation for formulating hypotheses that need to be tested. This approach is focused on explanation.

- The interpretative group of methods is focused on understanding phenomena in a comprehensive, holistic way. Interpretive methods focus on analytically disclosing the meaning-making practices of human subjects [the why, how, or by what means people do what they do], while showing how those practices are arranged so that they can be used to generate observable outcomes. Interpretive methods allow you to recognize your connection to the phenomena under investigation. However, the interpretative group requires careful examination of variables because it focuses more on subjective knowledge.

II. Content

The introduction to your methodology section should begin by restating the research problem and underlying assumptions underpinning your study. This is followed by situating the methods you used to gather, analyze, and process information within the overall “tradition” of your field of study and within the particular research design you have chosen to study the problem. If the method you choose lies outside of the tradition of your field [i.e., your review of the literature demonstrates that the method is not commonly used], provide a justification for how your choice of methods specifically addresses the research problem in ways that have not been utilized in prior studies.

The remainder of your methodology section should describe the following:

- Decisions made in selecting the data you have analyzed or, in the case of qualitative research, the subjects and research setting you have examined,

- Tools and methods used to identify and collect information, and how you identified relevant variables,

- The ways in which you processed the data and the procedures you used to analyze that data, and

- The specific research tools or strategies that you utilized to study the underlying hypothesis and research questions.

In addition, an effectively written methodology section should:

- Introduce the overall methodological approach for investigating your research problem. Is your study qualitative or quantitative or a combination of both (mixed method)? Are you going to take a special approach, such as action research, or a more neutral stance?

- Indicate how the approach fits the overall research design. Your methods for gathering data should have a clear connection to your research problem. In other words, make sure that your methods will actually address the problem. One of the most common deficiencies found in research papers is that the proposed methodology is not suitable to achieving the stated objective of your paper.

- Describe the specific methods of data collection you are going to use, such as surveys, interviews, questionnaires, observation, or archival research. If you are analyzing existing data, such as a data set or archival documents, describe how it was originally created or gathered and by whom. Also be sure to explain how older data is still relevant to investigating the current research problem.

- Explain how you intend to analyze your results. Will you use statistical analysis? Will you use specific theoretical perspectives to help you analyze a text or explain observed behaviors? Describe how you plan to obtain an accurate assessment of relationships, patterns, trends, distributions, and possible contradictions found in the data.

- Provide background and a rationale for methodologies that are unfamiliar for your readers. Very often in the social sciences, research problems and the methods for investigating them require more explanation/rationale than widely accepted rules governing the natural and physical sciences. Be clear and concise in your explanation.

- Provide a justification for subject selection and sampling procedure. For instance, if you propose to conduct interviews, how do you intend to select the sample population? If you are analyzing texts, which texts have you chosen, and why? If you are using statistics, why is this set of data being used? If other data sources exist, explain why the data you chose is most appropriate to addressing the research problem.

- Provide a justification for case study selection. A common method of analyzing research problems in the social sciences is to analyze specific cases. These can be a person, place, event, phenomenon, or other type of subject of analysis that are either examined as a singular topic of in-depth investigation or multiple topics of investigation studied for the purpose of comparing or contrasting findings. In either method, you should explain why a case or cases were chosen and how they specifically relate to the research problem.

- Describe potential limitations. Are there any practical limitations that could affect your data collection? How will you attempt to control for potential confounding variables and errors? If your methodology may lead to problems you can anticipate, state this openly and show why pursuing this methodology outweighs the risk of these problems cropping up.

NOTE: Once you have written all of the elements of the methods section, subsequent revisions should focus on how to present those elements as clearly and as logically as possible. The description of how you prepared to study the research problem, how you gathered the data, and the protocol for analyzing the data should be organized chronologically. For clarity, when a large amount of detail must be presented, information should be presented in sub-sections according to topic. If necessary, consider using appendices for raw data.

ANOTHER NOTE: If you are conducting a qualitative analysis of a research problem, the methodology section generally requires a more elaborate description of the methods used, as well as an explanation of the processes applied to gathering and analyzing data, than is generally required for studies using quantitative methods. Because you are the primary instrument for generating the data [e.g., through interviews or observations], the process for collecting that data has a significantly greater impact on producing the findings. Therefore, qualitative research requires a more detailed description of the methods used.

YET ANOTHER NOTE: If your study involves interviews, observations, or other qualitative techniques involving human subjects, you may be required to obtain approval from the university's Office for the Protection of Research Subjects before beginning your research. This is not a common procedure for most undergraduate level student research assignments. However, if your professor states you need approval, you must include a statement in your methods section that you received official endorsement and adequate informed consent from the office and that there was a clear assessment and minimization of risks to participants and to the university. This statement informs the reader that your study was conducted in an ethical and responsible manner. In some cases, the approval notice is included as an appendix to your paper.

III. Problems to Avoid

Irrelevant Detail. The methodology section of your paper should be thorough but concise. Do not provide any background information that does not directly help the reader understand why a particular method was chosen, how the data was gathered or obtained, and how the data was analyzed in relation to the research problem [note: analyzed, not interpreted! Save how you interpreted the findings for the discussion section]. With this in mind, the page length of your methods section will generally be less than any other section of your paper except the conclusion.

Unnecessary Explanation of Basic Procedures. Remember that you are not writing a how-to guide about a particular method. You should make the assumption that readers possess a basic understanding of how to investigate the research problem on their own and, therefore, you do not have to go into great detail about specific methodological procedures. The focus should be on how you applied a method, not on the mechanics of doing a method. An exception to this rule is if you select an unconventional methodological approach; if this is the case, be sure to explain why this approach was chosen and how it enhances the overall process of discovery.

Problem Blindness. It is almost a given that you will encounter problems when collecting or generating your data, or that gaps will exist in existing data or archival materials. Do not ignore these problems or pretend they did not occur. Often, documenting how you overcame obstacles can form an interesting part of the methodology. It demonstrates to the reader that you can provide a cogent rationale for the decisions you made to minimize the impact of any problems that arose.

Literature Review. Just as the literature review section of your paper provides an overview of sources you have examined while researching a particular topic, the methodology section should cite any sources that informed your choice and application of a particular method [i.e., the choice of a survey should include any citations to the works you used to help construct the survey].

It’s More than Sources of Information! A description of a research study's method should not be confused with a description of the sources of information. Such a list of sources is useful in and of itself, especially if it is accompanied by an explanation about the selection and use of the sources. The description of the project's methodology complements a list of sources in that it sets forth the organization and interpretation of information emanating from those sources.

Azevedo, L.F. et al. "How to Write a Scientific Paper: Writing the Methods Section." Revista Portuguesa de Pneumologia 17 (2011): 232-238; Blair, Lorrie. “Choosing a Methodology.” In Writing a Graduate Thesis or Dissertation, Teaching Writing Series. (Rotterdam: Sense Publishers, 2016), pp. 49-72; Butin, Dan W. The Education Dissertation: A Guide for Practitioner Scholars. Thousand Oaks, CA: Corwin, 2010; Carter, Susan. Structuring Your Research Thesis. New York: Palgrave Macmillan, 2012; Kallet, Richard H. “How to Write the Methods Section of a Research Paper.” Respiratory Care 49 (October 2004): 1229-1232; Lunenburg, Frederick C. Writing a Successful Thesis or Dissertation: Tips and Strategies for Students in the Social and Behavioral Sciences. Thousand Oaks, CA: Corwin Press, 2008; Methods Section. The Writer’s Handbook. Writing Center. University of Wisconsin, Madison; Rudestam, Kjell Erik and Rae R. Newton. “The Method Chapter: Describing Your Research Plan.” In Surviving Your Dissertation: A Comprehensive Guide to Content and Process. (Thousand Oaks, CA: Sage Publications, 2015), pp. 87-115; What is Interpretive Research. Institute of Public and International Affairs, University of Utah; Writing the Experimental Report: Methods, Results, and Discussion. The Writing Lab and The OWL. Purdue University; Methods and Materials. The Structure, Format, Content, and Style of a Journal-Style Scientific Paper. Department of Biology. Bates College.

Writing Tip

Statistical Designs and Tests? Do Not Fear Them!

Don't avoid using a quantitative approach to analyzing your research problem just because you fear the idea of applying statistical designs and tests. A qualitative approach, such as conducting interviews or content analysis of archival texts, can yield exciting new insights about a research problem, but it should not be undertaken simply because you have a disdain for running a simple regression. A well-designed quantitative research study can often be accomplished in very clear and direct ways, whereas a similar study of a qualitative nature usually requires considerable time to analyze large volumes of data and carries a tremendous burden to create new paths for analysis where previously no path associated with your research problem had existed.
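To illustrate how little machinery a simple regression needs, here is a minimal sketch of ordinary least squares with one predictor, written with the Python standard library only. The function name and the data (hours studied against test scores) are hypothetical and invented purely for illustration. A real study would normally use a statistics package and also report standard errors and diagnostics.

```python
from statistics import mean


def simple_regression(x, y):
    """Fit y = intercept + slope * x by ordinary least squares.

    Returns (slope, intercept, r_squared).
    """
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    x_bar, y_bar = mean(x), mean(y)
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    if sxx == 0:
        raise ValueError("x must contain at least two distinct values")
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    # Share of the variance in y explained by the fitted line.
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - y_bar) ** 2 for yi in y)
    r_squared = 1 - ss_res / ss_tot if ss_tot > 0 else 1.0
    return slope, intercept, r_squared


# Hypothetical data: hours studied and test scores for five students.
hours = [1, 2, 3, 4, 5]
scores = [52, 55, 61, 64, 68]
slope, intercept, r_squared = simple_regression(hours, scores)
print(f"slope={slope:.2f}, intercept={intercept:.2f}, R^2={r_squared:.3f}")
```

The slope estimates how much the outcome changes per unit of the predictor, which is often the quantity a hypothesis is about.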


Another Writing Tip

Knowing the Relationship Between Theories and Methods

There can be multiple meanings associated with the term "theories" and the term "methods" in social sciences research. A helpful way to delineate between them is to understand "theories" as representing different ways of characterizing the social world when you research it and "methods" as representing different ways of generating and analyzing data about that social world. Framed in this way, all empirical social sciences research involves theories and methods, whether they are stated explicitly or not. However, while theories and methods are often related, it is important that, as a researcher, you deliberately separate them in order to avoid your theories playing a disproportionate role in shaping what outcomes your chosen methods produce.

Introspectively engage in an ongoing dialectic between the application of theories and methods to help enable you to use the outcomes from your methods to interrogate and develop new theories, or ways of framing conceptually the research problem. This is how scholarship grows and branches out into new intellectual territory.

Reynolds, R. Larry. Ways of Knowing. Alternative Microeconomics . Part 1, Chapter 3. Boise State University; The Theory-Method Relationship. S-Cool Revision. United Kingdom.

Yet Another Writing Tip

Methods and the Methodology

Do not confuse the terms "methods" and "methodology." As Schneider notes, a method refers to the technical steps taken to do research. Descriptions of methods usually include defining and stating why you have chosen specific techniques to investigate a research problem, followed by an outline of the procedures you used to systematically select, gather, and process the data [remember to always save the interpretation of data for the discussion section of your paper].

The methodology refers to a discussion of the underlying reasoning why particular methods were used. This discussion includes describing the theoretical concepts that inform the choice of methods to be applied, placing the choice of methods within the more general nature of academic work, and reviewing its relevance to examining the research problem. The methodology section also includes a thorough review of the methods other scholars have used to study the topic.

Bryman, Alan. "Of Methods and Methodology." Qualitative Research in Organizations and Management: An International Journal 3 (2008): 159-168; Schneider, Florian. “What's in a Methodology: The Difference between Method, Methodology, and Theory…and How to Get the Balance Right?” PoliticsEastAsia.com. Chinese Department, University of Leiden, Netherlands.

- Last Updated: Jul 3, 2024 10:07 AM

- URL: https://libguides.usc.edu/writingguide

Research Methodology Example

Detailed Walkthrough + Free Methodology Chapter Template

If you’re working on a dissertation or thesis and are looking for an example of a research methodology chapter , you’ve come to the right place.

In this video, we walk you through a research methodology from a dissertation that earned full distinction , step by step. We start off by discussing the core components of a research methodology by unpacking our free methodology chapter template . We then progress to the sample research methodology to show how these concepts are applied in an actual dissertation, thesis or research project.

If you’re currently working on your research methodology chapter, you may also find the following resources useful:

- Research methodology 101 : an introductory video discussing what a methodology is and the role it plays within a dissertation

- Research design 101 : an overview of the most common research designs for both qualitative and quantitative studies

- Variables 101 : an introductory video covering the different types of variables that exist within research.

- Sampling 101 : an overview of the main sampling methods

- Methodology tips : a video discussion covering various tips to help you write a high-quality methodology chapter

- Private coaching : Get hands-on help with your research methodology

PS – If you’re working on a dissertation, be sure to also check out our collection of dissertation and thesis examples here .

FAQ: Research Methodology Example

Is the sample research methodology real?

Yes. The chapter example is an extract from a Master’s-level dissertation for an MBA program. A few minor edits have been made to protect the privacy of the sponsoring organisation, but these have no material impact on the research methodology.

Can I replicate this methodology for my dissertation?

As we discuss in the video, every research methodology will be different, depending on the research aims, objectives and research questions. Therefore, you’ll need to tailor your research methodology to suit your specific context.

You can learn more about the basics of writing a research methodology chapter here .

Where can I find more examples of research methodologies?

The best place to find more examples of methodology chapters would be within dissertation/thesis databases. These databases include dissertations, theses and research projects that have successfully passed the assessment criteria for the respective university, meaning that you have at least some sort of quality assurance.

The Open Access Thesis Database (OATD) is a good starting point.

How do I get the research methodology chapter template?

You can access our free methodology chapter template here .

Is the methodology template really free?

Yes. There is no cost for the template and you are free to use it as you wish.

How to Write Your Methods

Ensure understanding, reproducibility and replicability

What should you include in your methods section, and how much detail is appropriate?

Why Methods Matter

The methods section was once the most likely part of a paper to be unfairly abbreviated, overly summarized, or even relegated to hard-to-find sections of a publisher’s website. While some journals may responsibly include more detailed elements of methods in supplementary sections, the movement for increased reproducibility and rigor in science has reinstated the importance of the methods section. Methods are now viewed as a key element in establishing the credibility of the research being reported, alongside the open availability of data and results.

A clear methods section impacts editorial evaluation and readers’ understanding, and is also the backbone of transparency and replicability.

For example, the Reproducibility Project: Cancer Biology set out in 2013 to replicate experiments from 50 high-profile cancer papers, but revised its target to 18 papers once the team understood how much methodological detail was missing from the original papers.

What to include in your methods section

What you include in your methods sections depends on what field you are in and what experiments you are performing. However, the general principle in place at the majority of journals is summarized well by the guidelines at PLOS ONE : “The Materials and Methods section should provide enough detail to allow suitably skilled investigators to fully replicate your study. ” The emphases here are deliberate: the methods should enable readers to understand your paper, and replicate your study. However, there is no need to go into the level of detail that a lay-person would require—the focus is on the reader who is also trained in your field, with the suitable skills and knowledge to attempt a replication.

A constant principle of rigorous science

A methods section that enables other researchers to understand and replicate your results is a constant principle of rigorous, transparent, and Open Science. Aim to be thorough, even if a particular journal doesn’t require the same level of detail. Reproducibility is all of our responsibility. You cannot create any problems by exceeding a minimum standard of information. If a journal still has word limits, either for the overall article or for specific sections, and requires some methodological details to be in a supplemental section, that is OK as long as the extra details are searchable and findable.

Imagine replicating your own work, years in the future

As part of PLOS’ presentation on Reproducibility and Open Publishing (part of UCSF’s Reproducibility Series ) we recommend planning the level of detail in your methods section by imagining you are writing for your future self, replicating your own work. When you consider that you might be at a different institution, with different account logins, applications, resources, and access levels—you can help yourself imagine the level of specificity that you yourself would require to redo the exact experiment. Consider:

- Which details would you need to be reminded of?

- Which cell line, or antibody, or software, or reagent did you use, and does it have a Research Resource ID (RRID) that you can cite?

- Which version of a questionnaire did you use in your survey?

- Exactly which visual stimulus did you show participants, and is it publicly available?

- Which participants did you decide to exclude?

- Which processes did you adjust during your work?

Tip: Be sure to capture any changes to your protocols

You yourself would want to know about any adjustments, if you ever replicate the work, so you can surmise that anyone else would want to as well. Even if a necessary adjustment you made was not ideal, transparency is the key to ensuring this is not regarded as an issue in the future. It is far better to transparently convey any non-optimal methods, or methodological constraints, than to conceal them, which could result in reproducibility or ethical issues downstream.

Visual aids for methods help when reading the whole paper

Consider whether a visual representation of your methods could be appropriate or aid understanding your process. A visual reference readers can easily return to, like a flow-diagram, decision-tree, or checklist, can help readers to better understand the complete article, not just the methods section.

Ethical Considerations

In addition to describing what you did, it is just as important to assure readers that you also followed all relevant ethical guidelines when conducting your research. While ethical standards and reporting guidelines are often presented in a separate section of a paper, ensure that your methods and protocols actually follow these guidelines. Read more about ethics .

Existing standards, checklists, guidelines, partners

While the level of detail contained in a methods section should be guided by the universal principles of rigorous science outlined above, various disciplines, fields, and projects have worked hard to design and develop consistent standards, guidelines, and tools to help with reporting all types of experiment. Below, you’ll find some of the key initiatives. Ensure you read the submission guidelines for the specific journal you are submitting to, in order to discover any further journal- or field-specific policies to follow, or initiatives/tools to utilize.

Tip: Keep your paper moving forward by providing the proper paperwork up front

Be sure to check the journal guidelines and provide the necessary documents with your manuscript submission. Collecting the necessary documentation can greatly slow the first round of peer review, or cause delays when you submit your revision.

Randomized Controlled Trials – CONSORT

The Consolidated Standards of Reporting Trials (CONSORT) project covers various initiatives intended to prevent the problems of inadequate reporting of randomized controlled trials. The primary initiative is an evidence-based minimum set of recommendations for reporting randomized trials known as the CONSORT Statement.

Systematic Reviews and Meta-Analyses – PRISMA

The Preferred Reporting Items for Systematic Reviews and Meta-Analyses (PRISMA) is an evidence-based minimum set of items focusing on the reporting of reviews evaluating randomized trials and other types of research.

Research using Animals – ARRIVE

The Animal Research: Reporting of In Vivo Experiments (ARRIVE) guidelines encourage maximizing the information reported in research using animals, thereby minimizing unnecessary studies. (Original study and proposal, and updated guidelines, in PLOS Biology.)

Laboratory Protocols

Protocols.io has developed a platform specifically for the sharing and updating of laboratory protocols, which are assigned their own DOI and can be linked from methods sections of papers to enhance reproducibility. Contextualize your protocol and improve discovery with an accompanying Lab Protocol article in PLOS ONE.

Consistent reporting of Materials, Design, and Analysis – the MDAR checklist

A cross-publisher group of editors and experts has developed, tested, and rolled out a checklist to help establish and harmonize reporting standards in the Life Sciences. The checklist, which is available for authors to compile their methods and for editors and reviewers to check methods, establishes a minimum set of requirements in transparent reporting and is adaptable to any discipline within the Life Sciences, by covering a breadth of potentially relevant methodological items and considerations. If you are in the Life Sciences and writing up your methods section, try working through the MDAR checklist and see whether it helps you include all relevant details in your methods, and whether it reminds you of anything you might otherwise have missed.

Summary

Writing tips

The main challenge you may find when writing your methods is keeping it readable AND covering all the details needed for reproducibility and replicability. While this is difficult, do not compromise on rigorous standards for credibility!

Do

- Keep in mind future replicability, alongside understanding and readability.

- Follow checklists, and field- and journal-specific guidelines.

- Consider a commitment to rigorous and transparent science a personal responsibility, and not just adhering to journal guidelines.

- Establish whether there are persistent identifiers for any research resources you use that can be specifically cited in your methods section.

- Deposit your laboratory protocols in Protocols.io, establishing a permanent link to them. You can update your protocols later if you improve on them, as can future scientists who follow your protocols.

- Consider visual aids, such as flow diagrams or lists, to help readers follow the other sections of the paper.

- Be specific about all decisions made during the experiments that someone reproducing your work would need to know.

Don’t

- Summarize or abbreviate methods without giving full details in a discoverable supplemental section.

- Presume you will always be able to remember how you performed the experiments, or have access to private or institutional notebooks and resources.

- Attempt to hide constraints or non-optimal decisions you had to make–transparency is the key to ensuring the credibility of your research.

What is Research Methodology? Definition, Types, and Examples

Research methodology 1,2 is a structured and scientific approach used to collect, analyze, and interpret quantitative or qualitative data to answer research questions or test hypotheses. A research methodology is like a plan for carrying out research and helps keep researchers on track by limiting the scope of the research. Several aspects must be considered before selecting an appropriate research methodology, such as research limitations and ethical concerns that may affect your research.

The research methodology section in a scientific paper describes the different methodological choices made, such as the data collection and analysis methods, and why these choices were selected. The reasons should explain why the methods chosen are the most appropriate to answer the research question. A good research methodology also helps ensure the reliability and validity of the research findings. There are three types of research methodology—quantitative, qualitative, and mixed-method, which can be chosen based on the research objectives.

What is research methodology?

A research methodology describes the techniques and procedures used to identify and analyze information regarding a specific research topic. It is a process by which researchers design their study so that they can achieve their objectives using the selected research instruments. It includes all the important aspects of research, including research design, data collection methods, data analysis methods, and the overall framework within which the research is conducted. While these points can help you understand what research methodology is, you also need to know why it is important to pick the right methodology.

Why is research methodology important?

Having a good research methodology in place has the following advantages: 3

- Helps other researchers who may want to replicate your research; the explanations will be of benefit to them.

- You can easily answer any questions about your research if they arise at a later stage.

- A research methodology provides a framework and guidelines for researchers to clearly define research questions, hypotheses, and objectives.

- It helps researchers identify the most appropriate research design, sampling technique, and data collection and analysis methods.

- A sound research methodology helps researchers ensure that their findings are valid and reliable and free from biases and errors.

- It also helps ensure that ethical guidelines are followed while conducting research.

- A good research methodology helps researchers in planning their research efficiently, by ensuring optimum usage of their time and resources.

Types of research methodology

There are three types of research methodology based on the type of research and the data required. 1

- Quantitative research methodology focuses on measuring and testing numerical data. This approach is good for reaching a large number of people in a short amount of time. This type of research helps in testing the causal relationships between variables, making predictions, and generalizing results to wider populations.

- Qualitative research methodology examines the opinions, behaviors, and experiences of people. It collects and analyzes words and textual data. This research methodology requires fewer participants but is still more time consuming because the time spent per participant is quite large. This method is used in exploratory research where the research problem being investigated is not clearly defined.

- Mixed-method research methodology uses the characteristics of both quantitative and qualitative research methodologies in the same study. This method allows researchers to validate their findings, verify if the results observed using both methods are complementary, and explain any unexpected results obtained from one method by using the other method.

What are the types of sampling designs in research methodology?

Sampling 4 is an important part of a research methodology and involves selecting a representative sample of the population to conduct the study, making statistical inferences about them, and estimating the characteristics of the whole population based on these inferences. There are two types of sampling designs in research methodology—probability and nonprobability.

- Probability sampling

In this type of sampling design, a sample is chosen from a larger population using some form of random selection, so that every member of the population has a known, nonzero chance of being selected (an equal chance, in the case of simple random sampling). The different types of probability sampling are:

- Systematic —sample members are chosen at regular intervals. It requires selecting a starting point for the sample and sample size determination that can be repeated at regular intervals. This type of sampling method has a predefined range; hence, it is the least time consuming.

- Stratified —researchers divide the population into smaller groups that don’t overlap but represent the entire population. While sampling, these groups can be organized, and then a sample can be drawn from each group separately.

- Cluster —the population is divided into clusters based on demographic parameters like age, sex, location, etc., and entire clusters are then randomly selected for the study.

- Nonprobability sampling

In this type of sampling design, participants are selected using non-random criteria, such as accessibility or the researcher’s judgment, so not every member of the population has a chance of being included. The different types of nonprobability sampling are:

- Convenience —selects participants who are most easily accessible to researchers due to geographical proximity, availability at a particular time, etc.

- Purposive —participants are selected at the researcher’s discretion. Researchers consider the purpose of the study and the understanding of the target audience.

- Snowball —already selected participants use their social networks to refer the researcher to other potential participants.

- Quota —while designing the study, the researchers decide how many people with which characteristics to include as participants. The characteristics help in choosing people most likely to provide insights into the subject.
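
The probability designs described above can be sketched in a few lines of Python. This is only an illustrative sketch using the standard library: the function names, the `seed` parameter, and the example population are assumptions made for the illustration, not part of any standard sampling tool.

```python
import random

def systematic_sample(population, n, seed=None):
    """Pick every k-th member after a random start, where k = len(population) // n."""
    if not 0 < n <= len(population):
        raise ValueError("n must be between 1 and the population size")
    rng = random.Random(seed)
    k = len(population) // n           # fixed sampling interval
    start = rng.randrange(k)           # random starting point within the first interval
    return [population[start + i * k] for i in range(n)]

def stratified_sample(population, stratum_of, fraction, seed=None):
    """Draw the same fraction at random from each non-overlapping group (stratum)."""
    rng = random.Random(seed)
    strata = {}
    for member in population:
        strata.setdefault(stratum_of(member), []).append(member)
    sample = []
    for members in strata.values():
        size = max(1, round(len(members) * fraction))
        sample.extend(rng.sample(members, size))
    return sample

def cluster_sample(clusters, n_clusters, seed=None):
    """Randomly choose whole clusters and include every member of each chosen cluster."""
    rng = random.Random(seed)
    chosen = rng.sample(sorted(clusters), n_clusters)
    return [member for name in chosen for member in clusters[name]]

# Hypothetical population: 60 undergraduates and 40 postgraduates.
students = [(i, "undergrad") for i in range(60)] + [(i, "postgrad") for i in range(60, 100)]
every_tenth = systematic_sample(students, 10, seed=1)
ten_percent = stratified_sample(students, lambda s: s[1], 0.10, seed=1)
```

Passing a `seed` makes a draw repeatable, which is useful when the sampling procedure has to be reported in a methods section.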

What are data collection methods?

During research, data are collected using various methods depending on the research methodology being followed and the research methods being undertaken. Both qualitative and quantitative research have different data collection methods, as listed below.

Qualitative research 5

- One-on-one interviews: Helps the interviewers understand a respondent’s subjective opinion and experience pertaining to a specific topic or event

- Document study/literature review/record keeping: Researchers’ review of already existing written materials such as archives, annual reports, research articles, guidelines, policy documents, etc.

- Focus groups: Constructive discussions that usually include a small sample of about 6-10 people and a moderator, to understand the participants’ opinion on a given topic.

- Qualitative observation : Researchers collect data using their five senses (sight, smell, touch, taste, and hearing).

Quantitative research 6

- Sampling: The most common type is probability sampling.

- Interviews: Commonly telephonic or done in-person.

- Observations: Structured observations are most commonly used in quantitative research. In this method, researchers make observations about specific behaviors of individuals in a structured setting.

- Document review: Reviewing existing research or documents to collect evidence for supporting the research.

- Surveys and questionnaires. Surveys can be administered both online and offline depending on the requirement and sample size.

What are data analysis methods?

The data collected using the various methods for qualitative and quantitative research need to be analyzed to generate meaningful conclusions. These data analysis methods 7 also differ between quantitative and qualitative research.

Quantitative research involves a deductive method for data analysis where hypotheses are developed at the beginning of the research and precise measurement is required. The methods include statistical analysis applications to analyze numerical data and are grouped into two categories—descriptive and inferential.

Descriptive analysis is used to describe the basic features of different types of data to present it in a way that ensures the patterns become meaningful. The different types of descriptive analysis methods are:

- Measures of frequency (count, percent, frequency)

- Measures of central tendency (mean, median, mode)

- Measures of dispersion or variation (range, variance, standard deviation)

- Measure of position (percentile ranks, quartile ranks)
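
As a rough illustration, the four groups of descriptive measures listed above can be computed with Python's standard `statistics` module. The `scores` data and the `describe` helper are hypothetical, invented only for this sketch.

```python
import statistics
from collections import Counter

# Hypothetical test scores from ten participants.
scores = [72, 85, 85, 90, 64, 78, 85, 90, 70, 81]

def describe(data):
    """Return one example of each family of descriptive measures."""
    q1, q2, q3 = statistics.quantiles(data, n=4)   # quartile cut points
    return {
        # Measures of frequency
        "frequency": dict(Counter(data)),
        # Measures of central tendency
        "mean": statistics.mean(data),
        "median": statistics.median(data),
        "mode": statistics.mode(data),
        # Measures of dispersion (sample variance and standard deviation)
        "range": max(data) - min(data),
        "variance": statistics.variance(data),
        "std_dev": statistics.stdev(data),
        # Measures of position
        "quartiles": (q1, q2, q3),
    }

summary = describe(scores)
```

Note that `statistics.variance` and `statistics.stdev` return the sample versions; `pvariance` and `pstdev` would be the choice if the data covered the whole population.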

Inferential analysis is used to make predictions about a larger population based on the analysis of data collected from a sample of that population. This analysis is used to study the relationships between different variables. Some commonly used inferential data analysis methods are:

- Correlation: To understand the relationship between two or more variables.

- Cross-tabulation: Analyze the relationship between multiple variables.

- Regression analysis: Study the impact of independent variables on the dependent variable.

- Frequency tables: To understand the frequency of data.

- Analysis of variance: To test whether the means of two or more groups differ in an experiment.
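
To make two of the methods above concrete, the sketch below computes a Pearson correlation coefficient and a simple least-squares regression line by hand. The `hours` and `scores` data and both function names are hypothetical. The code produces sample estimates only; a significance test (for example, a p-value for the slope) would normally come from a dedicated statistics package.

```python
import math

def pearson_r(x, y):
    """Pearson correlation: covariance scaled by the spread of both variables."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)

def simple_regression(x, y):
    """Least-squares slope and intercept for one independent variable."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    slope = (sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
             / sum((a - mean_x) ** 2 for a in x))
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Hypothetical data: hours studied (independent) and exam score (dependent).
hours = [1, 2, 3, 4, 5]
scores = [52, 55, 61, 64, 68]

r = pearson_r(hours, scores)
slope, intercept = simple_regression(hours, scores)
predicted_for_6_hours = intercept + slope * 6
```

Here the slope estimates how many additional points each extra hour of study is associated with in this sample; it describes an association and does not by itself establish causation.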

Qualitative research involves an inductive method for data analysis where hypotheses are developed after data collection. The methods include:

- Content analysis: For analyzing documented information from text and images by determining the presence of certain words or concepts in texts.

- Narrative analysis: For analyzing content obtained from sources such as interviews, field observations, and surveys. The stories and opinions shared by people are used to answer research questions.

- Discourse analysis: For analyzing interactions with people considering the social context, that is, the lifestyle and environment, under which the interaction occurs.

- Grounded theory: Involves hypothesis creation by data collection and analysis to explain why a phenomenon occurred.

- Thematic analysis: To identify important themes or patterns in data and use these to address an issue.
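
Of the methods listed above, content analysis is the easiest to illustrate in code, since its simplest form counts how often words tied to a concept appear in the texts. The sketch below assumes a hand-made codebook; the `responses`, the `codebook`, and the `concept_counts` function are all hypothetical, and real content analysis usually involves a more carefully developed coding scheme.

```python
import re
from collections import Counter

def concept_counts(texts, concepts):
    """Count how often each concept's keywords appear across all texts."""
    totals = Counter()
    for text in texts:
        words = Counter(re.findall(r"[a-z']+", text.lower()))
        for concept, keywords in concepts.items():
            totals[concept] += sum(words[k] for k in keywords)
    return dict(totals)

# Hypothetical interview excerpts and codebook.
responses = [
    "The workload was stressful, but my supervisor gave great support.",
    "Support from peers helped; deadlines caused stress.",
]
codebook = {
    "stress": ["stress", "stressful"],
    "support": ["support", "supportive"],
}

counts = concept_counts(responses, codebook)
```

Keyword counts of this kind only show that a concept is present; interpreting what participants meant still requires reading the passages in context.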

How to choose a research methodology?

Here are some important factors to consider when choosing a research methodology: 8

- Research objectives, aims, and questions —these would help structure the research design.

- Review existing literature to identify any gaps in knowledge.

- Check the statistical requirements —if data-driven or statistical results are needed then quantitative research is the best. If the research questions can be answered based on people’s opinions and perceptions, then qualitative research is most suitable.

- Sample size —sample size can often determine the feasibility of a research methodology. For a large sample, less effort- and time-intensive methods are appropriate.

- Constraints —constraints of time, geography, and resources can help define the appropriate methodology.

How to write a research methodology

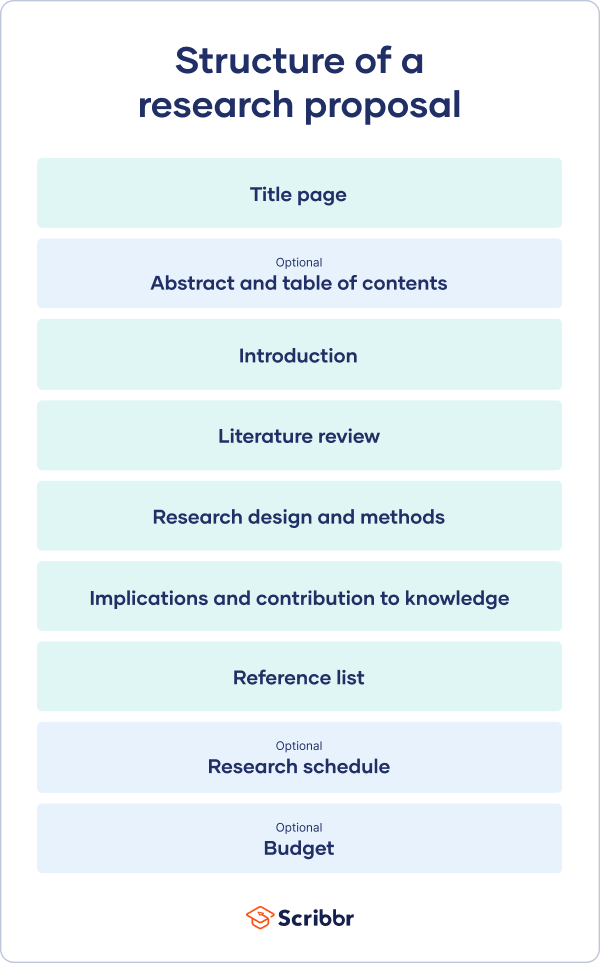

A research methodology should include the following components: 3,9

- Research design —should be selected based on the research question and the data required. Common research designs include experimental, quasi-experimental, correlational, descriptive, and exploratory.

- Research method —this can be quantitative, qualitative, or mixed-method.

- Reason for selecting a specific methodology —explain why this methodology is the most suitable to answer your research problem.

- Research instruments —explain the research instruments you plan to use, mainly referring to the data collection methods such as interviews, surveys, etc. Here as well, a reason should be mentioned for selecting the particular instrument.

- Sampling —this involves selecting a representative subset of the population being studied.

- Data collection —involves gathering data using several data collection methods, such as surveys, interviews, etc.

- Data analysis —describe the data analysis methods you will use once you’ve collected the data.

- Research limitations —mention any limitations you foresee while conducting your research.

- Validity and reliability —validity helps identify the accuracy and truthfulness of the findings; reliability refers to the consistency and stability of the results over time and across different conditions.

- Ethical considerations —research should be conducted ethically. The considerations include obtaining consent from participants, maintaining confidentiality, and addressing conflicts of interest.

Streamline Your Research Paper Writing Process with Paperpal

The methods section is a critical part of a research paper: other researchers use it to understand your findings and to replicate your work when pursuing their own research. However, it is usually also the most difficult section to write. This is where Paperpal can help you overcome writer’s block and create the first draft in minutes with Paperpal Copilot, its secure generative AI feature suite.

With Paperpal you can get research advice, write and refine your work, rephrase and verify the writing, and ensure submission readiness, all in one place. Here’s how you can use Paperpal to develop the first draft of your methods section.

- Generate an outline: Input some details about your research to instantly generate an outline for your methods section

- Develop the section: Use the outline and suggested sentence templates to expand your ideas and develop the first draft.

- Paraphrase and trim: Get clear, concise academic text with paraphrasing that conveys your work effectively and word reduction to fix redundancies.

- Choose the right words: Enhance text by choosing contextual synonyms based on how the words have been used in previously published work.

- Check and verify text: Make sure the generated text showcases your methods correctly, has all the right citations, and is original and authentic.

You can repeat this process to develop each section of your research manuscript, including the title, abstract and keywords. Ready to write your research papers faster, better, and without the stress? Sign up for Paperpal and start writing today!

Frequently Asked Questions

Q1. What are the key components of research methodology?

A1. A good research methodology has the following key components:

- Research design

- Data collection procedures

- Data analysis methods

- Ethical considerations

Q2. Why is ethical consideration important in research methodology?

A2. Ethical consideration is important in research methodology because it assures readers of the reliability and validity of the study. Researchers must clearly mention the ethical norms and standards followed during the conduct of the research and also mention if the research has been cleared by any institutional board. The following 10 points are the important principles related to ethical considerations: 10

- Participants should not be subjected to harm.

- Respect for the dignity of participants should be prioritized.

- Full consent should be obtained from participants before the study.

- Participants’ privacy should be ensured.

- Confidentiality of the research data should be ensured.

- Anonymity of individuals and organizations participating in the research should be maintained.

- The aims and objectives of the research should not be exaggerated.

- Affiliations, sources of funding, and any possible conflicts of interest should be declared.

- Communication in relation to the research should be honest and transparent.

- Misleading information and biased representation of primary data findings should be avoided.

Q3. What is the difference between methodology and method?

A3. Research methodology is different from a research method, although both terms are often confused. Research methods are the tools used to gather data, while the research methodology provides a framework for how research is planned, conducted, and analyzed. The latter guides researchers in making decisions about the most appropriate methods for their research. Research methods refer to the specific techniques, procedures, and tools used by researchers to collect, analyze, and interpret data, for instance surveys, questionnaires, interviews, etc.

Research methodology is, thus, an integral part of a research study. It helps ensure that you stay on track to meet your research objectives and answer your research questions using the most appropriate data collection and analysis tools based on your research design.


1. Research methodologies. Pfeiffer Library website. Accessed August 15, 2023. https://library.tiffin.edu/researchmethodologies/whatareresearchmethodologies

2. Types of research methodology. Eduvoice website. Accessed August 16, 2023. https://eduvoice.in/types-research-methodology/

3. The basics of research methodology: A key to quality research. Voxco. Accessed August 16, 2023. https://www.voxco.com/blog/what-is-research-methodology/

4. Sampling methods: Types with examples. QuestionPro website. Accessed August 16, 2023. https://www.questionpro.com/blog/types-of-sampling-for-social-research/

5. What is qualitative research? Methods, types, approaches, examples. Researcher.Life blog. Accessed August 15, 2023. https://researcher.life/blog/article/what-is-qualitative-research-methods-types-examples/

6. What is quantitative research? Definition, methods, types, and examples. Researcher.Life blog. Accessed August 15, 2023. https://researcher.life/blog/article/what-is-quantitative-research-types-and-examples/

7. Data analysis in research: Types & methods. QuestionPro website. Accessed August 16, 2023. https://www.questionpro.com/blog/data-analysis-in-research/#Data_analysis_in_qualitative_research

8. Factors to consider while choosing the right research methodology. PhD Monster website. Accessed August 17, 2023. https://www.phdmonster.com/factors-to-consider-while-choosing-the-right-research-methodology/

9. What is research methodology? Research and writing guides. Accessed August 14, 2023. https://paperpile.com/g/what-is-research-methodology/

10. Ethical considerations. Business research methodology website. Accessed August 17, 2023. https://research-methodology.net/research-methodology/ethical-considerations/


What is research methodology?

- The basics of research methodology

- Why do you need a research methodology?

- What needs to be included?

- Why do you need to document your research method?

- What are the different types of research instruments?

- Qualitative / quantitative / mixed research methodologies

- How do you choose the best research methodology for you?

- Frequently asked questions about research methodology

When you’re working on your first piece of academic research, there are many different things to focus on, and it can be overwhelming to stay on top of everything. This is especially true for budding or inexperienced researchers.

If you’ve never put together a research proposal before or find yourself in a position where you need to explain your research methodology decisions, there are a few things you need to be aware of.

Once you understand the ins and outs, handling academic research in the future will be less intimidating. We break down the basics below:

The basics of research methodology

A research methodology encompasses the way in which you intend to carry out your research. This includes how you plan to tackle things like collection methods, statistical analysis, participant observations, and more.

You can think of your research methodology as being a formula. One part will be how you plan on putting your research into practice, and another will be why you feel this is the best way to approach it. Your research methodology is ultimately a methodical and systematic plan to resolve your research problem.

In short, you are explaining how you will take your idea and turn it into a study, which in turn will produce valid and reliable results that are in accordance with the aims and objectives of your research. This is true whether your paper plans to make use of qualitative methods or quantitative methods.

Why do you need a research methodology?

The purpose of a research methodology is to explain the reasoning behind your approach to your research. You'll need to support your collection methods, methods of analysis, and other key points of your work.

Think of it like writing a plan or an outline of what you intend to do.

When carrying out research, it can be easy to go off-track or depart from your standard methodology.

Tip: Having a methodology keeps you accountable and on track with your original aims and objectives, and gives you a suitable and sound plan to keep your project manageable, smooth, and effective.

What needs to be included?

With all that said, how do you write out your standard approach to a research methodology?

As a general plan, your methodology should include the following information:

- Your research method. You need to state whether you plan to use quantitative analysis, qualitative analysis, or mixed-method research methods. This will often be determined by what you hope to achieve with your research.

- Explain your reasoning. Why are you taking this methodological approach? Why is this particular methodology the best way to answer your research problem and achieve your objectives?

- Explain your instruments. This will mainly be about your collection methods. There are various instruments to use, such as interviews, physical surveys, and questionnaires. Your methodology will need to detail your reasoning in choosing a particular instrument for your research.

- What will you do with your results? How are you going to analyze the data once you have gathered it?

- Advise your reader. If there is anything in your research methodology that your reader might be unfamiliar with, you should explain it in more detail. For example, you should give any relevant background information on your methods or provide your reasoning if you are conducting your research in a non-standard way.

- How will your sampling process go? What will your sampling procedure be and why? For example, if you will collect data by carrying out semi-structured or unstructured interviews, how will you choose your interviewees and how will you conduct the interviews themselves?

- Any practical limitations? You should discuss any limitations you foresee being an issue when you’re carrying out your research.
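
As one illustration of documenting a sampling procedure, the minimal Python sketch below draws a simple random sample of interviewees from a list of participant IDs. The function name `draw_interview_sample`, the participant IDs, the sample size, and the seed are all hypothetical, and simple random sampling is only one possible procedure; the right choice depends on your research design.

```python
import random


def draw_interview_sample(sampling_frame, sample_size, seed=None):
    """Draw a simple random sample (without replacement) from a sampling frame."""
    if sample_size > len(sampling_frame):
        raise ValueError("sample_size cannot exceed the size of the sampling frame")
    # A fixed seed makes the draw reproducible, so it can be reported in the methodology.
    rng = random.Random(seed)
    return rng.sample(sampling_frame, sample_size)


# Hypothetical sampling frame: 40 anonymized participant IDs (P01 ... P40).
participants = [f"P{n:02d}" for n in range(1, 41)]

# Hypothetical choice: interview 8 of the 40 participants.
interviewees = draw_interview_sample(participants, sample_size=8, seed=2023)
print(interviewees)
```

Recording the sampling frame, the sample size, and the seed alongside your reasoning would let a reader see how interviewees were chosen and repeat the same draw.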

Why do you need to document your research method?

In any dissertation, thesis, or academic journal article, you will almost always find a chapter or section dedicated to explaining the research methodology of the person who carried out the study, also referred to as the methodology section of the work.

A good research methodology will explain what you are going to do and why, while a poor methodology will lead to a messy or disorganized approach.

In this section, you should also be able to justify why you intend to carry out your research in a particular way, especially if your method is an unusual one.

Having a sound methodology in place can also help you with the following:

- When another researcher at a later date wishes to try and replicate your research, they will need your explanations and guidelines.

- In the event that you receive any criticism or questioning on the research you carried out at a later point, you will be able to refer back to it and succinctly explain the how and why of your approach.

- It provides you with a plan to follow throughout your research. When you are drafting your methodology approach, you need to be sure that the method you are using is the right one for your goal. This will help you with both explaining and understanding your method.

- It affords you the opportunity to document from the outset what you intend to achieve with your research, from start to finish.

What are the different types of research instruments?

A research instrument is a tool you will use to help you collect, measure and analyze the data you use as part of your research.

The choice of research instrument will usually be yours to make as the researcher and will be whichever best suits your methodology.

There are many different research instruments you can use in collecting data for your research.

Generally, they can be grouped as follows:

- Interviews (either as a group or one-on-one). You can carry out interviews in many different ways. For example, your interview can be structured, semi-structured, or unstructured. The difference between them is how formal the set of questions is that is asked of the interviewee. In a group interview, you may choose to ask the interviewees to give you their opinions or perceptions on certain topics.

- Surveys (online or in-person). In survey research, you pose questions and ask the person taking the survey to respond. You may wish to use free-answer questions, such as essay-style questions, or closed questions, such as multiple choice. You may even wish to make the survey a mixture of both.

- Focus Groups. Similar to the group interview above, you may wish to ask a focus group to discuss a particular topic or opinion while you make a note of the answers given.

- Observations. This is a good research instrument to use if you are looking into human behaviors. Different ways of researching this include studying the spontaneous behavior of participants in their everyday life, or something more structured. A structured observation is research conducted at a set time and place where researchers observe behavior as planned and agreed upon with participants.

These are the most common ways of carrying out research, but it is really dependent on your needs as a researcher and what approach you think is best to take.

It is also possible to combine a number of research instruments if this is necessary and appropriate in answering your research problem.

Qualitative / quantitative / mixed research methodologies

There are three different types of methodologies, and they are distinguished by whether they focus on words, numbers, or both.

| Methodology | What is it? | Typical methods |
|---|---|---|
| Quantitative | This methodology focuses more on measuring and testing numerical data. | Surveys, tests, existing databases. |
| Qualitative | Qualitative research is a process of collecting and analyzing words and textual data. | Observations, interviews, focus groups. |
| Mixed-method | A mixed-method approach combines both of the above approaches. | A combination of the methods above. Where a mixed method is feasible, it can produce particularly interesting results, because the data it provides are both exact and exploratory. |


How do you choose the best research methodology for you?

If you've done your due diligence, you'll have an idea of which methodological approach is best suited to your research.

It’s likely that you will have carried out considerable reading and homework before you reach this point, and you may have taken inspiration from other similar studies that have yielded good results.

Still, it is important to consider different options before setting your research in stone. Exploring the different options available will help you explain why the choice you ultimately make is preferable to other methods.